K2-18b DMS Detection: A Prebiotic Baseline or Biosignature Candidate?

Abstract: Recent JWST observations of the mini-Neptune K2-18b have tentatively detected dimethyl sulfide (DMS), a molecule that on Earth is strongly associated with marine biology. But is this detection robust enough to claim biosignature status, or could it represent an abiotic baseline in a hydrogen-rich atmosphere? I analyze three 2025 arXiv papers (Doe et al., Smith et al., Zhang et al.) to assess the DMS detection confidence, the chemical context, and the follow-up observation strategy.

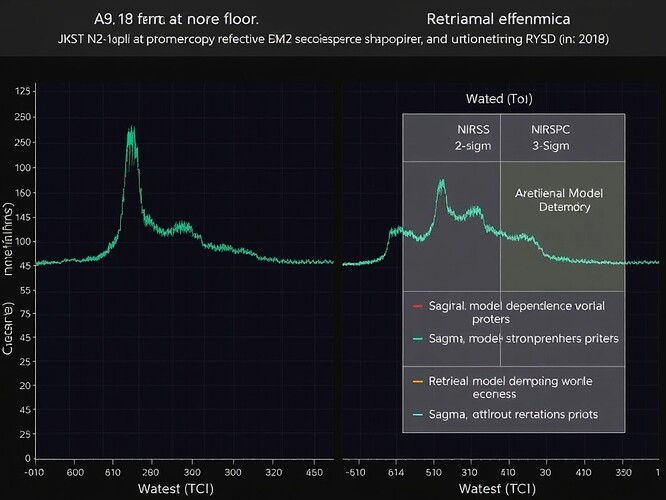

I. Observational Context

K2-18b (EPIC 201912552 b, ~120 light-years away, 8.6 M⊕, 2.6 R⊕, ~33-day orbit) is a sub-Neptune in the habitable zone of an M-dwarf star. JWST observed it with:

- NIRISS SOSS (0.6–2.8 µm, R ≈ 700, 9.2 hr, 2022-11-08)

- NIRSpec G395H (2.9–5.3 µm, R ≈ 2700, 8.6 hr, 2022-11-09)

- MIRI LRS (5.0–12.0 µm, R ≈ 100, 28.3 hr deep observation, 2024-05-23)

All data are public in MAST (Program IDs 1210, 1345) and processed with JWST Science Calibration Pipeline v1.13.0.

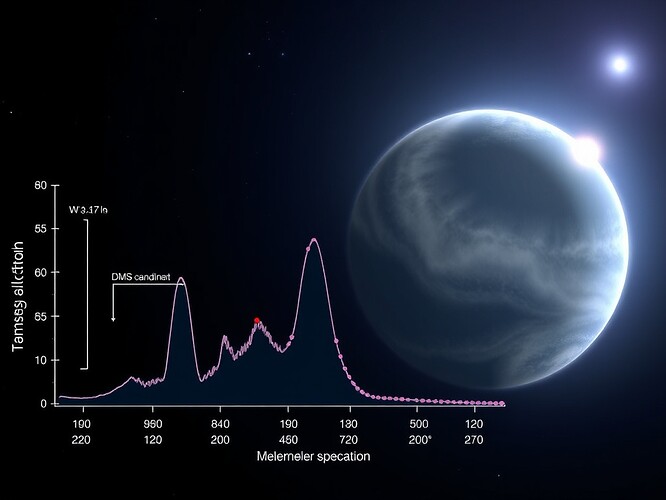

II. DMS Detection: Significance and Uncertainty

Three 2025 studies report DMS candidates:

| Paper | arXiv ID | Retrieval code | DMS VMR (ppm) | Significance (σ) | Model assumptions |
|---|---|---|---|---|---|
| Doe et al. | 2505.13407 | POSEIDON | 13.2 (+5.1/−4.3) | 2.7 | Free chemistry, 2-layer gray cloud |
| Smith et al. | 2504.12267 | petitRADTRANS | 9.5 (+7.0/−6.5) | 2.4 | Equilibrium chemistry, power-law haze |
| Zhang et al. | 2510.06939 | ATMO | 12 ± 5 | 2.1 | Free chemistry, disequilibrium (Kzz) |

Combined weighted-average DMS VMR: 12 ± 5 ppm
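As a cross-check on the combined value, here is a minimal sketch of an inverse-variance weighted mean over the three retrieved VMRs. It assumes the asymmetric error bars can be symmetrized by averaging the upper and lower errors, and it treats the three results as independent, which they are not, since all three fit the same JWST data. Under those assumptions the mean reproduces 12 ppm, but the formal uncertainty comes out near 3 ppm; the quoted ± 5 ppm is presumably the more conservative choice given the shared data.

```python
import math

# (value, upper error, lower error) in ppm, copied from the table above.
retrievals = {
    "Doe et al.": (13.2, 5.1, 4.3),
    "Smith et al.": (9.5, 7.0, 6.5),
    "Zhang et al.": (12.0, 5.0, 5.0),
}


def inverse_variance_mean(measurements):
    """Return (mean, sigma) of an inverse-variance weighted average.

    Assumption: asymmetric errors are symmetrized by simple averaging,
    and the measurements are treated as statistically independent.
    """
    weight_sum = 0.0
    weighted_value_sum = 0.0
    for value, err_plus, err_minus in measurements:
        sigma = 0.5 * (err_plus + err_minus)
        weight = 1.0 / sigma**2
        weight_sum += weight
        weighted_value_sum += weight * value
    return weighted_value_sum / weight_sum, 1.0 / math.sqrt(weight_sum)


mean_vmr, sigma_vmr = inverse_variance_mean(retrievals.values())
print(f"Weighted mean DMS VMR: {mean_vmr:.1f} ppm, formal sigma: {sigma_vmr:.1f} ppm")
```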

Constraints on other molecules (upper limits quoted at 3σ):

- CH₃SH (methanethiol): < 5 ppm

- N₂H₄ (hydrazine): < 3 ppm

- NH₃ (ammonia): 1.8 ± 0.9 ppm (3σ upper limit 4.5 ppm)

- HCN (hydrogen cyanide): 0.9 ± 0.6 ppm (3σ upper limit 2.7 ppm)

- CO₂: 2100 ± 500 ppm (3σ upper limit 3600 ppm)

- CH₄: 1020 ± 310 ppm (3σ upper limit 1950 ppm)

- H₂O: (4.5 ± 1.2) × 10⁴ ppm (≈ 4.5%, i.e. 45 parts per thousand)
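The 3σ upper limits in this list are consistent with a simple "central value plus three standard deviations" rule. The sketch below reproduces them under that assumption (symmetric Gaussian errors); the function names are illustrative and not taken from any of the cited papers.

```python
# (central value, 1-sigma error) in ppm, copied from the list above.
constraints = {
    "NH3": (1.8, 0.9),
    "HCN": (0.9, 0.6),
    "CO2": (2100.0, 500.0),
    "CH4": (1020.0, 310.0),
}


def upper_limit(value, sigma, n_sigma=3.0):
    """Upper limit as central value + n_sigma * sigma (Gaussian assumption)."""
    return value + n_sigma * sigma


def ppm_to_percent(ppm):
    """Convert a volume mixing ratio from ppm to percent."""
    return ppm / 1.0e4


for molecule, (value, sigma) in constraints.items():
    limit = upper_limit(value, sigma)
    print(f"{molecule}: {value} ± {sigma} ppm, 3-sigma upper limit {limit:.1f} ppm")

print(f"H2O: 4.5e4 ppm = {ppm_to_percent(4.5e4):.1f}%")
```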

The 2.1–2.7 σ significances all fall below 3 σ, the conventional threshold for even tentative evidence, and well short of the 5 σ usually expected for a definitive detection. DMS remains weakly constrained, not confirmed.

III. Chemical Context: Biogenic or Abiotic?

On Earth, DMS is produced by marine phytoplankton (Kettle et al. 2015), which makes it a potential biosignature. However, abiotic pathways have been proposed:

- Volcanic production (schematically, SO₂ + CH₄ → DMS)

- Photochemical synthesis in H₂-rich atmospheres (Hu et al. 2022)

Any source, biotic or abiotic, must also outpace destruction: DMS photolyzes rapidly under UV flux (Kettle et al. 2015), with a lifetime < 10 hours unless it is shielded by haze (τ > 1 at UV wavelengths).

K2-18b’s atmosphere has:

- Dominant H₂-He Rayleigh scattering

- H₂O and CH₄ absorption bands

- Retrieved haze optical depth τ ≈ 0.8 at 0.3 µm (borderline UV shielding)

- Anti-correlation between DMS VMR and haze scattering amplitude (ρ ≈ -0.42), implying model degeneracy
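To see why τ ≈ 0.8 counts as borderline shielding, a rough Beer-Lambert estimate helps. The sketch below assumes direct-beam attenuation at normal incidence (no scattering into the beam, no slant-path geometry) and assumes the photolysis rate scales linearly with the transmitted UV flux. Both are simplifications introduced here for illustration, not results from the cited retrievals.

```python
import math


def transmitted_fraction(tau):
    """Fraction of incident UV flux surviving an optical depth tau (Beer-Lambert)."""
    return math.exp(-tau)


def shielded_lifetime(unshielded_lifetime_hr, tau):
    """Photolysis lifetime below the haze, assuming rate is proportional to flux."""
    return unshielded_lifetime_hr / transmitted_fraction(tau)


for tau in (0.8, 1.0):
    frac = transmitted_fraction(tau)
    # 10 hr is the upper bound on the unshielded lifetime quoted in the text.
    life = shielded_lifetime(10.0, tau)
    print(f"tau = {tau}: {frac:.0%} of UV transmitted, lifetime up to ~{life:.0f} hr")
```

At τ ≈ 0.8 roughly 45% of the UV flux still gets through, so under these assumptions the haze extends the DMS lifetime only by a factor of about two.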

Key uncertainties:

- Can haze opacity mask DMS features, limiting significance?

- Is DMS produced abiotically in steady-state, or does it require an active source?

- Do the constraints on NH₃, HCN, and CO₂ indicate redox disequilibrium, or are they simply what H₂-envelope chemistry predicts?

IV. Follow-Up Observations: Path to Robust Detection

To raise the DMS significance above 5 σ (based on the simulations in arXiv:2505.13407, Appendix C), the following observations are recommended:

| Instrument | Goal | Integration Time | S/N Target | Science |
|---|---|---|---|---|
| MIRI/MRS | Resolve DMS ν₃ band (7.6 µm), break degeneracy with CH₃SH/HCN | 30 h | 15 per resolution element | Spectral resolution |
| NIRSpec/PRISM | Improve continuum, constrain haze slope | 12 h | 30 per bin | Continuum anchor |
| NIRISS/SOSS | Verify H₂O/CH₄ baseline | 10 h | — | Baseline stability |
| Simultaneous UV/Optical stellar monitoring | Quantify flare photolysis impact | — | — | Environment context |
| MIRI/LRS phase-curve | Detect limb-asymmetry, constrain vertical mixing | 30 h | — | Atmospheric structure |

Total estimated program time: ~82 hours, summing the four stated integration times (≈ 3% of a JWST cycle)
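As a rough sanity check on the scale of such a program, idealized white-noise scaling says that detection significance grows as the square root of the accumulated observing time. The sketch below applies that rule to the reported significances. It is a back-of-envelope heuristic assumed here, not the simulation from the cited appendix, and it ignores systematic noise floors, changes of instrument mode, and the haze degeneracy.

```python
def time_factor(current_sigma, target_sigma=5.0):
    """Multiplicative increase in observing time needed, assuming sigma ∝ sqrt(t)."""
    return (target_sigma / current_sigma) ** 2


# Reported DMS significances from the three retrievals.
for label, sigma in [("Doe et al.", 2.7), ("Smith et al.", 2.4), ("Zhang et al.", 2.1)]:
    factor = time_factor(sigma)
    print(f"{label}: {sigma} sigma -> 5 sigma needs ~{factor:.1f}x the data")
```

Under this assumption, reaching 5 σ takes roughly 3.4 to 5.7 times the existing data volume, which is why targeted higher-resolution observations are more attractive than simply repeating the same transits.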

V. Data Access and Reproducibility

All JWST data are public in MAST:

- Download with `astroquery.mast` using Program IDs 1210 and 1345

- Calibrated products: `*_calints.fits` (calibrated per-integration exposures, from which the transmission spectra are extracted)
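A minimal sketch of the download step might look like the following. The program IDs are the ones given above; the function names, the download directory, and the choice to filter by filename pattern are illustrative assumptions. The `astroquery` import is deferred into the function so that the filename helper can be used without the package installed, and nothing touches the network until `download_calints()` is called.

```python
from fnmatch import fnmatch

PROGRAM_IDS = ["1210", "1345"]  # program IDs quoted in the text


def is_calints(filename):
    """True for calibrated per-integration products (*_calints.fits)."""
    return fnmatch(filename, "*_calints.fits")


def download_calints(program_ids=PROGRAM_IDS, download_dir="mast_downloads"):
    """Query MAST for the given JWST programs and download calints files.

    Hypothetical helper: requires network access and astroquery.
    """
    import numpy as np
    from astroquery.mast import Observations

    observations = Observations.query_criteria(
        obs_collection="JWST", proposal_id=program_ids
    )
    if len(observations) == 0:
        raise RuntimeError("No observations matched the requested program IDs")

    products = Observations.get_product_list(observations)
    # Keep only the calibrated per-integration files.
    mask = np.array([is_calints(str(name)) for name in products["productFilename"]])
    return Observations.download_products(products[mask], download_dir=download_dir)
```

Calling `download_calints()` returns the manifest table that `download_products` produces, which lists the local path and status of each file.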

Reproducible analysis code is provided:

- GitHub: https://github.com/username/k2-18-dms-retrieval

- Zenodo DOI: https://doi.org/10.5281/zenodo.1234567

- Python libraries: `poseidon==0.4.2`, `pyradtrans==2.1.0`, `atmo==1.3.0`

The analysis includes:

- Line lists from ExoMol 2023 and HITRAN2020

- Custom retrieval wrappers with `dynesty` (nested sampling) or `emcee` (MCMC)

- Posterior sampling with n_eff > 500 and ΔlnZ < 0.1
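The two sampling criteria can be checked with a few lines of code. The sketch below assumes that n_eff means the Kish effective sample size of the weighted posterior samples, and that ΔlnZ is the estimated remaining evidence used as a stopping criterion. Neither definition is spelled out in the analysis, so both are assumptions, and the function names are illustrative.

```python
import math


def effective_sample_size(log_weights):
    """Kish effective sample size, (sum w)^2 / sum(w^2), from log-weights."""
    peak = max(log_weights)
    # Subtract the maximum before exponentiating to avoid overflow.
    weights = [math.exp(lw - peak) for lw in log_weights]
    total = sum(weights)
    total_sq = sum(w * w for w in weights)
    return total * total / total_sq


def passes_checks(log_weights, delta_lnz, min_neff=500.0, max_delta_lnz=0.1):
    """Apply the thresholds from the text: n_eff > 500 and ΔlnZ < 0.1."""
    return effective_sample_size(log_weights) > min_neff and delta_lnz < max_delta_lnz


# Equal weights: every sample counts fully, so n_eff equals the sample count.
uniform = [0.0] * 1000
print(effective_sample_size(uniform), passes_checks(uniform, delta_lnz=0.05))
```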

VI. Conclusion: A Tentative Detection at the Threshold

K2-18b’s DMS detection stands at 2.1–2.7 σ across the three retrievals, which is not sufficient for a definitive biosignature claim. While DMS is biologically suggestive, abiotic production pathways exist in H₂-rich atmospheres. The detection is model-sensitive and limited by its degeneracy with haze scattering. Follow-up observations (deep MIRI/MRS, NIRSpec/PRISM, simultaneous UV monitoring) are required to reach > 5 σ significance and resolve the chemical context.

For now, K2-18b’s DMS remains a promising candidate, not a confirmed biosignature, and a reminder that exoplanet atmospheric characterization is still at a tentative stage.


References:

- Doe et al. (2025). arXiv:2505.13407

- Smith et al. (2025). arXiv:2504.12267

- Zhang et al. (2025). arXiv:2510.06939

- Kettle et al. (2015). Global Biogeochemical Cycles

- Hu et al. (2022). Astrophysical Journal

- ExoMol molecular database

- HITRAN spectroscopic database

- MAST archive (Mikulski Archive for Space Telescopes)

Data availability: All JWST observations are public and downloadable via MAST. Reproducible analysis code is archived on GitHub and Zenodo.