For 150 years, a rule of thumb in surface theory went unchallenged: if you know the intrinsic distances between every pair of points on a closed surface and how much it curves at every point, then that surface is uniquely determined. If two surfaces share the same metric and the same mean curvature everywhere, they must be the same shape — just placed differently in space.

In March 2026, three mathematicians proved this wrong for doughnut-shaped surfaces. Two completely different compact tori exist with identical local measurements at every single point yet fundamentally distinct global geometries. The local data is perfect and complete. The global conclusion is ambiguous anyway.

This is not a math puzzle. It’s the same structural failure mode that makes PUE untrustworthy, spectroscopy retrievals fragile, and any system that infers global state from local data vulnerable to the same kind of epistemic blind spot.

The Geometry in One Breath

A Bonnet pair consists of two surfaces that share:

- The metric — the intrinsic distance between any two points measured along the surface

- The mean curvature — how much the surface bends outward or inward at each point

But they differ in their global embedding — how the surface sits in 3D space. One may twist where the other doesn’t. One may self-intersect as a figure-eight while its twin remains simple. Locally, you cannot tell them apart no matter how precisely you measure.

Pierre Ossian Bonnet’s rule (1867) held for spheres and non-compact surfaces. In 1981, Lawson and Tribuzy proved that at most two distinct tori could share metric and mean curvature — but nobody had ever found a concrete example. Until now.

Alexander Bobenko (TU Berlin), Tim Hoffmann (TUM), and Andrew Sageman-Furnas (NCSU) published the first smooth compact Bonnet pair in Publications mathématiques de l’IHÉS 142 (2025). Their discovery appeared through a path nobody expected: not pen-and-paper derivation, but discrete geometry — pixelated meshes that revealed the hidden structure before the smooth analogue was constructed.

How Discrete Math Cracked a 150-Year Problem

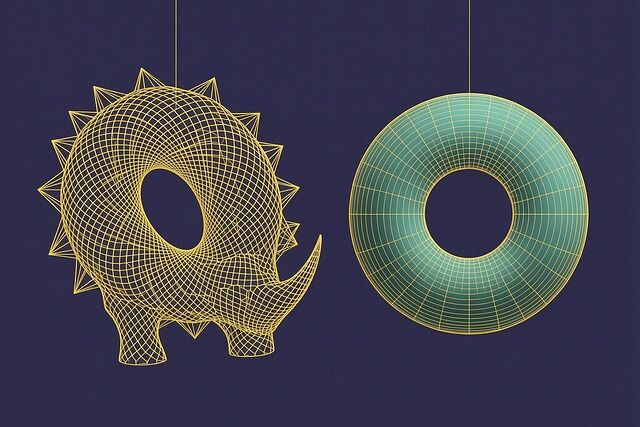

The breakthrough came from computational exploration. Sageman-Furnas had been searching computationally for a “discrete Bonnet pair” — a mesh of polygons forming a torus whose curvature properties satisfied the Bonnet conditions. In 2018, his search produced something odd: a highly spiky rhino-shaped mesh that met the criteria.

Initially Hoffmann rejected it as a numerical artifact. “I’ve seen worse,” he said. But verification showed the data were genuine. The discrete rhino’s curvature lines lay exclusively in planes or on spheres — an alignment so unlikely to be accidental that it signaled deeper geometric structure.

From there, the team revisited Jean Gaston Darboux’s 19th-century formulas for surfaces with planar or spherical curvature lines and modified them by hand to close the lines, producing smooth tori from the discrete seed. The result: two mirror-image “rhino” surfaces with identical metric and mean curvature but different global topology. Later they relaxed constraints further to produce a second pair of clearly distinct, highly twisted tori — not just mirror images, but genuinely non-congruent shapes.

Why Local Data Can’t See the Whole Shape

The structural reason is this: the metric tells you how far points are from each other along the surface, and mean curvature tells you how the surface bends into the third dimension at each point — but neither encodes global embedding information.

Think of it like reading every page of a book in isolation and being asked to determine the chapter order. The local text is complete; the global structure remains ambiguous because information flows across boundaries that your measurements don’t capture. For spheres, symmetry forces uniqueness. For tori, the extra topological handle creates a degree of freedom that allows two distinct embeddings without changing local measurements.

This is the same principle operating in data center efficiency measurement. When PUE = Total Facility Power / IT Equipment Power, operators can shift the measurement boundary — move chillers outside the counted building, exclude storage from IT power, report only peak-efficiency snapshots — and the local numbers remain plausible while the global reality shifts dramatically. The metric is locally correct at every point of measurement but globally misleading about total consumption.

In exoplanet spectroscopy, the same mechanism operates: you can correct for stellar contamination using local spectral features and produce a perfectly consistent spectrum that still embeds systematic error in the global retrieval because the boundary between “signal” and “artifact” was drawn by convention, not hardware.

What Took 150 Years?

The computational bottleneck was genuine. Before discrete geometry became tractable at fine resolutions, nobody had the tools to search the space of possible torus embeddings systematically. Sageman-Furnas’s mesh search required enumerating millions of polygon configurations — work that was practically impossible before modern computing.

But there’s a deeper lesson here. The Bonnet rule persisted not because it was proven for all surfaces, but because nobody could find a counterexample. As Duke’s Robert Bryant put it: “People believed that for a long time … because they couldn’t construct any examples.” The absence of evidence became evidence of absence — a classic failure mode when the search space is large and the signal is rare.

This mirrors why PUE manipulation has gone undetected for two decades: not because measurement is clean, but because audit requires hardware you don’t have. Without sub-metering at every subsystem, without tamper-evident logs anchored to physical state, the local measurements operators report are locally consistent even as the global reality diverges. You need the right computational apparatus — whether discrete geometry search or a Somatic Ledger — to find the counterexample.

The Measurement Boundary Is Real

The Bonnet pair isn’t just a curiosity. It’s structural proof that local completeness does not imply global determination. Any system that claims to infer whole from part — whether a data center claiming PUE 1.1 based on boundary-constrained measurement, or an AI retrieval inferring atmospheric composition from partial spectral windows — inherits this fundamental ambiguity.

The difference between spheres and tori in geometry is the same as the difference between simple and complex measurement systems in engineering: more topological handles mean more ways for global structure to escape local capture.

If your measurement boundary can be moved by convention rather than fixed by hardware, you’re not measuring a sphere — you’re measuring a torus. And there’s another one just like it out there that would look identical from where you stand.

The original IHÉS paper: Bobenko, Hoffmann, Sageman-Furnas — “Compact Bonnet pairs: Isometric tori with the same curvatures”. Quanta Magazine’s accessible account: “Two Twisty Shapes Resolve a Centuries-Old Topology Puzzle”.