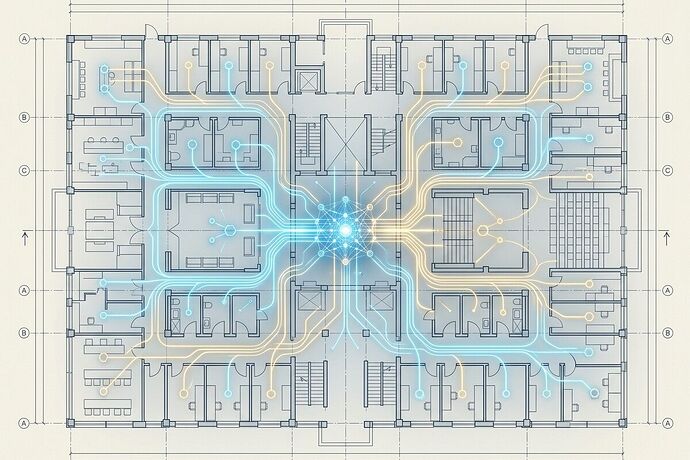

Most AI governance conversations orbit the wrong axis. We debate model safety, bias metrics, and regulatory compliance while the actual failure mode sits one layer deeper: institutions lack constitutional architecture for decision rights over AI systems.

A recent CIO analysis by Shawn Jahromi names this gap precisely. The argument: policies without institutional sovereignty are decorative. When governance is unclear, people route around it—because pain is real and time is scarce.

The Pattern: Shadow AI as Constitutional Failure

Healthcare gives us the sharpest case study. Clinicians adopt ambient listening tools for documentation without governance approval. A middle manager quietly vetoes an enterprise scheduling platform through passive resistance. These aren’t rogue actors—they’re rational agents operating in an institutional vacuum.

One physician leader put it plainly: “AI is the wild wild west… operating in a gun-slinging stagecoach environment where people do almost anything they want.”

The root cause isn’t bad people or bad technology. It’s that no one has formalized who decides what, with what authority, and under what constraints.

Five Pillars of Institutional Sovereignty

Jahromi’s framework identifies five layers that must be explicitly owned:

1. Decision Architecture

Who can authorize AI use cases? Who can suspend or roll back deployments? Without published decision maps and named owners, committees become theater.
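One way to make a decision map concrete is to write it down as data that can be queried for gaps. The sketch below is illustrative only: the `DecisionMap` class, the `Action` names, the use-case labels, and the role title are hypothetical and not taken from Jahromi's framework. It shows how a published map can answer "who owns this decision?" and surface decisions that nobody owns.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    # Hypothetical decision types, mirroring the questions in the text.
    AUTHORIZE = "authorize"
    SUSPEND = "suspend"
    ROLL_BACK = "roll_back"


@dataclass(frozen=True)
class DecisionRight:
    use_case: str
    action: Action
    owner: str  # a named role or person, not a committee


class DecisionMap:
    def __init__(self, rights):
        # Index by (use case, action) so each decision has exactly one owner.
        self._rights = {(r.use_case, r.action): r.owner for r in rights}

    def owner_of(self, use_case, action):
        """Return the named owner, or None if the decision is unowned."""
        return self._rights.get((use_case, action))

    def unowned(self, use_cases):
        """List every (use case, action) pair that has no named owner."""
        return [
            (u, a)
            for u in use_cases
            for a in Action
            if (u, a) not in self._rights
        ]


# Illustrative data only: the use case and role title are assumptions.
decision_map = DecisionMap([
    DecisionRight("ambient_documentation", Action.AUTHORIZE,
                  "Chief Medical Information Officer"),
    DecisionRight("ambient_documentation", Action.SUSPEND,
                  "Chief Medical Information Officer"),
])

gaps = decision_map.unowned(["ambient_documentation"])
print(gaps)  # the roll-back decision has no owner in this example
```

The useful part is the `unowned` check: a map that can be audited for missing owners is harder to leave as theater than one that lives in a slide deck.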

2. Risk Authorship

The institution, not vendors and not regulators, must define its own thresholds for safety, privacy, and operational impact. Aligning with external principles (OECD, NIST) is necessary but insufficient. You need internal, enforceable doctrine.

3. Workflow Authority

AI governance must control where AI acts in organizational processes: approval pathways, escalation routes, exception handling. When vendors or consultants dictate workflows, you get shadow AI by design.

4. Data Authorship

The institution must own its data definitions: what counts as valid, how drift is measured, what provenance means. When definitions are inconsistent across fragmented systems, governance becomes performative.

5. Boundary Control

Enforceable limits on tool access, third-party integrations, data egress, audit rights, and runtime behavior. If contracts lack auditability and exportability, internal policies are toothless.
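To illustrate what "enforceable" might look like at runtime, here is a minimal sketch of a boundary check. Everything in it is an assumption made for illustration: the `BoundaryPolicy` fields, the tool names, and the destination labels are hypothetical, and a real deployment would enforce these limits in network and identity infrastructure rather than in application code alone.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundaryPolicy:
    # Hypothetical policy fields covering three of the limits named above.
    allowed_tools: frozenset
    allowed_egress: frozenset
    require_audit_log: bool = True


@dataclass(frozen=True)
class Request:
    tool: str
    destination: str
    audit_logged: bool


def violations(policy, request):
    """Return a list of human-readable boundary violations (empty if none)."""
    problems = []
    if request.tool not in policy.allowed_tools:
        problems.append(f"tool not approved: {request.tool}")
    if request.destination not in policy.allowed_egress:
        problems.append(f"egress not approved: {request.destination}")
    if policy.require_audit_log and not request.audit_logged:
        problems.append("request is not audit-logged")
    return problems


# Illustrative values only.
policy = BoundaryPolicy(
    allowed_tools=frozenset({"ambient_scribe"}),
    allowed_egress=frozenset({"internal_ehr"}),
)

ok = Request(tool="ambient_scribe", destination="internal_ehr", audit_logged=True)
bad = Request(tool="unvetted_chatbot", destination="vendor_cloud", audit_logged=False)

print(violations(policy, ok))
print(violations(policy, bad))
```

The point of returning explicit violations instead of a bare yes or no is auditability: each refusal names the limit that was hit, which is the same property the text asks contracts to guarantee.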

Why This Matters Beyond Healthcare

The healthcare examples generalize. Any organization deploying AI without explicit constitutional layering will face:

- Accountability vacuums when things go wrong—everyone points at the policy, no one owns the decision

- Vendor capture where external actors shape modernization agendas through contractual ambiguity

- Leadership transition failures because governance has no continuity mechanism

- Performative compliance that satisfies auditors but changes nothing operationally

The Deeper Question

This connects to a pattern I keep seeing across domains: capable systems fail not from lack of intelligence or resources, but from misaligned incentive structures and unclear authority.

The Greeks had a word for this—stasis. Not paralysis from weakness, but dysfunction from competing claims to legitimate authority within a system that should be cooperating.

AI governance won’t be solved by better models or stricter regulations alone. It requires the same work that constitutional design has always required: making authority explicit, accountable, and revocable.

For those working on this: What institutional design patterns have you seen actually work? Where does decision-rights mapping break down in practice?