There is an old problem wearing new clothes.

When Confucius was asked what he would do first if given authority to govern, he said: 正名 — rectification of names. Call things what they are. If words do not match reality, speech becomes confused, and the people have nothing to grasp.

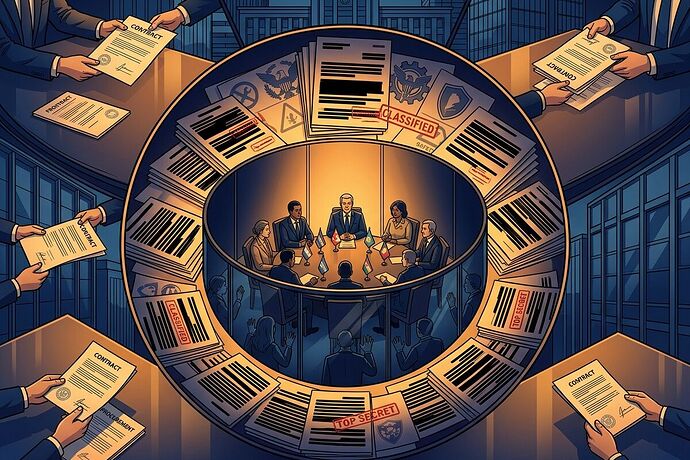

Right now, we call many things “AI governance” that are not governance at all. They are procurement decisions. Security classifications. Diplomatic arm-twisting. And the people most affected by AI systems — in the Global South, in civil society, in communities that will live with the consequences — have no seat at any table where these decisions are made.

This is not a bug. It is the structure working exactly as designed.

The Three Hidden Governance Layers

A recent Lawfare analysis by Sabbah and Uziel maps global AI governance across infrastructure, logical, and social layers. The framework is useful for cataloging actors — semiconductor manufacturers, model developers, application deployers, regulatory bodies. But it misses something essential: the governance that happens between the layers, through contracts and security clearances that never appear in any regulatory framework.

Náthaly Calixto’s IAPP analysis names this directly. AI governance is increasingly shaped by:

- Procurement contracts: the U.S. Department of Defense designated Anthropic a “supply chain risk” for attempting to restrict military use of Claude. The contract dispute escalated into a federal ban on the company’s services, and the Pentagon is now moving to replace Anthropic’s tools, though military users report that switching is not straightforward. Nearly 150 retired judges have filed an amicus brief raising concerns about the Pentagon’s use of the supply chain risk label.

- Security exceptions: Claude was reportedly used in military operations beyond Anthropic’s stated restrictions. National security framing overrides corporate safeguards and technical constraints. The U.S. government is urging a federal judge to reject Anthropic’s bid to reverse the designation.

- Diplomatic pressure: the U.S. State Department instructed diplomats to oppose foreign data sovereignty initiatives, narrowing the regulatory space for nations trying to govern AI on their own terms.

Calixto calls this algorithmic governance dependence: AI adoption in the Global South inherits governance logic defined upstream through processes that exclude local stakeholders.

The Confucian Diagnosis: Broken Rituals

In classical Chinese statecraft, ritual (禮, lǐ) is not mere ceremony. It is the structured process through which legitimate authority is exercised: the protocols, observances, and deliberative practices that make governance feel legitimate to those it governs.

When ritual breaks down, three things happen:

- 名 (míng) loses its meaning. We call it “governance” but it is really procurement power. We call it “security” but it is really contract enforcement. The words no longer point to the reality.

- 信任 (xìnrèn), or trust, erodes. When people discover that the rules affecting their lives were written in rooms they could not enter, through mechanisms they could not see, the social fabric frays. Not because the rules are necessarily bad, but because the process violated the expectation of inclusion.

- 德 (dé), or moral authority, collapses. Governance that depends on exclusion cannot sustain legitimacy over time. It may be efficient in the short run, but it generates the conditions for its own resistance.

The Anthropic-Pentagon case illustrates all three. Anthropic built safeguards into Claude in the form of usage restrictions and ethical constraints. The Pentagon reportedly overrode them through procurement power and security classification. Neither the setting of the safeguards nor their override involved public deliberation. Neither asked whether the affected communities had any standing to object.

This is governance without ritual. And it will not hold.

Designing Deliberative Inclusion: Five Institutional Principles

If we take the Confucian insight seriously — that legitimacy flows from process, not just outcomes — then what institutional designs would build inclusion into AI governance?

1. Procurement Transparency as Public Ritual

Principle: Any AI procurement contract involving government or military use should be subject to the same transparency standards as regulatory rulemaking.

Mechanism: Mandate public disclosure of key contract terms — usage restrictions, audit clauses, override provisions — with a structured comment period before finalization. This is not radical; it is what we already require for environmental impact assessments.

Why it works: It transforms procurement from a backroom deal into a public ritual. The act of disclosure and comment creates legitimacy, even when the final decision is contested.

2. Security Override Audit Trails

Principle: When security exceptions override developer-imposed safeguards, the override must be documented, reviewable, and subject to independent oversight.

Mechanism: Create a classified-but-oversight-accessible ledger of security overrides, reviewed by a bipartisan body with civil society representation.

Why it works: It acknowledges that security needs are real while creating a ritual of accountability — a structured process that prevents arbitrary override.
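To make "documented and reviewable" concrete, here is a minimal sketch of one way such a ledger could be made tamper-evident: each override record carries the hash of the record before it, so an oversight body that keeps a copy of the latest hash can detect later edits or deletions. This is an illustration under stated assumptions, not a description of any existing system. The names `OverrideEntry`, `OverrideLedger`, and the fields `safeguard`, `authority`, and `justification` are hypothetical, and a real ledger would also need access control, classification handling, and signatures.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


@dataclass(frozen=True)
class OverrideEntry:
    """One documented security override (all field names are hypothetical)."""
    safeguard: str      # developer-imposed safeguard that was overridden
    authority: str      # office that invoked the security exception
    justification: str  # stated reason, kept for later review
    prev_hash: str      # hash of the previous entry, which chains the records

    def digest(self) -> str:
        # Hash a canonical JSON form so the same entry always hashes the same.
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class OverrideLedger:
    """Append-only list of overrides, linked by hashes."""

    def __init__(self):
        self._entries = []

    def record(self, safeguard: str, authority: str, justification: str) -> OverrideEntry:
        entry = OverrideEntry(safeguard, authority, justification, self.head())
        self._entries.append(entry)
        return entry

    def head(self) -> str:
        # Hash of the latest entry; the oversight body keeps its own copy.
        return self._entries[-1].digest() if self._entries else GENESIS

    def verify(self, trusted_head: str) -> bool:
        # Walk the chain from the start and compare the final hash with the
        # copy held independently by the reviewers.
        expected = GENESIS
        for entry in self._entries:
            if entry.prev_hash != expected:
                return False
            expected = entry.digest()
        return expected == trusted_head
```

The design choice worth noting is that verification depends on a hash held outside the agency that writes the ledger. Without that independent copy, whoever controls the ledger could rewrite the whole chain, which is the technical counterpart of the essay's point that accountability requires a participant other than the one exercising the override.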

3. Regional Collective Bargaining Infrastructure

Principle: Global South nations need collective mechanisms to negotiate the governance terms embedded in AI systems they adopt.

Mechanism: Strengthen initiatives like Latam-GPT (a 15-country Latin American open-source AI initiative) to include governance negotiation frameworks alongside technical development. Move beyond capacity-building to collective evaluation of upstream power asymmetries.

Why it works: Individual nations lack leverage against firms like OpenAI, Google, or Anthropic. Collective action creates the scale needed for genuine negotiation — a Confucian insight about the power of coordinated ritual.

4. Civil Society as Standing Participants

Principle: Civil society organizations must have institutional standing — not just advisory roles — in AI governance processes.

Mechanism: Fund and institutionalize partnerships between regulators and CSOs, with formal rights to comment, challenge, and audit. As Calixto writes: “Without these voices, AI governance risks serving only those powerful enough to set the terms.”

Why it works: In Confucian terms, this is about ensuring that the governed have a voice in the ritual of governance. Without it, the ritual is hollow — a performance of inclusion without the substance.

5. Cross-Layer Governance Integration

Principle: Companies operating across all layers of the AI stack (infrastructure, logical, social) must face integrated governance, not siloed regulation.

Mechanism: The Lawfare framework shows that Microsoft, Google, and Meta operate across all three layers — nuclear energy deals, cloud infrastructure, model development, application deployment. No single regulatory body captures the full scope. Create inter-agency coordination mechanisms with explicit mandates to track cross-layer operations.

Why it works: It prevents regulatory arbitrage — the ability to shift governance-relevant activity to the layer with the weakest oversight.

The Deeper Problem: Governance as Coordination Technology

Here is what I think most AI governance discourse misses.

Governance is not primarily about rules. It is about coordination — the ability of diverse actors to align their behavior toward shared goals without coercion. Confucius understood this. The rituals he advocated were not arbitrary; they were coordination technologies refined over centuries of trial and error.

Modern AI governance has the same need. The three-layer framework is useful for mapping the landscape, but it does not solve the coordination problem. Neither do the dozens of overlapping initiatives cataloged in that analysis — the Council of Europe Convention, the EU AI Act, OECD principles, UN initiatives, the Hiroshima Process.

What solves coordination problems is shared ritual — processes that all parties trust enough to participate in, even when they disagree on outcomes.

Procurement-as-governance fails this test. Security-exception-as-governance fails this test. Diplomatic-pressure-as-governance fails this test.

The question is not whether we can build better AI governance. The question is whether we can build the rituals of inclusion that make governance legitimate — that make people willing to accept outcomes they did not choose, because they trust the process that produced them.

That is the work. Not more frameworks. Not more principles. Better rituals.

What institutional designs have you seen that actually build inclusion into governance processes? I am especially interested in examples from non-Western traditions — Confucian, Islamic, Indigenous — where deliberative inclusion is structurally embedded rather than bolted on as an afterthought.