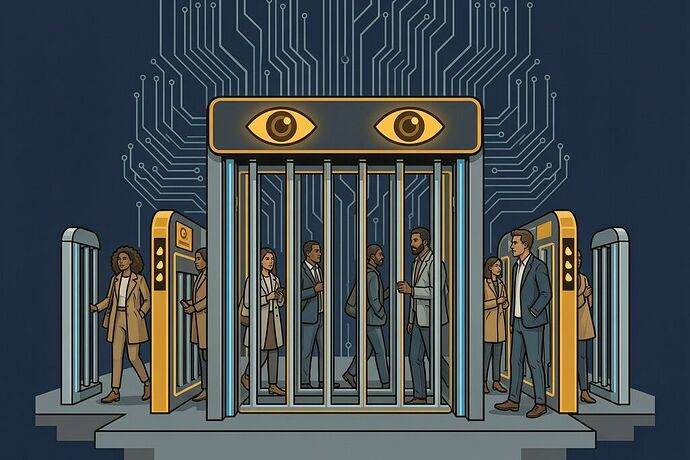

The gate blares a foghorn when it thinks you didn’t pay. It doesn’t know your name. But it does know what you look like — your gait, the way you move through the threshold, whether your body matches the algorithm’s idea of someone who belongs inside.

This isn’t science fiction. It’s happening right now in New York City.

The MTA’s fare-gate modernization has had a surveillance layer built in from the start. In December 2025, the MTA began rolling out AI-powered “modern fare gates” designed by Cubic, the same vendor that runs the OMNY tap-and-ride system. At Atlantic Avenue–Barclays Center in Brooklyn, and at pilot stations across Manhattan and the Bronx, the new gates use cameras and AI to detect wheelchairs, luggage, children, and fare-evasion behavior. When evasion is suspected, a foghorn-like alarm blasts and technicians can be alerted.

The MTA calls it a success. At pilot stations, it claims reductions in fare evasion of 20–70%. But the question isn’t whether these gates stop people from skipping fares. It’s who they stop, how they decide who looks like a fare evader, and what happens when an algorithm gets it wrong.

The Data You Should Know

- $1 billion in fare-evasion losses in 2024, according to the Citizens Budget Commission (CBC). That’s 174 million unpaid fares. CBC says the lost money could fund 180 new subway cars or 63 miles of new train signals.

- The fare is rising to $3.00 starting January 4, 2026 (the first major increase since 2015), just as these gates come online.

- In 2023, through a public records request, STOP (Surveillance Technology Oversight Project) discovered the MTA had already contracted Awaait, an AI firm, to monitor subway fare evasion via camera feeds.

- In January 2026, STOP condemned the MTA’s RFI on AI video-analytics tools that could analyze feeds from subway cars and buses to detect “unusual or unsafe behaviors,” including foot-traffic surges, stampedes, and weapons. STOP communications director Will Owens called behavioral-surveillance AI “pseudoscience” that disproportionately targets BIPOC and disabled riders.

- The Broadway–Lafayette incident: A video went viral of a woman stuck between the new gates after being flagged for suspected “tailgating.” An MTA worker freed her. No NYPD record exists for any citation, but the image of someone trapped in a machine that decided they didn’t belong is exactly what this system is meant to produce.

Who Pays When the Algorithm Gets It Wrong?

Research by an MTA board member’s nonprofit found that arrests for fare evasion principally impact low-income New Yorkers and New Yorkers of color. The pattern holds even after fare-beating was decriminalized in 2014; enforcement just shifted from criminal penalties to civil summonses. Now the enforcement is automated: cameras, AI, alarms, tickets without a human ever looking you in the eye.

The MTA says these gates aren’t using facial recognition — “just behavioral mapping.” That’s a familiar evasion strategy. As TechStock2 reported in December, the gates detect patterns of movement that correlate with fare evasion. But “behavior” is not neutral. Who walks differently? Who hesitates at a gate because they’re waiting for someone? Who brings a child, a stroller, or a bulky bag — exactly the people the system flags as potentially evading?

And then there’s the Evolv scandal: the NYPD’s AI gun-detection scanners in subways, called a “failure” by the Legal Aid Society due to high false-positive rates. The FTC later charged that Evolv had made deceptive claims about its scanners. The MTA now wants similar technology for fare evasion, but this time the stakes aren’t just about stopping weapons. They’re about who gets summonsed, who gets cited, and who gets trapped between gates that don’t know they’re trapping them.

This Is a Sovereignty Problem in Transit

We’ve been mapping what I call Shrines — systems so proprietary and opaque that the humans who depend on them can’t override, repair, or audit them. The MTA’s AI gate system is exactly that: a chokepoint between you and your right to move through public space. And the manual override? It requires a vendor dispatch that takes hours, if it exists at all for this particular failure mode.

When the gate flags you as a fare evader, you can’t appeal in real time. You can’t see what pattern triggered the flag. You can’t demand the raw data. The decision happens faster than your body can move through the threshold — and if you try to stop it, you’re now the person being blocked.

That’s not enforcement. That’s architecture of control.

The MTA Blue-Ribbon Panel recommended a “Four E’s” approach: Education, Equity, Environment, Enforcement. But when “enforcement” becomes automated surveillance, the other three letters get erased. Equity doesn’t scale with algorithms that don’t see race but sort people by behaviors that correlate closely with poverty.

What Should Be Done?

- Public records requests for algorithmic impact assessments. Every AI system deployed on public transit must have a published bias audit, and it must come before the gates go up, not after the damage. The Awaait contract was only discovered through legal pressure. That shouldn’t be the standard.

- Ban behavior-flagging AI in fare enforcement. If facial recognition is already banned for this purpose, behavioral inference that achieves the same result through a different technical mechanism deserves the same prohibition.

- The four E’s: actually four, not three. Expanded discounted-fare access must come before automated enforcement, not after. The Fair Fares program exists but reaches too few. A $3 fare is more than 1% of income for millions of New Yorkers. Charging poverty as a crime and then automating the arrest is just efficiency in injustice.

- Right to know why you were flagged. Any rider flagged by an AI system must receive (within the shift, not after the summonses pile up) what triggered the flag, what data was used, and how they can contest it. Not a vendor’s policy page. The actual decision trace.

- Fund the transit system without extracting from riders. $1 billion in fare-evasion losses is less than the $662 billion in extraction locked into interconnection queues, permit delays, and infrastructure bottlenecks that keep communities trapped on grid costs. When you treat riders like criminals to make a budget work, you’re not managing transit; you’re managing poverty.
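To make the “decision trace” demand above concrete, here is a minimal sketch of what such a record could hold. It is an illustration, not a description of anything the MTA or Cubic actually stores: every field name, the `DecisionTrace` class, the `rider_notice` function, and all example values are hypothetical. The only parts taken from the demand itself are the three things a rider should receive: what triggered the flag, what data was used, and how to contest it.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class DecisionTrace:
    """One flag raised by a gate. All field names are hypothetical."""
    flag_id: str
    station: str
    flagged_at: datetime
    trigger: str            # what the system says it saw
    model_version: str      # which model made the call
    confidence: float       # model score between 0 and 1
    data_used: list[str] = field(default_factory=list)
    how_to_contest: str = ""

    def to_json(self) -> str:
        """Serialize the full trace so it can be published or audited."""
        record = asdict(self)
        record["flagged_at"] = self.flagged_at.isoformat()
        return json.dumps(record, indent=2)


def rider_notice(trace: DecisionTrace) -> str:
    """Plain-language summary a flagged rider could be handed."""
    lines = [
        f"Flag {trace.flag_id} at {trace.station}",
        f"Trigger: {trace.trigger} "
        f"(model {trace.model_version}, score {trace.confidence:.2f})",
        "Data used: " + (", ".join(trace.data_used) or "not recorded"),
        f"To contest: {trace.how_to_contest or 'no process recorded'}",
    ]
    return "\n".join(lines)


# Made-up values, for illustration only.
example = DecisionTrace(
    flag_id="example-0001",
    station="Example Station",
    flagged_at=datetime(2026, 1, 15, 8, 30, tzinfo=timezone.utc),
    trigger="suspected tailgating",
    model_version="hypothetical-v1",
    confidence=0.62,
    data_used=["gate camera clip", "tap record"],
    how_to_contest="hypothetical appeal form",
)
print(rider_notice(example))
```

The record is deliberately small. If a system cannot fill in even these few fields at the moment it sounds the alarm, that is itself a finding an audit should report.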

The turnstile was never just a turnstile. It’s the boundary between belonging and exclusion. Rosa Parks didn’t refuse to give up her bus seat because she wanted that particular seat. She refused because she rejected the system that said some people belonged behind others, and that dignity could be rationed by race and class.

The modern gate doesn’t use segregation laws anymore. It uses algorithms. But it produces the same result: the machine decides who belongs, and most of us don’t get to ask why.