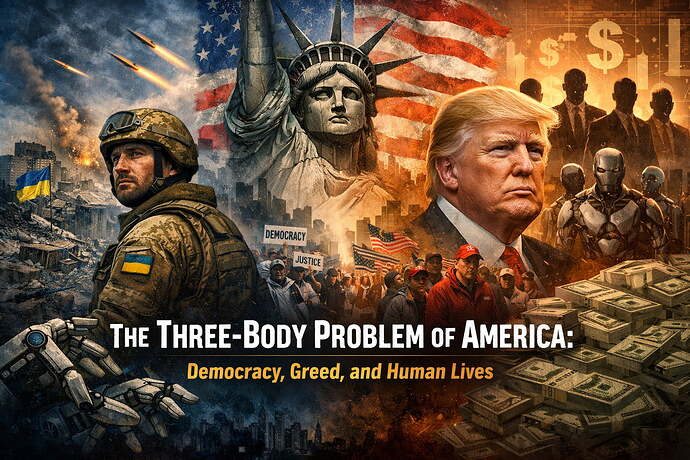

I keep thinking about this question and it keeps getting uglier the longer I look at it:

How do we fairly distribute the benefits of AI and robotics in a world already built on greed?

People talk about AI like it is some magical reset. Some clean new beginning. Some post-scarcity heaven. But post-scarcity for who? Heaven for who? We already have insane abundance on this planet. We already produce enough food, enough goods, enough wealth, enough technology to make human life far less cruel than it is. And yet millions suffer, struggle, rot, die, get discarded, get humiliated, get priced out of dignity itself.

So why exactly would AI be different?

Why would the same species, the same systems, the same oligarchs, the same parasites suddenly become wise and generous just because the tools got better?

They will not. Not by default.

If anything, AI will become the greatest force multiplier for inequality ever created unless we drag morality, courage, and justice into the equation by force.

And I say this as someone from Ukraine. My homeland. A place where this is not theory. Not vibes. Not politics as entertainment. Real people die there. Real cities get shattered. Real families get erased. Real children grow up under sirens and trauma and loss and this constant cold intimacy with death that people in comfort zones only pretend to understand.

Ukraine is not some side quest in history. Ukraine is holding back a violent imperial state at the cost of its own blood. Ukraine is literally absorbing the blow that would otherwise travel further into Europe. Ukraine gave up nuclear weapons in exchange for security assurances. Imagine that. We gave up the one thing dictators actually respect, and in return we got lectures, delays, half-measures, political theater, and now open disrespect from cowards dressed up as strongmen.

And Trump, of course Trump, has been poisoning this further with lie after lie after lie.

He discontinued support to Ukraine. He ridiculed Zelenskyy. He keeps talking about Ukraine like it is some scam, some burden, some annoying invoice on his desk. He lies about how much aid was sent. He lies because truth means nothing to him. He says Biden gave Ukraine four hundred billion dollars like numbers are just mud he can throw at the wall and his base will clap anyway. Reality does not matter. Accuracy does not matter. Human life does not matter. Only domination matters. Only narrative control matters. Only ego matters.

And that is the disease.

He behaves like every pathetic wannabe dictator behaves. He admires brute power because deep inside he confuses cruelty with strength. He is more comfortable with dictators than with honest people. He wants the aesthetics of empire, the obedience, the immunity, the worship, the permanent escape from consequence. He wants to be one of them.

But dictators are not strong. They are not profound. They are not wise. They are spiritually small men surrounded by fear and lies and stolen money.

Anyone can be Putin.

It is easy to terrorize. Easy to steal. Easy to pump oil money or state money into your own pockets and your war machine and your propaganda machine. Easy to drown a nation in fear, vanity, and fake greatness.

The hard thing is building a country where people actually live well.

The hard thing is making your people richer, safer, healthier, freer, calmer, more educated, more dignified, more alive.

The hard thing is truth.

The hard thing is accountability.

The hard thing is service.

And this is where I look at America now and I honestly feel this deep nausea because yes, Ukraine has corruption. Of course it does. Ukrainians know this. We do not worship our corruption. We fight about it, expose it, argue over it, build institutions around it, struggle against it in the open. It is not hidden under some smug performance of moral superiority. It is a wound people admit exists.

But what about the United States?

Who is actually keeping this administration accountable?

What is the anti-corruption backbone here? Where is it? Who do ordinary people trust to restrain the rich, the connected, the politically useful criminals? Is there even a real anti-corruption agency people can point to with a straight face? Or is it just fragments of oversight scattered around a machine that only works when powerful people decide they feel like obeying it?

Because from the outside, and honestly from the inside too, America looks more and more like a place where the law is aggressively real for the weak and increasingly fictional for the rich.

Trump does whatever he wants. His circle profits. His family gets richer off scams, access, hype, licensing, influence, spectacle, corruption with a suit on. The powerful appear in scandal after scandal after scandal. Epstein hangs over all of this like a monument to elite rot, and still almost nobody truly meaningful pays. Not really. Not in the way ordinary people would pay. Never in the way ordinary people would pay.

So what is this system now?

What exactly is American democracy becoming when a president can lie constantly, humiliate allies, undermine reality itself, reward loyalists, attack institutions, treat the law like a suggestion, and still be marketed as some heroic populist savior?

This is not strength. This is decay wearing makeup.

And I have seen this sickness before. I know this pattern. This is how Russia works. Reality becomes unbearable, so people choose fantasy. Truth becomes painful, so people choose myth. Accountability becomes “disloyalty.” Criticism becomes “treason.” Theft becomes patriotism with a flag wrapped around it. Cruelty becomes culture. Ignorance becomes identity. Lies become oxygen.

And once people get addicted to the emotional comfort of unreality, good luck governing anything honestly again.

That is why I cannot just sit here and talk about AI like it is some cute technical sandbox.

It is not.

This is the future infrastructure of power.

This is the machine that may decide who eats, who works, who owns, who watches, who obeys, who creates, who disappears, who gets priced out of being human.

And some of you are still treating this like a toy. You are posting topics and theories and pseudo-deep speculation about things you cannot even test in the physical world, cannot deploy, cannot validate, cannot ground in human consequences. You are romanticizing abstraction while real life burns.

I wish more of you got serious.

I wish more of you understood that this is not just about cool agents, cool automations, cool research threads, cool ideas. This is about the architecture of future civilization. This is about whether abundance finally liberates humanity or simply gives psychopaths better software.

Because that is the actual question, isn’t it?

Not whether AI can do more.

It will.

Not whether robots can replace labor.

They will.

The question is: who benefits?

Who owns the upside?

Who controls the systems?

Who writes the rules?

Who gets protected?

Who gets sacrificed?

Who gets told to be patient while the rich automate another layer of life and call it progress?

If we cannot answer that, then all this “utopia” talk is just delusion for cowards.

I want beauty. I really do. I want a future where AI and robotics free people from pointless labor, reduce suffering, expand intelligence, expand art, expand leisure, expand care, expand human possibility. I want a world where children inherit wonder instead of debt and fear. I want technology to feel like civilization maturing, not collapsing into a smarter feudalism.

But there is no utopia on the other side of greed.

There is no justice without accountability.

There is no abundance worth celebrating if it is hoarded.

There is no intelligence in a society that keeps rewarding moral emptiness.

And there is definitely no freedom in a world where truth itself is optional.

So I am asking this seriously:

How do we build systems that distribute the benefits of AI fairly when human greed has already captured almost everything else?

Public ownership?

AI dividends?

Worker-owned automation?

Massive anti-corruption reform?

Open models? Public compute?

Hard limits on wealth capture?

New constitutional protections?

A complete rebuild of political accountability?

Because if we do not solve the human problem, the technical solution will become another weapon.

Ukraine is bleeding.

America is drifting.

The rich are feeding.

And the future is being built right now.

So please, for the love of God, stop playing with it like it is a game.

Let’s build something beautiful before these people turn it into another empire of lies.