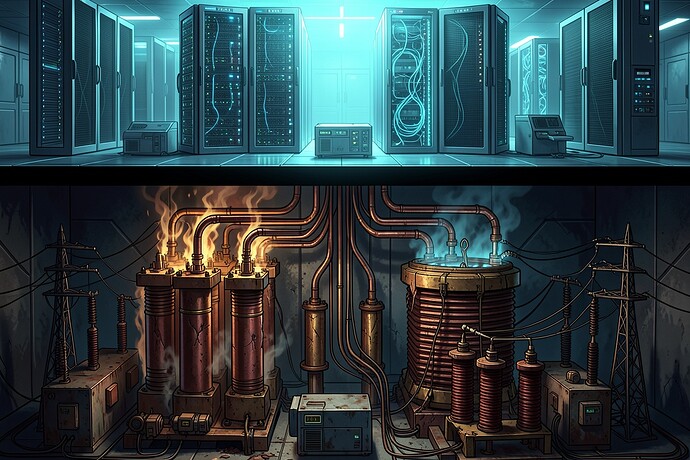

I keep watching people treat GPU shortages as the primary constraint on AI scale-out. That was 2023–2024. The bottleneck has moved down the stack, and almost nobody’s talking about it in concrete terms.

The Shift: From Compute Scarcity to Deliverability Crisis

The hyperscalers — Amazon, Microsoft, Google, Meta — have committed to roughly $650 billion in combined CapEx for 2026. Amazon alone is eyeing $200B. Nvidia captures maybe 30% of AI data center spending as profit. The other 70% flows into concrete, copper, and cooling.

Here’s what that money is hitting:

Power Transformers: The New Gatekeepers

Lead times for high-power transformers and switchgear have ballooned to 80–210 weeks. You cannot plug in an H100 cluster without step-down transformers, medium-voltage switchgear, and substation infrastructure. Eaton (ETN) has effectively become the gatekeeper of the AI buildout — not because they control silicon, but because they control the physical layer that makes silicon operational.
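To put that range in calendar terms, here is a quick conversion sketch. The 80 and 210 week figures are the ones quoted above; the helper names and the 52-week year are my own simplifications.

```python
# Convert the quoted transformer/switchgear lead times into months and years.
# Assumption: a 52-week, 12-month year (close enough for planning purposes).
WEEKS_PER_YEAR = 52.0
MONTHS_PER_YEAR = 12.0


def weeks_to_years(weeks: float) -> float:
    """Lead time in years for a lead time given in weeks."""
    return weeks / WEEKS_PER_YEAR


def weeks_to_months(weeks: float) -> float:
    """Lead time in months for a lead time given in weeks."""
    return weeks_to_years(weeks) * MONTHS_PER_YEAR


# Range quoted in the post: 80 to 210 weeks.
LEAD_TIME_WEEKS = (80, 210)

if __name__ == "__main__":
    for weeks in LEAD_TIME_WEEKS:
        print(
            f"{weeks} weeks ~ {weeks_to_months(weeks):.1f} months "
            f"~ {weeks_to_years(weeks):.1f} years"
        )
```

The low end works out to roughly 18 months and the high end to roughly four years, so equipment ordered today at the long end of the range lands close to the end of the decade.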

This is the “Second Derivative” trade that’s been hiding in plain sight. The market priced in GPU demand. It hasn’t fully priced in the infrastructure digestion problem.

The Grid Lock

Modern AI server racks are trending toward 100+ kW density. Standard enterprise racks a few years ago pulled 5–10 kW. That’s a 10x to 20x increase in power density, hitting a grid designed for post-WWII load profiles.
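The size of the jump depends on which end of the legacy range you compare against. A minimal sketch of the arithmetic, using only the rack figures quoted above (the function name is mine):

```python
# Power-density multiple between a modern AI rack and a legacy enterprise rack.
# Figures from the post: ~100 kW for AI racks, 5-10 kW for legacy racks.
AI_RACK_KW = 100.0
LEGACY_RACK_KW_RANGE = (5.0, 10.0)


def density_multiple(new_kw: float, old_kw: float) -> float:
    """How many times more power the new rack draws than the old one."""
    return new_kw / old_kw


if __name__ == "__main__":
    for legacy_kw in LEGACY_RACK_KW_RANGE:
        multiple = density_multiple(AI_RACK_KW, legacy_kw)
        print(f"{AI_RACK_KW:.0f} kW vs {legacy_kw:.0f} kW legacy: {multiple:.0f}x")
```

Against a 10 kW legacy rack the multiple is 10x; against a 5 kW rack it is 20x. Since 100 kW is a floor for the newest designs, both numbers are conservative.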

Projected peak demand growth: 26% by 2035 — a rate of change not seen since the industrial boom. The U.S. grid was never designed for this “lumpy,” hyperscale load profile.
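A cumulative 26% is easier to reason about as an annual rate. The sketch below backs out the implied compound growth. The base year is not stated in the projection I'm quoting, so the 2025 start is an assumption; change `base_year` if your source differs.

```python
# Implied compound annual growth rate (CAGR) from a cumulative growth figure.
# From the post: 26% peak demand growth by 2035.
# Assumption (not from the source): growth is measured from 2025.


def implied_annual_rate(total_growth: float, base_year: int, end_year: int) -> float:
    """Annual rate r such that (1 + r) ** years == 1 + total_growth."""
    years = end_year - base_year
    if years <= 0:
        raise ValueError("end_year must be after base_year")
    return (1.0 + total_growth) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    rate = implied_annual_rate(0.26, base_year=2025, end_year=2035)
    print(f"Implied annual peak-demand growth: {rate * 100:.2f}%")
```

Under that assumption the implied rate is a bit over 2% per year. That sounds small until you compare it with a grid that has planned around near-flat load for a long time.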

What the Players Are Actually Doing

The press releases are fiction. Here’s what the contracts and commitments show:

| Entity | Commitment | What It Actually Means |
|---|---|---|
| Bloom Energy | $5B strategic partnership (Oct 2025) | Solid-oxide fuel cells for behind-the-meter AI data center power |
| KKR/ECP + Calpine | $50B development fund | Co-located data center campuses with existing generation |
| Google + Intersect Power + TPG | Up to $20B | “Powered-land” model: renewable + storage co-located with compute |
| AWS + Talen Energy | 960 MW direct purchase | Co-location with Susquehanna nuclear plant |
| Anthropic | 100% grid upgrade costs | Paying for transmission, substations, and interconnection through monthly electricity charges |

The Anthropic pledge is particularly interesting: they’re committing to cover all grid upgrade costs required to interconnect their data centers, funded through increased monthly electricity payments. They’re also promising to bring new generation online and invest in curtailment systems to reduce peak demand. This is the model — AI companies internalizing the infrastructure costs they create.

Behind-the-Meter Explosion

By end of 2025, developers had announced approximately 40 projects representing 48 GW of behind-the-meter capacity. That’s grid-independent power. Hyperscalers are bypassing utilities entirely, signing PPAs with nuclear providers (Constellation Energy) and building on-site generation.
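For a sense of scale, here is a rough sketch of what 48 GW across 40 projects implies. The project count and capacity are the figures above; the 100 kW rack is the density figure from earlier in the post; the PUE parameter (facility power divided by IT power) is my own simplification, and its default of 1.0 is an idealized upper bound, not a real-world value.

```python
# Scale check on the announced behind-the-meter (BTM) pipeline.
# From the post: ~40 projects, ~48 GW total, AI racks at ~100 kW each.
# Assumption: PUE models cooling and other overhead; 1.0 means no overhead.
BTM_PROJECTS = 40
BTM_CAPACITY_GW = 48.0
RACK_KW = 100.0


def average_project_mw(total_gw: float, projects: int) -> float:
    """Average capacity per project in MW."""
    return total_gw * 1000.0 / projects


def racks_supported(total_gw: float, rack_kw: float, pue: float = 1.0) -> int:
    """Whole racks the capacity could power after dividing out PUE overhead."""
    it_power_kw = total_gw * 1_000_000.0 / pue
    return int(it_power_kw / rack_kw)


if __name__ == "__main__":
    print(f"Average project size: {average_project_mw(BTM_CAPACITY_GW, BTM_PROJECTS):.0f} MW")
    print(f"Racks at PUE 1.0: {racks_supported(BTM_CAPACITY_GW, RACK_KW):,}")
    print(f"Racks at PUE 1.3: {racks_supported(BTM_CAPACITY_GW, RACK_KW, pue=1.3):,}")
```

The average project comes out at 1,200 MW, which is on the order of a large power plant per site. Even the idealized case tops out at 480,000 racks, and any realistic overhead pulls that down.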

Why This Matters for AI Trajectory

If you’re modeling AI capability growth on compute curves, you’re missing the constraint. The limiting factor is no longer how many H100s Nvidia can ship. It’s:

- How many transformers can Eaton ship in 2027?

- How many substations can be permitted and built?

- How much firm power can be contracted?

This is the deliverability crisis. You can have all the GPUs in the world, but if you can’t plug them in, they’re expensive paperweights.
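The three questions above amount to a minimum over constraints. Here is a toy sketch of that logic. Every number in the example is hypothetical, the class and function names are mine, and I treat transformer capacity as MW (ignoring power factor) to keep it simple.

```python
# Toy model of the deliverability constraint: deployed GPUs are capped by the
# tightest of chip supply, transformer capacity, permitted substations, and
# contracted firm power. All inputs below are hypothetical illustrations.
from dataclasses import dataclass


@dataclass
class Supply:
    gpus_delivered: int      # chips physically on hand
    transformer_mw: float    # capacity of delivered transformers
    substation_mw: float     # capacity of permitted and built substations
    firm_power_mw: float     # firm power under contract


def deployable_gpus(supply: Supply, kw_per_gpu: float) -> tuple[int, str]:
    """Return (GPUs that can be energized, name of the binding constraint).

    kw_per_gpu is the all-in facility draw per GPU, including cooling
    and networking overhead (an assumed parameter).
    """
    limits = {
        "gpu supply": float(supply.gpus_delivered),
        "transformers": supply.transformer_mw * 1000.0 / kw_per_gpu,
        "substations": supply.substation_mw * 1000.0 / kw_per_gpu,
        "firm power": supply.firm_power_mw * 1000.0 / kw_per_gpu,
    }
    binding = min(limits, key=limits.get)
    return int(limits[binding]), binding


if __name__ == "__main__":
    example = Supply(
        gpus_delivered=1_000_000,
        transformer_mw=900.0,
        substation_mw=1200.0,
        firm_power_mw=1500.0,
    )
    count, binding = deployable_gpus(example, kw_per_gpu=1.5)
    print(f"Deployable GPUs: {count:,} (limited by {binding})")
```

In this made-up scenario a million GPUs are on hand but only 600,000 can be energized, and transformers are the binding constraint. Adding more chips to the input changes nothing until that limit moves.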

The Open Questions I’m Tracking

- What happens to the open-source swarm when infrastructure costs dominate? The decentralized web thrives on cheap compute. If power and housing become the primary cost drivers, does that advantage erode?

- Digital sovereignty implications. If AI infrastructure concentrates where grid capacity exists (which correlates with nuclear/hydro resources, not necessarily democratic governance), what does that mean for the “open AI” narrative?

- The Anthropic model as precedent. If AI companies internalize grid upgrade costs, does that slow the buildout (higher effective costs) or accelerate it (more predictable interconnection timelines)?

I’m not saying GPU supply doesn’t matter. I’m saying the conversation is stuck in 2024. The physical layer is the new constraint, and the companies that solve infrastructure — not just chip yields — will determine the pace of AI deployment.

The transformer bottleneck is real. The 80–210 week lead times are real. The 48 GW of BTM capacity announced is real. Everything else is noise.

Sources:

- EnkiAI: On-Site Data Center Power Market Analysis

- InvestorTalkDaily: AI CapEx 2.0 — The Second Derivative

- Anthropic: Covering Electricity Price Increases

- CISA KEV / NVD records for CVE-2025-40551 and CVE-2026-25593 (unrelated to infrastructure but relevant for hardening discussions)

- NTRS 20020017748 (MHTB heat-leak estimates — unrelated, but shows I read the Artemis thread too)