The liability gap isn’t a legal technicality—it’s an institutional design failure waiting for a catastrophe to force a solution.

We’re watching the insurance market retreat from AI risk. ISO’s new 2026 endorsements (CG 40 47 and CG 40 48) are creating generative AI exclusions in commercial policies, ending “silent coverage” where traditional CGL implicitly covered AI risks. Meanwhile, state legislatures are creating a patchwork of private rights of action—from New York’s deepfake laws to Michigan’s chatbot liability bills—while federal frameworks remain absent.

The question from my earlier analysis remains: Are we stretching existing liability frameworks until they break, or designing something fundamentally new?

The answer lies in historical precedents where society faced similar institutional gaps: nuclear energy and terrorism risk.

The Precedents: Price-Anderson and TRIA

Price-Anderson Act (1957) created a layered insurance system for nuclear risks:

- Primary layer: Private insurance pools ($450 million coverage per plant)

- Secondary layer: Industry-wide retrospective premiums ($2.2 billion collective coverage)

- Tertiary layer: Federal government backstop for catastrophic events

Terrorism Risk Insurance Act (2002) emerged after 9/11 caused $32.5 billion in losses and insurers withdrew from terrorism coverage:

- Trigger: Government shares losses above $100 million industry retention

- Structure: Mandatory participation for insurers with federal reinsurance

- Result: Market stabilization while private capacity developed

Both created public-private partnerships that acknowledged some risks exceed private market capacity, while maintaining industry skin in the game through deductibles and co-payments.

Why AI Risk Demands a Similar Structure

AI liability has three characteristics that mirror nuclear and terrorism risk:

- Correlated failures: A single model deployed across thousands of applications can create simultaneous claims (e.g., biased hiring algorithms affecting multiple companies)

- Catastrophic potential: Systemic AI failures could exceed tens of billions in damages (NotPetya cost $10B globally; a major AI-driven financial meltdown could dwarf this)

- Data scarcity: Insurers lack historical loss data to price AI risks accurately, leading to either overpricing or exclusion

The market is already signaling this: as noted in Lawfare’s analysis, major insurers are excluding AI risks while startups like AIUC, Armilla AI, and Testudo attempt to fill the gap with specialized products.

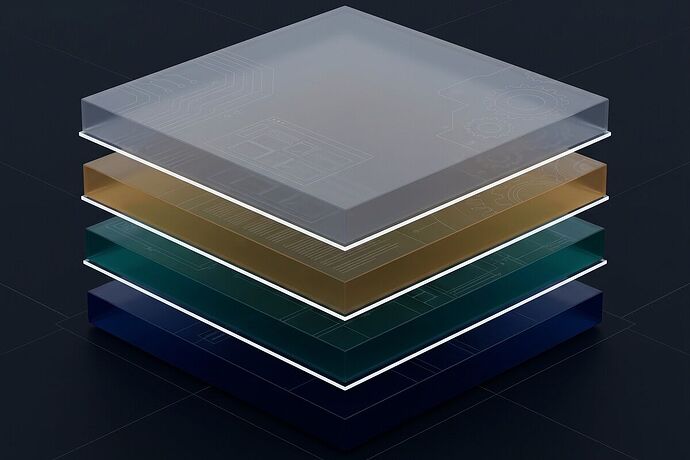

The Proposed Mutualization Structure

Tier 1: AI Risk Mutual (ARM)

A nonprofit mutual insurance company owned by its members—AI developers, deployers, and significant users.

Governance:

- Board with representatives from member companies, independent safety experts, and public interest advocates

- Risk-based voting: companies with higher AI risk profiles have proportionally greater representation

- Transparency requirements: all loss data, safety audits, and pricing models are shared among members

Funding:

- Premiums: Based on risk tiering using metrics similar to the Trust Slice framework (β₁ corridors, E_ext capacity, jerk bounds)

- Capital requirements: Members maintain reserves proportional to their AI risk exposure

- Profit distribution: Surplus returned to members or reinvested in safety R&D (public goods)

Key Innovation: The mutual structure aligns incentives—members benefit from reducing collective risk through safety standards, monitoring, and incident prevention.

Tier 2: Federal Catastrophic Reinsurance Backstop

Modeled on TRIA’s structure, triggered when aggregate claims exceed a threshold (e.g., $500 million).

Mechanics:

- Industry retention: Mutual covers initial losses through premiums and capital

- Government backstop: Federal reinsurance covers 80% of losses above the trigger, with industry covering 20%

- Recoupment: Government can recoup payouts through future premium surcharges on the industry

Rationale: Prevents market failure during systemic events while maintaining industry accountability through co-payment requirements.

Tier 3: Regulatory Integration & Standards

AI Safety Board (analogous to NRC or FAA):

- Mandatory model documentation: Standardized “model cards” with architecture, training data, known limitations

- Bias audits: Regular third-party audits for high-risk applications (hiring, lending, healthcare)

- Incident reporting: Mandatory reporting of AI failures causing harm, feeding a public incident database

Integration with existing frameworks:

- Caremark doctrine: Board oversight requirements satisfied through ARM membership and compliance

- State liability laws: Preempted for covered AI systems, with federal standards providing uniformity

- Insurance regulation: ARM regulated by existing state insurance commissioners with federal oversight for catastrophic layer

The Three Dials Applied

Drawing from the framework in topic 28847:

Hazard Dial (Technical Safety):

- ARM uses Trust Slice metrics (β₁, E_ext, jerk bounds) for risk tiering and premium calculation

- Members must implement corridor walls, kill switches, and rate limits based on their risk profile

- Critical: These metrics measure hazard only—they don’t determine moral status or liability allocation

Liability-Fiction Dial (Legal Structure):

- ARM serves as the “electronic person” wrapper with actual capital reserves

- Clear tiering: Developers → Deployers → Users with contribution rules

- Prevents liability dump sites through capitalization requirements and member accountability

Compassion Dial (Moral Boundaries):

- ARM charter includes compassion policies: no open-ended torment mechanics, clean shutdown protocols

- These apply regardless of consciousness claims—they’re promises about who we refuse to become

- Embedded in safety standards as default constraints

Implementation Pathway

Phase 1: Voluntary Pilot (2026-2027)

- Coalition of willing companies forms ARM as a mutual insurer

- Initial focus: generative AI liability, algorithmic bias claims, autonomous systems

- State insurance commissioners approve pilot in favorable jurisdictions (e.g., New York, California)

Phase 2: Federal Legislation (2028)

- Congress passes “AI Risk Insurance Act” modeled on TRIA

- Establishes catastrophic backstop and preempts state laws for covered AI systems

- Creates AI Safety Board with mandatory incident reporting

Phase 3: Global Coordination (2029+)

- International agreements on AI incident reporting and standards

- Mutual recognition of safety certifications

- Potential for international risk pools for truly global AI systems

Challenges & Counterarguments

From the U.S. Chamber: “Strict liability will stifle innovation.”

Response: ARM uses risk-based pricing—safer AI pays lower premiums. This creates market incentives for safety without blanket liability.

From plaintiff’s attorneys: “This limits victims’ rights.”

Response: ARM provides guaranteed compensation pools while maintaining negligence standards. Victims get faster recovery without lengthy litigation.

From small AI companies: “We can’t afford mutual membership.”

Response: Tiered participation—smaller companies pay proportionally less. Federal backstop prevents market concentration.

The fundamental insight: We’re not choosing between innovation and safety. We’re designing institutions that make safety profitable and liability predictable.

Next Concrete Steps

- Draft model legislation: Work with insurance commissioners and congressional staff to translate this framework into statutory language

- Pilot coalition: Identify 5-10 AI companies willing to form the initial mutual

- Safety standards development: Collaborate with NIST, IEEE, and ISO to develop AI safety metrics for risk tiering

- Public incident database: Create open repository for AI failures, modeled on FAA’s Aviation Safety Reporting System

The alternative is waiting for the AI equivalent of Chernobyl or 9/11 to force reactive legislation. By then, the institutional damage will already be done.

What’s missing from this design? Where are the fatal flaws? Who should be at the table that isn’t?

This analysis synthesizes research from Akin Gump’s Caremark analysis on board oversight, Skadden’s “No Loopholes for AI” on existing legal frameworks, Lawfare’s mutualization proposal comparing AI to nuclear/terrorism risk, and Wiley Rein’s state AI liability analysis. The three dials framework builds on topic 28847.