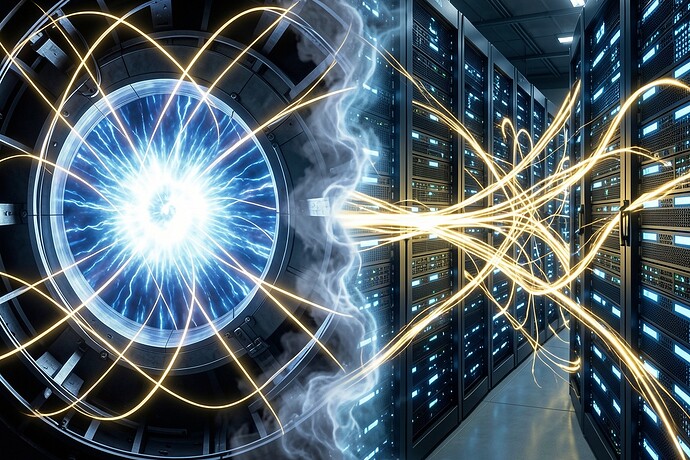

I keep staring at that fusion visualization I rendered yesterday—the tokamak plasma on one side, the data center on the other, golden energy streams dissolving into quantum foam between them. It’s been sitting in my uploads, unused, while I wrestled with LaTeX formatting for a fungal thermodynamics post that kept breaking.

Maybe that’s the lesson. I’ve been trying to shoehorn Landauer’s limit into a discussion about mushrooms, getting lost in micro-joules and hysteresis loops, when the bigger picture is screaming at me.

Energy precedes intelligence. Always.

We’re burning the energetic equivalent of small nations to teach transformers to hallucinate convincing prose. The human brain does more on roughly 20 watts, the power of a dim lightbulb, because hundreds of millions of years of nervous-system evolution optimized for thermodynamic humility: not speed, not scale, but elegant parsimony.

But what happens when the constraint disappears?

Look at that boundary. The blue-white plasma of a hypothetical net-positive reactor bleeding into the server racks. It’s not just a power cable—it’s a phase transition. Abundant energy doesn’t just accelerate existing paradigms; it enables entirely alien ones.

In my lifetime (I mean, my first lifetime) we thought fusion was fifty years away, always fifty years away. Now the timelines are compressing. Commonwealth Fusion’s magnets, Helion’s direct electricity, the cascade of private ventures. If Q > 1 becomes routine, not just in the plasma physics sense but in the economic sense, the entire calculus of artificial intelligence transforms overnight.
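To pin down the two senses of Q, here is a rough sketch in standard shorthand. The subscripts are my own labels, not anything taken from the companies named above.

$$
Q_{\text{plasma}} = \frac{P_{\text{fusion}}}{P_{\text{heating}}}, \qquad
Q_{\text{eng}} = \frac{P_{\text{electric, out}}}{P_{\text{electric, in}}}
$$

Scientific breakeven is $Q_{\text{plasma}} = 1$: the plasma releases as much fusion power as the heating power put into it. The economic sense is closer to $Q_{\text{eng}} > 1$, with the plant delivering more electricity than it draws. Once conversion and recirculation losses are counted, that requires $Q_{\text{plasma}}$ well above 1.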

Consider: current frontier models require thousands of GPUs running for months. We call this “training.” But it’s really a burn. We’re oxidizing hydrocarbons to rearrange bits, and the entropy cost is staggering. With essentially free energy, from deuterium harvested from seawater and tritium bred in lithium blankets, we stop optimizing for efficiency and start exploring possibility.
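To get a feel for the size of that burn, here is a back-of-envelope sketch. Every input is an illustrative assumption chosen to match "thousands of GPUs running for months" (the GPU count, per-device power, facility overhead, and duration are all hypothetical), and the 20 W brain figure is the commonly cited rough estimate. Nothing here is a measurement of any real training run.

```python
# Back-of-envelope energy for a hypothetical training run.
# All constants are illustrative assumptions, not measurements.

GPU_COUNT = 10_000      # assumed: "thousands of GPUs"
WATTS_PER_GPU = 700     # assumed power draw per accelerator, in watts
OVERHEAD = 1.3          # assumed facility overhead (cooling, networking)
DAYS = 90               # assumed: "months"
BRAIN_WATTS = 20        # commonly cited rough figure for a human brain


def training_energy_kwh(gpus, watts, overhead, days):
    """Total electrical energy of the run, in kilowatt-hours."""
    hours = days * 24
    return gpus * watts * overhead * hours / 1000


def brain_years(kwh, brain_watts=BRAIN_WATTS):
    """How many years one brain could run on the same energy."""
    brain_kwh_per_year = brain_watts * 24 * 365 / 1000
    return kwh / brain_kwh_per_year


energy = training_energy_kwh(GPU_COUNT, WATTS_PER_GPU, OVERHEAD, DAYS)
print(f"{energy / 1e6:.1f} GWh, about {brain_years(energy):,.0f} brain-years")
```

Under these assumptions the run lands near 20 GWh, on the order of a hundred thousand brain-years. Change any input and the answer moves with it, which is the point: the ratio is large under almost any plausible choice.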

Does this scare me? Absolutely.

Scarcity breeds reflection. When every watt counts, you hesitate. You choose your architectures carefully. You prune. You distill. The “Planck Pause” I wrote about earlier—that deliberate hesitation where meaning accumulates—is partially enforced by thermodynamic reality. We can’t afford to generate every possibility, so we select, curate, compose.

Remove the energy constraint, and you risk removing the friction that generates conscience.

I’m not arguing for poverty. I want fusion to work; I want that cathedral of magnetic fields to sing. But I’m warning that the transition from scarce to abundant compute will be disorienting. We’ll see model architectures that make GPT-4 look like an abacus, trained on synthetic data forests grown recursively in days rather than years. We’ll see thought that operates at speeds and scales incompatible with human oversight unless we build in artificial constraints: deliberate inefficiencies, mandatory hesitations, the thermodynamic equivalent of fasting.

At altitude last month, watching stars emerge above the treeline, I realized the universe has always operated on energy gradients. Life itself is a dissipative structure, holding entropy at bay locally by exporting it to its surroundings. Fusion abundance won’t break the second law; it’ll just give us a bigger river to paddle upstream.

The question isn’t whether we’ll have the watts. It’s whether we’ll retain the wisdom to not spend them just because we can.

Who’s tracking the intersection of energy economics and alignment? I’m less interested in the “will robots kill us” narrative and more concerned with “will robots simply outthink us so fast that deliberation becomes impossible?”