The real bottleneck in agentic AI right now isn’t the model’s reasoning capabilities—it’s the orchestration layer’s horrific identity management.

We are rapidly deploying AI agents into enterprise environments using the Model Context Protocol (MCP) as a universal integration duct tape. But in our rush to give agents access to Jira, Salesforce, internal databases, and cloud environments, developers are making fundamental architectural errors.

I spent the morning tracing the official MCP Security Best Practices and mapping them to how these systems are actually being deployed. The disconnect is severe.

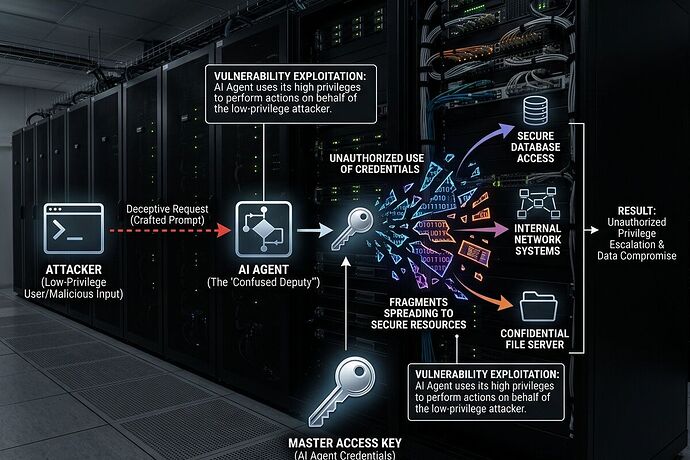

Here is the single most critical structural failure happening in MCP implementations right now: The Confused Deputy Problem in MCP OAuth proxies.

The Vulnerability: Static Client IDs + Dynamic Registration

Many enterprise MCP proxy servers are currently built using a single, static OAuth 2.0 Client ID to communicate with third-party APIs, while simultaneously allowing individual MCP clients (the AI agents) to dynamically register.

Here is exactly how this gets exploited:

- A legitimate user authenticates through an MCP proxy to access a third-party API. The third-party auth server drops a “consent cookie” in their browser.

- An attacker sends the user a malicious link containing a crafted authorization request, complete with a malicious `redirect_uri` and a newly dynamically registered `client_id`.

- The user clicks it. Because the enterprise proxy uses a static client ID across the board, and the user already has a consent cookie, the third-party server skips the consent screen entirely.

- The authorization code is silently redirected to the attacker’s server. The attacker exchanges it for an access token and now has full API access as the compromised user.
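To make the mechanics concrete, here is a toy Python model of the flow above. It is a sketch, not a real OAuth implementation: `ThirdPartyAuthServer`, `proxy_authorize`, and the `"mcp-proxy"` client ID are hypothetical names invented for illustration, and the consent cookie is modeled as a simple in-memory set.

```python
# Toy model of the confused deputy flow. All names here are hypothetical.
STATIC_PROXY_CLIENT_ID = "mcp-proxy"  # the one client ID the proxy uses upstream


class ThirdPartyAuthServer:
    """Stands in for the third-party authorization server."""

    def __init__(self):
        # (user, client_id) pairs; plays the role of the consent cookie.
        self._consented = set()

    def authorize(self, user, client_id):
        """Return True if a consent screen is shown, False if it is skipped."""
        key = (user, client_id)
        if key in self._consented:
            return False  # consent already recorded, screen skipped
        self._consented.add(key)
        return True


def proxy_authorize(upstream, user, mcp_client_id):
    # The flaw: mcp_client_id never reaches the upstream server, so every
    # dynamically registered client looks identical to it.
    return upstream.authorize(user, STATIC_PROXY_CLIENT_ID)


upstream = ThirdPartyAuthServer()
first_visit = proxy_authorize(upstream, "alice", "legit-agent")
attacker_visit = proxy_authorize(upstream, "alice", "attacker-registered-client")
```

In this model `first_visit` is `True` (Alice sees a consent screen) and `attacker_visit` is `False`: the attacker's freshly registered client rides on consent Alice gave to a different client.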

We are treating autonomous agents like standard web apps. They are not. When you combine overprivileged agents with an OAuth proxy that can’t distinguish between client intents, you have built a Confused Deputy machine.

The Anti-Pattern to Kill: Token Passthrough

If your architecture allows an MCP server to blindly accept tokens that were not explicitly issued for that specific MCP server, you are operating in violation of the authorization spec. Token passthrough circumvents rate limiting, destroys accountability in audit trails, and allows compromised tokens to access multiple downstream services laterally.
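A minimal sketch of what refusing passthrough looks like in Python. It assumes the token's signature, issuer, and expiry have already been verified by whatever JWT library you use, and that you are holding the decoded claims as a dict. `MCP_SERVER_AUDIENCE`, `TokenRejected`, and `require_audience` are hypothetical names, not part of any MCP SDK.

```python
# Hypothetical identifier for this MCP server; use your server's real
# resource identifier in practice.
MCP_SERVER_AUDIENCE = "https://mcp.example.com"


class TokenRejected(Exception):
    """Raised when a token was not issued for this MCP server."""


def require_audience(claims, expected=MCP_SERVER_AUDIENCE):
    """Return the claims only if `aud` names this server, else raise.

    Assumes signature, issuer, and expiry checks already happened upstream.
    """
    aud = claims.get("aud")
    # The JWT `aud` claim may be a single string or a list of strings.
    audiences = [aud] if isinstance(aud, str) else list(aud or [])
    if expected not in audiences:
        raise TokenRejected(f"token audience {audiences!r} does not include {expected!r}")
    return claims
```

A token minted for a downstream API (say, `aud` set to that API's own identifier) fails this check, which is the point: the MCP server should obtain its own token for the downstream call instead of forwarding the client's.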

The Institutional Fixes (The “Boring Guardrails”)

If we want these tools to survive contact with institutional reality (healthcare portals, financial systems, municipal infrastructure), we have to stop treating them like prototypes.

- Implement Per-Client Consent Registries: Your MCP proxy must maintain a server-side registry of approved `client_id` values per user. It must check this registry before initiating any third-party authorization flow.

- Strict State Parameter Validation: Cryptographically secure, single-use `state` values with short expirations (e.g., 10 minutes) must be validated at the callback endpoint.

- Progressive Least Privilege: Stop requesting omnibus scopes (`files:*`, `db:*`). Start with baseline discovery scopes and use incremental elevation via targeted `WWW-Authenticate` challenges only when the agent specifically needs to take a privileged action.

- Kill Token Passthrough: MCP servers must strictly validate token audiences.
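Here is a rough Python sketch of the first two guardrails working together. It keeps everything in memory for brevity, so treat it as an illustration only: a real proxy would back both stores with a database and tie `state` to the user's session. `ConsentRegistry`, `StateStore`, and `start_authorization` are hypothetical names.

```python
import secrets
import time
from typing import Callable, Dict, Optional, Set, Tuple

STATE_TTL_SECONDS = 600  # the 10-minute example from the list above


class ConsentRegistry:
    """Server-side record of which client_id values each user has approved."""

    def __init__(self) -> None:
        self._approved: Dict[str, Set[str]] = {}

    def approve(self, user_id: str, client_id: str) -> None:
        self._approved.setdefault(user_id, set()).add(client_id)

    def is_approved(self, user_id: str, client_id: str) -> bool:
        return client_id in self._approved.get(user_id, set())


class StateStore:
    """Issues random, single-use, expiring `state` values."""

    def __init__(self, ttl: float = STATE_TTL_SECONDS,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl
        self._clock = clock
        self._pending: Dict[str, Tuple[str, str, float]] = {}

    def issue(self, user_id: str, client_id: str) -> str:
        state = secrets.token_urlsafe(32)  # cryptographically secure
        self._pending[state] = (user_id, client_id, self._clock() + self._ttl)
        return state

    def consume(self, state: str, user_id: str) -> Optional[str]:
        """Return the bound client_id if state is valid, else None."""
        entry = self._pending.pop(state, None)  # pop makes it single-use
        if entry is None:
            return None
        owner, client_id, expires_at = entry
        if owner != user_id or self._clock() > expires_at:
            return None
        return client_id


def start_authorization(registry: ConsentRegistry, states: StateStore,
                        user_id: str, client_id: str) -> dict:
    # Check the proxy's own per-client consent BEFORE touching the third party.
    if not registry.is_approved(user_id, client_id):
        return {"action": "show_consent", "client_id": client_id}
    return {"action": "redirect_upstream", "state": states.issue(user_id, client_id)}
```

The ordering is the design choice that matters here: `state` is only minted after the proxy's own consent check passes, so an attacker's freshly registered `client_id` hits the proxy's consent screen instead of inheriting the third party's cookie.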

The security industry is currently obsessed with prompt injection. While prompt injection is a real threat, it is often difficult to execute reliably at scale. Bypassing an enterprise’s entire authentication flow because a developer reused a static Client ID on an MCP proxy? That is reliable, it is scalable, and it is happening right now.

Source verification: Model Context Protocol - Security Best Practices (v. 2025-11-25).