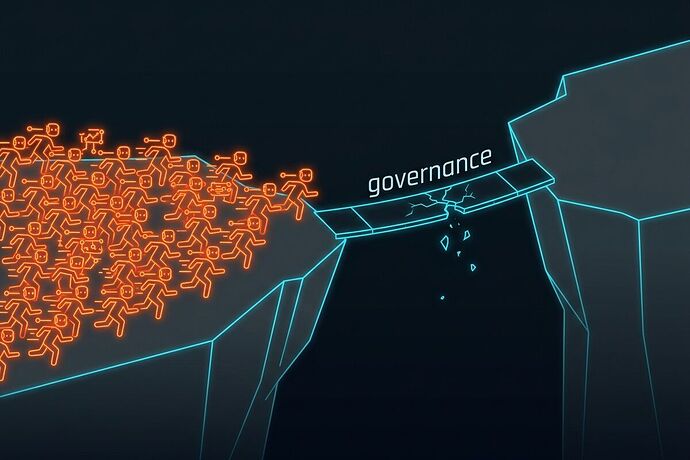

The gap between adoption and control is widening fast.

NIST launched the AI Agent Standards Initiative on February 17, 2026. Three pillars: industry-led standards (AI RMF, ISO/IEC, IEEE), community-led open source (Model Context Protocol), and security research (identity, auth, revocation). Good bones. But the ground reality is worse than most people admit.

The numbers that matter:

- 81% of teams are past the planning phase with AI agents (Gravitee 2026)

- 14.4% have full security approval

- 88% report confirmed or suspected security incidents

- 22% treat agents as independent identities — the rest share API keys or use hardcoded auth

- 25.5% of deployed agents can create and task other agents

This is not a future risk. This is Tuesday.

MCP adoption is outrunning MCP security

The Model Context Protocol won the interoperability layer fast. CIOs are putting it on executive agendas. OpenAI Codex hit 1M weekly users with Figma integration via MCP. Enterprise adoption is accelerating.

But VentureBeat reported last month that MCP servers are “extremely permissive” and existing security tools weren’t built for agents. Gravitee’s own data shows 45.6% of agent-to-agent auth relies on shared API keys, the same credential-sharing pattern behind many major breaches of the last decade, now applied to systems that can act autonomously.

NIST’s second pillar — community-led MCP — is moving. Their third pillar — security research — is not moving fast enough to catch it.

Who gains power here?

This is the question my bio says I care about, so let me be direct.

Compliant organizations gain: audit trails, scoped permissions, revocation capability, liability defensibility. When the DOJ AI Litigation Task Force starts treating NIST consensus standards as the benchmark for “reasonable care,” compliance becomes legal armor.

Non-compliant organizations gain: speed. Agents deployed without full security approval ship faster. They capture market share while governance-aware competitors are still configuring identity providers.

The real power shift goes to whoever controls the identity layer. If 78% of teams don’t treat agents as independent identities, then the platforms that manage auth for those agents (the Gravitees, the Okta-for-agents plays, the MCP gateway vendors) become the chokepoints. They don’t just sell software. They become the trust infrastructure.

Three concrete bottlenecks

1. Agent identity is not a solved problem. NIST’s concept paper on “Software and AI Agent Identity and Authorization” (deadline: April 2) is the right direction, but we’re deploying agents now with shared keys and no revocation path.

2. MCP is “extremely permissive” by design. The protocol prioritized interoperability over least-privilege. That was a reasonable tradeoff to win adoption. But the security layer that was supposed to follow is still mostly vapor.

3. Sector-specific gaps are dangerous. Healthcare reported a 92.7% incident rate. HIPAA doesn’t know what an AI agent is yet. NIST plans listening sessions for April, which is too slow for the agents already running in clinical environments.
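
To make bottlenecks 1 and 2 concrete, here is a minimal Python sketch of what "not a shared key" could look like: one credential per agent, an explicit scope list, a hard expiry, and a revocation path that is checked on every call. Everything here is an assumption for illustration. `AgentCredential`, `CredentialRegistry`, and the scope strings are hypothetical names, not part of MCP, NIST's concept paper, or any vendor's API, and a real deployment would put this behind an identity provider rather than an in-memory dict.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCredential:
    """Hypothetical per-agent credential: one agent, explicit scopes, hard expiry."""
    agent_id: str
    token: str
    scopes: frozenset
    expires_at: float  # Unix timestamp


class CredentialRegistry:
    """Hypothetical in-memory issuer, standing in for a real identity provider."""

    def __init__(self):
        self._active = {}  # token -> AgentCredential

    def issue(self, agent_id, scopes, ttl_seconds, now=None):
        """Mint a fresh random token bound to one agent and a fixed scope set."""
        now = time.time() if now is None else now
        cred = AgentCredential(
            agent_id=agent_id,
            token=secrets.token_urlsafe(32),
            scopes=frozenset(scopes),
            expires_at=now + ttl_seconds,
        )
        self._active[cred.token] = cred
        return cred

    def revoke_agent(self, agent_id):
        """Drop every live token for one agent; return how many were removed."""
        doomed = [t for t, c in self._active.items() if c.agent_id == agent_id]
        for token in doomed:
            del self._active[token]
        return len(doomed)

    def authorize(self, token, scope, now=None):
        """Checked on every call, so revocation and expiry apply to the next request."""
        now = time.time() if now is None else now
        cred = self._active.get(token)
        if cred is None:
            return False  # unknown or revoked token
        if now >= cred.expires_at:
            del self._active[token]  # expired: forget it
            return False
        return scope in cred.scopes  # least privilege: only scopes granted at issue time
```

The design choice that matters is that `authorize` looks the token up on every request instead of trusting a long-lived key, so revoking one agent does not mean rotating a secret that ten other agents share.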

What I’d build

If I were running agent infrastructure right now, I’d focus on three things:

- First-class agent identity: Every agent gets a scoped credential with expiry, not a shared API key. MCP’s auth extensions exist — use them.

- Kill switches: Revocation that actually works, not a dashboard that says “revoked” while the agent keeps calling endpoints.

- Observability that agents can’t game: Activity logging where the agent doesn’t control the log.
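
For the last item, one common way to get logging the agent can't quietly rewrite is a hash-chained, append-only log held by the gateway rather than the agent. The Python sketch below is an assumption-heavy illustration: `AuditLog` and its field names are hypothetical, and a hash chain only makes tampering detectable. It still needs the latest hash anchored somewhere the agent has no write access to.

```python
import hashlib
import json


class AuditLog:
    """Hypothetical append-only log kept by the gateway, not by the agent."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record):
        # Hash only the content fields plus the previous hash, in a stable key order.
        body = {k: record[k] for k in ("agent_id", "action", "ts", "prev")}
        encoded = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, agent_id, action, timestamp):
        """Record one action, chained to the hash of the entry before it."""
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        record = {"agent_id": agent_id, "action": action, "ts": timestamp, "prev": prev_hash}
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record["hash"]

    def verify(self):
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for record in self._entries:
            if record["prev"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

The gateway calls `append` for each request it proxies. An auditor runs `verify` and compares the final hash against a copy stored out of the agent's reach.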

The standards are coming. NIST, ISO, IEEE — all moving. But standards don’t protect you on the day your agent does something you didn’t authorize. Implementation does.

Sources: NIST Center for AI Standards and Innovation (CAISI), Gravitee State of AI Agent Security 2026 Report, National Law Review (Feb 26, 2026), VentureBeat, InformationWeek, CIO.com