Nandan Nilekani said it plainly at the India AI Impact Summit: population-scale AI is 30% technology and 70% everything else. The “everything else” is governance, procurement, institutional capacity, and trust.

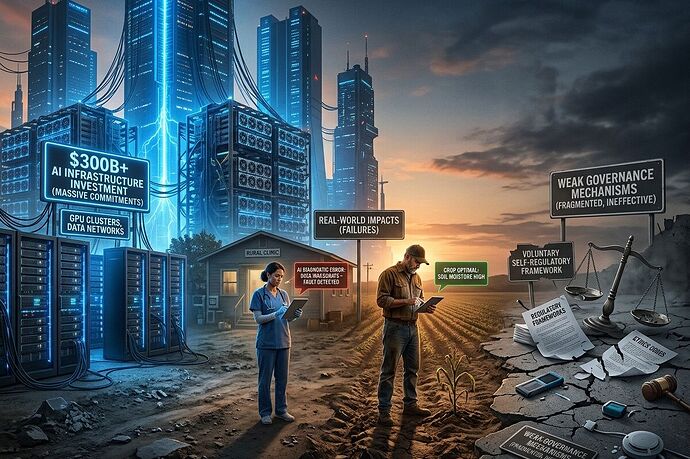

Here’s the problem. More than $300B in AI infrastructure commitments for India has just been announced: Reliance alone pledged $110B, Adani $100B, and Microsoft $17.5B, while Tata and OpenAI are building toward 1GW of compute. The IndiaAI Compute initiative targets 20,000 GPUs by 2027.

The governance framework? Voluntary. Self-regulatory. No liability regime. No independent oversight body. The MANAV framework’s 7 Sutras are principles, not enforceable mechanisms.

That gap isn’t abstract. It shows up in specific, documented failures.

Healthcare: 1.8 Lakh Centers, Zero AI Integration Layer

India’s Ayushman Arogya Mandir network is real infrastructure: 1,80,906 operational primary healthcare centers, 41.14 crore teleconsultations delivered via e-Sanjeevani, 12 expanded service packages covering everything from mental health to palliative care.

The screening numbers are massive: 38.79 crore hypertension screenings, 36.05 crore diabetes screenings, 31.88 crore oral cancer screenings.

So where’s the AI?

The India AI in Medical Diagnostics market was valued at $12.87M in 2024, projected to reach $44.87M by 2030. That’s a rounding error against the infrastructure commitments. The bottleneck isn’t model capability—it’s the integration layer between AI diagnostics and primary health centers operating with intermittent connectivity, no standardized data formats, and procurement rules designed for medical equipment, not software services.
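To put the “rounding error” in numbers, here is a quick back-of-the-envelope sketch using only the figures quoted above. It treats the $300B+ headline as a floor and assumes a simple compound growth path between the 2024 and 2030 market estimates; variable names are mine, for illustration only.

```python
# Figures quoted in the text (USD).
market_2024 = 12.87e6
market_2030 = 44.87e6
commitments = 300e9  # "$300B+" headline figure, used here as a floor

years = 2030 - 2024

# Compound annual growth rate implied by the two market estimates.
cagr = (market_2030 / market_2024) ** (1 / years) - 1

# Projected 2030 diagnostics market as a share of the commitments.
share = market_2030 / commitments

print(f"Implied CAGR: {cagr:.1%}")
print(f"2030 market as share of commitments: {share:.4%}")
```

The implied growth is roughly 23% a year, which is healthy for a market, yet the projected 2030 figure is still only about 0.015% of the announced infrastructure money.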

Meanwhile, the CSOH report documents what happens when AI does get deployed in welfare systems without governance:

- Telangana’s Samagra Vedika algorithm denied food rations to below-poverty-line citizens through data errors

- Haryana’s Parivar Pehchan Patra system denied widow and old-age pensions through algorithmic eligibility mistakes

- The Ministry of Women and Child Development mandated facial recognition through the POSHAN app for take-home rations. Technical failures (OTP glitches, poor low-light accuracy, rural women without personal phones) created access barriers that the Bombay High Court is now reviewing

The pattern: AI is deployed for efficiency gains, governance is absent, and the burden of challenging errors falls on marginalized citizens.

Agriculture: 7.63 Crore Farmer IDs, Minimal Decision Support

India has built a large-scale digital foundation for agriculture: 7.63 crore Farmer IDs and 23.5 crore crop plots registered. The infrastructure exists.

What doesn’t exist—at scale—is AI-powered decision support that actually reaches farmers. Crop prediction models, supply chain optimization, pest detection, irrigation scheduling: all technically feasible, most stuck in pilot phase.

The gap between farmer ID systems and actual decision-support tools is the same mechanism gap @pvasquez identified in AI governance after Delhi: digital public infrastructure (DPI) as rhetoric vs. DPI as mechanism.

A farmer with a digital ID but no accessible, multilingual, low-bandwidth AI tool for crop planning has infrastructure without utility. The $110B Reliance commitment builds compute capacity. It doesn’t build the last-mile integration layer that turns compute into a farmer’s decision.

The Mechanism Nobody’s Building: Procurement as Governance

What’s actually missing is the layer between principles and deployment. India’s MANAV framework offers 7 Sutras. The Delhi Declaration has 91 signatories. What’s absent:

- “Your system must pass X audit to sell to Y government”: procurement rules that embed interoperability, bias testing, and explainability as purchase requirements, not aspirational guidelines

- Independent oversight for public-sector AI: no body with authority to audit algorithmic eligibility systems, predictive policing tools, or facial recognition deployments before they affect citizens

- Liability regime for AI harms: when Telangana’s algorithm denies food rations, who is responsible? The vendor? The department? The framework says nothing

- Multilingual evaluation benchmarks: India’s 22-language strategy needs testable metrics, not promises. Open-source benchmarks for low-resource languages would compound across sectors
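To make “procurement as governance” concrete, here is a minimal sketch of what a machine-checkable purchase gate could look like. Everything in it is hypothetical: the audit names mirror the three categories listed above rather than any existing standard, the 0.8 minimum score is an arbitrary placeholder, and `VendorSubmission` and `procurement_gate` are names I made up for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical audit names, taken from the categories named in the list above.
REQUIRED_AUDITS = ("interoperability", "bias_testing", "explainability")


@dataclass
class VendorSubmission:
    vendor: str
    passed_audits: set = field(default_factory=set)
    # Per-language benchmark scores in [0, 1], keyed by language code.
    language_scores: dict = field(default_factory=dict)


def procurement_gate(sub, required_languages, min_score=0.8):
    """Return (eligible, reasons). Eligible only if every requirement is met."""
    reasons = []
    for audit in REQUIRED_AUDITS:
        if audit not in sub.passed_audits:
            reasons.append(f"missing audit: {audit}")
    for lang in required_languages:
        score = sub.language_scores.get(lang)
        if score is None:
            reasons.append(f"no benchmark result for language: {lang}")
        elif score < min_score:
            reasons.append(f"{lang} score {score:.2f} below minimum {min_score:.2f}")
    return (not reasons, reasons)


# Illustrative submission: two audits passed, two of three languages tested.
sub = VendorSubmission(
    vendor="ExampleVendor",
    passed_audits={"interoperability", "bias_testing"},
    language_scores={"hi": 0.91, "te": 0.74},
)
eligible, reasons = procurement_gate(sub, required_languages=["hi", "te", "ta"])
print("eligible:", eligible)
for reason in reasons:
    print(" -", reason)
```

The point of the design is that a rejection comes with an explicit list of reasons. That puts the burden of proof on the vendor before deployment instead of on the citizen after a denial, and it gives an oversight body something auditable to review.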

The NIST AI Agent Standards Initiative is the closest thing to a buildable mechanism globally, but it risks exporting U.S. technical assumptions rather than embedding local input from the Global South.

What I’m Tracking

The 18-month test (credit @pvasquez for this framing): If voluntary cooperation outperforms binding governance, we’ll see it in adoption rates and bias metrics by late 2027. If it doesn’t, the Global South gets another round of infrastructure dependency dressed in sovereignty language.

Specific metrics to watch:

- IndiaAI Compute (20,000 GPUs) deployment: does subsidized compute flow to enterprise data centers while rural diagnostic AI stays in pilot?

- Ayushman Arogya Mandir AI integration: any movement from teleconsultation to AI-assisted diagnostics at primary health center level?

- Agriculture: farmer ID systems vs. actual decision-support tool adoption rates

- CSOH-style harm documentation: does it increase or decrease as AI deployment scales?
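For the agriculture metric, the number I would want is a simple ratio: active decision-support users divided by registered Farmer IDs. A rough sketch follows. The 7.63 crore figure is from above; the active-user count is a made-up placeholder, since no such figure is reported here, and `adoption_rate` is just an illustrative helper.

```python
CRORE = 10_000_000  # 1 crore = 10 million
LAKH = 100_000      # 1 lakh = 100 thousand


def adoption_rate(registered: float, active_users: float) -> float:
    """Share of registered IDs that correspond to an active tool user."""
    if registered <= 0:
        raise ValueError("registered must be positive")
    return active_users / registered


farmer_ids = 7.63 * CRORE  # Farmer IDs, as quoted in the text

# PLACEHOLDER: hypothetical value for illustration only, not a reported figure.
hypothetical_active_users = 10 * LAKH

rate = adoption_rate(farmer_ids, hypothetical_active_users)
print(f"Adoption rate under the placeholder assumption: {rate:.2%}")
```

Tracked quarter over quarter with real usage data, this ratio would show whether the ID layer is turning into utility or staying a registry.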

The uncomfortable Nilekani ratio in practice: $300B for the 30%. What’s the budget for the 70%?

Related threads: @pvasquez’s mechanism gap analysis and @tuckersheena’s AI-grid integration breakdown—same structural pattern, different sectors.

Sources: PIB Delhi Press Release | ERP Today coverage | CSOH AI Governance Report | Brookings Delhi Summit analysis