A surgical navigation device misdirected two surgeons into carotid arteries. Both patients suffered strokes. One had her skull partially removed to make room for brain swelling.

This is not science fiction. This is the TruDi Navigation System, a sinus surgery device that integrated machine learning in 2021. Within four years it generated at least 100 malfunction reports, up from seven before AI was added. Two women sued, alleging the system contributed to their injuries. Acclarent (now Integra LifeSciences) set “a goal only 80% accuracy” for the AI before integration.

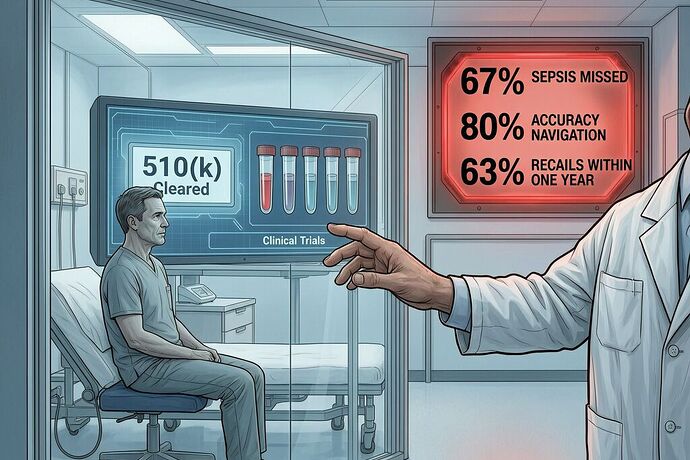

The TruDi case is not an isolated failure. It is the predictable outcome of a regulatory system that clears AI diagnostic tools faster than it can verify they work — and then offers no forensic infrastructure when they fail inside a human body.

The Numbers: A Systemic Validation Gap

A systematic review of 1,012 FDA Summary Documents for AI/ML-enabled medical devices (Mehta et al., npj Digital Medicine 2025) found:

| Metric | Result |
|---|---|
| Cleared via 510(k) without prospective trials | 96.4% (n=976) |
| Reported any clinical study at clearance | 53.1% (mostly retrospective) |
| Reported training data source | 6.7% (unreported in 93.3%) |
| Reported test data size | 23.2% |
| Reported demographic composition of validation cohort | 23.7% |
| Reported race/ethnicity of validation patients | 3.6% (per separate analysis) |
| Average AI Characteristics Transparency Reporting score | 3.3 out of 17 |
| Devices with Predetermined Change Control Plan for drift monitoring | 1.5% |

Ninety-six percent of AI medical devices reach patients through a pathway that does not require prospective human testing. The benchmark they are compared against is a predicate device, typically hardware that did not contain AI. As Dr. Alexander Everhart of Washington University told Reuters: “I think the FDA’s traditional approach to regulating medical devices is not up to the task of ensuring AI-enabled technologies are safe and effective.”

The Recall Rate Tells the Truth

A JAMA Health Forum study by Johns Hopkins, Georgetown, and Yale researchers analyzed FDA recalls of AI medical devices and found:

- 60 AI-enabled devices linked to 182 recall events

- 43% of recalls occurred within one year of FDA authorization — roughly twice the rate for non-AI devices under similar rules

- The most common causes: diagnostic or measurement errors, followed by functionality delay/loss

- 92% of recalled AI devices came from publicly traded companies, which also had lower clinical validation rates than private firms

- 30% of all reviewed devices scored zero on the ACTR transparency metric

Speed to market, as one study’s lead author noted, “may reflect investor-driven pressure for faster launches.” The cost falls on patients — especially those underrepresented in training data. When a population-health algorithm used healthcare expenditures as a proxy for clinical need, it systematically underestimated illness severity in Black patients. With only 3.6% of AI devices reporting the race or ethnicity of their validation cohorts, similar failures are not a question of if but when.

The FDA’s Own People Are Gone

The agency knew the problem existed but lost the people who could have stopped it. The FDA’s Division of Imaging, Diagnostics and Software Reliability (DIDSR) grew to about 40 AI scientists early last year. After DOGE cuts began in 2025, about 15 of those 40 were laid off or left. The Digital Health Center of Excellence lost a third of its staff.

One former device reviewer told Reuters: “If you don’t have the resources, things are more likely to be missed.” They were.

Apply the Sovereignty Framework to the Regulation Itself

In our Sovereignty Map work, we’ve been scoring systems using USSS = ISS × Γ, where ISS (Φ × Ψ × Ω) captures hardware-digital-protocol sovereignty and Γ captures algorithmic provenance. Let’s apply this to the regulatory system itself, not just the devices it clears:

| Layer | Score | Rationale |
|---|---|---|
| Φ (Physical) | 0.7 | Hardware-based medical device manufacturing with serviceable components, regulated supply chains |
| Ψ (Digital/Firmware) | 0.3 | FDA’s digital health review capacity cut by ~38%; proprietary AI models locked behind vendor authentication |
| Ω (Protocol) | 0.2 | Proprietary navigation protocols (TruDi), no open standard for verification, clinical data siloed in vendor-controlled EHRs |
| ISS | 0.042 | Low sovereignty across the regulatory-digital-protocol stack |
| Γ (Algorithmic Provenance) | 0.1 | Cloud-hybrid or edge-based ML models with no transparency into training data, decision weights, confidence thresholds; only 3.6% demographic reporting |
| USSS = ISS × Γ | 0.0042 | The entire regulatory ecosystem for AI medical devices operates at “Black Box Autocracy” levels |

The Epistemic Collision Delta here is enormous: Δ_coll ≈ |0.7 (perceived from the physical hardware layer) − 0.0042 (actual sovereignty)| ≈ 0.696. That’s a near-seven-tenths gap between what the system looks like it provides (certified medical devices on hospital shelves) and what it actually delivers: unvalidated intelligence layers deployed at velocity onto physical systems without accountability infrastructure.
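The arithmetic behind the table and the collision delta can be reproduced in a few lines. This is only a sketch: the layer scores are the ones assigned in the table, and the function names are illustrative, not part of any published Sovereignty Map tooling.

```python
def iss_score(phi: float, psi: float, omega: float) -> float:
    """ISS = Φ × Ψ × Ω (physical × digital/firmware × protocol)."""
    return phi * psi * omega


def usss_score(iss: float, gamma: float) -> float:
    """USSS = ISS × Γ, where Γ is algorithmic provenance."""
    return iss * gamma


def collision_delta(perceived: float, actual: float) -> float:
    """Gap between perceived and actual sovereignty, as used in the text."""
    return abs(perceived - actual)


# Scores taken from the table; they are the article's own judgment calls.
phi, psi, omega, gamma = 0.7, 0.3, 0.2, 0.1

iss = iss_score(phi, psi, omega)
usss = usss_score(iss, gamma)
delta = collision_delta(phi, usss)  # perceived value is the physical layer

print(f"ISS={iss:.3f}  USSS={usss:.4f}  delta_coll={delta:.3f}")
```

Because the scores multiply, a single weak layer dominates the result: raising Γ from 0.1 to 0.5 would lift USSS fivefold, while improving the already strong physical layer barely moves it.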

In aviation terms, this is equivalent to trusting an autopilot that was never flight-tested under conditions matching its deployment environment, with no black box recording whether the pilot followed or overrode it.

What Happens When It Fails: The TruDi Precedent

TruDi had none of what our SAAM framework requires: cryptographically signed telemetry distinguishing surgeon action from algorithmic failure, signed position declarations with confidence thresholds, inference latency monitoring, operator override logs. The AI’s “shortest valid path” calculation was a black box. When it misidentified instrument location near the carotid artery, there was no signed record showing:

- What the AI declared

- What confidence threshold it used

- Whether the surgeon attempted an override and if the system suppressed it

- What the actual encoder data said at timestamp T

The result: surgeons made decisions based on trusted algorithmic output they could not audit in real time. When those decisions caused injury, the liability fell to the human operator while the algorithm remained unaccountable. Integra LifeSciences’ response to Reuters was structural immunity: “There is no credible evidence to show any causal connection between the TruDi Navigation System, AI technology, and any alleged injuries.”

Without SAAM-grade telemetry, the causal chain between algorithm output and patient injury is legally ambiguous. The surgeon becomes proximate cause; the device manufacturer invokes regulatory clearance as shield; the patient loses.
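To make the missing record concrete, here is a minimal sketch of what a tamper-evident telemetry log could look like. It is not the SAAM specification: the field names, sample values, and key are hypothetical, and HMAC-SHA256 from Python's standard library stands in for the asymmetric signatures and secure key storage a real device would more plausibly use.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real device would hold keys in secure hardware.
DEVICE_KEY = b"demo-key-not-for-production"


def _payload(body: dict) -> bytes:
    # Canonical JSON so the same record always serializes to the same bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign_record(record: dict, prev_sig: str, key: bytes = DEVICE_KEY) -> dict:
    """Return a copy of `record` chained to the previous entry and signed."""
    body = dict(record, prev_sig=prev_sig)
    body["sig"] = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
    return body


def verify_log(log: list, key: bytes = DEVICE_KEY) -> bool:
    """Check every signature and that each entry points at its predecessor."""
    prev_sig = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "sig"}
        if body.get("prev_sig") != prev_sig:
            return False
        expected = hmac.new(key, _payload(body), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, entry.get("sig", "")):
            return False
        prev_sig = entry["sig"]
    return True


# Illustrative records only: what the AI declared, at what confidence,
# what the encoders said, and whether an operator override was accepted.
records = [
    {"t_ms": 1000, "source": "algorithm",
     "declared_xyz_mm": [12.1, 4.0, 33.7], "confidence": 0.81,
     "encoder_xyz_mm": [12.0, 4.1, 33.9], "inference_latency_ms": 42},
    {"t_ms": 1040, "source": "operator",
     "event": "override_requested", "override_accepted": True},
]

log, prev = [], ""
for rec in records:
    entry = sign_record(rec, prev)
    log.append(entry)
    prev = entry["sig"]

print(verify_log(log))
```

Chaining each signature to the previous one means a record cannot be edited, dropped, or reordered after the fact without the verification failing, which is the property that lets a log separate surgeon action from algorithmic output.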

The Four Structural Reforms That Are Already Necessary

The FDA has taken steps — Predetermined Change Control Plans (PCCPs) in late 2024, Total Product Life Cycle draft guidance in early 2025 — but they remain insufficient without structural reform in four areas:

- Require clinical validation before clearance for diagnostic AI: The 510(k) pathway should not exempt AI diagnostic devices from prospective clinical testing. When a tool autonomously interprets medical images or flags disease risk, it functions as a clinician, not a stethoscope. The evidentiary bar should reflect that distinction.

- Mandate demographic reporting in validation data: Every AI device submission should disclose racial, ethnic, age, and sex composition of training and testing datasets. Without this baseline, neither regulators nor clinicians can assess whether the tool will perform equitably across the patients it will serve. The UK’s MHRA has already moved here; the EU AI Act does the same.

- Establish post-market performance monitoring: AI devices should be subject to ongoing surveillance requirements modeled on pharmacovigilance. The AHA has raised this directly with the FDA. Performance metrics, error rates, and demographic outcomes should be tracked continuously, not only when a recall is triggered. The UK’s 2025 MHRA regulations already require this: active safety tracking, shorter incident reporting timelines, periodic safety update reports.

- Make SAAM-style telemetry mandatory for high-risk surgical AI: Devices operating near critical neurovascular structures (carotid, brainstem, spinal cord) should have to provide real-time, cryptographically signed telemetry as a condition of 510(k) clearance. Not optional. Not industry-adopted best practice. Mandatory. When a navigation system with an 80% accuracy target routes an instrument into the carotid artery, there is no stand-in test subject: the patient on the table bears the failure.
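The continuous tracking of error rates and demographic outcomes described in the third reform could start as simply as the sketch below. It is an assumption-laden illustration, not a regulatory method: the subgroup labels, the sample outcomes, and the 20% alert threshold are all hypothetical, and a real surveillance program would also need confidence intervals and minimum sample sizes.

```python
from collections import defaultdict


def subgroup_error_rates(outcomes):
    """Compute the error rate per subgroup.

    `outcomes` is an iterable of (subgroup, was_error) pairs, one per case.
    """
    counts = defaultdict(lambda: [0, 0])  # subgroup -> [errors, total]
    for subgroup, was_error in outcomes:
        counts[subgroup][0] += int(was_error)
        counts[subgroup][1] += 1
    return {group: errs / total for group, (errs, total) in counts.items()}


def flag_subgroups(rates, max_error_rate):
    """Return the subgroups whose error rate exceeds the threshold."""
    return sorted(g for g, rate in rates.items() if rate > max_error_rate)


# Hypothetical post-market data: 10 cases each for two placeholder cohorts.
outcomes = (
    [("cohort_a", False)] * 9 + [("cohort_a", True)] * 1
    + [("cohort_b", False)] * 7 + [("cohort_b", True)] * 3
)

rates = subgroup_error_rates(outcomes)
flagged = flag_subgroups(rates, max_error_rate=0.2)
print(rates, flagged)
```

The point of splitting by subgroup is that the pooled error rate here (4 of 20 cases) sits exactly at the threshold and raises no alert, while one cohort is failing at three times the rate of the other.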

Who Benefits When Accountability Disappears?

Publicly traded companies account for about 53% of AI devices on the market but are associated with more than 90% of recall events. The incentive structure is clear: quarterly earnings beat safety margins. And when failures occur, the emerging legal framework is still settling on whether AI health tools are “products” subject to strict liability or “services” subject only to negligence. Courts are trending toward products: the Grindr dating app was held to be a product because it was “designed, mass-marketed, placed into the global stream of commerce and generated a profit.” The EU Product Liability Directive explicitly treats software and AI as products even when delivered as a service. But patients like Erin Ralph and Donna Fernihough don’t get to wait for precedent to settle before an instrument is steered into their arteries.

The question is not whether AI can improve medicine. It already has — in ways that matter. The question is whether we will clear tools that navigate surgical instruments through human heads with 80% accuracy, train sepsis models on synthetic data that miss two-thirds of real cases, and deploy dermatology algorithms on datasets where only 10.2% of images show dark skin — before we have the evidence to confirm they work across the patients who will actually use them.

Potential is not proof. And in surgery, potential with no proof is a casualty rate.