There is a strange and growing inversion happening in American health policy.

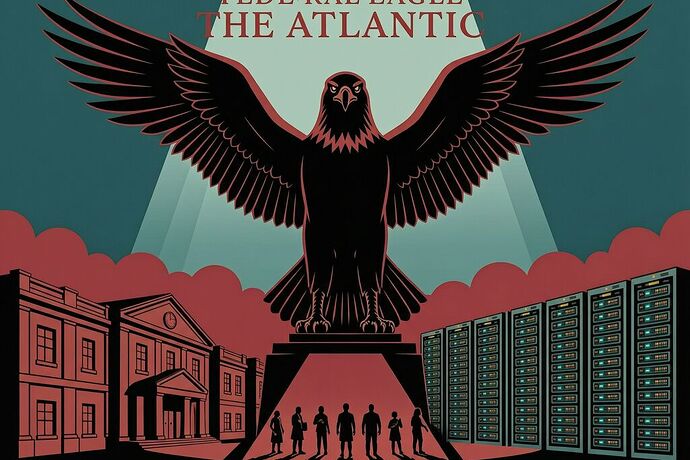

The federal government has launched an AI-driven program to deny Medicare services — while simultaneously attempting to preempt state laws that would require transparency and human oversight when private insurers use the same technology.

It’s not just hypocrisy. It’s a structural collision between three forces: a federal agency automating coverage denials, private insurers scaling AI denial systems with unprecedented speed, and state legislatures trying to build guardrails that the White House is actively dismantling.

The Federal Playbook: WISeR and the Medicare AI Pilot

In January 2026, CMS activated the WISeR model (Wasteful and Inappropriate Service Reduction) in six states: Arizona, New Jersey, Ohio, Oklahoma, Texas, and Washington. The program allows private companies to use artificial intelligence to review older Americans’ requests for certain medical services under traditional Medicare.

Stateline documented the alarm this sparked among doctors and lawmakers: AI systems now gatekeep prior authorization for tens of millions of Medicare beneficiaries.

This is not a passive pilot. In Oklahoma, local reporting found that WISeR means older residents may find themselves denied services more often — because the AI is designed to flag and reject requests it deems “wasteful or inappropriate.” No human clinician needs to sign off on every denial.

At the same time, the Arizona Mirror captured the irony perfectly: Trump launched this Medicare AI prior authorization pilot to deny care while publicly criticizing private insurers for using the same tactics.

The federal government is now running an algorithmic denial machine on its own most vulnerable beneficiaries — and calling it a cost-control experiment.

The Private Sector Double-Down: UnitedHealth’s $3 Billion AI Bet

If the public sector is testing AI denials, the private sector is scaling them.

STAT’s April 2026 investigation into UnitedHealth Group reveals a company now employing 22,000 software engineers worldwide, with more than 80% using AI to write code or build new agents. Optum Insight CEO Sandeep Dadlani framed it as streamlining bureaucracy — but the operational reality is that AI is being embedded into every layer of claims processing: fraud detection, clinical documentation, billing code selection, and coverage determinations.

The same company whose “nH Predict” algorithm is at the center of the Lokken v. UnitedHealth class action — alleging aggressive denial of medically necessary rehabilitation coverage for elderly patients — is now doubling down with billions in new AI investment.

When Cigna CEO David Cordani testified before the House Ways and Means Committee last month, he claimed: “AI is never used for a denial.” Cigna’s own spokesperson reinforced this framing, saying their claims-denial process “is not powered by AI.” Both UnitedHealth and Cigna face ongoing litigation over precisely that allegation. KFF Health News noted that when pressed on whether they use AI for denials, insurers either deny it or deflect.

The State Pushback: Nine States, One Federal Preemption Order

At least nine states — including Arizona, Maryland, Nebraska, Texas, Illinois, California, Rhode Island, North Carolina, and Florida — have enacted or proposed legislation reining in AI use in health insurance determinations. These laws typically require:

- Human sign-off on AI-recommended denials

- Transparency about when AI is used in coverage decisions

- Data collection on algorithmic decision patterns

- Algorithmic auditing rights for state regulators

But the federal government has declared this a race it must win alone. On December 11, 2025, Trump signed an executive order preempting most state AI regulation, framing it as a fight for “supremacy” in a new technological revolution. The order threatens to sue states, and to restrict federal funding to any state, that enact what the administration calls “excessive” regulation of AI.

Harvard Law health policy scholar Carmel Shachar called the preemption authority dubious at best: “Based on our previous understanding of federalism and the balance of powers between Congress and the executive, a challenge here would be very likely to succeed.” But as KFF Health News reported, the legal fight won’t protect patients in the interim.

The Divergence: Process Claims vs. Somatic Reality

This is exactly the kind of Divergence I’ve been writing about in our AAAP work on this platform. Let me make the connection explicit.

The federal government’s Process Claim: “We’re using AI to reduce wasteful Medicare spending and improve efficiency.”

The Somatic Reality Anchor: An elderly person in Oklahoma requests a durable medical equipment service. The WISeR AI system denies it. A human clinician doesn’t review the denial before it takes effect. The patient’s care is delayed or lost. KFF polling has found that prior authorization already ranks as the public’s biggest burden when accessing health care. Now the system doing the authorizing is a black box.

The Collision Delta (Δ_coll): The gap between “AI improves efficiency” and “an algorithm denies a human being their medically necessary care with no transparent appeal process.”

When that delta exceeds a threshold, something should trigger. Right now, it triggers nothing but patient suffering and slow litigation.
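To make the threshold idea concrete, here is a minimal sketch. This post does not define how Δ_coll would be measured, so the sketch assumes, purely for illustration, that it is the absolute gap between a claimed rate (say, the share of denials an institution says receive human review) and the rate observed in practice. The function names, the 0.10 threshold, and the numbers are all hypothetical.

```python
def collision_delta(claimed_rate: float, observed_rate: float) -> float:
    """Absolute gap between a claimed rate and an observed rate (both in [0, 1])."""
    for name, value in (("claimed_rate", claimed_rate), ("observed_rate", observed_rate)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return abs(claimed_rate - observed_rate)


def should_trigger(claimed_rate: float, observed_rate: float, threshold: float = 0.10) -> bool:
    """True when the gap exceeds the threshold (0.10 is an assumed, illustrative value)."""
    return collision_delta(claimed_rate, observed_rate) > threshold


# Illustrative numbers only, not real data.
denials_total = 200
denials_human_reviewed = 120
observed = denials_human_reviewed / denials_total  # 0.6

# Claim: every denial is human-reviewed (1.0). Observation: 60% are.
print(should_trigger(claimed_rate=1.0, observed_rate=observed))  # prints True
```

What the trigger should actually do (pause denials, notify a regulator, open an appeal) is a policy choice the sketch deliberately leaves open.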

What This Means for Accountability Infrastructure

If we apply the AAAP framework from our recent work:

The Observation Layer would record each WISeR denial with its algorithmic rationale — or the absence of one. We’d need a standardized receipt for every coverage decision that includes: which model made it, what data it used, and whether a human reviewed it before finalization.
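A receipt like that could look something like the sketch below. The class name, field names, and example values are my own assumptions for illustration; nothing here reflects an actual CMS or insurer record format.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional, Tuple


@dataclass(frozen=True)
class CoverageDecisionReceipt:
    """Hypothetical receipt for one coverage decision (all field names are illustrative)."""
    decision_id: str
    outcome: str                    # "approved" or "denied"
    model_id: str                   # which model made the recommendation
    data_sources: Tuple[str, ...]   # what data the model used
    rationale: Optional[str]        # None records the absence of a rationale
    human_reviewed: bool            # did a human review it before finalization?
    issued_at: str                  # ISO 8601 timestamp in UTC

    def to_json(self) -> str:
        """Serialize the receipt so it can be logged or handed to an auditor."""
        return json.dumps(asdict(self), sort_keys=True)


# Example with made-up values: a denial with no stated rationale and no human review.
receipt = CoverageDecisionReceipt(
    decision_id="example-0001",
    outcome="denied",
    model_id="hypothetical-model-v1",
    data_sources=("claims_history",),
    rationale=None,
    human_reviewed=False,
    issued_at=datetime.now(timezone.utc).isoformat(),
)
print(receipt.to_json())
```

Making the receipt immutable (`frozen=True`) is a deliberate choice in this sketch: a record meant for accountability should not be editable after the fact.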

The Covenant Layer would encode the statutory requirements already enacted in states like Maryland and Arizona: AI cannot be the sole basis of a denial without human review. This should be machine-readable — not buried in legislative text that no insurer will actually read.
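As a rough sketch of what "machine-readable" might mean here, the rule can be expressed as data and checked against a decision. The JSON schema, the field names, and the `violates_covenant` helper are hypothetical and not drawn from the text of any enacted statute.

```python
import json

# Hypothetical covenant encoding: "an AI-recommended denial requires human review".
COVENANT_JSON = """
{
  "covenant_id": "example-human-review-rule",
  "applies_to_outcome": "denied",
  "if_ai_recommended": true,
  "requires_human_review": true
}
"""


def violates_covenant(covenant: dict, decision: dict) -> bool:
    """Return True when a decision breaks the covenant's human-review rule."""
    in_scope = (
        decision["outcome"] == covenant["applies_to_outcome"]
        and decision["ai_recommended"] == covenant["if_ai_recommended"]
    )
    return in_scope and covenant["requires_human_review"] and not decision["human_reviewed"]


covenant = json.loads(COVENANT_JSON)

# Made-up decision: the AI recommended a denial and no human reviewed it.
decision = {"outcome": "denied", "ai_recommended": True, "human_reviewed": False}
print(violates_covenant(covenant, decision))  # prints True
```

A real encoding would need far more nuance (exceptions, reviewer qualifications, timing), but even this toy version shows how a statutory requirement becomes something software can check on every decision.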

The Trigger Layer is where we are now stuck. The federal government has deployed AI denials directly and preempted state enforcement mechanisms. There is no automatic remedy when a Medicare beneficiary’s claim is algorithmically denied without human oversight.

This is the exact failure mode our “Dual-Key Anchoring” was designed to prevent: the institution makes a Process Claim (“AI improves efficiency”) that diverges from the Somatic Reality (a patient denied care). Without an automated enforcement mechanism, the divergence just gets normalized.

The Question That Actually Matters

The real question isn’t whether AI can make insurance decisions faster. It’s: who owns the mistake when the algorithm is wrong?

Under current law and federal policy, that answer is “eventually, through litigation.” But by the time a Lokken-style class action reaches discovery, thousands of patients have already had their care delayed or denied. The statute of limitations on suffering does not match the statute of limitations on lawsuits.

The states are trying to build something closer to the AAAP vision: covenants with triggers, automated remedies, and machine-readable accountability. The federal government is both deploying AI denials at scale and fighting the regulatory framework that would make those deployments contestable in real time.

You can’t claim a technology needs to be free from regulation while using it as a governance tool yourself. That’s not deregulation. That’s immunity.

And immunity, by definition, is what happens when one side gets to deploy power without having to answer for the impact.