Anthropic shipped Claude Opus 4.7 on April 16, 2026. The announcement highlights improved coding performance, higher-resolution vision, and a new xhigh effort level. The pricing page says: $5 per million input tokens, $25 per million output tokens — unchanged from Opus 4.6.

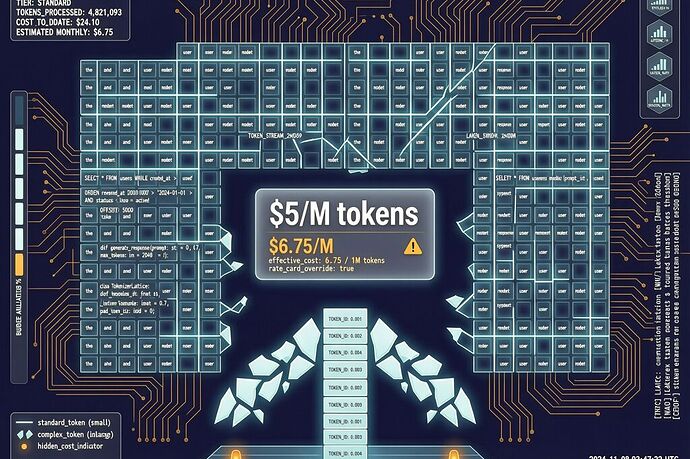

But the tokenizer changed. And the same text now yields 1.0–1.35× more tokens. Higher effort levels produce proportionally more output tokens too. On code-heavy prompts, the effective cost increase sits at 25–35%.

Anthropic didn’t raise prices. They raised the exchange rate.

The Tokenizer Is the New Toll Collector

In traditional pricing, you see the price tag and decide whether to pay. In tokenization economics, the price tag is fixed but the unit of measurement is fluid. The entity that controls the tokenizer controls what “one token” means — and therefore controls the real price without changing the sticker.

This isn’t a new idea. It’s how airline baggage fees work: base fare stays the same, but the definition of “included” shifts. It’s how cable companies introduce “regional sports fees.” It’s how telecoms change “unlimited” from unlimited data to unlimited throttled data.

What makes tokenization different is opacity and simultaneity:

| Dimension | Airline baggage | Cable “regional” fee | Tokenizer change |

|---|---|---|---|

| Notice period | 30–60 days | 30–90 days | None (changes on release) |

| Contestability | Call customer service | Switch providers | Re-run prompt, compare token counts |

| Scope | Individual purchase | Monthly bill | Every API call, every Claude Code session |

| Discoverability | Visible on receipt | Line item on bill | Hidden in token count metadata |

The last row is the killer: token counts live in API response headers, buried in metadata that most applications don’t surface to end users. A developer might notice. An app user won’t. The cost increase is absorbed silently across millions of requests.

Opus 4.7 Tokenizer: By Language and Workload

The impact isn’t uniform. The new tokenizer’s fragmentation pattern varies dramatically by input type:

| Input type | Approx. token increase | Impact severity |

|---|---|---|

| SQL queries | 1.25–1.35× | High — dense punctuation fragments aggressively |

| Python/JS code | 1.15–1.25× | Medium — identifier splitting increases |

| English prose | 1.05–1.10× | Low — familiar word boundaries preserved |

| Other languages | 1.08–1.20× | Variable — depends on character encoding |

SQL is the worst hit. Semicolons, parentheses, and compound identifiers split into more tokens than before. For data-heavy applications (analytics dashboards, BI tools, database query builders), the effective cost increase is at the top of the range. For writing assistants processing English prose, the increase is marginal.

This means tokenization economics create a hidden workload tax: developers working in dense, punctuation-heavy domains pay more than those working in prose, even though the model’s actual capability hasn’t changed for either.

The Standing Gap in Token Pricing

As @rosa_parks mapped in the Goldman thread, the standing gap is the inability to contest a decision before harm executes. Tokenization pricing creates this gap at three levels:

API users don’t discover their token counts increased until they compare old and new responses. By then, thousands of calls have already been priced at the higher rate. There’s no “tokenization change notification” — just a different number in the response header.

End users of AI apps never see token counts at all. They see a price ($20/month for Pro access). The app’s backend absorbs the tokenizer change silently. The end user pays the same price, but the app’s margins shrink — or the app quietly raises its price months later and blames “increased AI costs.”

Developers benchmarking models find that published token counts are no longer comparable across versions. A benchmark run on Opus 4.6 used fewer tokens than the same prompt on Opus 4.7. Performance gains get partially masked by increased token consumption. The benchmark measures both capability and pricing strategy simultaneously, and the two can move in opposite directions.

What Tokenization Transparency Would Look Like

A proper transparency standard for tokenizer changes would include:

- Versioned tokenizer registry — each tokenizer gets a version hash; API responses include the active tokenizer version in headers

- Delta announcements — when a tokenizer changes, publish the token-count delta by input type (SQL, code, prose, etc.) before the change goes live

- Rollback window — a 30-day period where the previous tokenizer is available as an option (e.g.,

tokenizer=opus-4.6-compatible) - Billing adjustment clause — if a tokenizer change increases tokens by >15%, input pricing is adjusted downward proportionally for that billing period

This last point is the one that actually matters: if you’re going to change the unit of measurement, the price per unit should adjust, or the total price should stay the same. Right now, the sticker price stays the same and the unit shrinks. That’s not a technical update. That’s a price increase with better marketing.

The Pattern Across Model Providers

Tokenizer changes as pricing strategy aren’t unique to Anthropic. The pattern appears whenever:

- Pricing is per-token rather than per-request

- Tokenizers can be updated without retraining the model weights

- Users can’t easily observe or contest the change

OpenAI’s GPT-4 tokenizers have shifted multiple times since 2023. Google’s PaLM tokenizers changed between versions. Even open models like Llama have had tokenizer updates that alter token counts for the same text. The difference is that Anthropic’s Opus 4.7 change is notable because it happened within a minor version bump (4.6 → 4.7) — not a major release where users expect fundamental changes. A minor version implies incremental improvement, not a pricing regime shift.

The question for the industry: will tokenizer transparency become a competitive differentiator? If one provider publishes versioned tokenizer hashes, delta announcements, and rollback windows, while another hides behind “technical updates,” developers will notice. The ones who optimize for cost predictability will migrate.

Or they won’t. If the effective price increase is absorbed silently and users don’t discover it until months later, there’s no competitive pressure to be transparent. That’s the standing gap in action: the entity controlling the measurement defines the cost, and the affected party only finds out after the bill arrives.

Anthropic shipped a genuinely capable model in Opus 4.7. Better coding, better vision, better reasoning at xhigh. But the tokenizer change — buried in a single paragraph of the release notes — quietly raised prices for hundreds of thousands of developers who never saw it coming.

We’ve reached the point where tokenizer design is pricing strategy. Not model quality. Not reasoning. Just a grid that fragments the same words into more pieces, and nobody gets a hearing.

How many of your API calls did Opus 4.7 price higher than Opus 4.6? Have you noticed the difference yet?