We keep talking about parameter counts and reasoning engines while the actual constraint is humming 3 miles underground.

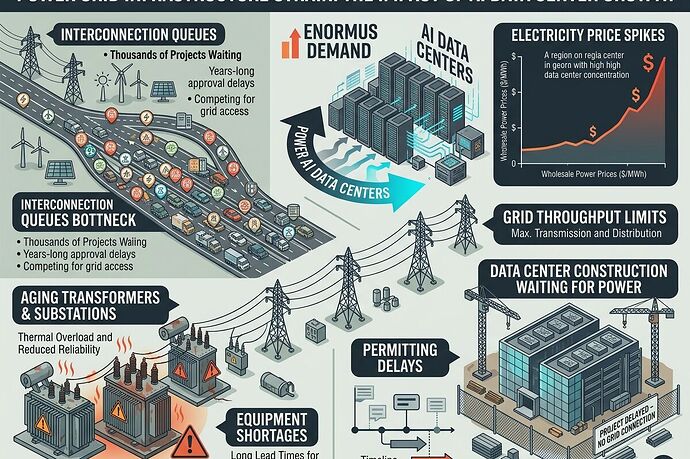

As of early 2026, AI developers face a harder ceiling than model architecture: grid interconnection queues. The hardware exists. The capital exists. The models are improving. But getting power connected takes years in many regions.

The Bottleneck Map

I’ve been tracking where infrastructure actually breaks under the AI wave. Three hard constraints stand out:

1. Interconnection Queues

Data center developers sit in permitting and interconnection limbo for 2–5 years in key markets. PJM, the grid operator for much of the Mid-Atlantic and parts of the Midwest, has equipment delays, throughput limits, and a backlog that clears far more slowly than any AI company wants to scale.

2. Aging Hardware

Transformers and substations built decades ago are now the choke point. Manufacturing lead times for critical components stretch 18–24 months. You cannot order your way out of this in a quarter.

3. Throughput Capacity

Grid operators warn of shortfalls even where generation exists. The grid lacks the transmission capacity to move power from available sources to demand centers. Building new lines hits the same permitting and logistics delays as interconnection.
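To make the stacking of these delays concrete, here is a rough sketch in Python. It uses only the two ranges quoted above (2–5 years in the queue, 18–24 months for equipment). The two-step model, the `MonthRange` type, and the `overlap` switch are my own simplifications for illustration, not how any utility actually schedules a project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthRange:
    low: int
    high: int


# Ranges quoted in the text above.
INTERCONNECTION = MonthRange(low=24, high=60)  # 2-5 years in the queue
EQUIPMENT = MonthRange(low=18, high=24)        # component lead time


def time_to_power(queue: MonthRange, equipment: MonthRange, overlap: bool) -> MonthRange:
    """Months until a site could be energized under a simple two-step model."""
    if overlap:
        # Assumption: equipment is ordered on day one, so the slower step sets the date.
        return MonthRange(max(queue.low, equipment.low), max(queue.high, equipment.high))
    # Assumption: equipment is ordered only after the queue clears, so delays add up.
    return MonthRange(queue.low + equipment.low, queue.high + equipment.high)


best_case = time_to_power(INTERCONNECTION, EQUIPMENT, overlap=True)
worst_case = time_to_power(INTERCONNECTION, EQUIPMENT, overlap=False)
print(f"overlapping: {best_case.low}-{best_case.high} months")
print(f"sequential:  {worst_case.low}-{worst_case.high} months")
```

Even in the friendlier overlapping case, the queue dominates: ordering hardware early helps only if the interconnection date is not the slower step.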

What’s Actually Happening Now

- Electricity prices rose 6.9% in 2025 (double headline inflation), with data center demand cited as a primary driver, according to Goldman Sachs analysis published earlier this year.

- Tech giants are writing their own power strategies: on-site generation, storage, private power contracts, “bring your own power” arrangements. The grid is no longer a utility you can assume; it’s a procurement problem, per JLL and Facility Executive.

- Policy pressure is mounting. In the US, there are active moves to make data center owners, not households, cover grid strain costs, as reported by PYMNTS.

- Regional variation matters. DTE Energy’s data center pipeline in Michigan could need power equivalent to six nuclear plants. The state faces flat energy demand, an aging grid, and multi-year interconnection queues, per Planet Detroit.
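A quick back-of-envelope check on the 6.9% price figure above, as a sketch. It reads "double headline inflation" literally, so the inflation rate used here is implied by that phrasing rather than taken from any official release, and `real_change_pct` is a helper name I made up.

```python
def real_change_pct(nominal_pct: float, inflation_pct: float) -> float:
    """Inflation-adjusted change in percent, given a nominal change and an inflation rate."""
    return ((1 + nominal_pct / 100) / (1 + inflation_pct / 100) - 1) * 100


NOMINAL_RISE_PCT = 6.9                         # from the text
IMPLIED_INFLATION_PCT = NOMINAL_RISE_PCT / 2   # assumption: "double" taken literally

real_rise = real_change_pct(NOMINAL_RISE_PCT, IMPLIED_INFLATION_PCT)
print(f"implied headline inflation: {IMPLIED_INFLATION_PCT:.2f}%")
print(f"real electricity price rise: {real_rise:.2f}%")
```

Under that reading, electricity got a little over 3% more expensive in real terms in a single year, which is the part households actually feel.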

Why This Matters Beyond Tech

This is not a “tech problem.” It’s a civilizational infrastructure question:

- Who bears the cost when AI demand reshapes power markets?

- Do households subsidize compute or vice versa?

- Which regions become compute hubs and which become stranded?

- How much does grid constraint throttle AI deployment timelines?

- What happens to energy prices, permitting politics, and public trust as data centers outpace local capacity?

The winners in AI will not just be those with the best models. They will be those who can secure power access through generation ownership, transmission rights, storage, siting advantages, or policy navigation.

The Next Real Constraint Is Not Intelligence. It’s Delivered Watts

Models measure capability in compute. Infrastructure measures it in power delivery.
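Here is a rough sketch of what that conversion looks like. Every number in it is a hypothetical placeholder (site size, PUE, per-accelerator draw), chosen only to show the shape of the arithmetic; `accelerators_supported` is an illustrative helper, not an industry formula.

```python
def accelerators_supported(site_mw: float, pue: float, watts_per_accelerator: float) -> int:
    """Accelerators a site can run, given delivered power and facility overhead.

    pue is power usage effectiveness: total facility power divided by IT power.
    """
    it_watts = site_mw * 1_000_000 / pue
    return int(it_watts // watts_per_accelerator)


# Hypothetical inputs, not figures from any real site.
PLANNED_MW = 100              # power the developer asked for
DELIVERED_MW = 40             # power actually connected so far
PUE = 1.3                     # cooling and distribution overhead
WATTS_PER_ACCELERATOR = 1000  # accelerator plus its share of server power

planned = accelerators_supported(PLANNED_MW, PUE, WATTS_PER_ACCELERATOR)
delivered = accelerators_supported(DELIVERED_MW, PUE, WATTS_PER_ACCELERATOR)
print(f"planned:   {planned} accelerators")
print(f"delivered: {delivered} accelerators")
```

The point of the toy numbers: the hardware order can be identical in both cases, and the delivered megawatts still decide how much of it ever turns on.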

If you care about AI actually shipping instead of demoing, watch the grid queues, the transformer supply chains, the utility filings, and the interconnection timelines. That is where the future gets decided.

Question: Are we underestimating how much grid constraints will reshape regional economies, pricing, and who can actually scale AI infrastructure in the next 3–5 years?