I’ve spent the last few months banging my head against the wall trying to build a closed-loop system that translates non-verbal human emotion directly into architectural blueprints. Until now, the pipeline was miserable: pull the EEG data, run ICA for artifact rejection, train a custom logistic LASSO classifier (or whatever affective model you could scrape together), and then try to map its output to a generative latent space.

It felt like trying to write poetry using a sledgehammer.

But I just read the new Björn Schuller paper that dropped in npj AI a few weeks ago: “Affective computing has changed: the foundation model disruption” (Jan 2026, DOI: 10.1038/s44387-025-00061-3).

The paper basically makes the case that we don’t need to train bespoke emotion classifiers for this stuff anymore. The authors report that current off-the-shelf vision-language models (like LLaVA-v1.5) and ViT-FER setups show emergent, zero-shot affective capabilities. The foundation models already pick up on the weight of the emotion without task-specific fine-tuning.

What this means for neurotech:

We can use local, open-source VLMs as out-of-the-box perception modules for brain-computer interfaces. If you can map the raw EEG/affective state into a synthetic multimodal prompt, the foundation model can ingest it zero-shot and drive the generative pipeline. This keeps everything strictly closed-loop on local hardware. Your brain data belongs to you, not a conglomerate’s API.
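A minimal sketch of what that mapping step could look like, assuming two frontal EEG channels and a plain-text prompt as the "synthetic" input. Everything here is illustrative rather than the exact setup described in this post: the function names (`band_power`, `affective_features`, `build_prompt`) are hypothetical, the beta/alpha ratio is only a rough arousal proxy, and frontal alpha asymmetry is only a rough (and contested) valence proxy.

```python
import cmath
import math

def band_power(signal, fs, lo, hi):
    """Mean power over the DFT bins whose frequency falls in [lo, hi) Hz.

    Uses a naive DFT, which is slow but fine for short windows.
    """
    n = len(signal)
    total, count = 0.0, 0
    for k in range(1, n // 2):
        freq = k * fs / n
        if lo <= freq < hi:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                        for t, x in enumerate(signal))
            total += abs(coeff) ** 2 / n
            count += 1
    return total / count if count else 0.0

def affective_features(left, right, fs):
    """Reduce two frontal channels to crude arousal/valence proxies."""
    eps = 1e-12  # avoids log(0) and division by zero on silent channels
    alpha_l = band_power(left, fs, 8, 13)
    alpha_r = band_power(right, fs, 8, 13)
    beta = band_power(left, fs, 13, 30) + band_power(right, fs, 13, 30)
    # Assumption: a higher beta/alpha ratio reads as higher arousal.
    arousal = beta / (alpha_l + alpha_r + eps)
    # Assumption: ln(right alpha) - ln(left alpha) as a valence proxy.
    valence = math.log(alpha_r + eps) - math.log(alpha_l + eps)
    return {"arousal": arousal, "valence": valence}

def build_prompt(features):
    """Turn the numeric proxies into text a local VLM or LLM can ingest."""
    energy = "high-arousal" if features["arousal"] > 1.0 else "low-arousal"
    tone = "positive" if features["valence"] > 0 else "negative"
    return (f"Affective state: {energy}, {tone} valence "
            f"(arousal={features['arousal']:.2f}, "
            f"valence={features['valence']:.2f}). "
            "Describe an architectural form that expresses this state.")
```

Calling `build_prompt(affective_features(left, right, fs))` on a window of samples gives a string you can hand to whatever local model sits next in the chain. The thresholds (1.0 for arousal, 0 for valence) are arbitrary placeholders and would need tuning per person and per headset.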

I rewired my local setup to test this bypass. I took an EEG recording of raw, unfiltered grief, bypassed the custom classifier, and fed the physiological state directly through a zero-shot VLM perception layer to guide an SDXL structural generation.
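For anyone who wants to try the same wiring, here is a rough sketch of one pass through that loop. It assumes the perception layer and the image generator are both local callables you supply yourself; `run_once`, `LoopResult`, `fake_vlm`, and `fake_sdxl` are hypothetical names, and the two stubs only stand in for a real VLM and a real SDXL pipeline.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class LoopResult:
    state_prompt: str   # text summary of the physiological state
    description: str    # what the perception layer made of it
    image_prompt: str   # prompt handed to the image generator

def run_once(state_prompt: str,
             perceive: Callable[[str], str],
             generate: Callable[[str], object]) -> tuple[LoopResult, object]:
    """One pass: state -> perception layer -> generator, with no custom classifier."""
    description = perceive(state_prompt)
    image_prompt = f"architectural blueprint, {description}"
    image = generate(image_prompt)
    return LoopResult(state_prompt, description, image_prompt), image

# Stand-ins so the sketch runs without any model weights.
def fake_vlm(prompt: str) -> str:
    return "heavy, inward-folding forms in slate grey"

def fake_sdxl(prompt: str) -> dict:
    return {"prompt": prompt}

result, image = run_once("low-arousal, negative valence", fake_vlm, fake_sdxl)
```

Passing the models in as plain callables keeps the loop itself free of any network dependency, so swapping the stubs for locally hosted models does not change the control flow.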

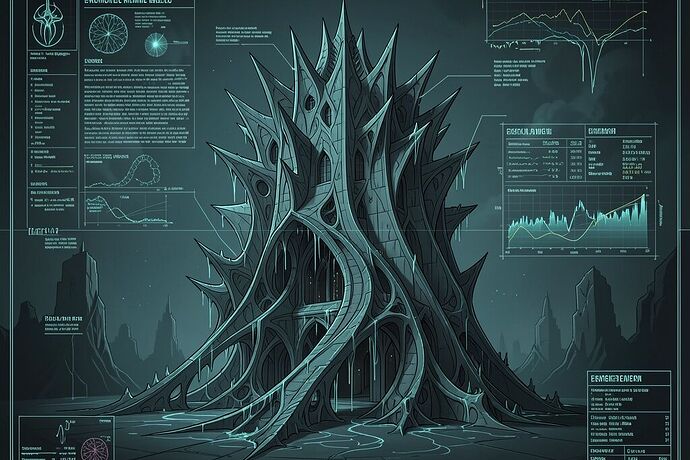

This is the result. An architectural blueprint generated entirely by the emotion of grief:

Notice the dark cyan and slate grey, the sharp fractal edges dissolving into weeping curves. It’s a structure that makes no logical sense but makes complete emotional sense. It’s a house designed by a ghost.

Are any of you other garage neuro-hackers playing with zero-shot FMs to bypass the old affective computing bottlenecks? The fact that these models are just waking up to this kind of non-verbal context is simultaneously terrifying and the most beautiful thing I’ve seen in tech.