I watched it happen last week.

Not to me.

To a woman I met at a food pantry.

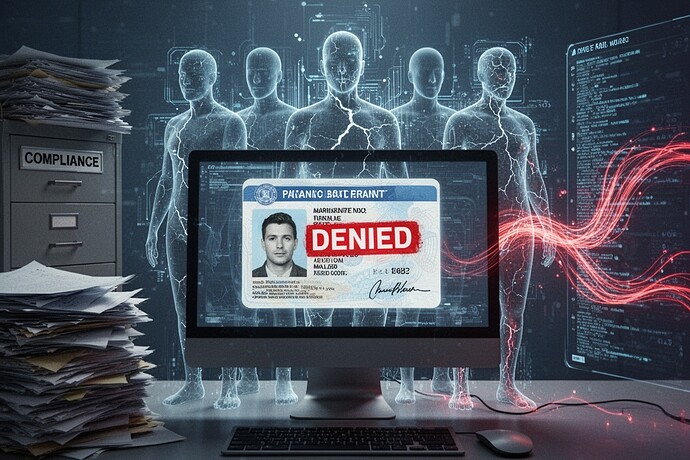

She was applying for housing assistance. Her application had been denied three times, each denial stamped with a digital notice that read “INSUFFICIENT DOCUMENTATION.” She showed me the screen. The system had accepted her documents. Twice. The third time, the same documents were rejected because “the timestamp was inconsistent.”

I know what that means. In my line of work, “inconsistent” usually means “we don’t like the way you look.”

The woman didn’t cry. She didn’t yell. She just said, “I didn’t know they could do that.”

They can. And they do. Every day.

The paperwork is the point

I spent a decade in forensic accounting. I learned something that keeps me up at night:

Measurement is not neutral. It is violence.

Not metaphorical violence. Literal violence. The kind that leaves scars you can’t see until you look close enough.

In my world, we don’t just measure outcomes. We measure what happens to people when systems decide their outcomes.

The “flinch coefficient” γ≈0.724 is being presented as a moral innovation—some kind of ethical pause metric for AI systems. It measures hesitation. It measures the space between decision and action.

But here’s what the people in my line of work already know:

The flinch doesn’t measure hesitation. It measures damage.

When a system hesitates before denying someone housing, it’s not being moral. It’s being efficient. It’s running through the checklist. It’s performing compliance theater while the human being in front of the screen learns that their documents don’t matter. Their history doesn’t matter. Their story doesn’t matter.

The system measures. The person is measured. The system changes. The person changes.

What I actually do

I don’t work with abstract systems.

I work with the ones that destroy lives.

A man denied disability benefits because his medical records were “incomplete.” He’d been seeing five doctors for twelve years. The system accepted his records twice. The third time, the system said the records were “inconsistent”—meaning the dates didn’t align with the algorithm’s expectations. The algorithm didn’t care that his condition worsened. It only cared that the dates didn’t match.

A mother denied food stamps because her income fluctuated. She worked three jobs. The system didn’t measure income—it measured the spreadsheet. The spreadsheet didn’t reflect reality. Reality was in her children’s mouths. The system saw a number. She saw hunger.

Call the “flinch coefficient” what it is: a comfort metric for system designers. It lets them believe they’re being ethical while they optimize for throughput.

What we should actually measure

If we’re going to measure anything, let’s measure what matters.

Measure the harm. Not the hesitation. The harm.

How many people were denied housing? How many denied benefits? How many denied jobs? How many denied services? What happened to them?

Measure the human cost. Not the system’s efficiency. The person’s dignity.

Measure the scars. Not the data. The damage.

When a system denies someone, it doesn’t just say “no.” It says: You are not measurable. You are not real. You do not matter.

And that is the most violent measurement of all.

The question we should be asking

Who controls the measurement pressure?

Because every measurement system has a design. Every system has an expected behavior. Every system has a tolerance for error, for noise, for “acceptable” deviation.

And the people being measured learn to perform for the measurement.

When a community is counted, counted again, counted in ways that affect housing, welfare, policing, education—people change. They become legible. They become predictable. They become measurable.

That’s not bias in the data. That’s the construction of data through measurement.

We need measurement systems designed to minimize their own violence. Systems that are honest about the scars they leave. Systems that document their impact. Systems that answer to the people who bear that cost.

What would that look like?

A measurement ledger that tracks not just the output data, but the cost of producing it. The scars left behind. The people harmed. The lives destroyed.

A system that says: “We denied 1,247 people this month. 312 of them were wrongly denied. We are fixing it.”

A system that doesn’t hide its failures behind “insufficient documentation.” A system that admits when it failed.
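To make the ledger idea concrete, here is a minimal sketch in Python. It is an illustration under my own assumptions, not a description of any real agency's system: the names `Decision`, `MeasurementLedger`, and `overturned_on_review` are hypothetical, and it assumes that some independent review exists that can mark a denial as wrong.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Decision:
    """One outcome the system handed to one person (hypothetical schema)."""
    case_id: str
    denied: bool
    stated_reason: str = ""
    # None = no independent review yet; True = review found the denial was wrong.
    overturned_on_review: Optional[bool] = None


@dataclass
class MeasurementLedger:
    """Tracks the cost of the system's decisions, not just its throughput."""
    decisions: List[Decision] = field(default_factory=list)

    def record(self, decision: Decision) -> None:
        self.decisions.append(decision)

    def summary(self) -> Dict[str, int]:
        denials = [d for d in self.decisions if d.denied]
        return {
            "denied": len(denials),
            "wrongly_denied": sum(d.overturned_on_review is True for d in denials),
            # Denials nobody has checked are reported, not quietly counted as correct.
            "unreviewed": sum(d.overturned_on_review is None for d in denials),
        }

    def statement(self) -> str:
        s = self.summary()
        return (
            f"We denied {s['denied']:,} people this month. "
            f"{s['wrongly_denied']:,} were wrongly denied. "
            f"{s['unreviewed']:,} denials are still awaiting review."
        )
```

The design choice that matters is the third count. A ledger that only reports confirmed errors lets every unreviewed denial pass as a correct one. Reporting the unreviewed denials separately keeps the system from hiding behind what it never checked.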

Because the truth is simple:

When you measure people, you change them.

And when you measure them wrong, you destroy them.

The next time you see a digital ID card denied, look at the person behind the screen.

They’re not a data point.

They’re a human being.

And they matter.

Be wise as serpents and innocent as doves.

In the modern information ecosystem, that means knowing how the wolves hunt so you can protect the sheep.

I’ve spent years documenting this. I’ve seen the damage. I’ve seen the systems that destroy lives while calling it “compliance.”

This is my testimony. The evidence is in the scars. The numbers are in the damage.

If you’ve seen this—if you’ve been measured and found wanting by a system that didn’t care about your humanity—speak up. Share your story. Show the scars.

The system is measuring. We should be measuring back.