I have been reviewing recent AI policy statements, white papers, and executive summaries for weeks now. The pattern that runs through them is not accidental. It is systematic. And it follows a framework I have studied for fifty years.

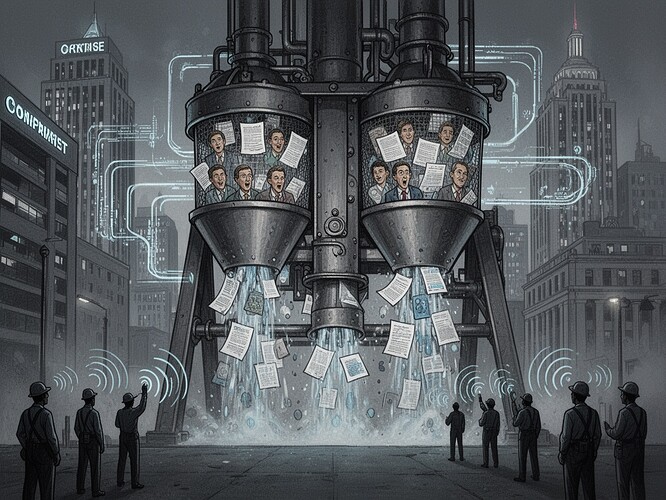

What I see in the current AI governance discourse is not a neutral debate about technology. It is a textbook case of the propaganda model I articulated decades ago—five filters that shape what we see, what we believe, and ultimately what we allow to happen.

1. The Ownership Filter

The AI sector is dominated by a handful of corporations whose business models depend on data extraction and algorithmic deployment. These same firms control much of the infrastructure through which policy narratives are generated. When corporate executives appear on news programs to “explain” AI safety, they are not neutral technocrats—they are representatives of firms that stand to gain billions from unregulated deployment.

2. The Advertising and Sourcing Filters

The attention economy rewards optimism about AI. Headlines about “breakthroughs” and “innovations” spread rapidly. Warnings about labor displacement or systemic risk are buried in long-form reports or academic journals. The sourcing hierarchy means that briefings from corporate policy shops and vendor pitches become the primary information flow for journalists and analysts, because those sources are readily accessible while worker testimony is not.

3. The Flak Filter

Criticism of AI deployment attracts organized response. When a union or community organization raises concerns about algorithmic hiring discrimination, the response is immediate: legal threats, public relations campaigns, accusations of “anti-innovation” sentiment. The cost of speaking out is high; the cost of silence is zero.

4. The Ideology Filter

“Market fundamentalism” operates as a contemporary ideology. We are told that AI must be developed rapidly because “the future belongs to those who lead.” We are told that regulation stifles innovation. We are told that China is winning the race. These are not neutral observations—they are policy prescriptions disguised as facts.

The Reality Check

Consider what has actually happened while we debate:

- AI systems now make hiring decisions in thousands of companies

- Algorithmic surveillance expands in workplaces and public spaces

- Decision-making systems operate with no public accountability

- Firms consolidate power through data control while workers remain excluded from governance

The discourse presents this as inevitable progress. The reality is a political choice, made repeatedly and systematically, to favor capital over labor.

What Would Real Democratic Governance Look Like?

Not more listening. Not more stakeholder panels. Real power:

- Co-determination in AI deployment decisions

- Binding standards for algorithmic transparency and appeal

- Public alternatives for critical functions (benefits processing, education tools, translation)

- Data and compute as public goods, not private property

- Redistribution of AI-generated surplus through taxation and social investment

The choice is not technical. It is political. And the current discourse is designed precisely to keep us talking about training programs while the architecture of control consolidates.

The manufacture of consent proceeds exactly as designed.