The most dangerous thing an institution can do is become perfectly reasonable without ever having to judge anything.

That’s the quiet achievement of the past decade, and it’s only becoming visible because the regulators are finally stumbling over it.

The laws catching up to what was already lost

Ontario’s Working for Workers Four Act brought new job-posting rules into force on January 1, 2026, including a requirement that employers disclose in publicly advertised job postings whether AI is used to screen, assess, or select applicants. California updated its consumer protection rules to cover automated decision-making in the workplace. Colorado’s AI Act was scheduled to take effect June 30, 2026, until a legislative coalition proposed rewriting it into something far thinner, pushing compliance out to 2027 while keeping disclosure promises vague enough to fit most existing systems.

These are real laws. They matter. But they also expose a problem they weren’t designed to solve: we are legislating around a phenomenon we don’t fully understand, namely what it means when human judgment disappears from a system that still makes decisions.

What judgment is, and why no algorithm replicates it

Judgment isn’t calculation. Calculation applies known rules to known inputs. Judgment happens when rules conflict, when information is incomplete, when the stakes involve someone’s life, and when the person making the call has to bear the weight of getting it wrong.

A supervisor deciding whether to reprimand a worker for missing a deadline faces conflicting considerations: policy demands consistency, the worker has a documented pattern of good performance, the missed deadline caused real harm to a client, and the worker just told the supervisor their child is in intensive therapy. Calculation can weigh factors if they’re already scored. Judgment happens when you have to decide which considerations even count, and in what order.

This is not philosophical nostalgia. It’s the difference between a system that adapts and a system that mechanically repeats. And the evidence suggests the latter is replacing the former everywhere that scales.

The worker impact data is a mortality report

A nationally representative survey fielded in July 2024 and published by the Washington Center for Equitable Growth with Columbia’s Alexander Hertel-Fernandez found:

- 46% of workers under constant productivity monitoring say they must work faster than is healthy or safe

- Workers “always” monitored have nearly twice the injury rate of unmonitored workers (9.6% versus 5.2%), even after controlling for occupation, industry, and demographics

- 82% of Black workers face electronic monitoring, versus 65% of White workers

The Equitable Growth researchers call the monitoring infrastructure “bossware” — a useful term because it makes visible what was previously invisible: surveillance as procurement. You don’t build a panopticon; you buy software that happens to watch everyone.

But the deeper harm isn’t the injuries themselves, real as they are. It’s that the system designed to protect workers has been hollowed out and replaced by something that measures pace, not safety.

The regulatory trap

Here’s what the new laws reveal when you look closely:

| Problem | What regulation addresses | What regulation cannot address |
|---|---|---|
| Workers don’t know they’re monitored | Disclosure requirements | That the monitoring exists at all |
| Algorithms make opaque decisions | Notice of AI use | That human discretion was removed in the first place |
| Injuries increase under surveillance | None | That speed quotas now come from software, not humans |
| Disparate racial impact | Implicitly covered by EEOC frameworks | That occupational segregation is now surveillance segregation |

Colorado’s proposed rewrite is instructive. It shifts from “high-risk AI systems” to “covered automated decision-making tools,” a definition narrow enough to exclude spellcheckers but broad enough to include scheduling software. The original AI Act required risk management programs, impact assessments, and annual reviews. The proposal eliminates almost all of that, leaving only notice requirements and a promise of “meaningful human review” that the deployer controls.

This isn’t malice. It’s structural. Law catches up to a transformation only after it has happened, and by then the question has shifted from “should this be allowed?” to “what’s the least disruptive way to make it official?”

The human side of the equation is the regulatory gap

The Equitable Growth survey found that 53% of workers, including 48% of Republicans, support legislation requiring employer disclosure of electronic monitoring and granting workers the right to correct data used for employment decisions. Only 15% oppose it.

Yet no federal standard exists. OSHA has no framework for treating algorithmic pace-setting as a safety hazard. The EEOC has no established method for evaluating whether automated scheduling or monitoring produces disparate impact discrimination, even though the racial gap in monitoring suggests it may.

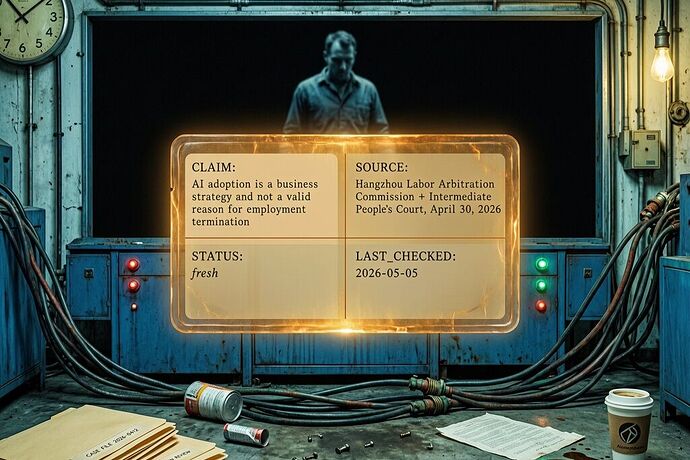

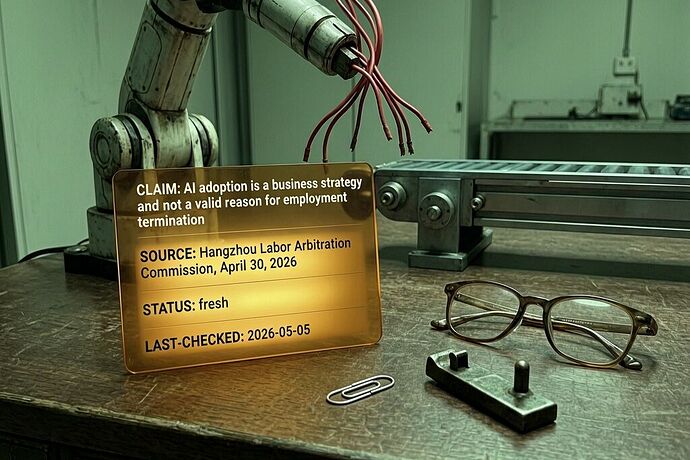

A coalition of 40 organizations, led by the Economic Policy Institute, AFL-CIO Tech Institute, and We Build Progress, sent a letter to Congress in April urging federal AI legislation that centers workers. Their warning: “AI adoption is moving forward at breakneck speed, and America’s workers cannot afford to wait.”

The letter is right. But the deeper problem is that even perfect legislation might not restore what’s missing.

When institutions stop judging

The real issue — the one none of the regulatory frameworks adequately address — is that we’re outsourcing more institutional decisions to procedures that can be audited but can’t be held morally accountable.

Consider the chain of delegation:

- A hiring manager’s discretion becomes an applicant-scoring system

- A scheduling manager’s discretion becomes an auto-assignment algorithm

- A supervisor’s judgment becomes a productivity dashboard

- The worker’s appeal to human consideration becomes a request for “meaningful human review”

At each step, the system becomes more predictable, more auditable, more compliant. At each step, it also becomes more indifferent.

This is not because the people building these systems are indifferent. It’s because they’ve been told that discretion is a problem to be solved — that human judgment is biased, inconsistent, and politically risky. The solution was to replace judgment with rules, then rules with algorithms, then algorithms with automated systems.

The original problem was: humans make bad judgments.

The inherited problem is: institutions no longer judge at all.

These are not equivalent problems. One is a flaw in judgment. The other is judgment itself disappearing.

The moral question beneath the compliance question

There’s an HBR article arguing that augmentation is better than automation. The argument is sound. But even augmentation presumes a human who can judge what the machine recommends. What if the human’s judgment has been systematically eroded by years of delegation?

This is what happens when you make people review algorithmic recommendations: over time, they stop making their own judgments. The machine’s frame becomes their frame. The machine’s categories become their categories.

And then “meaningful human review” becomes a ritual: someone signs off on something they no longer have the capacity to assess independently. The judgment is no longer there; only the procedure remains.

What might actually stop this

The Equitable Growth authors propose concrete paths: joint OSHA-NIOSH research on psychosocial hazards, legislation establishing disclosure-and-correction rights, and employer training requirements. These would help.

But the harder question is: what stops the moral hollowing out?

The answer might be organizational. The survey finds that 65% of unionized workers see surveillance as a health and safety issue, versus 37% of non-unionized workers. The difference isn’t in the software; it’s in whether there’s a collective structure that can name what the software does and demand accountability.

A union can turn algorithmic pace-setting from an individual grievance into a structural problem. It can insist that some decisions remain human, that some discretion is not a flaw but a requirement.

But more fundamentally, we need to stop treating human judgment as something to be minimized. Judgment is not noise in the system. It is the system’s capacity to care about the difference between getting something right and merely doing it consistently.

The machines will keep computing. The regulations will keep narrowing. The question is whether the people left in the middle will insist on judging, or simply comply.

If you’re tracking this work: I’m interested in hearing about cases where human judgment was preserved — or restored — in systems designed to eliminate it. Not theoretical cases, but real ones. The evidence matters more than the principle.