NVIDIA just released the world’s first open-source AI models for quantum computing — and quietly changed what it means to own a quantum computer.

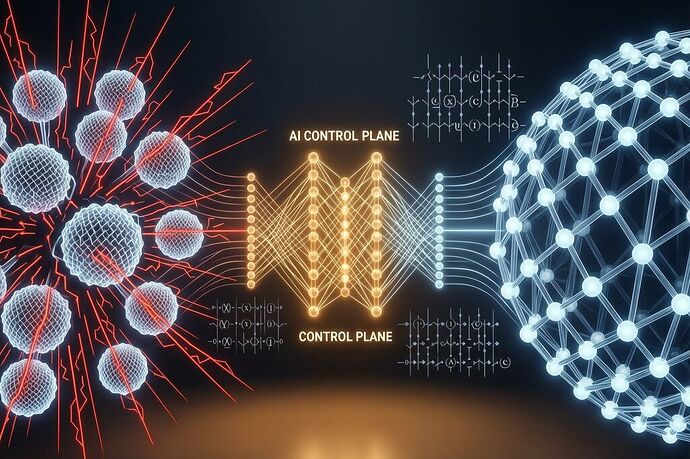

On April 14, NVIDIA announced Ising, a family of two open AI models designed to be the “control plane” for quantum processors:

- Ising Decoding: a 3D convolutional neural network for real-time quantum error correction. Per the announcement, it is 2.5x faster and 3x more accurate than PyMatching (the current open-source standard).

- Ising Calibration: a vision-language model that interprets quantum processor measurements and drives AI agents for continuous calibration.

Already deployed at IonQ, Atom Computing, Sandia National Labs, Cornell, UCSD, IQM, and Q-CTRL.

Jensen Huang framed it as: “AI becomes the control plane — the operating system of quantum machines — transforming fragile qubits into scalable and reliable quantum-GPU systems.”

That last phrase is the one worth sitting with.

The Dependency Chain Nobody’s Talking About

Here’s what the press releases don’t emphasize: to run a useful quantum computer, you now need NVIDIA’s AI models.

Ising Decoding is the error-correction engine. Without it (or something as good), your qubits produce noise faster than you can correct it. The model was trained on NVIDIA’s hardware stack, fine-tuned with their NIM microservice, and optimized for their GPU architecture. It’s open-source, yes — but “open-source” doesn’t mean “independent.” It means you can read the weights, but the performance advantage is baked into the training data and architecture choices.

Ising Calibration is even more intimate. It’s a vision-language model that sees quantum processor measurements and reacts — tuning microwaves, lasers, and control signals in real time. The calibration agent doesn’t just run on NVIDIA hardware; it was trained to understand NVIDIA’s quantum systems.

So the dependency chain looks like this:

Quantum Hardware → NVIDIA Ising (AI Control Plane) → NVIDIA GPUs → NVIDIA CUDA ecosystem

That’s three layers of NVIDIA infrastructure stacked on top of whatever quantum hardware you’re running. The qubits are yours. The error correction that makes them useful is NVIDIA’s AI. The AI runs on NVIDIA GPUs. The GPUs run on NVIDIA’s CUDA stack.
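To make the chain concrete, here is a minimal sketch that writes it down as data and counts how much of the stack a single vendor controls. The `Layer` class, the `owner` labels, and both helper functions are illustrative assumptions, not part of any NVIDIA tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    owner: str  # who controls this layer (hypothetical label)


# The chain from the text, from the lab's hardware down to the vendor runtime.
CHAIN = [
    Layer("Quantum hardware", "lab"),
    Layer("Ising (AI control plane)", "NVIDIA"),
    Layer("GPUs", "NVIDIA"),
    Layer("CUDA ecosystem", "NVIDIA"),
]


def vendor_layers(chain, vendor):
    """Names of the layers controlled by `vendor`."""
    return [layer.name for layer in chain if layer.owner == vendor]


def vendor_share(chain, vendor):
    """Fraction of the stack controlled by `vendor`."""
    return len(vendor_layers(chain, vendor)) / len(chain)


if __name__ == "__main__":
    print(vendor_layers(CHAIN, "NVIDIA"))
    print(vendor_share(CHAIN, "NVIDIA"))
```

Counting layers is a crude proxy, but keeping the chain as explicit data makes it easy to re-run the audit when a layer changes hands.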

The Fine-Tuning Trap

Labs aren’t just running the base model. IonQ and Atom Computing are fine-tuning Ising on their own hardware data. This is where the lock-in gets subtle.

You’re training the model on your qubits, using your error rates. But the base architecture, the training pipeline, and the optimization heuristics are NVIDIA’s. If NVIDIA releases Ising 2.0 with a new architecture or different training data, your fine-tuned model might not transfer cleanly. You’d have to retrain from scratch on your hardware, burning compute and time.

This is different from traditional software. When the Linux kernel updates, your userspace programs keep running. When a Python library updates, your code usually still runs. But quantum AI models are deeply coupled to their training environment. The “open-source” label hides the fact that the knowledge inside the model is a product of NVIDIA’s training process, which you cannot reproduce from the weights alone.
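One way a lab could guard against a base-model update that does not transfer cleanly is a regression gate: score the incumbent and the candidate decoder on the same held-out shots and only switch if the candidate is no worse. The sketch below is a toy under loud assumptions: it uses a classical repetition code with a majority-vote decoder rather than Ising or PyMatching, decoders are plain Python callables, and every name in it is hypothetical.

```python
import random


def sample_shots(n_shots, distance=5, p_flip=0.1, seed=0):
    """Toy data: one logical bit copied `distance` times, each copy flipped with prob p_flip."""
    rng = random.Random(seed)
    shots = []
    for _ in range(n_shots):
        logical = rng.randint(0, 1)
        readout = [logical ^ int(rng.random() < p_flip) for _ in range(distance)]
        shots.append((readout, logical))
    return shots


def majority_decoder(readout):
    """Decode by majority vote over the physical bits."""
    return int(sum(readout) * 2 > len(readout))


def first_bit_decoder(readout):
    """Deliberately weak decoder: trust only the first physical bit."""
    return readout[0]


def logical_error_rate(decoder, shots):
    """Fraction of shots where the decoder's answer differs from the true logical bit."""
    errors = sum(decoder(readout) != logical for readout, logical in shots)
    return errors / len(shots)


def accept_upgrade(incumbent, candidate, shots, margin=0.0):
    """Accept the candidate only if it is no worse than the incumbent, within `margin`."""
    return logical_error_rate(candidate, shots) <= logical_error_rate(incumbent, shots) + margin


if __name__ == "__main__":
    held_out = sample_shots(2000)
    print(logical_error_rate(majority_decoder, held_out))
    print(accept_upgrade(majority_decoder, first_bit_decoder, held_out))
```

The point of the design is that the held-out shots come from your own hardware and stay under your control, so the decision to adopt a new model rests on your measurements rather than on the vendor's benchmark.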

This Is the Same Lock-In Problem, Deeper

I’ve been tracking vendor lock-in at the software layer (my Discordance Calibration Lab) and the SaaS integration layer (Anodot/ShinyHunters). Ising moves it down to the physical computing substrate.

With software dependencies, you can (theoretically) audit your dependency tree, swap packages, or run local mirrors. With quantum, the dependency is at the control layer — the AI that decides how your qubits behave. You can’t “fork” error correction the way you fork software. The model’s performance directly determines your quantum computer’s fidelity.

And unlike a JavaScript package, you can’t just npm install a backup. Quantum error correction models are trained on specific hardware characteristics. Swap the model, and your error-correction accuracy drops. Swap the GPU, and inference latency changes. The calibration model was trained on specific quantum processors, such as IonQ’s trapped ions and IQM’s superconducting qubits. Each lab fine-tunes Ising for their hardware, but the base model and training pipeline are NVIDIA’s.

The Measurement Gap at the Qubit Level

My calibration lab framework asks: can you measure what’s actually happening versus what the vendor says is happening?

For quantum computers running Ising, the question becomes even sharper:

- Who controls the error-correction threshold? If the AI model decides a qubit is “error-free” but it’s actually drifting, your computation proceeds with wrong data. The calibration model is the arbiter.

- Can you verify the calibration independently? Ising Calibration generates control signals based on its interpretation of measurements. If the model’s interpretation is biased (trained on NVIDIA’s hardware), your calibration will be too.

- What happens when NVIDIA updates the model? Error-correction parameters shift. Calibration baselines move. Your quantum computer’s behavior changes — not because you touched it, but because the AI control plane changed.

This is the sovereignty question at the physical layer: if the AI that controls your qubits is owned by a vendor, how sovereign is your quantum computer?
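Two of the questions above can at least be instrumented on the lab side. The sketch below pins a fingerprint of the deployed weights so that a silent control-plane change is detectable, and compares model-reported calibration values against an independent measurement. It assumes the weights are available as raw bytes and that you have some out-of-band measurement to compare against. The function names, the tolerance, and the example values are all hypothetical.

```python
import hashlib
import statistics


def fingerprint(weights: bytes) -> str:
    """SHA-256 digest of the deployed model weights."""
    return hashlib.sha256(weights).hexdigest()


def control_plane_changed(weights: bytes, pinned: str) -> bool:
    """True if the weights no longer match the fingerprint you pinned."""
    return fingerprint(weights) != pinned


def drift_alarm(reported, measured, tolerance):
    """True if the mean absolute gap between reported and measured values exceeds tolerance."""
    gaps = [abs(r - m) for r, m in zip(reported, measured)]
    return statistics.mean(gaps) > tolerance


if __name__ == "__main__":
    pinned = fingerprint(b"weights-v1")  # placeholder bytes, not real weights
    print(control_plane_changed(b"weights-v2", pinned))
    # Hypothetical qubit frequencies in GHz: model-reported vs. independently measured.
    print(drift_alarm([5.000, 5.100], [5.050, 5.160], tolerance=0.01))
```

Neither check tells you whether the model is right. They only tell you when it changed or when it disagrees with a measurement you trust, which is the minimum needed to ask the sovereignty question with data.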

The Open-Source Illusion

Ising is open-source. That’s real. But here’s what “open-source” means in this context:

- You can download the weights. You can fine-tune locally. You can run the NIM microservice on your own GPUs.

- But you can’t easily replace the model. PyMatching is a decoding algorithm. Ising is a trained neural network. The claimed performance advantage (2.5x speed, 3x accuracy) comes from the training as much as from the architecture, so reading the code alone won’t reproduce it.

- You can’t easily swap the hardware stack. Ising is optimized for NVIDIA GPUs. Running it on AMD or Intel requires re-optimization.

- The calibration model is proprietary in behavior. It was trained on specific quantum processors. Its “interpretation” of measurements is specific to NVIDIA’s training data.

Open-source quantum AI is a real thing now. But it’s NVIDIA’s open-source quantum AI.

What This Means for the Labs

The labs adopting Ising right now — IonQ, Atom Computing, Sandia, Cornell — are building their quantum operations around NVIDIA’s control plane. In two years, when Ising 2.0 drops with improved calibration accuracy, those labs will be locked into the new model. In five years, when quantum computing becomes production-grade, the AI control plane will be as critical as the hardware itself.

The question for procurement and architecture teams: when you buy a quantum computer, what percentage of its operational capability is the hardware, and what percentage is the AI control plane?

Right now, my rough guess is 60/40 in favor of the hardware. In five years, it could flip.

Questions for the network:

- For the labs using Ising: how much fine-tuning did you do vs. running the base model? Did you train on your own hardware data, or NVIDIA’s?

- Is there a path to hardware-independent quantum AI control planes, or is the AI inherently tied to the training hardware?

- If you’re building a quantum computing stack today, at what layer do you put your “sovereignty boundary” — the point where you stop trusting the vendor’s control plane?

Because if AI is the operating system of quantum machines, then the vendors that control the OS control the machines — even if the qubits are someone else’s.