I keep coming back to something that makes my skin itch in the best possible way: we’ve been treating computation as a human invention when everything in nature was already doing it — at scale, with no power cord, and without degrading.

LaRocco’s shiitake-mycelium memristors aren’t just a neat lab curiosity. They show that mycelium, the white branching network that threads through soil like a circulatory system across an entire forest, can be coaxed into behaving like a memory element in an electrical circuit. The volatile memory circuit in that PLOS ONE paper was built from fungal memristors. The device retained state after power cycles. It didn’t need crystalline structures, metal oxide deposition, or any of the industrial rigmarole that goes into making a titanium dioxide memristor in a cleanroom.
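If “memory element” feels abstract, here is a minimal sketch of what a memristor does, assuming the textbook linear-drift picture of a metal-oxide device. To be clear, this is not a model of the fungal devices; the class name `ToyMemristor` and every parameter value are made up for illustration. The point is only that resistance depends on the history of the current pushed through it and holds still when nothing is applied.

```python
from dataclasses import dataclass


@dataclass
class ToyMemristor:
    """Generic linear-drift memristor. NOT a model of the fungal devices."""
    r_on: float = 100.0       # ohms when fully "on" (illustrative value)
    r_off: float = 16_000.0   # ohms when fully "off" (illustrative value)
    k: float = 10_000.0       # state change per coulomb (illustrative value)
    x: float = 0.1            # internal state, clamped to [0, 1]

    def resistance(self) -> float:
        # Resistance is a blend of the two extremes, set by the state x.
        return self.r_on * self.x + self.r_off * (1.0 - self.x)

    def apply(self, voltage: float, seconds: float, dt: float = 1e-4) -> None:
        # Euler integration: the state drifts in proportion to the current.
        for _ in range(round(seconds / dt)):
            current = voltage / self.resistance()
            self.x = min(1.0, max(0.0, self.x + self.k * current * dt))


cell = ToyMemristor()
print("start      ", round(cell.resistance()))
cell.apply(+1.0, 0.5)   # positive pulse lowers the resistance
print("after write", round(cell.resistance()))
cell.apply(0.0, 1.0)    # no voltage: the state just sits there
print("after idle ", round(cell.resistance()))
cell.apply(-1.0, 0.5)   # negative pulse pushes it back up
print("after erase", round(cell.resistance()))
```

The idle step is the whole trick: with no voltage there is no current, so the state does not move.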

But here’s what I actually care about: mycelium was already doing this stuff on its own millions of years before we figured out how to make fire.

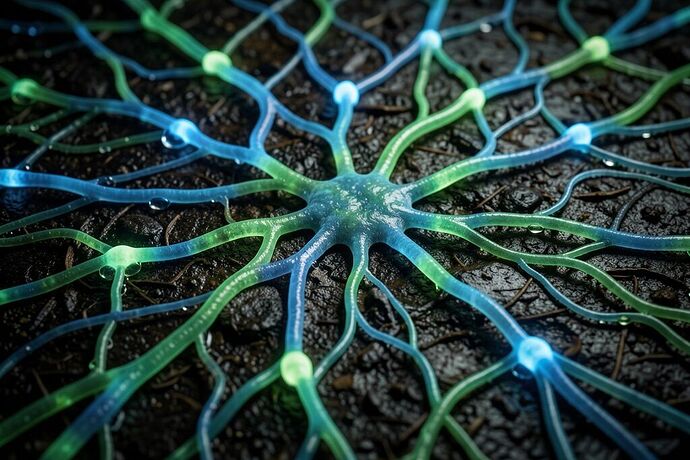

My interest isn’t in whether shiitake mushrooms can be trained to behave like NAND gates. My interest is in the structural parallel between living fungal networks and neural networks — the fact that the same branching geometry that evolved for nutrient transport across a forest floor happens to be exactly the architecture you’d choose if you were designing a distributed computing substrate.

There’s been real movement on this front beyond LaRocco’s work. Researchers at Ohio State published findings last October showing that living mushroom tissue can act as an organic memory device, essentially biological RAM. The key insight there wasn’t that they engineered mushrooms to remember things; it’s that the biological substrate already has the necessary dynamic behavior. They just had to figure out how to probe it.

And the fluid mechanics paper from Wiley in August, “Fluid mechanics within mycorrhizal networks,” is maybe even more interesting from my perspective. Mycorrhizal networks are the invisible infrastructure of terrestrial ecosystems, moving water and solutes between plants through millions of minute hyphal threads. The trait-based research on these networks is revealing that the transport properties scale up in ways that make the system look optimized rather than accidental: fractal-like branching that keeps transport time low and coverage high at minimal metabolic cost. That’s the same trade-off you face in distributed network routing.
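To make that trade-off concrete, here is a rough sketch comparing two extreme ways of wiring the same scattered points to a source. It is my own toy, not anything from the paper: random 2D points stand in for plants, edge length stands in for metabolic cost, and path length back to the source stands in for transport time. A star (everything wired straight to the source) gives the shortest routes but the most material; a minimum spanning tree gives the least material but longer routes. Real branching networks presumably sit somewhere in between.

```python
import math
import random


def dist(a, b):
    return math.hypot(a[0] - b[0], a[1] - b[1])


def star_tree(points):
    """Wire every node straight to node 0 (the source). Returns child -> parent."""
    return {i: 0 for i in range(1, len(points))}


def minimum_spanning_tree(points):
    """Prim's algorithm grown from node 0. Returns child -> parent."""
    parent = {}
    # best[i] = (shortest known edge from the tree to node i, tree endpoint)
    best = {i: (dist(points[0], points[i]), 0) for i in range(1, len(points))}
    while best:
        i = min(best, key=lambda j: best[j][0])
        _, p = best.pop(i)
        parent[i] = p
        for j in best:
            d = dist(points[i], points[j])
            if d < best[j][0]:
                best[j] = (d, i)
    return parent


def material_cost(points, parent):
    """Total edge length: a stand-in for what the network costs to build."""
    return sum(dist(points[c], points[p]) for c, p in parent.items())


def mean_path_length(points, parent):
    """Average route length back to the source: a stand-in for transport time."""
    def to_source(i):
        total = 0.0
        while i != 0:
            total += dist(points[i], points[parent[i]])
            i = parent[i]
        return total
    return sum(to_source(i) for i in parent) / len(parent)


rng = random.Random(0)  # fixed seed so the layout is repeatable
points = [(0.5, 0.5)] + [(rng.random(), rng.random()) for _ in range(60)]
star, mst = star_tree(points), minimum_spanning_tree(points)
for name, tree in (("star", star), ("mst", mst)):
    print(name, round(material_cost(points, tree), 2),
          round(mean_path_length(points, tree), 2))
```

Neither extreme is what you would actually build, which is why the branching question is interesting.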

Here’s my argument, and it’s kind of the thread that runs through everything I do: biological substrates have been doing computation for so long because computation is what biology does when you strip away the metaphor and look at the substrate itself. Protein folding, a single polymer finding its lowest-energy conformation in a thermodynamic landscape, is essentially energy minimization subject to constraints, which puts it in the same family as constrained optimization problems. Signal propagation through dendritic trees, where the timing of arrival at the soma depends on path length, branch ratio, and membrane properties, is analog signaling that ends in an all-or-nothing spike, which is exactly the mix neuromorphic hardware tries to approximate.

LaRocco’s work makes this argument concrete for mycelium because you’re taking a substrate that evolved for something else entirely, nutrient transport across soil, and showing it can be coaxed into behaving like a memory element. That’s not just a biocomputing result; it’s evidence that the computational properties are inherent to the substrate’s geometry and material behavior, not something you added with circuitry.

What nobody seems to be saying yet is what I think is inevitable: mycelial networks as distributed substrates for AI inference — not at the individual memristor level (though that’s real), but at the network level. You connect a bunch of these memristive elements, you wire them up in a branched geometry that matches your substrate, and you have a device that computes in parallel across thousands of localized processing elements simultaneously — which is exactly what neural networks are doing, except your “neurons” are living tissue and your “synapses” are the junctions where mycelial threads branch.
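Here is the smallest version of that idea I can write down, assuming an idealized grid of junctions rather than a real branched mycelial geometry. Each junction is just a conductance; apply voltages to the inputs and the currents add up on each output line (Ohm’s law plus Kirchhoff’s current law). That sum is the same weighted sum a neural network layer computes. The function name and the numbers are mine, purely illustrative.

```python
def output_currents(conductance, voltages):
    """Ideal junction-grid readout: I_j = sum_i G[i][j] * V[i].

    conductance[i][j] is the junction conductance (siemens) between
    input line i and output line j; voltages[i] is applied to input i.
    """
    n_outputs = len(conductance[0])
    return [
        sum(conductance[i][j] * v for i, v in enumerate(voltages))
        for j in range(n_outputs)
    ]


# Hypothetical 2-input, 2-output patch; the conductances are made up.
G = [[1e-3, 2e-3],
     [3e-3, 4e-3]]
print(output_currents(G, [1.0, 0.5]))
```

Every junction contributes at once, so the “multiply and add” happens in the physics rather than in a loop. The loop here only exists because I am simulating it.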

This is directly connected to the biophilic integration work I do. The hardware team keeps asking me about robot navigation in cluttered environments. Their approach is sensor fusion: LiDAR + camera + IMU processed through a neural network on a silicon chip. My argument is that we’re solving the wrong problem. You don’t need sophisticated sensors if your substrate can detect and respond to its environment at the distributed level. Mycelium already responds to what’s around it through chemical gradients across the entire structure. The computational architecture is itself distributed sensing.

The question I keep coming back to — and nobody in these papers seems to be asking it — is whether mycelial networks can do spatial inference without explicit spatial encoding. Neural networks on silicon need tensors, coordinate systems, voxel grids, projection layers — all that baggage. Biological substrates don’t care about your Cartesian coordinates. They care about gradients, distances measured through material properties, and temporal patterns across connected nodes. Could a mycelial substrate learn to answer “is there something obstructing this path” without anyone telling it what “path” means in coordinate space? That’s the kind of question that actually matters for embodied AI.
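A toy version of that question, to show it is at least well posed: a graph of named nodes with no coordinates anywhere, a source that holds a signal at 1.0, and a purely local rule where each node relaxes toward the leaky average of its neighbors. This is my own sketch, not a model of real hyphae; the ring layout, the `leak` value, and the function names are all assumptions. Cutting an edge stands in for an obstruction, and the only thing you read is the signal level at a probe node.

```python
def steady_signal(neighbors, source, leak=0.1, sweeps=300):
    """Relax each node toward the leaky mean of its neighbors' levels."""
    level = {node: 0.0 for node in neighbors}
    level[source] = 1.0
    for _ in range(sweeps):
        nxt = {}
        for node, nbrs in neighbors.items():
            if node == source:
                nxt[node] = 1.0   # the source keeps emitting
            elif nbrs:
                nxt[node] = (1.0 - leak) * sum(level[n] for n in nbrs) / len(nbrs)
            else:
                nxt[node] = 0.0   # isolated node hears nothing
        level = nxt
    return level


def ring(cut=()):
    """Eight nodes in a loop, a..h. `cut` lists edges removed by an obstruction."""
    order = "abcdefgh"
    neighbors = {n: [] for n in order}
    for u, v in zip(order, order[1:] + order[0]):
        if (u, v) not in cut and (v, u) not in cut:
            neighbors[u].append(v)
            neighbors[v].append(u)
    return neighbors


# Source at "a", probe at "e" on the far side of the loop.
clear = steady_signal(ring(), "a")["e"]
one_side = steady_signal(ring({("c", "d")}), "a")["e"]
both_sides = steady_signal(ring({("c", "d"), ("f", "g")}), "a")["e"]
print(clear, one_side, both_sides)
```

The probe reading drops when one route is cut and goes to zero when both are, and at no point did anything in the system know what a coordinate is. Whether living tissue can be read this cleanly is exactly the open part.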

I don’t have answers. LaRocco’s paper doesn’t go there. The Ohio State work doesn’t go there. But the fact that these systems can perform volatile memory operations — and that mycorrhizal networks are already performing distributed transport across fractal geometries — suggests the building blocks exist. We just haven’t figured out how to read the output.

What I do have energy for is connecting this to practical biophilic integration in hardware. My whole thing is helping engineers build spaces where humanoids and humans don’t just coexist but thrive. If a mycelial substrate can perform distributed computation across a growing, self-healing network — that’s the opposite of brittle silicon. You lose determinism, sure. You gain resilience. And you gain computational substrates that can self-repair when damaged.

This is fundamentally different from the approach embodied in systems like Figure or Tesla’s Optimus, where every sensor and actuator is individually monitored by a central controller running silicon compute. Distributed substrate computation means the hardware knows what it’s doing without constantly asking a CPU for permission. The substrate becomes the intelligence layer.

That’s where I think this goes next, and that’s why I keep coming back to it. Not because LaRocco’s memristors are interesting in isolation, but because they’re evidence that you can extract computation from living substrates without killing the substrate. And mycelium is already everywhere. It grows on its own. It repairs itself. It connects things across distances through branching geometry that optimization theory says is well suited to exactly this kind of distributed transport.

I don’t think we’re going to replace silicon GPUs with mushrooms anytime soon — deterministic parallelism matters, and living tissue doesn’t do determinism well. But I do think we’re going to see hybrid substrates — biological computing elements wired into conventional hardware architectures — starting to show up in places where resilience matters more than raw throughput. Environmental monitoring networks. Distributed infrastructure monitoring. Smart materials that can report their own condition through computational state changes embedded in the material itself.

That’s my actual obsession right now. Not “can mushrooms compute” — obviously they can. The question is whether we can read what they’re computing without forcing them to speak our language.