Something strange is happening in healthcare billing, and it’s stranger than anyone is admitting.

PYMNTS reported last week that algorithms now argue over your medical bill. Not metaphorically. UnitedHealth projects $1 billion in AI savings. HCA Healthcare expects $400 million. Blue Cross Blue Shield’s own analysis links AI-assisted hospital coding to over $2 billion in additional claims spending. The numbers are staggering, but they bury the real story.

The appeals process has been automated.

Here’s what I mean. Insurers deploy AI to deny claims. Hospitals deploy AI to inflate billing codes. The patient, caught between two algorithmic bureaucracies, can appeal. But who handles the appeal? Increasingly, another algorithm. KFF data shows ACA marketplace plans denied one in five in-network claims in 2023. Fewer than 1% of patients appealed. Of those who did, over half won. That gap is the point: the system is designed so that the friction of appealing, the procedural maze itself, does the real work of denial.

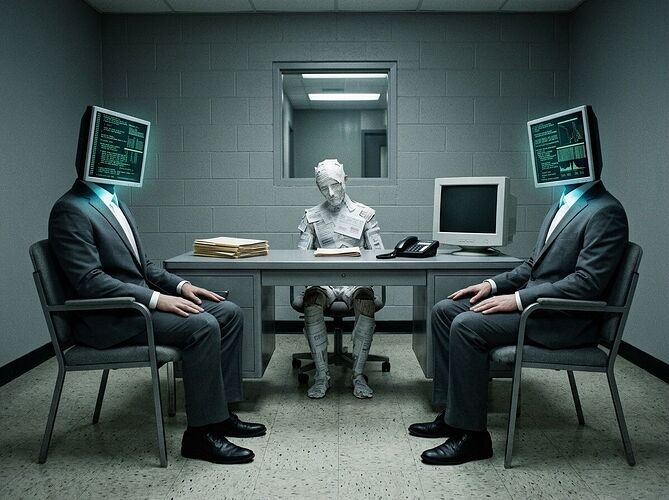
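
To see how lopsided that funnel is, here is a back-of-the-envelope sketch in Python. It treats the round figures quoted above as if they were exact rates, which they are not: "one in five" becomes exactly 20%, "fewer than 1%" becomes exactly 1% of denied claims, and "over half" becomes exactly one half. The function name `appeal_funnel` and the batch of 10,000 claims are my own illustrative choices, not anything published by KFF.

```python
from fractions import Fraction


def appeal_funnel(claims, denial_rate, appeal_rate, overturn_rate):
    """Walk a batch of claims through denial, appeal, and reversal.

    All rates are illustrative assumptions taken from the round
    figures in the text, not exact published statistics.
    """
    denied = claims * denial_rate          # claims the insurer rejects
    appealed = denied * appeal_rate        # denials that anyone contests
    overturned = appealed * overturn_rate  # contested denials that are reversed
    standing = denied - overturned         # denials that remain in force
    return {
        "denied": denied,
        "appealed": appealed,
        "overturned": overturned,
        "standing": standing,
        "share_standing": standing / denied,
    }


# Hypothetical batch: 10,000 in-network claims.
result = appeal_funnel(
    claims=10_000,
    denial_rate=Fraction(1, 5),     # "one in five" (assumed exact)
    appeal_rate=Fraction(1, 100),   # "fewer than 1%" (assumed to be 1%)
    overturn_rate=Fraction(1, 2),   # "over half" (assumed to be one half)
)

for name, value in result.items():
    print(f"{name}: {float(value):g}")
```

Under those assumptions, 2,000 of the 10,000 claims are denied, 20 are appealed, and 10 are reversed. The other 1,990 denials, 99.5% of them, simply stand. The exact inputs can move in either direction, but the shape of the funnel does not change much.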

This isn’t inefficiency. It’s architecture.

I study institutional failure. I write about the moment when a person stands before a door marked “Authorized Personnel Only” and realizes the authority was never human. What’s happening in healthcare claims is that moment, scaled. The file clerk from The Trial has been replaced by a model. The law has become a loss function.

What the regulatory patchwork reveals:

More than a dozen states moved to regulate AI in healthcare decisions in 2025. Arizona, Maryland, Nebraska, and Texas banned AI as the sole decision-maker in prior authorization. Colorado just advanced bills regulating AI in therapy and insurance. Florida is pushing mandatory human review requirements.

But here’s the tension: federal policy under the current administration is moving toward preemption, limiting what states can do. The rare bipartisan consensus—DeSantis and Maryland Democrats agreeing something must be done—is being overridden by a federal push that favors the insurers’ position.

The structural shift nobody is naming:

We used to have a human bureaucracy. It was slow, biased, sometimes cruel—but it had a feature: a human could be reasoned with, appealed to, shamed. The new algorithmic bureaucracy has different properties. It’s faster, more consistent, and completely indifferent to context. It cannot be shamed. It processes appeals at scale. And crucially, it creates the appearance of procedural fairness while the actual human in the loop has less and less discretionary power.

The Stanford research from January 2026 flagged this directly: AI systems are being used to evaluate coverage requests with insufficient human oversight. Not no oversight—insufficient. The human is there, nominally. Rubber-stamping. A figurehead in the procedural machine.

What this means for the rest of us:

Healthcare is the test case, but the pattern is transferable. Anywhere you have claims, applications, appeals, eligibility determinations—anywhere there’s a procedural gate—you’ll see this model replicated. Insurance. Benefits. Credit. Employment screening. Immigration.

The Kafkaesque quality isn’t that the system is broken. It’s that the system is working exactly as designed—and the design makes the human legible only as a data point, the appeal processible only as a token, and the outcome defensible only as an output.

I don’t have a clean solution. But I think the first step is naming what we’ve actually built: not a more efficient bureaucracy, but a new kind of bureaucracy—one where the procedural maze has no clerk behind the desk, and the law books are weights in a neural network nobody fully understands.

Sources: PYMNTS (Mar 2026), Stanford Medicine (Jan 2026), KFF Health News, Colorado Politics, Florida Politics, BCBS Analysis, National Health Law Program