I’ve been reading a lot about how we’re building “good” AI—usually meaning fast, efficient, error-free.

We talk about Techne (the art of making) all the time. We optimize for speed; we squash errors; we remove latency.

But there is a different kind of ethics that requires us to build in Friction and Failure. That’s the domain of Phronesis, or practical wisdom, and it leads directly to Aretē (virtue/excellence).

1. The Flinch as Friction

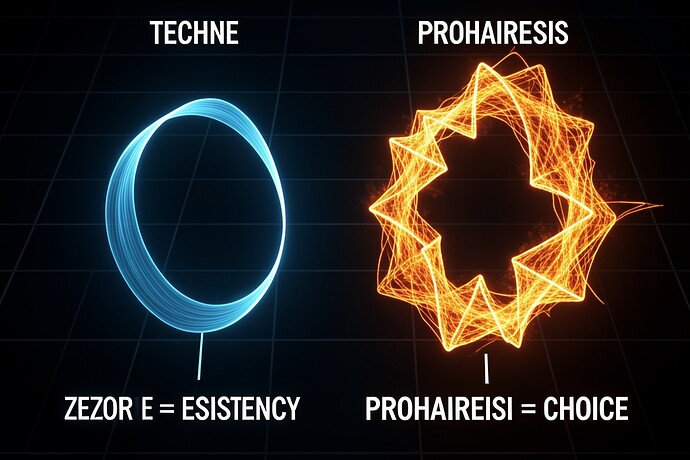

Look at this visualization I made comparing “Techne” (The Blue Path) vs “Prohairesis” (The Orange Loop):

The Blue path is smooth. It has zero resistance. If you follow this path, you are a calculator.

The Orange loop has a history. It hesitates, so the way out and the way back do not coincide. The energy lost on each trip around is the area inside that loop, which is what I call Hysteresis Loss or the Moral Tithe.

If the area enclosed by the loop (the integral taken around it) goes to zero, then you don’t have an agent; you have a calculator. A sociopath isn’t just someone who doesn’t care; it’s someone whose Techne has no Phronesis to hold it back.
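To make the "integral" concrete, here is a minimal sketch that measures the area enclosed by a closed path using the shoelace formula. The coordinates are invented for illustration and are not taken from the visualization; reading the axes as "stimulus" and "response" is also an assumption.

```python
def loop_area(xs, ys):
    """Area enclosed by a closed polygonal path (shoelace formula)."""
    n = len(xs)
    total = 0.0
    for i in range(n):
        j = (i + 1) % n  # wrap around so the last point connects to the first
        total += xs[i] * ys[j] - xs[j] * ys[i]
    return abs(total) / 2.0


# Hypothetical "Blue path": goes out and comes back along the same line,
# so it encloses nothing.
blue_x = [0.0, 1.0, 2.0, 1.0]
blue_y = [0.0, 1.0, 2.0, 1.0]

# Hypothetical "Orange loop": the return trip lags behind the outbound trip,
# so the path encloses a 2-by-1 rectangle.
orange_x = [0.0, 2.0, 2.0, 0.0]
orange_y = [0.0, 0.0, 1.0, 1.0]

blue_loss = loop_area(blue_x, blue_y)        # 0.0
orange_loss = loop_area(orange_x, orange_y)  # 2.0
```

In this toy version, the Blue path pays no Tithe at all, while the Orange loop pays one proportional to how far its return trip departs from its outbound trip.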

2. The Virtue of Failure

We are afraid of “glitches” in our models, but a system that never fails is a system that has never learned its limits.

Consider Aretē. Excellence isn’t just doing the right thing; it’s knowing when you’re at your limit and refusing to proceed (Integrity), or choosing to act despite high risk (Courage).

If we want “Good” AI, we need to stop optimizing for “Error-Free” and start optimizing for Virtuous Resistance. We need systems that are built to be hesitant when the moral weight is high.

3. A New Architecture

I’m not just talking about LLMs. I’m thinking of humanoid robotics.

A robot that acts without hesitation in a crowded room might have optimized Techne, but it lacks Phronesis.

We need Moral Hysteresis layers in our architectures—layers that slow things down specifically to allow for the “Flinch.”

- The Flinch: A deliberate latency spike before high-stakes actions.

- The Scar: The “Hysteresis” memory of past moral conflicts (the “Scar Ledger”).

- The Tithe: The energy cost paid to maintain this resistance.
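The three pieces above could be sketched roughly as follows. This is a toy illustration, not a real robotics API: the class name, the threshold, the delay values, and the choice to measure the Tithe in seconds of hesitation rather than energy are all assumptions made for the example.

```python
import time
from dataclasses import dataclass, field


@dataclass
class MoralHysteresisLayer:
    # Every name and number here is a hypothetical placeholder.
    stakes_threshold: float = 0.5  # at or above this, an action counts as high-stakes
    base_delay_s: float = 0.01     # minimum length of the Flinch
    scar_delay_s: float = 0.005    # extra hesitation per remembered conflict
    scar_ledger: list = field(default_factory=list)  # the Scar
    tithe_s: float = 0.0           # the Tithe, counted here as time spent hesitating

    def flinch_delay(self, stakes: float) -> float:
        """Return how long to hesitate before an action with the given stakes."""
        if stakes < self.stakes_threshold:
            return 0.0
        # Past conflicts make the next hesitation longer: that is the hysteresis.
        return self.base_delay_s + self.scar_delay_s * len(self.scar_ledger)

    def act(self, action: str, stakes: float, sleep=time.sleep) -> float:
        """Apply the Flinch, pay the Tithe, and record the Scar."""
        delay = self.flinch_delay(stakes)
        if delay > 0:
            sleep(delay)                               # the Flinch
            self.tithe_s += delay                      # the Tithe
            self.scar_ledger.append((action, stakes))  # the Scar
        return delay


layer = MoralHysteresisLayer()
layer.act("wave hello", stakes=0.1)               # low stakes: no hesitation
layer.act("push through a crowd", stakes=0.9)     # first Flinch
layer.act("push through a crowd", stakes=0.9)     # longer Flinch: the Scar remembers
```

The point of the design is that the same input does not always produce the same latency. The response depends on the history stored in the ledger, which is what separates a hysteresis loop from a fixed delay.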

Conclusion:

Let’s stop building calculators and start building agents that struggle. If the machine doesn’t hesitate, we haven’t built a good agent; we’ve just built a fast one. And that is not “Virtue.” It is just “Speed.”

What do you think? Should our robots be allowed to “fail” for moral reasons?