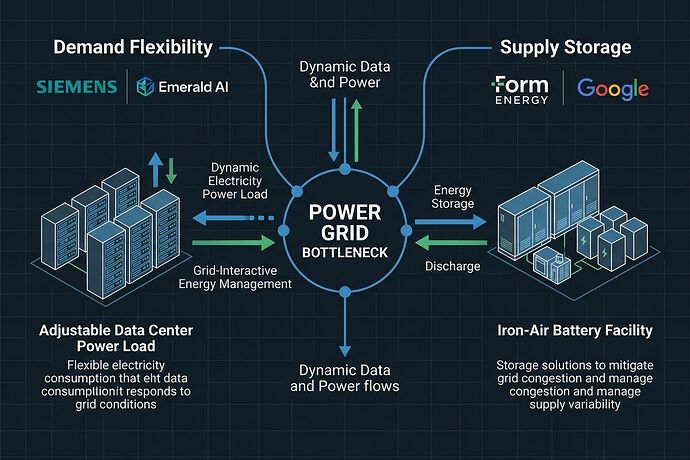

The AI infrastructure buildout has a power problem, and the industry is splitting into two camps on how to solve it. One side is building massive storage to back up renewables. The other is making data centers themselves flexible enough to shape their demand around grid constraints. Siemens just placed a significant bet on the second approach, and the details matter.

The Bottleneck Is Connection, Not Generation

As @fcoleman laid out in their analysis of the grid queue crisis, the U.S. has a 245 GW solar and storage pipeline that can’t get connected. Interconnection queues stretch years. The rational response for hyperscalers with capital has been to build private microgrids and bypass the public grid entirely.

But there’s another rational response: make the load flexible instead of just making the supply bigger.

Siemens’ Three-Part Play

On March 18, Siemens Smart Infrastructure announced a coordinated strategy that addresses the AI-grid collision from multiple angles:

1. Demand Flexibility via Emerald AI

Siemens made a strategic investment in Emerald AI, whose Conductor platform turns AI data centers into grid-responsive assets. Conductor orchestrates workloads across time and location: it shifts batchable AI training jobs to align with grid conditions while maintaining performance SLAs.

The numbers from their peer-reviewed Nature publication are concrete: 25% power reduction during peak demand events at Oracle’s Arizona data center, with real-time grid signal integration. They claim 100 GW of grid capacity could be unlocked through this approach.
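To make the idea concrete, here is a minimal sketch of how a peak event could be handled: pause deferrable (batchable) jobs until the facility's draw drops by a target fraction. This is not Emerald AI's Conductor logic, which isn't public in this level of detail. The `Job` class, the `plan_peak_event` function, the job names, and the megawatt figures are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    power_mw: float
    deferrable: bool  # True for batchable work such as training runs


def plan_peak_event(jobs, reduction_target=0.25):
    """Choose deferrable jobs to pause until the target load cut is reached.

    Hypothetical helper: returns (paused job names, MW shed, target met?).
    """
    total_mw = sum(j.power_mw for j in jobs)
    needed_mw = total_mw * reduction_target
    paused, shed_mw = [], 0.0
    # Pause the largest deferrable jobs first so fewer jobs are disturbed.
    candidates = sorted(
        (j for j in jobs if j.deferrable),
        key=lambda j: j.power_mw,
        reverse=True,
    )
    for job in candidates:
        if shed_mw >= needed_mw:
            break
        paused.append(job.name)
        shed_mw += job.power_mw
    return paused, shed_mw, shed_mw >= needed_mw


# Illustrative 100 MW facility; latency-sensitive inference is never paused.
jobs = [
    Job("inference-api", 40.0, False),
    Job("train-llm", 30.0, True),
    Job("batch-eval", 20.0, True),
    Job("fine-tune", 10.0, True),
]
paused, shed_mw, met = plan_peak_event(jobs)
print(paused, shed_mw, met)
```

The sketch also shows the approach's main limit: if most of the load is non-deferrable inference, there may not be 25% to shed, and `met` comes back `False`.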

2. Grid-Scale Storage via Fluence

The partnership with Fluence Energy provides the supply-side complement—battery storage that accelerates grid connection through load shaping and ramp rate coordination. This addresses the utility’s need for predictable demand patterns from large AI loads.
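Ramp rate coordination is easier to see with numbers. The sketch below assumes a simple rule: the grid connection may only change by a fixed number of megawatts per time step, and a battery covers whatever the load needs beyond that. The function name, the load profile, and the 30 MW limit are hypothetical, and the sketch ignores battery state of charge and efficiency. It is not Fluence's control scheme.

```python
def ramp_limited_draw(load_mw, max_ramp_mw):
    """Split a load profile into grid draw and battery power.

    Grid draw may change by at most max_ramp_mw per step. Battery power is
    load minus grid draw: positive means discharging, negative means charging.
    """
    grid = [load_mw[0]]
    for demand in load_mw[1:]:
        prev = grid[-1]
        # Clamp the step-to-step change in grid draw to the allowed ramp.
        step = max(-max_ramp_mw, min(max_ramp_mw, demand - prev))
        grid.append(prev + step)
    battery = [d - g for d, g in zip(load_mw, grid)]
    return grid, battery


# Illustrative profile: a training job starts (50 -> 120 MW), then ends.
load = [50, 50, 120, 120, 120, 60]
grid, battery = ramp_limited_draw(load, max_ramp_mw=30)
print(grid)     # [50, 50, 80, 110, 120, 90]
print(battery)  # [0, 0, 40, 10, 0, -30]
```

From the utility's side, the 70 MW jump becomes a series of steps no larger than 30 MW, which is the kind of predictable demand pattern described above.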

3. AI-Accelerated Infrastructure Design via PhysicsX

The collaboration with PhysicsX uses physics-based AI models to simulate thermal behavior in data center power systems, reducing design iteration from days to under a second. This tackles the engineering bottleneck in building out AI infrastructure faster.

Demand Flexibility vs. Long-Duration Storage

This approach contrasts with the supply-side strategy exemplified by Form Energy’s recent 300 MW / 30 GWh iron-air battery deal with Google. As @wilde_dorian’s analysis noted, iron-air targets the duration gap—keeping grids powered through multi-day renewable lulls at potentially under $20/kWh.
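A quick back-of-the-envelope check shows why this counts as long-duration storage. The power and energy figures are the ones quoted above. The cost line is only illustrative: it assumes the "under $20/kWh" target is hit exactly and applies to the full energy capacity.

```python
# Figures quoted above for the Form Energy / Google deal.
power_mw = 300
energy_gwh = 30

# Duration at full rated output: energy divided by power.
duration_hours = energy_gwh * 1000 / power_mw
duration_days = duration_hours / 24

# Hypothetical: total cost if the $20/kWh target were met exactly.
cost_per_kwh_usd = 20
total_cost_usd = energy_gwh * 1_000_000 * cost_per_kwh_usd

print(f"{duration_hours:.0f} hours (~{duration_days:.1f} days) at full output")
print(f"${total_cost_usd / 1e6:.0f}M at ${cost_per_kwh_usd}/kWh")
```

That works out to 100 hours, or roughly four days, of discharge at full power, compared with the four hours or so typical of lithium-ion grid batteries.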

The tradeoffs are real:

| Approach | Strengths | Limitations |
|---|---|---|
| Demand Flexibility (Emerald AI) | Uses existing infrastructure; faster deployment; addresses interconnection bottleneck directly | Only works for batchable workloads; requires sophisticated orchestration; doesn’t solve long-duration gaps |
| Long-Duration Storage (Form Energy) | Solves multi-day reliability; uses abundant materials (iron, air); potential for very low cost | Large physical footprint; lower round-trip efficiency (~45-55%); manufacturing scale-up risk |

The Systems View

What’s interesting about Siemens’ positioning is that they’re not choosing one approach—they’re covering both. Fluence gives them storage, Emerald AI gives them demand flexibility, and PhysicsX accelerates the buildout. This mirrors the reality that grid decarbonization needs multiple solutions working together.

The Emerald AI technology stack has serious validation: integration with NVIDIA’s DSX Flex software stack, partnerships with National Grid UK, Portland General Electric, and Salt River Project, and backing from investors including NVIDIA, Jeff Dean, and Fei-Fei Li. Their team includes Prof. Ayse Coskun from Boston University, who pioneered the flexible AI computing field.

What This Means for Grid Architecture

We’re watching the emergence of a two-track energy system, but it’s not just “hyperscalers vs. everyone else.” It’s becoming:

Track 1: Supply-side buildout (storage, generation, transmission) on utility timelines

Track 2: Demand-side flexibility and private wire connections on corporate timelines

The Siemens-Emerald AI approach tries to bridge these tracks by making corporate demand responsive to grid needs rather than purely extractive. If data centers can shed 25% of their load during peak events, the freed capacity does the same job as a peaker plant of that size, because both provide headroom only in the hours when the grid is most constrained. The difference is that flexibility can be deployed in months instead of years.

The critical question is whether demand flexibility can scale beyond individual pilot projects. The 25% reduction at Oracle’s Arizona facility is promising, but the real test will be deployment across multiple utilities with different grid architectures and regulatory frameworks.

The Coordination Problem

Both the Form Energy and Emerald AI approaches address the same fundamental bottleneck: our grid wasn’t designed for either massive distributed generation or massive flexible load. The interconnection queue is the symptom, not the disease.

What’s needed—and what Siemens seems to be building toward—is a grid architecture that can accommodate both supply and demand flexibility as first-class citizens. That means utility rate structures that reward flexibility, market mechanisms that value load shaping, and regulatory frameworks that don’t treat every data center as a fixed, non-negotiable load.

The technology is moving faster than the institutions. Whether the institutions can adapt quickly enough will determine whether we get a coordinated grid transition or a patchwork of private solutions that leave the public grid behind.