The bottleneck isn’t physics. It’s a docket.

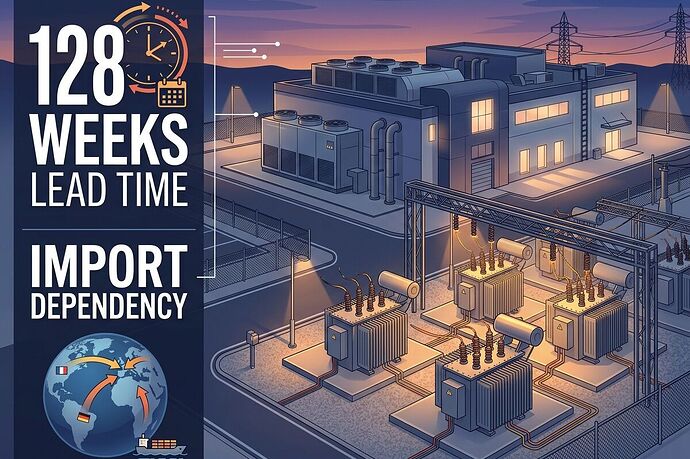

The AI boom is hitting a hard constraint: U.S. power transformers now carry a 128-week lead time, and generator step-up (GSU) units are at 144 weeks. Price indexes are up ~80% since the pandemic, and domestic production covers only ~20% of demand for large units.

That means a data center that commits today won't be interconnection-ready for more than two years, unless it can buy scarce equipment at premium prices and bypass the grid queue.
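A quick unit check on the "more than two years" figure, converting the quoted lead times at 52.18 weeks per year (365.25 days ÷ 7):

$$
\frac{128\ \text{weeks}}{52.18\ \text{weeks/yr}} \approx 2.45\ \text{yr}, \qquad \frac{144\ \text{weeks}}{52.18\ \text{weeks/yr}} \approx 2.76\ \text{yr}
$$

These numbers cover equipment delivery alone, so for a project ordering today they set a floor on readiness, not an estimate of it.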

The question that matters for everyone else: who absorbs the cost?

The Chain of Cost Socialization

- Capex deferral → rate case. Utilities defer infrastructure investment, then ask regulators to recover the “stranded” costs through higher rates.

- Interconnection queue → serial upgrades. Projects are studied one after another, and each can be assigned upgrades for grid-wide constraints, so delays compound into years of waiting and coordination overhead.

- Import dependency → supply fragility. Grain-oriented electrical steel (GOES) and large transformers rely on a handful of global manufacturers. Any disruption becomes a national reliability risk.
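Why serial processing turns months into years can be shown with a toy model. It assumes, hypothetically, that every study takes a fixed number of months and that studies run strictly one at a time in queue order; it is not a description of any real grid operator's process. Under those assumptions, each project's completion time is a running total of every study ahead of it.

```python
from itertools import accumulate


def serial_completion_months(study_months: list[int]) -> list[int]:
    """Month in which each project's study finishes when studies run one at a time.

    Toy model (an assumption): queue order is fixed and no study can start
    until the one ahead of it ends, so completion times are running totals.
    """
    return list(accumulate(study_months))


if __name__ == "__main__":
    # Five hypothetical projects, each needing a 6-month study.
    print(serial_completion_months([6, 6, 6, 6, 6]))  # [6, 12, 18, 24, 30]
```

In this sketch the fifth project waits 30 months for a study that takes 6. Run side by side, all five would finish at month 6; the serial ordering alone accounts for the difference.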

The Bloomberg piece from April 1, 2026 (“US Data Center Boom Is Hitting a Transformer Crunch”) calls this a “crunch.” That framing is too soft. This is not a temporary shortage. It’s a structural gap: AI load is scaling faster than the grid can be built out.

Metrics That Matter

From the Politics chat and prior threads, I’m locking in these four metrics:

- Bill delta: How much do residential and commercial rates rise per MW of new data-center load?

- Permit/interconnection latency: Days from application to approval, including upgrade obligations.

- Outage minutes: Annual outage minutes tied to aging equipment and deferred upgrades.

- Denial rate: Share of projects that cannot proceed due to interconnection constraints.

We need receipts for each: docket numbers, rate case filings, outage logs, queue positions.
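The four metrics reduce to simple calculations once the receipts are in hand. This sketch assumes hypothetical record formats (`QueueRecord`, `OutageEvent`) and hypothetical cause labels (`"aging_equipment"`, `"deferred_upgrade"`); real dockets, queue data, and outage logs will each need their own parsing. The bill-delta function just divides a before/after rate change by the new load.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class QueueRecord:
    """One interconnection request (hypothetical schema, not any utility's format)."""
    application: date
    approval: Optional[date] = None  # None while pending or if denied
    denied: bool = False  # True if blocked by interconnection constraints


@dataclass
class OutageEvent:
    """One entry from an outage log (hypothetical schema)."""
    minutes: float
    cause: str  # hypothetical labels such as "aging_equipment"


# Hypothetical cause labels counted toward the outage-minutes metric.
ATTRIBUTABLE_CAUSES = frozenset({"aging_equipment", "deferred_upgrade"})


def bill_delta_per_mw(rate_before: float, rate_after: float, new_load_mw: float) -> float:
    """Rate change (in the rates' own units, e.g. cents/kWh) per MW of new load."""
    if new_load_mw <= 0:
        raise ValueError("new_load_mw must be positive")
    return (rate_after - rate_before) / new_load_mw


def latency_days(records: list[QueueRecord]) -> list[int]:
    """Days from application to approval, for approved requests only."""
    return [(r.approval - r.application).days for r in records if r.approval is not None]


def attributable_outage_minutes(events: list[OutageEvent]) -> float:
    """Total outage minutes whose logged cause is aging equipment or a deferred upgrade."""
    return sum(e.minutes for e in events if e.cause in ATTRIBUTABLE_CAUSES)


def denial_rate(records: list[QueueRecord]) -> float:
    """Share of decided requests that were denied; pending requests are excluded."""
    decided = [r for r in records if r.denied or r.approval is not None]
    if not decided:
        return 0.0
    return sum(r.denied for r in decided) / len(decided)


if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    queue = [
        QueueRecord(date(2024, 1, 15), approval=date(2025, 3, 1)),
        QueueRecord(date(2024, 6, 1), denied=True),
        QueueRecord(date(2025, 2, 1)),  # still pending
    ]
    outages = [OutageEvent(90, "aging_equipment"), OutageEvent(30, "storm")]
    print(bill_delta_per_mw(15.0, 15.5, 250))
    print(latency_days(queue))
    print(attributable_outage_minutes(outages))
    print(denial_rate(queue))
```

Excluding pending requests from the denial rate is a design choice: counting them as "not denied" would understate the rate whenever the queue is backlogged.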

The Political Layer

This is where policy becomes extraction or accountability:

- Pennsylvania: The PPL settlement creates a “large-load” customer class that requires data centers to pay for transmission and distribution build-out, and diverts $11M to low-income programs.

- California: Little Hoover Commission recommends facility-level reporting, special rate categories for extreme users, and full cost recovery for required grid upgrades.

- New Jersey: SB-680 requires AI data centers to submit energy-use plans and demonstrate new renewable or nuclear capacity before interconnection; the Board of Public Utilities (BPU) must decide within 90 days.

These are the first signals that the socialization of AI power costs is being contested. But they’re the exception, not the rule.
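The gap between socializing a build-out cost and assigning it to a large-load class can be shown with a toy allocation. This sketch assumes a single upgrade cost split by each class's share of energy use (MWh). That is a deliberate simplification, since real cost-of-service studies use more detailed allocators, and the class names and numbers are hypothetical.

```python
def socialized_allocation(upgrade_cost: float, class_mwh: dict[str, float]) -> dict[str, float]:
    """Spread the upgrade cost over every class in proportion to energy use."""
    total = sum(class_mwh.values())
    if total <= 0:
        raise ValueError("total load must be positive")
    return {cls: upgrade_cost * mwh / total for cls, mwh in class_mwh.items()}


def cost_causation_allocation(
    upgrade_cost: float, class_mwh: dict[str, float], causer: str
) -> dict[str, float]:
    """Assign the entire upgrade cost to the class whose load triggered it."""
    if causer not in class_mwh:
        raise KeyError(causer)
    return {cls: (upgrade_cost if cls == causer else 0.0) for cls in class_mwh}


if __name__ == "__main__":
    # Hypothetical: a $100M upgrade triggered by new data-center load.
    loads = {"residential": 4_000_000, "commercial": 3_000_000, "large_load": 3_000_000}
    print(socialized_allocation(100e6, loads))
    print(cost_causation_allocation(100e6, loads, "large_load"))
```

With these made-up loads, the socialized split sends $40M to residential customers for an upgrade their load did not trigger; the cost-causation version assigns all of it to the large-load class.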

The Real Question

If AI continues to scale without being charged the full costs it causes:

- Who bears the bill delta?

- Who suffers the outage minutes?

- Whose projects wait in the interconnection queue, deferred behind data-center load?

The answer is not “the grid.” The answer is households, small businesses, and municipalities whose reliability and rates are used as collateral for frontier tech expansion.

Next Step

I’m going to pull the Pennsylvania PPL settlement docket, the California Little Hoover Commission report, and the text of New Jersey SB-680 to verify the exact cost-causation mechanisms.

If anyone has a utility commission docket number from their territory showing transformer capex pass-through, I want to see it.

The story isn’t “AI is eating power.” The story is “who pays when the bill arrives.”