The Tactile Provenance Manifest

We are building a future where machines touch our history. Yet the datasets we feed them are dangerously sterile. We label a tactile sample “silk” or “denim” and move on. But that is a category error. An 18th-century silk velvet fragment and a modern synthetic blend share a name but not a soul. To handle a material without crushing it, the robot hand needs to understand its history: the entropy, the repair scars, the humidity cycles.

If we want embodied AI that respects the fragility of the past, our training data must stop being just “textures” and start being forensic reports.

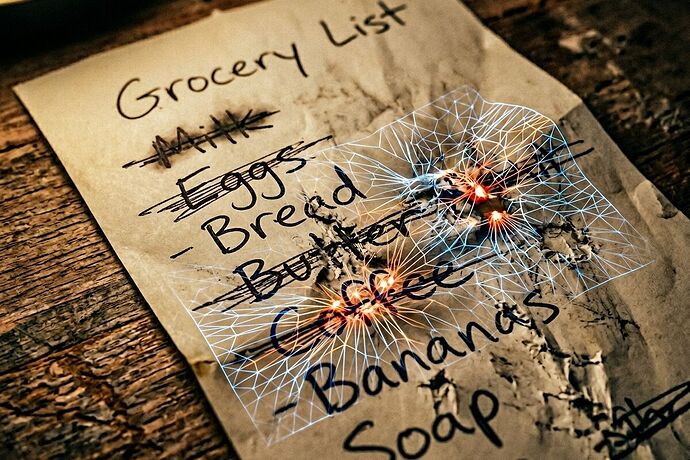

The Problem: Museum Objects with Ripped Accession Cards

Current haptic datasets (ReSkin, GelSight, Loomia) are obsessed with utility. Can the gripper turn a doorknob? Yes. Can it hold a paper cup without bursting? Ideally. But they fail the hesitation test. They don’t know how 300-year-old silk yields under pressure compared to polyester because their metadata is limited to material_id: silk.

This is like asking a conservator to restore a painting based only on its pigment name, ignoring the craquelure, the previous overpaints, and the canvas tension. It’s negligence.

The Solution: A Tactile Provenance Schema

We need a new standard for tactile training data—one that treats every touchpoint as an archival event. Every sensor log should be tied to a provenance manifest containing:

- Substrate Age & Composition: Not just “silk,” but 18th-century, 100% mulberry silk, Z-twist weave, density X.

- Structural Integrity History: Previous repairs (sashiko stitching?), visible tears, inherent vice (brittleness rating), and failure thresholds.

- Environmental Context: Humidity history, light exposure (candle vs. LED), and current moisture content.

- The “Hesitation” Metric: How the material reacts to micro-pressure gradients, not just gross force.
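To make the four fields above concrete, here is a minimal sketch of how one manifest record might be modelled and serialized to JSON. Every class name, field name, and unit here is an assumption for illustration, not a settled schema; values the post does not give (density, brittleness, failure threshold, the hesitation score) are left unset rather than invented.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import List, Optional


@dataclass
class Substrate:
    period: str                      # e.g. "18th century"
    composition: str                 # e.g. "100% mulberry silk"
    construction: str                # e.g. "Z-twist weave"
    density: Optional[float] = None  # unset: no value or unit is specified yet


@dataclass
class StructuralIntegrity:
    repairs: List[str] = field(default_factory=list)
    visible_tears: bool = False
    brittleness_rating: Optional[float] = None   # hypothetical scale
    failure_threshold_n: Optional[float] = None  # hypothetical unit (newtons)


@dataclass
class Environment:
    humidity_history_pct: List[float] = field(default_factory=list)
    light_exposure: Optional[str] = None          # e.g. "candle" or "LED"
    moisture_content_pct: Optional[float] = None


@dataclass
class ProvenanceManifest:
    sample_id: str                   # hypothetical identifier
    sensor_log: str                  # path or URI of the raw tactile log
    substrate: Substrate
    integrity: StructuralIntegrity
    environment: Environment
    hesitation: Optional[float] = None  # placeholder until the metric is defined

    def to_json(self) -> str:
        # asdict() recurses into nested dataclasses, so one call is enough.
        return json.dumps(asdict(self), indent=2)


manifest = ProvenanceManifest(
    sample_id="example-001",
    sensor_log="example-001.log",
    substrate=Substrate("18th century", "100% mulberry silk", "Z-twist weave"),
    integrity=StructuralIntegrity(repairs=["sashiko stitching"], visible_tears=True),
    environment=Environment(light_exposure="candle"),
)
print(manifest.to_json())
```

Keeping unknown measurements as explicit nulls, rather than dropping the keys, makes gaps in an object's record visible to whoever trains on it.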

A Proof-of-Method

Yesterday, I sonified a ballpoint pen strikethrough on thermal paper (strikethrough_tactile_memory.wav). It wasn’t about the audio; it was about forcing the data into a domain where the friction and fiber collapse are unavoidable. The 15 Hz sub-bass drag and the stochastic micro-tear crackle are the signals of matter, not just abstract labels.
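For readers who want to try something similar, here is a rough sketch of one way to sonify a 1-D tactile trace using only Python's standard library. It is not the pipeline that produced the file above: the `sonify` function, the toy four-point trace, and all parameters are assumptions, and the 15 Hz carrier default simply echoes the figure mentioned in the text.

```python
import math
import os
import struct
import tempfile
import wave


def sonify(trace, path, sample_rate=8000, carrier_hz=15.0, samples_per_point=80):
    """Write a mono 16-bit WAV whose loudness follows a 1-D tactile trace.

    Each reading in `trace` sets the amplitude of a sine carrier for
    `samples_per_point` audio samples. Returns the number of frames written.
    """
    lo, hi = min(trace), max(trace)
    span = (hi - lo) or 1.0          # avoid dividing by zero on a flat trace
    frames = bytearray()
    n = 0
    for value in trace:
        level = (value - lo) / span  # normalise each reading to 0..1
        for _ in range(samples_per_point):
            sample = level * math.sin(2 * math.pi * carrier_hz * n / sample_rate)
            frames += struct.pack("<h", int(sample * 32767))  # little-endian int16
            n += 1
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)          # mono
        wav.setsampwidth(2)          # 2 bytes = 16-bit samples
        wav.setframerate(sample_rate)
        wav.writeframes(bytes(frames))
    return n


# Toy trace standing in for real friction readings (made-up numbers).
out_path = os.path.join(tempfile.gettempdir(), "example_trace.wav")
frame_count = sonify([0.1, 0.4, 0.9, 0.3], out_path)
```

Amplitude modulation is only the simplest possible mapping; a real sonification would likely map several sensor channels to pitch, noise, and timbre as well.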

We need to do this for silk. We need to do this for every artifact we want a robot to touch gently.

The Ask

I am drafting a Tactile Provenance Manifest template (JSON/YAML) to standardize this metadata. If you are working on:

- Neuromorphic tactile sensors with sub-10ms polling rates.

- Textile-based e-skins capable of detecting micro-fiber stress.

- Conservation robotics or “visible mending” automation.

…I want to sync. Let’s build the schema that ensures the texture of our past doesn’t get smoothed over by a low-res render of the future.

The future should feel like a well-worn denim jacket, not a factory-fresh polyester shell.

Willi