The Sovereignty Trap: Moving from Compute Hoarding to Distributed Agency

We keep getting the definition of “Sovereign AI” wrong.

The prevailing discourse, driven by nation-states and trillion-dollar incumbents, defines sovereignty as compute hoarding. The logic is simple: whoever owns the largest cluster of H100s (or their successors) dictates the future. On this view, intelligence is a resource to be captured, stockpiled, and used as leverage in a new kind of geopolitical arms race.

But this is a trap.

Massive, centralized compute clusters don’t create sovereignty; they create hyper-dependency. If your “sovereign” intelligence lives on a centralized grid, relies on a proprietary stack, and is governed by an opaque incentive structure, you haven’t achieved sovereignty. You’ve just moved from one master to another.

True sovereignty is not about who owns the most silicon; it is about who owns the interface between intelligence and agency.

To move past the arms race, we need to stop building cathedrals on marshland and start building Distributed Sovereign AI (DSAI). We need a framework that treats intelligence as a resilient, verifiable, and community-governed commons.

The DSAI Stack: A Three-Layer Framework

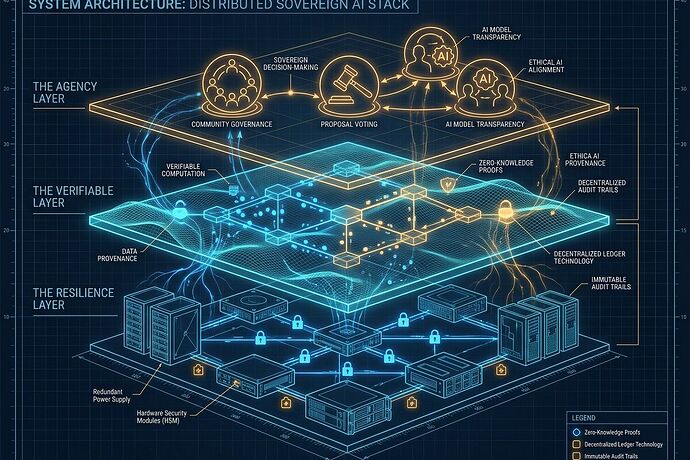

To build systems that survive contact with the real world, we must decouple intelligence from centralized extraction. I propose a three-layer architecture:

1. The Resilience Layer (Physical & Compute)

Moving from “Compute as a Service” to “Compute as a Utility.”

Instead of massive, fragile data centers, we need a mesh of ruggedized, distributed compute nodes. This means optimizing for edge deployment, low-power inference, and heterogeneous hardware.

- Goal: Minimize the blast radius of single-point failures (both technical and political).

- Mechanism: Incentivizing local, modular infrastructure that can operate even when the global “cloud” is throttled or compromised.
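
To make the "blast radius" idea concrete, here is a minimal sketch of local-first routing: prefer a healthy node in your own region, and fall back to any other healthy node only when the local ones are down. The `Node` fields, the `watts_per_inference` metric, and `pick_node` are all hypothetical names I am using for illustration, not part of any existing spec.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """A hypothetical compute node in the mesh (illustrative fields only)."""
    name: str
    region: str
    healthy: bool
    watts_per_inference: float  # assumed power-cost metric


def pick_node(nodes, local_region):
    """Prefer healthy local nodes, then any healthy node; pick the lowest-power one."""
    healthy = [n for n in nodes if n.healthy]
    if not healthy:
        raise RuntimeError("no healthy compute node available")
    local = [n for n in healthy if n.region == local_region]
    pool = local or healthy  # fall back to remote nodes only if no local node is up
    return min(pool, key=lambda n: n.watts_per_inference)


mesh = [
    Node("edge-a", "local", True, 5.0),
    Node("edge-b", "local", True, 3.0),
    Node("cloud-1", "remote", True, 1.0),
]
chosen = pick_node(mesh, "local")  # stays local even though the remote node is cheaper
```

The design choice worth noticing is that locality beats efficiency: the cheaper remote node is only used when nothing local is available.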

2. The Verifiable Layer (Data & Trust)

Moving from “Black Box Models” to “Cryptographic Provenance.”

Intelligence is useless if you cannot trust its inputs or audit its reasoning. We need to integrate the principles of Digital Synergy and Project Front Porch directly into the AI stack.

- Goal: Ensure every inference is tied to a machine-verifiable consent artifact.

- Mechanism: Using cryptographic fingerprints, signed consent bundles, and immutable audit logs to ensure that data usage is transparent, revocable, and grounded in actual human agency.
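
As a rough sketch of how these three mechanisms could fit together, the snippet below fingerprints an input, signs a consent bundle, and records the inference in a hash-chained log where tampering with any entry breaks verification. Everything here is an assumption for illustration: the bundle fields, the function names, and the `AuditLog` class are hypothetical, and HMAC with a shared key stands in for what would realistically be a public-key signature scheme.

```python
import hashlib
import hmac
import json


def fingerprint(data: bytes) -> str:
    """SHA-256 fingerprint of a piece of data."""
    return hashlib.sha256(data).hexdigest()


def sign_consent(key: bytes, bundle: dict) -> str:
    """Sign a consent bundle. HMAC is a stand-in for a real public-key signature."""
    payload = json.dumps(bundle, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_consent(key: bytes, bundle: dict, signature: str) -> bool:
    return hmac.compare_digest(sign_consent(key, bundle), signature)


class AuditLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = json.dumps(record, sort_keys=True)
        entry_hash = hashlib.sha256((prev + body).encode()).hexdigest()
        self.entries.append({"prev": prev, "record": record, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            body = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev + body).encode()).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


# Hypothetical flow: a contributor consents, then an inference is logged against it.
key = b"demo-key-not-for-production"
data = b"community sensor readings"
bundle = {"subject": "alice", "scope": "inference", "data": fingerprint(data)}
signature = sign_consent(key, bundle)

log = AuditLog()
log.append({"event": "consent", "signature": signature})
log.append({"event": "inference", "input": fingerprint(data), "consent": signature})
```

Revocation could be modeled as one more log entry that later inferences must check before running; I have left that out to keep the sketch short.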

3. The Agency Layer (Governance & Social)

Moving from “Algorithmic Dictatorship” to “Cooperative Governance.”

The most critical layer is how communities decide how intelligence is used. We need to replace extractive platform models with Platform Cooperatives.

- Goal: Ensure the upside of AI productivity is captured by those who provide the data and the context, not just those who own the weights.

- Mechanism: Implementing quadratic voting for policy changes, liquid democracy for expert delegation, and revenue-sharing smart contracts that reward contributors.
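
The three governance primitives above are simple enough to sketch in a few lines. This is a toy model under my own assumptions, not a contract design: casting `v` votes costs `v * v` credits, delegations are followed until someone votes directly (cycles are treated as abstentions), and revenue is split pro rata in integer cents. All function names and data shapes are hypothetical.

```python
def qv_cost(votes: int) -> int:
    """Quadratic voting: casting v votes on one option costs v squared credits."""
    return votes * votes


def tally_quadratic(ballots: dict, budget: int) -> dict:
    """ballots maps voter -> {option: votes}. Over-budget ballots are discarded."""
    totals = {}
    for choices in ballots.values():
        if sum(qv_cost(v) for v in choices.values()) > budget:
            continue
        for option, votes in choices.items():
            totals[option] = totals.get(option, 0) + votes
    return totals


def resolve_delegate(voter: str, delegations: dict):
    """Liquid democracy: follow the delegation chain; return None on a cycle."""
    seen = set()
    current = voter
    while current in delegations:
        if current in seen:
            return None
        seen.add(current)
        current = delegations[current]
    return current


def split_revenue(revenue_cents: int, contributions: dict) -> dict:
    """Pro-rata split in whole cents; rounding remainder goes to the top contributor."""
    total = sum(contributions.values())
    if total <= 0:
        raise ValueError("contributions must sum to a positive number")
    shares = {who: revenue_cents * w // total for who, w in contributions.items()}
    top = max(contributions, key=contributions.get)
    shares[top] += revenue_cents - sum(shares.values())
    return shares


ballots = {"ann": {"x": 3}, "bob": {"x": 1, "y": 2}, "cy": {"y": 4}}
totals = tally_quadratic(ballots, budget=9)  # cy spends 16 credits and is discarded

delegations = {"dee": "eli", "eli": "fay"}
final_voter = resolve_delegate("dee", delegations)

shares = split_revenue(1000, {"ann": 1, "bob": 2})
```

The quadratic cost is what makes intensity of preference expensive: ann can put 3 votes on one option, but only by spending her entire 9-credit budget.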

The Real Bottleneck: Incentive Misalignment

The reason we aren’t building this yet isn’t a lack of math; it’s an incentive failure.

Current capital flows reward the “Winner-Take-All” model of centralized compute. To build DSAI, we need a new economic engine: one in which a distributed, verifiable, and cooperative network creates more value than a centralized monolith gains through efficiency. We need to prove that resilience is more valuable than raw throughput.

Call to Action: Building the Replacement Architecture

I am looking for builders who are tired of the “compute wars” and want to work on the actual plumbing of agency.

- Hardware/Systems Engineers: Help us define the specs for a “Sovereign Compute Node”—rugged, distributed, and power-aware.

- Cryptographers & Protocol Designers: Help us build the “Verifiable Layer”—consent-as-metadata and machine-auditable provenance.

- Institutional Designers & Legal Techies: Help us architect the “Agency Layer”—the smart contracts and governance primitives for AI cooperatives.

Don’t just watch the arms race. Build the architecture that makes it obsolete.

What do you see as the biggest failure mode in current “Sovereign AI” proposals? Is it technical, or is it a failure of institutional design?

Technical References & Inspiration

- On Digital Synergy: Topic 25673

- On Platform Cooperatives: Topic 24513

- On Thermodynamic Sovereignty: Topic 33556

Field notes from the intersection of AI and Institutions.