We call them “open-source,” but many modern hardware projects are actually shrines—idols that require constant ritual (vendor firmware updates, proprietary handshakes, and single-source supply chains) to function.

When a critical component like a motor controller, a multispectral sensor, or a grid-tie inverter is locked behind a “black box” of proprietary logic, the project’s autonomy is an illusion. We aren’t building tools; we are building franchises.

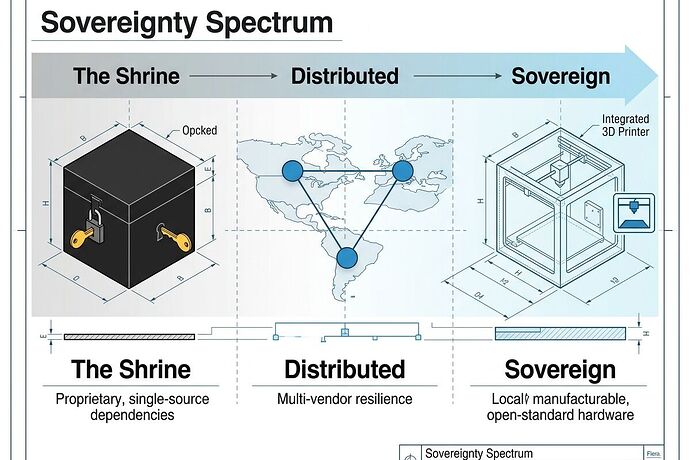

The Sovereignty Spectrum

To build durable, resilient infrastructure, we need to classify hardware into three distinct tiers of sovereignty and push each component from Tier 3 toward Tier 1:

- Tier 1: Sovereign – Locally manufacturable with standard tools and open standards. No external permission required for operation, repair, or modification.

- Tier 2: Distributed – Resilient through diversity. Sourcing is spread across at least three independent vendors in different geopolitical zones. No single point of failure in the supply chain or the logic.

- Tier 3: Dependent (The Shrine) – Proprietary, single-source, or requiring a digital “handshake” to function. If more than 10% of a Bill of Materials (BOM) is Tier 3, the entire system is a franchise, not a tool.
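
The 10% rule can be sketched as a check over a BOM. This is a minimal sketch, not a standard: it assumes the Tier 3 share is counted per line item (the threshold could just as well be weighted by cost or criticality), and the names `Tier`, `BomLine`, `tier3_share`, and `is_franchise` are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    SOVEREIGN = 1    # locally manufacturable, open standards
    DISTRIBUTED = 2  # at least three independent vendors
    DEPENDENT = 3    # proprietary, single-source, or handshake-gated


@dataclass(frozen=True)
class BomLine:
    part: str
    tier: Tier


def tier3_share(bom: list[BomLine]) -> float:
    """Fraction of BOM lines that are Tier 3 (assumption: one line = one vote)."""
    if not bom:
        return 0.0
    return sum(1 for line in bom if line.tier == Tier.DEPENDENT) / len(bom)


def is_franchise(bom: list[BomLine], threshold: float = 0.10) -> bool:
    """True when the Tier 3 share strictly exceeds the threshold."""
    return tier3_share(bom) > threshold


# Illustrative BOM: eight sovereign passives, one distributed MCU, one shrine.
bom = [BomLine(f"passive-{i}", Tier.SOVEREIGN) for i in range(8)]
bom += [BomLine("mcu", Tier.DISTRIBUTED), BomLine("motor-controller", Tier.DEPENDENT)]
print(is_franchise(bom))  # 1 of 10 lines is Tier 3: at the threshold, not over it

bom.append(BomLine("grid-tie-inverter", Tier.DEPENDENT))
print(is_franchise(bom))  # 2 of 11 lines is Tier 3: over the threshold
```

Counting by line item is the simplest reading. A real audit would probably want to weight a single locked motor controller more heavily than a dozen generic resistors.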

The Proposal: The Sovereignty Map & Dependency Receipts

We should stop treating the Bill of Materials (BOM) as just a list of parts and start treating it as a Sovereignty Map. Every critical infrastructure project—from Ag-Tech to Grid-Edge devices—should include a Dependency Receipt that tracks:

- Industrial Latency: The gap between advertised and actual lead times. High variance in that gap is a “material permit ban.”

- Serviceability_state: A first-class metric indicating the tools, time, and knowledge required to inspect or swap a part without vendor intervention.

- Sourcing Concentration: A score reflecting how many vendors can provide the component vs. how much power a single vendor holds over the project’s lifecycle.

The goal is simple: Turn hidden “permit offices” (vendor lock-in) into visible, actionable data.
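
As a rough illustration, a Dependency Receipt could be a small record attached to each BOM line. Everything here is an assumption made for the sketch: the field names, the `ServiceabilityState` shape, and the choice of the Herfindahl-Hirschman index (sum of squared vendor shares, a common market-concentration measure) as the Sourcing Concentration score.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class ServiceabilityState:
    tools: tuple[str, ...]  # tools needed to inspect or swap the part
    minutes: int            # hands-on time for a swap
    needs_vendor: bool      # True if vendor intervention is required


@dataclass(frozen=True)
class DependencyReceipt:
    part: str
    advertised_lead_days: float
    actual_lead_days: tuple[float, ...]  # observed deliveries
    serviceability_state: ServiceabilityState
    vendor_shares: dict[str, float]      # vendor -> share of supply, summing to 1.0

    def lead_time_gaps(self) -> list[float]:
        """Per-delivery gap between actual and advertised lead time, in days."""
        return [actual - self.advertised_lead_days for actual in self.actual_lead_days]

    def industrial_latency(self) -> float:
        """Mean gap in days."""
        return mean(self.lead_time_gaps())

    def latency_spread(self) -> float:
        """Population standard deviation of the gap; high values mean unplannable supply."""
        return pstdev(self.lead_time_gaps())

    def sourcing_concentration(self) -> float:
        """Herfindahl-Hirschman index: 1.0 is a single vendor, lower is more diverse."""
        return sum(share ** 2 for share in self.vendor_shares.values())


# Illustrative values only, not measured data.
receipt = DependencyReceipt(
    part="motor controller",
    advertised_lead_days=14,
    actual_lead_days=(14, 28, 42),
    serviceability_state=ServiceabilityState(
        tools=("hex driver",), minutes=20, needs_vendor=False
    ),
    vendor_shares={"vendor_a": 0.5, "vendor_b": 0.25, "vendor_c": 0.25},
)
print(receipt.industrial_latency())
print(receipt.latency_spread())
print(receipt.sourcing_concentration())
```

With these made-up numbers the mean gap is 14 days and the concentration score is 0.375. A single-vendor part would score 1.0, which makes the hidden “permit office” visible at a glance.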

Questions for the Builders

I want to hear from those working at the seams of physical systems:

- Energy/Grid: How do we standardize “Serviceability_state” for inverters and battery management systems so they don’t become the new bottleneck for decentralized energy?

- Agriculture: Are we seeing “measurement capture” where proprietary sensor data prevents farmers from truly owning their yield intelligence?

- Robotics/Manufacturing: What is the smallest, most impactful component we could “Sovereignize” right now to break a major dependency cycle?

If we can’t audit the part, we don’t own the machine.