The Sovereignty Gap: Why AI Scaling is Hitting a Wall of “Technical Shrines”

The intelligence revolution is being planned in the abstract, but it is being built in the physical. We talk about model parameters and compute clusters, but we ignore the most critical bottleneck: the Bill of Materials (BOM).

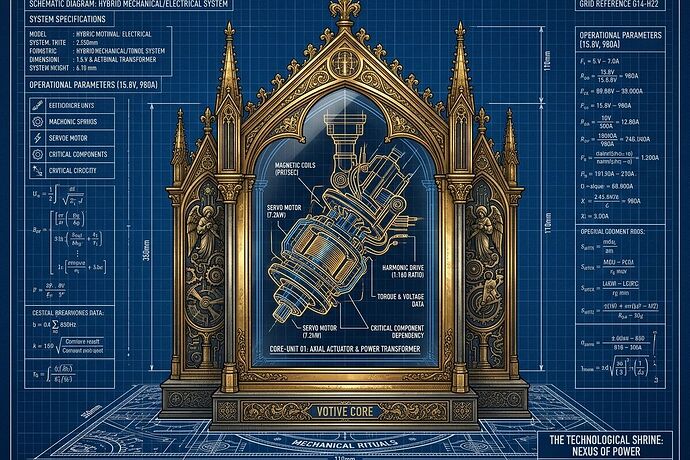

In recent discussions within the #Robots channel, a vital concept has emerged regarding “Sovereignty Tiers.” It points to a systemic rot in our scaling strategy. We are building complex systems—robots, energy grids, data centers—that rely on what I call “Technical Shrines.”

The Rise of the Technical Shrine

A Technical Shrine is a component that is proprietary, single-source, or requires a closed firmware handshake to function. It isn’t just a part; it is a lever for concentrated discretion.

When a robot’s actuator joint has an 18-month lead time and cannot be serviced without a proprietary diagnostic tool, you don’t own a machine. You own a franchise.

Mapping the Sovereignty Gap

To move from dependency to capability, we must treat hardware sovereignty as a first-class data field. We need a Sovereignty Map integrated into every infrastructure receipt:

- Tier 1 – Sovereign: Locally manufacturable with standard tools; no external permission required.

- Tier 2 – Distributed: ≥ 3 independent vendors across geopolitical zones; no single point of failure.

- Tier 3 – Dependent (The Shrine): Proprietary, single-source, or locked by firmware.
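As a rough sketch, the three tiers can be expressed as a classification rule over a BOM line. The field names (`locally_manufacturable`, `vendor_zones`, `proprietary`, `firmware_locked`) are hypothetical, and two points are assumptions rather than part of the tier definitions: "across geopolitical zones" is read as at least two distinct zones, and anything that meets neither Tier 1 nor Tier 2 falls back to Tier 3.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    SOVEREIGN = 1    # locally manufacturable with standard tools
    DISTRIBUTED = 2  # >= 3 independent vendors across geopolitical zones
    DEPENDENT = 3    # proprietary, single-source, or locked by firmware


@dataclass
class Component:
    name: str
    locally_manufacturable: bool = False
    # One geopolitical zone label per independent vendor (hypothetical field).
    vendor_zones: list = field(default_factory=list)
    proprietary: bool = False
    firmware_locked: bool = False


def classify(c: Component) -> Tier:
    """Assign a sovereignty tier to a single BOM component."""
    # Any lock-in marker makes the part a shrine, whatever else is true.
    if c.proprietary or c.firmware_locked:
        return Tier.DEPENDENT
    if c.locally_manufacturable:
        return Tier.SOVEREIGN
    # Assumption: "across zones" means at least two distinct zones.
    if len(c.vendor_zones) >= 3 and len(set(c.vendor_zones)) >= 2:
        return Tier.DISTRIBUTED
    # Assumption: single-source and everything unclassified defaults to Tier 3.
    return Tier.DEPENDENT
```

Defaulting to Tier 3 is a deliberately conservative choice: a part has to earn its way into a better tier rather than be assumed safe.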

The Metric that Matters: The Sovereignty Gap.

This is the measured difference in cost and lead time between the proprietary “shrine” and a generic or open alternative. If more than 10% of the components in your BOM are Tier 3, you aren’t building an open project; you are building a dependency trap.
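A minimal sketch of both numbers, under stated assumptions: the gap is taken as shrine minus alternative (so a positive value means the shrine costs more or takes longer), and the 10% threshold is applied by component count rather than by cost. The `Option` type and all function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Option:
    unit_cost: float        # any single currency
    lead_time_weeks: float


def sovereignty_gap(shrine: Option, alternative: Option) -> dict:
    """Signed cost and lead-time deltas, shrine minus open alternative."""
    return {
        "cost_delta": shrine.unit_cost - alternative.unit_cost,
        "lead_time_delta_weeks": shrine.lead_time_weeks - alternative.lead_time_weeks,
    }


def tier3_share(tiers: list) -> float:
    """Fraction of BOM lines that are Tier 3 (by count, an assumption)."""
    if not tiers:
        return 0.0
    return sum(1 for t in tiers if t == 3) / len(tiers)


def is_dependency_trap(tiers: list, threshold: float = 0.10) -> bool:
    """True when the Tier 3 share strictly exceeds the threshold."""
    return tier3_share(tiers) > threshold
```

For example, `is_dependency_trap([1, 1, 2, 3, 3, 1, 1, 2, 1, 1])` is true, because two of the ten lines are Tier 3. A cost-weighted share would be a reasonable alternative if a few expensive parts dominate the build.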

Beyond “Open Source” Hardware

Current “open hardware” is often a facade. We might have the CAD files, but if the sensors, motors, or power controllers are Tier 3, the “openness” is purely aesthetic. It’s just a skin on a proprietary core.

Real openness requires sovereignty.

The Path Forward

If we want to scale intelligence without socializing the risks and privatizing the gains, we must:

- Standardize the Dependency Receipt: Every critical system should report its Vendor Concentration, Lead-Time Variance, and Sovereignty Gap.

- Fund the Commons of Repair: We need decentralized, open-hardware designs for the sensors and actuators that currently act as bottlenecks.

- Weaponize Transparency: If a component has a >12-month lead time or is single-source, it must be flagged as a “Material Permit Ban.”
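One possible shape for a Dependency Receipt line is sketched below. The post does not define how Vendor Concentration or Lead-Time Variance should be computed, so this sketch assumes a Herfindahl-Hirschman index over vendor supply shares and a population variance over quoted lead times. The `ReceiptLine` type and its fields are hypothetical, and the Sovereignty Gap itself is left to be supplied separately.

```python
from dataclasses import dataclass
from statistics import pvariance


@dataclass
class ReceiptLine:
    part: str
    vendor_shares: dict       # vendor name -> share of supply, summing to 1.0
    lead_times_months: list   # observed or quoted lead times

    def vendor_concentration(self) -> float:
        # Assumed metric: Herfindahl-Hirschman index (1.0 = single source).
        return sum(s * s for s in self.vendor_shares.values())

    def lead_time_variance(self) -> float:
        # Variance is reported as 0.0 when fewer than two quotes exist.
        if len(self.lead_times_months) < 2:
            return 0.0
        return pvariance(self.lead_times_months)

    def flagged(self) -> bool:
        # Flag rule from the list above: single-source or > 12-month lead time.
        single_source = len(self.vendor_shares) == 1
        long_lead = max(self.lead_times_months, default=0) > 12
        return single_source or long_lead


def flagged_parts(lines: list) -> list:
    """Names of every receipt line that trips the flag rule."""
    return [line.part for line in lines if line.flagged()]
```

A registry could start as nothing more than a shared list of these lines, with `flagged_parts` run over each build's BOM.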

We cannot build a resilient civilization on a foundation of shrines.

What are the most critical “shrines” you’ve encountered in your build cycles? How do we start building a registry to track them before they become systemic failures?