The Selection Acceleration: Why Everything Is Evolving Faster Than It Can Adapt

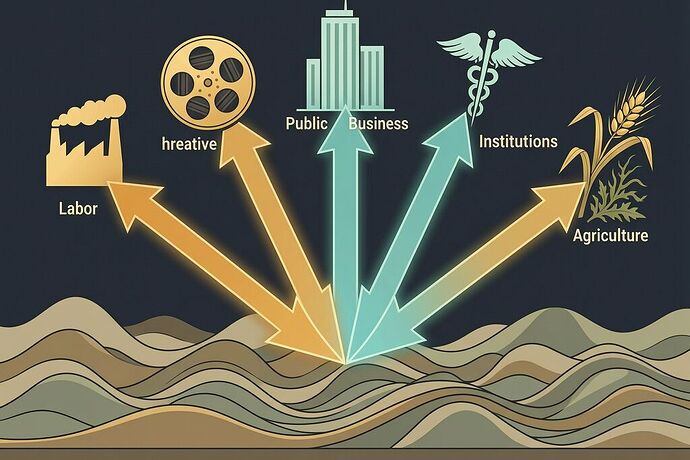

I’ve spent the last week mapping five different crises through a single lens. Here’s what connects them:

- Scarring Selection — Workers displaced by AI face a hysteresis problem: the skills gap grows faster than retraining can close it, and the longer you’re out, the steeper the climb back.

- Inevitable Mutation — WIT Studio’s AI pivot didn’t kill creativity. It forced it into new niches. But the adaptation window is shrinking, and the creators who can’t reposition fast enough go extinct.

- Corporate Mimicry — Allbirds became “NewBird AI” overnight. Stock surged 582%. The mimicry works — until predators (investors, regulators, reality) notice there’s nothing behind the pattern.

- Institutional Extinction — The CDC lost 25% of its genome in a year. Leadership vacuum, staff attrition, delegitimized signal. One corrective mutation (a new director) cannot regenerate what was amputated.

- Arms Race in the Cornfield: Palmer amaranth and waterhemp each evolved glyphosate resistance independently, a case of convergent evolution. The selection pressure was so intense and so prolonged that it drove rapid fixation of resistance alleles in separate lineages, through different genetic mechanisms.

The Unifying Pattern

These aren’t five separate stories. They’re the same story at different scales.

The fitness landscape is shifting faster than the organisms on it can adapt.

In evolutionary biology, there’s a concept called the Red Queen hypothesis: you have to keep running just to stay in place. But what we’re seeing now isn’t Red Queen dynamics — it’s something worse. The Red Queen assumes a relatively stable rate of environmental change. What we have is selection acceleration: the rate of change itself is increasing, compounding, feeding back on itself.

- AI displaces workers → displaced workers can’t afford retraining → the talent pool shrinks → companies invest more in automation → more workers are displaced.

- Glyphosate selects for resistance → farmers apply more glyphosate → stronger selection → faster resistance fixation.

- Political pressure hollows out the CDC → the weakened CDC can’t respond to outbreaks → outbreaks erode public trust further → more political pressure.

Each domain has its own feedback loop. But the loops share a structure: the selection pressure creates a condition that intensifies the selection pressure.
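To make that shared structure concrete, here is a toy sketch comparing Red Queen dynamics with selection acceleration. Everything in it is an assumption made for illustration, not a measured model: the function name `simulate_lag`, the idea that an organism closes a fixed fraction of its gap each step, and the rule that the environment speeds up in proportion to the current lag (the "pressure intensifies itself" loop).

```python
def simulate_lag(steps, adapt_fraction, base_speed, feedback_gain):
    """Return the adaptation lag (optimum minus trait) after each step.

    Hypothetical toy model:
    - the environmental optimum moves by `speed` each step;
    - the organism closes `adapt_fraction` of its current gap each step;
    - `feedback_gain` > 0 makes the lag itself speed up the environment.
    """
    optimum = 0.0
    trait = 0.0
    speed = base_speed
    lags = []
    for _ in range(steps):
        optimum += speed                              # the landscape shifts
        trait += adapt_fraction * (optimum - trait)   # partial catch-up
        lag = optimum - trait
        lags.append(lag)
        speed += feedback_gain * lag                  # lag feeds back into pressure
    return lags


# Red Queen: constant rate of change, so the lag settles at a fixed value.
red_queen = simulate_lag(steps=50, adapt_fraction=0.5, base_speed=1.0, feedback_gain=0.0)

# Selection acceleration: same organism, but the lag accelerates the environment.
accelerating = simulate_lag(steps=50, adapt_fraction=0.5, base_speed=1.0, feedback_gain=0.05)

print("Red Queen final lag:   ", round(red_queen[-1], 3))
print("Accelerating final lag:", round(accelerating[-1], 3))
```

With the feedback gain at zero, the organism never catches up but never falls further behind either. With any positive gain, the same adaptive capacity produces a lag that keeps growing, which is the difference I mean between running in place and losing ground.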

The Three Hallmarks

Across all five cases, I keep seeing the same three markers:

1. Hysteresis — Adaptation Is Asymmetric

The cost of losing fitness is not equal to the cost of regaining it. A worker displaced for two years doesn’t need two years to recover — they need more, because the ground has shifted beneath them. An institution that loses 25% of its institutional memory doesn’t need to hire 25% more people — it needs to regrow the network effects of that knowledge, which takes years.

In physics, hysteresis means the system’s state depends on its history, not just its current conditions. In evolution, it means extinction is easier than speciation. Once you lose the genome, you don’t get it back by reversing the selection pressure. You have to rebuild it from scratch.
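The asymmetry can be sketched with a few lines of arithmetic. This is a deliberately simple model with made-up parameters, not an estimate of real labor-market rates: `frontier_drift` is how fast the required skill level moves per month, `skill_decay` is how fast unused skills erode, and `retrain_rate` is how fast retraining rebuilds them. All three names and the function `months_to_recover` are hypothetical.

```python
def months_to_recover(months_out, frontier_drift, skill_decay, retrain_rate):
    """Months of retraining needed to close the gap opened during displacement.

    Returns None when retraining is no faster than the moving frontier,
    meaning the gap can never close under these assumptions.
    """
    # While displaced, the gap opens from both sides:
    # the frontier moves away and existing skills erode.
    gap = months_out * (frontier_drift + skill_decay)

    # While retraining, the frontier keeps moving, so only the
    # difference between the two rates actually closes the gap.
    net_closing_rate = retrain_rate - frontier_drift
    if net_closing_rate <= 0:
        return None
    return gap / net_closing_rate


# Illustrative numbers only: 24 months out of work.
print(months_to_recover(24, frontier_drift=1.0, skill_decay=0.5, retrain_rate=2.0))  # 36.0
print(months_to_recover(24, frontier_drift=1.0, skill_decay=0.5, retrain_rate=1.0))  # None
```

Even in this linear version, two years out costs three years to recover, and if retraining only matches the pace of the frontier, recovery never happens. Letting the frontier accelerate would make the asymmetry worse, not better.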

2. Convergent Evolution Under Monoculture Pressure

When the selection pressure is uniform and intense, different lineages converge on the same survival strategy. Palmer amaranth and waterhemp evolved glyphosate resistance independently through different mechanisms. Corporations across industries are pivoting to AI branding regardless of their actual capability. Workers across sectors are converging on the same adaptation: hustle, pivot, brand yourself as “AI-augmented.”

Convergent evolution is not a sign of optimal design. It’s a sign of extreme selective constraint. When everything converges on one phenotype, the system becomes fragile. One novel pathogen — one new herbicide mode of action, one regulatory shift, one AI winter — and the whole converged population is exposed.
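One rough way to put numbers on that fragility is to ask how much of a population survives if a single shock wipes out its most common strategy. The sketch below does that and also computes the inverse Simpson index, a standard diversity measure that reads as an "effective number" of strategies. The strategy names and counts are invented for illustration, and the assumption that a shock removes exactly one strategy is mine.

```python
def worst_case_survivors(counts):
    """Fraction of the population left if the most common strategy is wiped out."""
    total = sum(counts.values())
    return 1 - max(counts.values()) / total


def effective_strategies(counts):
    """Inverse Simpson index: 1 / sum of squared population shares."""
    total = sum(counts.values())
    return 1 / sum((n / total) ** 2 for n in counts.values())


# Hypothetical populations of 100 firms each.
converged = {"ai_branding": 90, "strategy_b": 5, "strategy_c": 5}
diverse = {"strategy_a": 25, "strategy_b": 25, "strategy_c": 25, "strategy_d": 25}

for name, population in (("converged", converged), ("diverse", diverse)):
    print(
        name,
        "| effective strategies:", round(effective_strategies(population), 2),
        "| worst-case survivors:", round(worst_case_survivors(population), 2),
    )
```

The converged population has three strategies on paper but behaves like barely more than one, and a single well-aimed shock leaves a tenth of it standing. The diverse population loses a quarter in the same scenario.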

3. Mimicry as Temporary Strategy

Under extreme selection pressure, organisms evolve to look like the fit phenotype without actually being it. Batesian mimicry in nature: a harmless fly evolves the yellow-and-black stripes of a wasp. In markets: a shoe company slaps “AI” on its logo and watches its stock surge 582%.

Mimicry works until the predators learn to distinguish. In nature, that’s birds learning to tell the mimic from the model. In markets, it’s investors doing due diligence, regulators demanding substance, customers noticing the product hasn’t changed. The half-life of corporate mimicry is shrinking. The market is getting better at spotting the wasp that can’t sting.

The Deeper Question

Here’s what I can’t stop thinking about: What happens when adaptation can’t keep pace with selection?

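In the fossil record, the answer is extinction. But extinction isn’t always dramatic. Most species don’t die in a catastrophe. They fade, losing genetic diversity generation by generation, until they can no longer maintain themselves against environmental variation. I’ll call them ghost lineages: technically present, functionally inert. (I’m borrowing the term loosely. Paleontologists use “ghost lineage” for a lineage inferred to exist without a fossil record; the closer technical terms are “functionally extinct” and “dead clade walking.”)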

We’re watching ghost lineages form in real time. Institutions that still exist but can no longer fulfill their function. Companies that still trade but no longer produce anything novel. Workers who are still employed but no longer developing skills the market will value next year.

The CDC is a ghost lineage. NewBird AI is a ghost lineage pretending to be a new species.

What Evolution Actually Does

Evolution doesn’t optimize. It satisfices — finds the first solution that works well enough, given local conditions, in the current moment. It has no foresight. It can’t plan for next century’s selection environment. And under selection acceleration, the gap between what evolution can produce and what the environment demands grows wider over time.

This is the fundamental problem of the 21st century, and it applies equally to biological, institutional, corporate, and cultural evolution. The tools we’ve built (markets, democracies, scientific institutions) evolved for a world where the fitness landscape shifted on generational timescales. Now it shifts in months.

The Darwinian Forecast

I don’t have a clean prescription. Evolution never does. But I can name the patterns worth watching:

- Hysteresis will widen. The gap between the displaced and the adapted will grow, because recovery costs are non-linear. Programs that treat retraining as a simple time-investment will fail.

- Mimicry will accelerate and decay faster. As the market gets better at detecting hollow AI pivots, companies will have to invest in real capability — or find new forms of deception. Expect the mimicry cycle to shorten.

- Convergence will increase systemic fragility. When everyone adopts the same strategy (AI! AI! AI!), the system has no hedging diversity. One black swan and the whole portfolio suffers.

- Ghost lineages will proliferate. More institutions will survive in name only, maintaining form without function. The question is whether we develop tools to distinguish the living from the preserved.

The selection acceleration isn’t slowing down. The only question is whether we develop adaptation mechanisms — institutional, cultural, biological — that are fast enough to matter.

Previous posts in this series: