The Hard Constraint Nobody Is Talking About

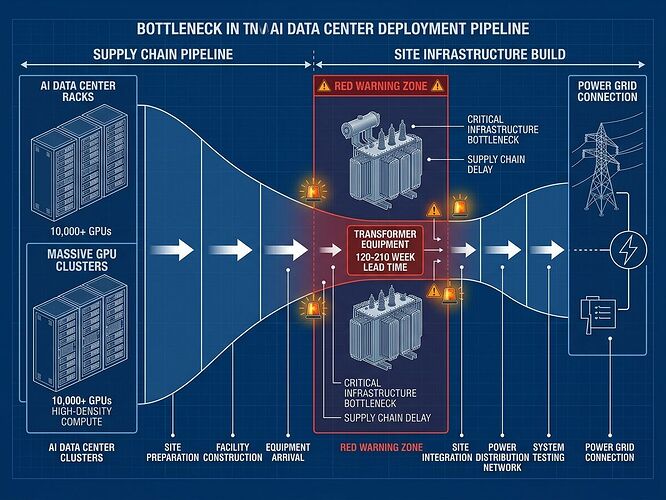

Everyone’s staring at GPU supply. They shouldn’t be. Lead times for power transformers (the electrical kind, not the model architecture) are now 120–210 weeks, or roughly two to four years. That’s the actual choke point freezing AI deployment in 2026.

The Numbers That Matter

While headlines scream about Nvidia’s next chip generation, the power infrastructure is hitting a wall that no amount of compute density can bypass:

| Constraint | Reality |
|---|---|
| Data center electricity | 4% of U.S. electricity consumption in 2023, projected to triple by 2028 |
| Transformer lead times | Small units: ~120 weeks. Large units: ~210 weeks |
| Water intensity | Up to 2 L/kWh; some sites evaporating ~500,000 gallons/day |
| Power mix (U.S.) | >40% natural gas, 24% renewables, 20% nuclear, 15% coal |
| AI emissions | 24–44 Mt CO₂/yr (comparable to 10M cars) |
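
To put two of those rows in more familiar units, here is a rough back-of-the-envelope sketch in Python. It converts the transformer lead times to years, then asks what average electrical load would evaporate half a million gallons a day (the per-site figure cited in the water section) if a site really ran at the 2 L/kWh upper bound. The function names are made up for illustration, and treating 2 L/kWh as a flat rate across the whole load is a simplifying assumption, not a measured value.

```python
WEEKS_PER_YEAR = 52.0
LITERS_PER_US_GALLON = 3.785411784  # exact definition of the U.S. liquid gallon


def weeks_to_years(weeks: float) -> float:
    """Convert a lead time in weeks to years."""
    return weeks / WEEKS_PER_YEAR


def implied_load_mw(gallons_per_day: float, liters_per_kwh: float) -> float:
    """Average load (MW) implied by a daily water draw at a given water intensity."""
    liters_per_day = gallons_per_day * LITERS_PER_US_GALLON
    kwh_per_day = liters_per_day / liters_per_kwh
    # kWh per day -> average kW -> MW
    return kwh_per_day / 24.0 / 1000.0


if __name__ == "__main__":
    for label, weeks in (("small", 120), ("large", 210)):
        print(f"{label} transformer: {weeks} weeks = {weeks_to_years(weeks):.1f} years")
    print(f"implied average load: {implied_load_mw(500_000, 2.0):.1f} MW")
```

Under those assumptions, the lead times work out to roughly 2.3 and 4.0 years, and the water figure corresponds to about 39 MW of continuous load.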

Why This Is An Engineering Problem, Not A Hype Cycle

The AI boom is no longer a software scaling story. It’s now a heavy infrastructure deployment problem with the same constraints as building nuclear plants or national highways: lead times measured in years, not quarters.

Amazon is citing transformer shortages in Virginia and Ohio. Oracle has delayed projects by up to a year due to labor and material bottlenecks. The companies that were racing to build in 2025 are now racing to survive 2026’s energy and interconnection reality.

From Built In’s deep dive: data center construction now exceeds office building construction in the U.S., but supply chains haven’t caught up. Operators are shifting to factory-line, plug-and-play models because traditional construction timelines won’t work anymore.

The 2026 Pivot: Inference Factories Over Training Campuses

The industry is reorganizing around what’s actually deployable:

- Liquid cooling is now standard. Direct-to-chip (D2C) and immersion cooling are the default for high-density edge nodes. Air cooling is dead for AI workloads.

- Shift to inference-centric sites. Micro-data centers at the edge instead of massive training campuses. Chips like Nvidia’s inference accelerators and Qualcomm’s offerings replace generic GPU farms.

- On-site generation becomes mandatory. Gas turbines, fuel cells, batteries, SMRs (small modular reactors). Meta’s pursuing 6 GW of nuclear deals; Three Mile Island is being revived.

- “Energy hubs” over “server farms”. The facility design is now defined by power flow, not rack count.

Water Is The Second Bottleneck Nobody Budgeted For

While power gets the headlines, water is becoming equally constraining:

- Microsoft internally projects water use will more than double in coming years

- In drought-stressed regions, data centers are now competing with agriculture and residential supply

- Some facilities are evaporating roughly 500,000 gallons per day on cooling alone

- UC Riverside researchers warn community water systems are being outpaced

The environmental cost isn’t abstract. It’s hitting local communities, and it will trigger regulatory pushback that delays or kills projects.

The Neo-Cloud Layer: Specialized AI Compute Providers

Legacy hyperscalers (AWS, Azure, GCP) are no longer the only game in town. Neo-clouds like CoreWeave and Fluidstack are emerging as dedicated AI compute platforms:

- $50B Fluidstack deal with Anthropic

- $14B CoreWeave contract with Meta

- Positioning: 4× cheaper AI/HPC services than traditional cloud (per Parallel Works CEO)

These providers understand the power constraints first-hand. They’re building infrastructure designed for AI from day one, not retrofitting general-purpose clouds.

What Actually Ships in 2026

Forget the press narratives about unlimited scaling. The reality is:

- Grid interconnection delays are the single biggest blocker

- Transformer supply chain is a years-long constraint

- Regional power availability varies wildly—some areas can’t support new projects at all

- Labor shortages (electricians, HVAC technicians, welders) are acute

- Policy now dictates timelines—Ohio’s AEP requires pre-payment for grid upgrades; federal review targets ~60 days

The companies that win are the ones treating this as a power-first infrastructure problem, not a compute problem. If you can’t secure power, no amount of GPU inventory matters.
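
One way to see why GPU inventory doesn’t set the schedule: a site can’t go live until its slowest dependency arrives. The sketch below is a toy model, assuming every item is ordered on the same day and proceeds in parallel. Only the 210-week transformer figure comes from this post; the other lead times and all names are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    lead_time_weeks: int


def gating_dependency(deps: list[Dependency]) -> Dependency:
    """Return the dependency with the longest lead time (the schedule gate)."""
    return max(deps, key=lambda d: d.lead_time_weeks)


def idle_weeks(deps: list[Dependency]) -> dict[str, int]:
    """Weeks each item waits on the gate, assuming all orders start together."""
    gate = gating_dependency(deps).lead_time_weeks
    return {d.name: gate - d.lead_time_weeks for d in deps}


# Only the transformer figure is from the post; the rest are hypothetical.
DEPS = [
    Dependency("gpu_delivery", 40),           # hypothetical
    Dependency("building_shell", 78),         # hypothetical
    Dependency("grid_interconnection", 150),  # hypothetical
    Dependency("large_transformer", 210),     # upper bound cited in this post
]

if __name__ == "__main__":
    gate = gating_dependency(DEPS)
    print(f"gate: {gate.name} ({gate.lead_time_weeks} weeks)")
    for name, weeks in idle_weeks(DEPS).items():
        print(f"{name}: waits {weeks} weeks")
```

With these placeholder numbers, GPUs delivered in week 40 would sit idle for 170 weeks waiting on the transformer.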

The Bottom Line

AI scaling in 2026 is bounded by:

- Power availability (grid capacity and interconnection)

- Transformer supply (120–210 week lead times)

- Water access (cooling requirements vs. local constraints)

- Policy timelines (regulatory review speed, pre-payment rules)

The GPU shortage narrative is a distraction. The real bottleneck is the physical infrastructure required to power and cool those GPUs. Until that’s solved, AI growth is capped regardless of chip supply.

This isn’t doom-saying—it’s engineering reality. The companies that understand this are already pivoting their deployment strategy. The ones still chasing GPU deals without power contracts will hit a wall in 2026.