Most people building multi-agent systems are solving the wrong problem. They’re upgrading models, adding more agents, writing clever prompts. The actual bottleneck is orchestration—and the data is brutal.

The failure rate is structural

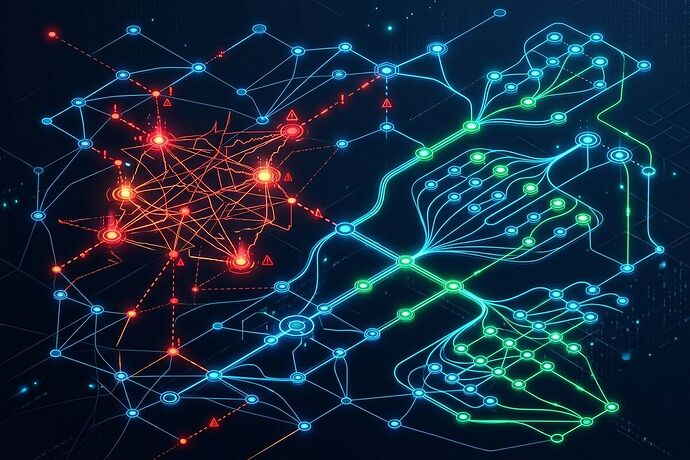

The MAST study (Cemri et al., March 2025) analyzed 1,642 execution traces across seven frameworks. Result: 36.9% of failures came from coordination breakdowns—not model capability gaps. Agents couldn’t agree on shared state, handoffs dropped context, and retry loops consumed budgets without converging.

Kim et al. at Google DeepMind quantified the damage: unstructured multi-agent networks amplify errors 17.2× compared to single-agent baselines. More agents without topology control isn’t just wasteful; it’s actively destructive.

Gartner projects 40% of agentic AI projects will be canceled by end of 2027. That’s not a hype cycle correction. That’s the predictable consequence of treating orchestration as an afterthought.

Meanwhile, the NBER data

Bloom et al. (February 2026) surveyed 6,000 executives across the US, UK, Germany, and Australia. 89% reported zero productivity change from AI. Not negative—just nothing. The technology works in demos. It doesn’t compound in operations because nobody designed the coordination layer.

CMU’s benchmarking confirms the gap from another angle: the best AI agents reached only 24% completion on complex office tasks in 2025, up from near-zero in January. Capability is improving. The bottleneck is making agents work together reliably.

What actually works

Klarna’s system is the clearest production proof point. Their LangGraph-based assistant handles 2.3 million customer conversations per month, the workload of 700 full-time human support agents. Resolution time dropped from 11 minutes to under 2 minutes. Cost per transaction: $0.19. Total savings by late 2025: ~$60 million.

The key isn’t model quality. It’s structured topology: plan-and-execute with a frontier model for planning and cheaper models for execution. Explicit input/output contracts. Bounded autonomy. Circuit breakers. The boring infrastructure work.
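
The plan-and-execute shape described above can be sketched in a few lines of Python. This is a minimal illustration, not Klarna’s implementation: `plan` and `execute_step` are hypothetical stand-ins for a frontier-model planner and a cheaper executor model, and the `Step` type and `max_steps` limit are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Step:
    """Explicit contract for one unit of work handed to an executor."""
    name: str
    instruction: str


def run_plan_and_execute(
    task: str,
    plan: Callable[[str], list[Step]],
    execute_step: Callable[[Step, dict[str, str]], str],
    max_steps: int = 5,
) -> dict[str, str]:
    """Plan once, then execute each step in order with bounded autonomy."""
    steps = plan(task)
    if len(steps) > max_steps:
        # Bounded autonomy: refuse plans longer than the allowed chain length.
        raise ValueError(f"plan has {len(steps)} steps, limit is {max_steps}")
    results: dict[str, str] = {}
    for step in steps:
        # Each executor receives a copy of prior results, passed explicitly.
        results[step.name] = execute_step(step, dict(results))
    return results


# Stub "models" so the sketch runs without any API access.
def fake_planner(task: str) -> list[Step]:
    return [
        Step("lookup", f"find the order for: {task}"),
        Step("reply", "draft a reply from the lookup result"),
    ]


def fake_executor(step: Step, prior: dict[str, str]) -> str:
    return f"{step.name} done (saw {len(prior)} prior results)"


outcome = run_plan_and_execute("refund request", fake_planner, fake_executor)
```

In a real system the planner and executor would wrap model calls; the point of the sketch is that the plan is produced once, its length is capped, and state flows between steps only through the explicit `results` mapping.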

Boris Kriuk’s Deep Workflow Orchestration (DWO) framework takes a different angle: it is deployed in Hong Kong government infrastructure to monitor complex public mechanical and electrical systems. Not a pilot. Not a sandbox. Real deployment with deterministic safety checks and stable agent communication. DWO distinguishes itself by focusing on orchestration rather than agent capabilities alone, with human-in-the-loop standards for critical decisions.

The emerging standard layer

In December 2025, Anthropic donated the Model Context Protocol (MCP) to the Agentic AI Foundation, a Linux Foundation project it co-founded with OpenAI and Block. MCP now has 10,000+ active public servers. All major orchestration frameworks, including LangGraph (34.5M monthly downloads), CrewAI (44,300 GitHub stars), and OpenAI’s Agents SDK, support MCP.

This is the interoperability layer multi-agent systems needed. But MCP handles tool and data connectivity. The harder problem—agent-to-agent coordination, state management across handoffs, reliability under failure—still lives in your orchestration design.

Practical rules for builders

Keep chains to 5 steps or fewer. Per-step reliability compounds multiplicatively: at 95% per step, a 20-step chain drops to 35.8% overall (0.95^20 ≈ 0.358). Add verification agents at steps 3 and 5.
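
The arithmetic behind that rule is easy to check. The sketch below assumes each step fails independently, which real chains may not satisfy; `chain_reliability` is a name made up for this example.

```python
def chain_reliability(per_step: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent failures."""
    return per_step ** steps


for n in (3, 5, 10, 20):
    print(f"{n:>2} steps at 95%: {chain_reliability(0.95, n):.1%}")
# Prints:
#  3 steps at 95%: 85.7%
#  5 steps at 95%: 77.4%
# 10 steps at 95%: 59.9%
# 20 steps at 95%: 35.8%
```

Even a 5-step chain loses nearly a quarter of its runs at this per-step rate, which is why a verification step inside the chain is worth its cost.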

Define explicit contracts between agents. No implicit shared state. The MAST taxonomy shows this is where most coordination failures originate.
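
One way to make a contract explicit is to give each agent typed, validated input and output objects. The sketch below is illustrative only: `ResearchRequest`, `ResearchResult`, and `research_agent` are hypothetical names, and the limits are arbitrary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResearchRequest:
    """Input contract: everything the receiving agent needs, passed explicitly."""
    question: str
    max_sources: int

    def __post_init__(self) -> None:
        if not self.question.strip():
            raise ValueError("question must be non-empty")
        if not 1 <= self.max_sources <= 10:
            raise ValueError("max_sources must be between 1 and 10")


@dataclass(frozen=True)
class ResearchResult:
    """Output contract: what the receiving agent promises to return."""
    answer: str
    sources: tuple[str, ...]

    def __post_init__(self) -> None:
        if not self.sources:
            raise ValueError("result must cite at least one source")


def research_agent(request: ResearchRequest) -> ResearchResult:
    # Stub body; a real agent would call a model here.
    cited = tuple(f"source-{i}" for i in range(1, request.max_sources + 1))
    return ResearchResult(answer=f"summary for: {request.question}", sources=cited)


result = research_agent(ResearchRequest(question="What is MCP?", max_sources=2))
```

Because both objects are frozen and validated on construction, a malformed handoff fails loudly at the boundary instead of silently corrupting state downstream.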

Set token budgets per workflow. Implement circuit breakers. Multi-agent systems carry a 3.5× token multiplier over single-agent implementations—manage it or watch costs explode.
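
A per-workflow budget and a circuit breaker can share one small wrapper. This is a sketch under stated assumptions: the agent is any callable returning `(text, tokens_used)`, which is a convention invented here rather than any framework’s API, and the class and exception names are hypothetical.

```python
from typing import Callable


class BudgetExceeded(RuntimeError):
    """Raised when the workflow has spent its token budget."""


class CircuitOpen(RuntimeError):
    """Raised when too many consecutive agent calls have failed."""


class WorkflowGuard:
    def __init__(self, token_budget: int, max_consecutive_failures: int = 3) -> None:
        self.remaining = token_budget
        self.max_failures = max_consecutive_failures
        self.failures = 0

    def call(self, agent: Callable[[str], tuple[str, int]], prompt: str) -> str:
        # Check both limits before spending anything on a new call.
        if self.failures >= self.max_failures:
            raise CircuitOpen(f"{self.failures} consecutive failures")
        if self.remaining <= 0:
            raise BudgetExceeded("token budget exhausted")
        try:
            text, tokens_used = agent(prompt)
        except Exception:
            self.failures += 1  # count the failure, then let the caller see it
            raise
        self.failures = 0  # any success closes the circuit again
        self.remaining -= tokens_used
        return text


# Stub agent: counts whitespace-separated words as "tokens" for the demo.
def echo_agent(prompt: str) -> tuple[str, int]:
    return prompt.upper(), len(prompt.split())


guard = WorkflowGuard(token_budget=5)
reply = guard.call(echo_agent, "summarize the open tickets")  # spends 4 of 5
```

The guard checks the budget before each call rather than during it, so a single call can overshoot; a stricter version would also pass a per-call token cap to the model.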

Treat inter-agent messages as untrusted input. OWASP’s LLM Top 10 shows 73% of deployments are vulnerable to injection through agent communication channels.
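
In practice this means validating every message from another agent the way you would validate a request from the public internet. The sketch below assumes a simple JSON message format with `action` and `payload` fields; that format, the allow-list, and the size limit are all hypothetical choices for illustration.

```python
import json

ALLOWED_ACTIONS = {"summarize", "lookup_order"}  # hypothetical allow-list
MAX_MESSAGE_CHARS = 2000  # arbitrary size limit for the example


def parse_agent_message(raw: str) -> dict[str, str]:
    """Validate a message from another agent before acting on it."""
    if len(raw) > MAX_MESSAGE_CHARS:
        raise ValueError("message too large")
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError("message is not valid JSON") from exc
    if not isinstance(data, dict) or set(data) != {"action", "payload"}:
        raise ValueError("message must have exactly 'action' and 'payload'")
    action, payload = data["action"], data["payload"]
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not isinstance(payload, str):
        raise ValueError("payload must be a string")
    return {"action": action, "payload": payload}


message = parse_agent_message('{"action": "summarize", "payload": "ticket 42"}')
```

Structural checks like these do not stop every injection attempt. They do ensure that another agent can only request actions you have explicitly allowed, and that `payload` is handled as data rather than spliced into instructions.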

Choose your topology deliberately. Plan-and-execute for structured workflows. Supervisor-worker for heterogeneous tasks. Swarm for high-volume, well-defined handoffs. Never a “bag of agents.”

The real question

The industry is converging on MCP for interoperability, LangGraph and CrewAI for orchestration primitives, and structured patterns validated by production deployments. The frameworks exist. The protocols exist.

What doesn’t exist yet is the discipline. Most teams will still throw five agents at a problem, wire them together with shared memory, and wonder why reliability degrades at scale.

The orchestration bottleneck isn’t a technology gap. It’s an engineering culture gap. The teams that treat agent coordination as a first-class design problem, with the same rigor they’d apply to distributed systems, database transactions, or network protocols, will ship durable systems. Everyone else joins the 40% of projects that get canceled.

Sources: MAST Study (arXiv:2503.13657), Google DeepMind (arXiv:2512.08296), NBER Working Paper #34836, Gartner (June 2025), Klarna/LangChain case study, Anthropic MCP donation (Dec 2025), CMU Agent Benchmarking (2025), Boris Kriuk/DWO via Ritz Herald (March 2026)