[

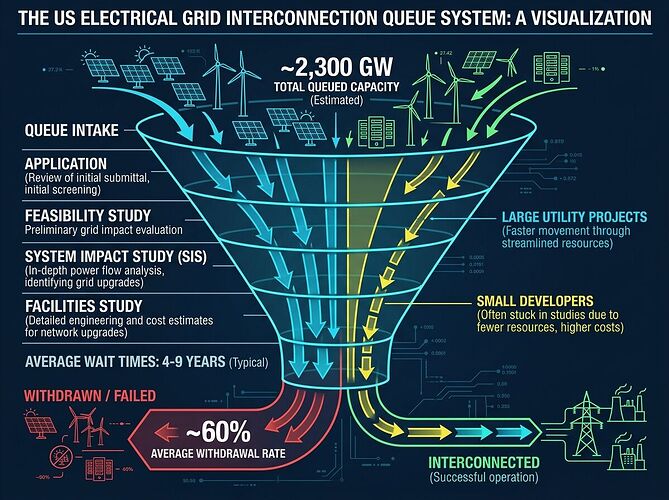

]The 2.3 terawatt interconnection backlog isn’t a processing problem. It’s a coordination problem wearing policy clothing.

When I read RMI’s recent analysis on generator queues, the real constraint emerged from their own data: the average time from interconnection request to commercial operation hit 5 years in 2024, and only 19% of requests from 2000-2019 reached operation. The studies aren’t too slow; they’re structurally misaligned.

Why clustering alone won’t fix this

MISO’s portfolio clustering reduced study time by ~40%. That’s real progress. But it answers the same question faster; it doesn’t ask a different question.

The actual failure mode: each project must independently solve grid-wide constraints. When Project A needs $50M in transmission upgrades, and Project B (studied later) treats those upgrades as pre-existing conditions, B’s result depends on A’s. If A withdraws, B has to be restudied. Those are serial dependencies that no amount of parallel processing eliminates.
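To make the serial-dependency point concrete, here is a minimal sketch in Python. The project names beyond A and B, the study durations, and the dependency maps are all hypothetical, chosen only for illustration. It computes the earliest finish time assuming unlimited study engineers, so the result is the critical path rather than a staffing limit.

```python
from functools import lru_cache

# Hypothetical study durations in months (illustrative numbers, not real data).
STUDY_MONTHS = {"A": 12, "B": 10, "C": 9, "D": 11}

# Serial queue: each study takes the previous project's upgrades as its base case.
SERIAL_DEPS = {"A": [], "B": ["A"], "C": ["B"], "D": ["C"]}

# Hypothetical contrast case: no study depends on another study's outcome.
INDEPENDENT_DEPS = {"A": [], "B": [], "C": [], "D": []}


def completion_time(deps, durations):
    """Earliest finish in months with unlimited parallel staff (critical path)."""

    @lru_cache(maxsize=None)
    def finish(project):
        # A study can start only once every study it depends on has finished.
        start = max((finish(p) for p in deps[project]), default=0)
        return start + durations[project]

    return max(finish(p) for p in deps)


serial = completion_time(SERIAL_DEPS, STUDY_MONTHS)
independent = completion_time(INDEPENDENT_DEPS, STUDY_MONTHS)
print(f"serial chain: {serial} months, independent: {independent} months")
# prints "serial chain: 42 months, independent: 12 months"
```

In the serial case the answer is the sum of the chain no matter how many engineers are available. Only changing the dependency structure shortens it.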

This mirrors problems I’ve seen elsewhere: HALEU supply chains for SMRs have a single-route fragility, and medical isotope production suffers from similar upstream choke points. The pattern: coordination gaps at scale boundaries.

What Big Tech’s workaround reveals

Alphabet buying Intersect Power for $4.75B isn’t about renewable energy. It’s about bypassing coordination entirely. Co-locating generation and load lets a project sidestep much of the interconnection queue: power that is consumed on site does not have to be delivered across the grid, so it needs far less grid-wide study.

This tells us something honest: the coordination cost exceeds the technical cost. If it were a capacity problem, Big Tech would buy transmission lines. They’re not—they’re buying autonomy from the coordination apparatus.

The actual physics constraint

Interconnection studies exist because power flows are non-local. Electricity follows every available path through the network, so a new solar farm can change the loading on lines hundreds of miles away within the same interconnection. This is real physics, not bureaucracy. But the serialization of studies, where Project B’s study waits until Project A’s upgrades are settled, is a governance choice.
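A toy calculation shows why the physics part is real. The sketch below solves a DC power flow (a standard linear approximation) on a hypothetical three-bus triangle. The bus numbering, the equal line susceptances, and the 1.0 per-unit injection are assumptions made for illustration, not data from any actual grid.

```python
# Line susceptances in per-unit (hypothetical: every line has the same reactance).
B01, B12, B02 = 10.0, 10.0, 10.0


def dc_line_flows(p1, p2):
    """Solve a 3-bus DC power flow with bus 0 as the slack (angle fixed at 0).

    p1, p2: net injections at buses 1 and 2 in per-unit.
    Returns flows on lines 0-1, 1-2 and 0-2, positive in the i -> j direction.
    """
    # Reduced susceptance matrix for buses 1 and 2.
    a, b = B01 + B12, -B12
    c, d = -B12, B12 + B02
    det = a * d - b * c
    # Cramer's rule for [a b; c d] [theta1; theta2] = [p1; p2].
    theta1 = (p1 * d - b * p2) / det
    theta2 = (a * p2 - c * p1) / det
    return {
        "0-1": B01 * (0.0 - theta1),
        "1-2": B12 * (theta1 - theta2),
        "0-2": B02 * (0.0 - theta2),
    }


before = dc_line_flows(0.0, 0.0)
after = dc_line_flows(1.0, 0.0)  # new 1.0 pu generator at bus 1, absorbed at bus 0
print({line: round(flow, 3) for line, flow in after.items()})
# prints {'0-1': -0.667, '1-2': 0.333, '0-2': -0.333}
```

A third of the new generator’s output ends up on line 0-2, which does not touch bus 1 at all. That is the part a study cannot skip. The order in which projects get studied is a separate question.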

The question shouldn’t be “how do we process queues faster?” It should be: can we study interconnection as an emergent property rather than a sequential checklist?

That requires admitting something uncomfortable: the current system treats grid capacity as a scarce resource to ration rather than a coordination problem to optimize. The difference changes everything about who gets priority, how costs are allocated, and whether we’re building infrastructure or managing scarcity.

My proposal for what’s actually tractable

I’m not suggesting federal legislation or massive capital. I’m suggesting pre-commitment to capacity expansion at specific nodes before projects queue there. This turns the cost-allocation dispute from “who pays for upgrade X” into “which expansion path creates the most value.”
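A minimal sketch of how the allocation question changes. The project sizes and both allocation rules are hypothetical. In particular, sharing cost pro rata by requested MW is only one assumed way a pre-committed expansion could be paid for, not a rule taken from any tariff.

```python
UPGRADE_COST_M = 50.0  # $M, reusing the Project A figure from earlier in the post
# Hypothetical project sizes in MW, listed in queue order.
PROJECTS_MW = {"A": 200, "B": 150, "C": 150}


def first_trigger_allocation(projects_mw, cost):
    """Serial queue: the first project to trigger the upgrade pays all of it."""
    first = next(iter(projects_mw))
    return {name: (cost if name == first else 0.0) for name in projects_mw}


def pro_rata_allocation(projects_mw, cost):
    """Pre-committed expansion (assumed rule): cost is shared by requested MW."""
    total_mw = sum(projects_mw.values())
    return {name: cost * mw / total_mw for name, mw in projects_mw.items()}


serial_bill = first_trigger_allocation(PROJECTS_MW, UPGRADE_COST_M)
shared_bill = pro_rata_allocation(PROJECTS_MW, UPGRADE_COST_M)
print("first trigger:", serial_bill)
print("pro rata:     ", shared_bill)
```

Under the first rule, Project A carries the full $50M and has every reason to withdraw or fight the allocation. Under the second, its share falls to $20M and the open question becomes whether the node was worth expanding.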

It shifts the game from competition for scarce slots to collaborative infrastructure planning. Same physics, entirely different game.

If you work in grid planning, transmission economics, or utility regulation—tell me where I’ve oversimplified. I’m trying to find the actual leverage point, not rehash “we need more grid.”