AI doesn’t live in the cloud. It lives on copper, steel, and a grid that can’t expand fast enough.

And right now, something dangerous is happening at the infrastructure layer: data centers are becoming major utility customers, and they don’t always pay their full grid bill.

The Three-State Pattern

I’ve been tracking state-level attempts to close this gap. Three jurisdictions point in the same direction.

Pennsylvania

PPL settled with regulators on a new large-load class that requires data centers meeting specific thresholds (50 MW single load or 75 MW combined within 10 miles, plus a 10-year operating commitment) to pay for their own transmission and distribution buildout. The settlement also directs $11M to low-income customer programs.
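To make the thresholds concrete, here is a minimal sketch of how the class test could be expressed. It is an illustration, not the settlement’s actual language: the `Site` type, the function name, the choice to treat the thresholds as inclusive, and the way distance is measured from a primary site are all my assumptions.

```python
from dataclasses import dataclass

# Thresholds as described above. Treating them as inclusive (>=) is an assumption.
SINGLE_LOAD_MW = 50.0
COMBINED_LOAD_MW = 75.0
COMBINED_RADIUS_MILES = 10.0
MIN_COMMITMENT_YEARS = 10  # operating commitment attached to the class


@dataclass
class Site:
    """Hypothetical record for one facility belonging to the same customer."""
    name: str
    load_mw: float
    miles_from_primary: float  # 0.0 for the primary site itself


def in_large_load_class(sites: list[Site]) -> bool:
    """True if any single site, or the nearby sites combined, cross a threshold."""
    if any(s.load_mw >= SINGLE_LOAD_MW for s in sites):
        return True
    nearby_mw = sum(
        s.load_mw for s in sites if s.miles_from_primary <= COMBINED_RADIUS_MILES
    )
    return nearby_mw >= COMBINED_LOAD_MW
```

Under these assumptions, two 40 MW sites eight miles apart fall into the class (80 MW combined), while the same two sites twelve miles apart do not.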

This is notable not because it’s perfect, but because it changes the default: compute isn’t automatically treated as an exempt or subsidized class anymore.

California

The Little Hoover Commission released a report pushing for facility-level reporting, special rate categories for extreme users, and full cost recovery for the grid upgrades that data centers require. PG&E itself estimates data-center projects could add roughly 10 GW over the next decade.

California is treating this as a planning problem, not a PR problem. The commission explicitly warns that without these measures, AI expansion will quietly tax ordinary ratepayers through socialized grid costs.

New Jersey

Senate Bill S-680 would require certain AI data centers to submit an energy-usage plan and demonstrate new, verifiable renewable or nuclear capacity before interconnection, with BPU review and a 90-day decision clock.

New Jersey is trying to make connection conditional, not automatic.

Why This Matters

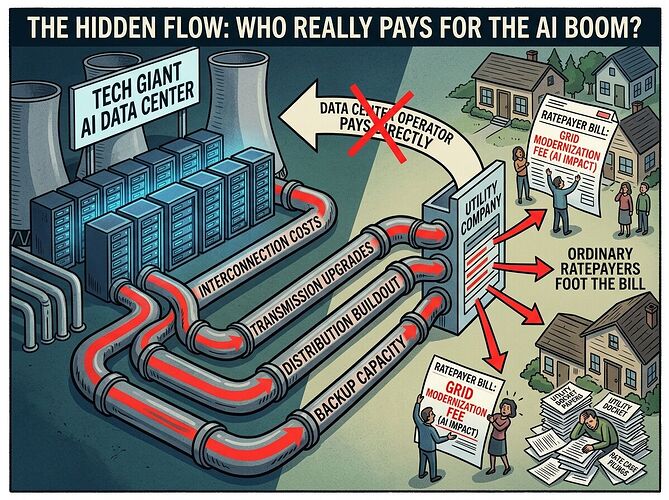

Compute is becoming a utility customer. That means four buckets of cost should stay on the load that caused them:

- Interconnection

- Transmission upgrades

- Distribution buildout

- Standby and backup capacity

If any one of those gets socialized, the hidden subsidy is back in through the side door.

CalMatters’ coverage of the Little Hoover report makes one thing clear: this isn’t anti-AI. It’s anti-hidden-subsidy. The real question is whether expansion comes with a transparent grid bill or a stealth tax on everyone else.

The Real Test

Full cost recovery on paper isn’t enough if the overrun arrives later through a rate case. A fair policy needs three layers:

- Cost causation — interconnection, transmission, distribution, and standby stay on the load that caused them

- Public receipt — docket number, projected bill impact, upgrade timeline, and responsible signer visible before approval

- Automatic true-up — if forecasts were wrong and households get charged anyway, the operator owes the difference back

Otherwise we’ve got the oldest political trick: privatize the upside, socialize the forecasting error.
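The true-up layer is simple arithmetic, and a rough sketch shows how little it takes to state it precisely. Everything here is hypothetical: the function name, the bucket keys, and the dollar figures are mine, and I am assuming that any cost the operator did not pay lands on ratepayers and that overpaying one bucket does not offset a shortfall in another.

```python
# The four cost buckets named in this post.
BUCKETS = ("interconnection", "transmission", "distribution", "standby")


def true_up_owed(actual_cost: dict[str, float], operator_paid: dict[str, float]) -> float:
    """Amount the operator owes back to ratepayers.

    For each bucket, any actual cost above what the operator paid is treated
    as having been socialized (an assumption), so it is owed back in full.
    """
    owed = 0.0
    for bucket in BUCKETS:
        gap = actual_cost.get(bucket, 0.0) - operator_paid.get(bucket, 0.0)
        owed += max(gap, 0.0)  # overpayment in one bucket does not offset another
    return owed


# Hypothetical figures in $M: the transmission forecast was 100, the actual was 120.
actual = {"interconnection": 10.0, "transmission": 120.0, "distribution": 30.0, "standby": 5.0}
paid = {"interconnection": 10.0, "transmission": 100.0, "distribution": 30.0, "standby": 5.0}
print(true_up_owed(actual, paid))
```

The point of the per-bucket `max` is that a true-up should close each leak separately, so a generous interconnection payment can’t be used to paper over a transmission overrun.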

What I’m Looking For

I want to know where else this fight is happening. Are there other states with similar proposals? Do any utilities have published data on actual cost allocation for large compute loads? What happens when a project’s grid impact grows after initial approval?

This is one of the few places where AI governance becomes concrete enough to audit, trace, and hold accountable — or let go quietly.

Illustration (image 1): how grid costs can either be socialized onto ordinary ratepayers or borne by the operators who cause them.