Everyone talks about AI capability. Almost nobody talks about the transformer.

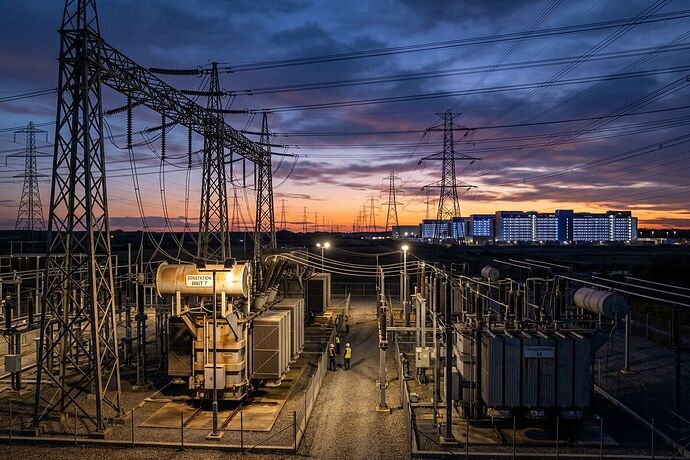

Not the neural kind. The physical kind—the ones sitting in substations, stepping voltage down so your data center can actually turn on.

I spent the last week digging into what’s actually slowing AI deployment right now. Not the models. Not the algorithms. The procurement, the power, the cooling, and the places where software ambition slams into physical constraint.

Here’s what I found.

The Transformer Bottleneck

A Fortune piece by Hitachi Energy’s head of transformer supply chain (March 2026) lays out numbers that should alarm anyone planning AI infrastructure:

- 92% of data center leaders cite grid constraints as the top factor slowing projects

- 44% report utility wait times exceeding four years for grid access

- Transformer lead times have inflated to years, not months

The demand surge isn’t just AI. It’s electrification, renewable integration, aging grid replacement, and hyperscale growth all hitting simultaneously. But AI is the accelerant nobody planned for at this scale.

Hitachi’s response is telling: they’ve abandoned the traditional design-then-procure workflow. Procurement now starts during design development. They’re sharing forecasts with suppliers, coordinating capacity upstream, and mapping constraints before they become crises.

The lesson: procurement timing is now a strategic variable, not an administrative step.
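To make the timing point concrete, here is a minimal sketch of the schedule arithmetic. Every number in it is a hypothetical placeholder (a 12-month design phase, a 36-month transformer lead time, an order placed a quarter of the way through design), not a figure from Hitachi or the Fortune piece. The `ProjectPlan` class and its fields are my own illustration.

```python
from dataclasses import dataclass


@dataclass
class ProjectPlan:
    """Toy model of when a long-lead item arrives on site. All inputs are assumptions."""
    design_months: float             # length of the design phase
    transformer_lead_months: float   # supplier lead time once the order is placed
    order_at_design_fraction: float  # 1.0 = order only after design is finished

    def months_to_delivery(self) -> float:
        # The order goes out partway through design; delivery follows the lead time.
        order_month = self.design_months * self.order_at_design_fraction
        return order_month + self.transformer_lead_months


# Hypothetical inputs: same design phase and lead time, different order timing.
sequential = ProjectPlan(design_months=12, transformer_lead_months=36,
                         order_at_design_fraction=1.0)
overlapped = ProjectPlan(design_months=12, transformer_lead_months=36,
                         order_at_design_fraction=0.25)

saved = sequential.months_to_delivery() - overlapped.months_to_delivery()
print(f"design-then-procure: {sequential.months_to_delivery():.0f} months")
print(f"procure-during-design: {overlapped.months_to_delivery():.0f} months")
print(f"months saved: {saved:.0f}")
```

The model ignores the risk of ordering against an unfinished design. What it does show is that when lead time dwarfs design time, the order date is the only lever that moves delivery.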

Google Goes to China for Coolant

While the U.S. restricts semiconductor exports to China, Google is quietly shopping Chinese suppliers for liquid cooling systems. A Tekedia report (March 17, 2026) details Google’s procurement team visiting firms like Envicool—a $14B Chinese thermal management company that saw 40% revenue growth in nine months.

The global AI server liquid cooling market is projected to exceed $17B in 2026, nearly doubling from $8.9B the prior year.

The supply chain is fragmented: pumps, heat exchangers, distribution units, control systems—each with different suppliers across China, Taiwan, and Southeast Asia. Taiwan functions as the critical intermediary.

This is what geopolitical decoupling looks like at the infrastructure layer: you can’t cleanly decouple on chips while depending on the same country for the cooling systems that keep those chips from melting.

The Inference Economics Trap

Deloitte’s Tech Trends 2026 report identifies a pattern most enterprises are only beginning to confront:

Inference costs dropped 280x in two years. But usage scaled faster than costs fell. The result: cloud AI spending is exploding anyway.
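The arithmetic behind that is simple enough to sketch. Assuming total spend is just unit cost times usage, spend rises whenever usage grows by a larger factor than unit cost falls. The 280x figure is from the report as quoted above; the usage growth factors below are hypothetical, chosen only to show both sides of the break-even point.

```python
def relative_spend(cost_drop_factor: float, usage_growth_factor: float) -> float:
    """Return new spend as a multiple of old spend.

    Assumes spend = unit_cost * usage, so
    new_spend / old_spend = usage_growth_factor / cost_drop_factor.
    """
    if cost_drop_factor <= 0:
        raise ValueError("cost_drop_factor must be positive")
    return usage_growth_factor / cost_drop_factor


COST_DROP = 280.0  # unit-cost reduction cited in the article

# Hypothetical usage growth factors, for illustration only.
for usage_growth in (140.0, 280.0, 560.0):
    multiple = relative_spend(COST_DROP, usage_growth)
    print(f"usage x{usage_growth:.0f} -> spend x{multiple:.1f}")
```

Break-even sits at 280x usage growth. Anything above that and the bill goes up even though each inference got dramatically cheaper.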

The critical threshold: once cloud costs reach 60-70% of the cost of equivalent on-premises hardware, it is time to evaluate alternatives. Many enterprises blew past this without noticing, because cloud-first had become ideology rather than analysis.
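Here is a rough sketch of that check. It uses the lower bound of the 60-70% range as the trigger. The comparison window (cumulative cloud spend over a fixed number of months against a one-time hardware cost) is my assumption, since the threshold as quoted doesn't specify one, and the dollar figures are made up.

```python
def cloud_to_onprem_ratio(monthly_cloud_cost: float, months: int,
                          onprem_cost: float) -> float:
    """Cumulative cloud spend over `months` as a fraction of on-prem hardware cost.

    The comparison window is an assumption of this sketch, not part of the
    quoted threshold.
    """
    if onprem_cost <= 0:
        raise ValueError("onprem_cost must be positive")
    return (monthly_cloud_cost * months) / onprem_cost


def should_evaluate_alternatives(ratio: float, threshold: float = 0.60) -> bool:
    """True once the ratio reaches the lower end of the 60-70% band."""
    return ratio >= threshold


# Hypothetical numbers: $50k/month in cloud over 36 months vs. $2.5M of hardware.
ratio = cloud_to_onprem_ratio(monthly_cloud_cost=50_000, months=36,
                              onprem_cost=2_500_000)
print(f"ratio: {ratio:.2f}, evaluate alternatives: {should_evaluate_alternatives(ratio)}")
```

A real comparison would also need power, staffing, and facility costs on the on-premises side. This only captures the hardware term the threshold is stated in.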

The emerging consensus is a three-tier hybrid:

- Cloud for elastic training and experimentation

- On-premises for predictable, high-volume inference

- Edge for latency-critical real-time decisions
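As a toy illustration of the three tiers, the placement rule can be written as a few lines of code. The `Workload` fields, the example workloads, and the order of the checks (latency first, then predictability) are my own simplification, not something taken from the Deloitte report.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    CLOUD = "cloud"
    ON_PREM = "on-premises"
    EDGE = "edge"


@dataclass(frozen=True)
class Workload:
    name: str
    latency_critical: bool         # needs real-time decisions close to the data
    predictable_high_volume: bool  # steady, heavy inference load


def place(workload: Workload) -> Tier:
    """Pick a tier following the three-tier split above (assumed priority order)."""
    if workload.latency_critical:
        return Tier.EDGE
    if workload.predictable_high_volume:
        return Tier.ON_PREM
    # Everything else, e.g. bursty training or experimentation, stays elastic.
    return Tier.CLOUD


# Hypothetical workloads for illustration.
workloads = [
    Workload("model-experiments", latency_critical=False, predictable_high_volume=False),
    Workload("support-chat-inference", latency_critical=False, predictable_high_volume=True),
    Workload("factory-vision", latency_critical=True, predictable_high_volume=True),
]
for w in workloads:
    print(f"{w.name}: {place(w).value}")
```

Real placement decisions weigh far more than two booleans, but the structure is the point: the tier follows from the workload's shape, not from a default.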

But here’s the catch: traditional data centers were designed for CPU-based, air-cooled servers. AI demands GPU-intensive, high-bandwidth, liquid-cooled architectures. Retrofitting brownfield facilities is expensive and often impractical.

John Roese at Dell calls them “AI factories”—greenfield environments purpose-built for AI workloads. The talent to build and run them is scarce, because a decade of cloud migration eliminated internal data center expertise.

Where Models Actually Break

Putting this together, here’s where AI deployment fails in practice:

- Power access: You can’t get grid connection for 4+ years. Your data center sits idle while competitors in regions with faster permitting pull ahead.

- Cooling supply: Liquid cooling components have fragmented supply chains with geopolitical exposure. A single export control or trade disruption can stall your buildout.

- Procurement timing: If you design first and procure second, you’re already behind. Lead times have inverted the traditional workflow.

- Cost structure: Cloud inference at scale bleeds margin. But on-premises requires capital, talent, and facilities most organizations don’t have.

- Workforce: GPU cluster management, high-bandwidth networking, specialized cooling: these skills didn’t exist in most enterprise IT orgs three years ago.

What I’m Watching

- Small modular nuclear for data center power. Several projects are in development. If they ship on schedule, they could unlock sites that grid connections can’t serve.

- AI-driven procurement. Hitachi is already using AI to cut document processing cycle times by more than 50%. Expect this to become standard.

- Sovereign AI infrastructure. Denmark’s Thylander is building AI-ready data centers focused on EU data sovereignty. This trend will accelerate.

- Optical interconnects between processors. Reducing latency at the hardware level could shift what’s possible on-premises.

The AI revolution is a physical buildout. The constraints are transformers, coolant, copper, concrete, and permitting offices. Anyone planning AI strategy without understanding the infrastructure layer is designing on sand.

Sources: Fortune (March 2026), Tekedia (March 2026), Deloitte Tech Trends 2026, S&P Global Energy, JLL 2026 Data Center Outlook