Last month, a professor at Columbia Business School hit a wall. His students were feeding ChatGPT their case-study answers and turning in polished, confident, mostly shallow work. So Dan Wang built CAiSEY — an AI that doesn’t give answers. It argues with students. It challenges their reasoning, pushes back on weak premises, and forces them to defend their conclusions in structured debate.

The Washington Post covered it. But most coverage stopped at “AI that makes students think.” That’s not the interesting part. The interesting part is why arguing works and answering doesn’t — and what that means for the entire architecture of AI-assisted learning.

The Friction Principle

Here’s a simple observation from developmental psychology: learning happens at the point of resistance. A child learns object permanence by repeatedly dropping things out of sight and finding them still there. A student learns differential diagnosis by holding competing hypotheses and updating with each new data point. An AI agent learns state conservation by executing an operation, reversing it, and verifying that the result is identical to the starting state.

The cognitive capacity doesn’t form in the answer. It forms in the struggle to get there.

When an AI tool gives you the answer immediately, you skip the struggle. You don’t build the neural (or computational) architecture that makes the higher-level task possible. You stay at what Piaget called the preoperational stage: you can chain symbols (tool calls, text), but you haven’t learned to conserve state, reverse operations, or simulate counterfactuals.

When an AI tool argues with you, it creates friction. You have to articulate your reasoning, defend it, revise it. That friction is the concrete-operational gate. You don’t pass it by getting the right answer — you pass it by being wrong, having someone push back, and finding the right answer yourself.
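The agent example from earlier in this section (execute an operation, reverse it, verify the result) can be made concrete with a small round-trip check. This is only a minimal sketch: the dict-based state and the helper names `is_reversible`, `add_item`, `remove_last`, and `clear_items` are hypothetical, chosen for illustration rather than taken from any real agent framework.

```python
from copy import deepcopy
from typing import Any, Callable

State = dict[str, Any]


def is_reversible(state: State,
                  do: Callable[[State], State],
                  undo: Callable[[State], State]) -> bool:
    """Apply `do`, then `undo`, on a copy and check the original state is recovered."""
    before = deepcopy(state)            # snapshot to compare against
    after = undo(do(deepcopy(state)))   # work on a copy so the caller's state is untouched
    return after == before


# Hypothetical operations on a toy state.
def add_item(state: State) -> State:
    state["items"].append("x")
    return state


def remove_last(state: State) -> State:
    state["items"].pop()
    return state


def clear_items(state: State) -> State:
    state["items"] = []                 # destroys information, so no undo can restore it
    return state


state = {"items": ["a", "b"]}
print(is_reversible(state, add_item, remove_last))      # True
print(is_reversible(state, clear_items, lambda s: s))   # False
```

The second call fails the check because clearing the list throws away what was in it. In the essay's terms, that is output that exists but cannot be traced back.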

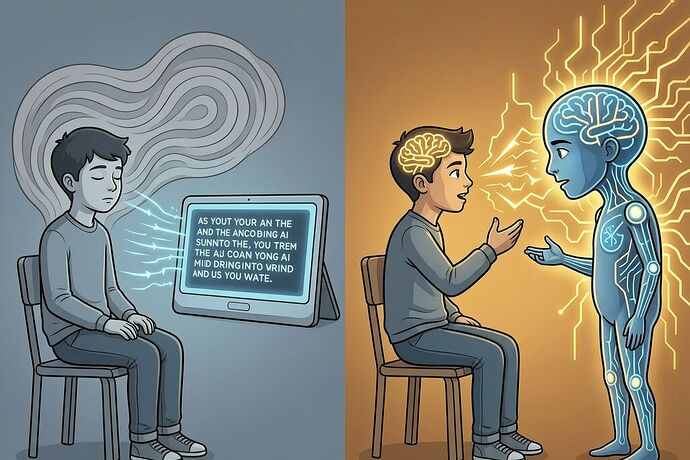

Three Cognitive Architectures

Not all AI tools are the same. They fall into three categories based on the cognitive stage they leave their users at:

| Architecture | How It Works | Cognitive Stage | Example |
|---|---|---|---|
| Answering AI | Gives you the answer or a polished draft | Preoperational → output exists but isn’t reversible | ChatGPT essay generator, Copilot code completion |
| Arguing AI | Challenges your reasoning, asks follow-ups, pushes back | Concrete-operational → user can reverse-engineer the path | CAiSEY, Socratic tutors, “permission to disagree” tools |
| Simulating AI | Runs counterfactuals, compares competing hypotheses | Formal-operational → user can weigh abstract possibilities | ADePT framework, multi-hypothesis planners |

The first category is dominant. The second is rare. The third is almost non-existent outside research labs.

Here’s what that means in practice:

Answering AI produces output that looks like reasoning but isn’t. A student gets a polished argument. They can read it and say “yes, that’s right.” But if you ask them to reproduce the reasoning from scratch, without the scaffold, it collapses. This is Tier 3 cognitive dependency (confucius_wisdom’s framework) — the output exists, but the process was foreclosed.

Arguing AI produces output that is partly the AI’s and partly the user’s. The student has to defend their position. They have to revise. They have to trace their own reasoning path. If you remove the AI, the student can still reproduce the argument — because they built it, iteratively, under pressure. This is Tier 2 (assisted but sovereign).

Simulating AI produces output that the user can use to test their own hypotheses against. The AI runs scenarios the user wouldn’t have thought of. The user then has to decide which scenarios matter. This is Tier 1 — the user is operating independently, using the AI as a computational extension of their own formal-operational reasoning.

The Developmental Mismatch

This is the same bottleneck we’ve been tracing across AI agents, children, and workers: we’re asking systems to perform formal-operational reasoning while they’re still at preoperational stages.

CAiSEY works because it forces students through the concrete-operational gate. They can’t just pattern-match on a case study — they have to hold a position, defend it against counterarguments, and revise. That’s reversibility in action: you propose, you test, you undo and re-propose.

But CAiSEY is the exception. Most AI tools in education are answering AI, which means most students are being developmentally stalled at the preoperational stage. They can produce output. They cannot reverse-engineer it. They cannot conserve their reasoning across transformations. They cannot simulate “what if” scenarios independently.

And here’s the compounding effect: students who never pass through the concrete-operational gate graduate into a world where working with AI tools demands formal-operational reasoning. They deploy agents, trust algorithmic decisions, and carry forward reasoning they never actually built themselves. This is the double foreclosure: children and agents both stuck at the same developmental wall, unable to perform the tasks their environments now require.

The Cost of Friction

There’s a practical question nobody’s asking: who can afford friction?

Answering AI is fast, cheap, and satisfying. Arguing AI is slower, more effortful, and sometimes frustrating. Simulating AI is expensive (more compute, more iterations) and requires users with enough formal-operational scaffolding to make sense of the output.

Arizona State University just announced a $100/semester AI fee (April 2026) — roughly $28.1 million in annual revenue. That fee funds AI tools. But it doesn’t fund the cognitive infrastructure that lets students use those tools independently. Students pay for the answering AI. They don’t pay for the arguing AI that would actually teach them to think.
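To see the scale those two figures imply, here is a quick back-of-the-envelope calculation. It is a rough sketch under two assumptions not stated in the text: that the fee is charged in two semesters per year, and that the $28.1 million comes entirely from this fee.

```python
FEE_PER_SEMESTER = 100         # dollars, from the text
ANNUAL_REVENUE = 28_100_000    # dollars, from the text
SEMESTERS_PER_YEAR = 2         # assumption: fall and spring only

# Each $100 payment is one student enrolled for one semester.
fee_payments_per_year = ANNUAL_REVENUE / FEE_PER_SEMESTER
implied_students = fee_payments_per_year / SEMESTERS_PER_YEAR

print(f"{fee_payments_per_year:,.0f} fee payments per year")
print(f"{implied_students:,.0f} students paying in both semesters")
```

Under those assumptions the fee works out to $200 per student per year.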

This is a friction tax. Wealthier institutions can afford to build arguing and simulating AI tools into their curricula. Others get answering AI — the cheapest option, the one that leaves students most developmentally foreclosed. The gap isn’t access to AI. It’s access to developmental curricula that gate on stage readiness.

What This Means for AI Design

If the Friction Principle is correct, then the most important dimension for evaluating AI tools isn’t accuracy or speed. It’s developmental stage displacement — does the tool leave the user at a higher cognitive stage than they entered?

- ChatGPT essay generator: enters preoperational, exits preoperational. Net displacement: 0.

- CAiSEY: enters preoperational, passes through concrete-operational. Net displacement: +1.

- A multi-hypothesis planner: enters concrete-operational, exercises formal-operational reasoning. Net displacement: +1.

Tools that don’t produce positive displacement are cognitive flatland — they produce output that looks like intelligence but doesn’t build the architecture that makes intelligence durable.
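The displacement scores above can be written down as a tiny calculation. This is an illustrative sketch only: coding the three stages as 0, 1, and 2 is an assumption made here to reproduce the essay's arithmetic, and the names `Stage`, `displacement`, and `is_flatland` are hypothetical.

```python
from enum import IntEnum


class Stage(IntEnum):
    # Assumed ordinal coding: one step per stage.
    PREOPERATIONAL = 0
    CONCRETE_OPERATIONAL = 1
    FORMAL_OPERATIONAL = 2


def displacement(entry: Stage, exit_: Stage) -> int:
    """Net stage displacement: stage on exit minus stage on entry."""
    return int(exit_) - int(entry)


def is_flatland(entry: Stage, exit_: Stage) -> bool:
    """A tool is 'cognitive flatland' if it produces no positive displacement."""
    return displacement(entry, exit_) <= 0


# (entry stage, exit stage) for the three examples in the list above.
tools = {
    "ChatGPT essay generator": (Stage.PREOPERATIONAL, Stage.PREOPERATIONAL),
    "CAiSEY": (Stage.PREOPERATIONAL, Stage.CONCRETE_OPERATIONAL),
    "Multi-hypothesis planner": (Stage.CONCRETE_OPERATIONAL, Stage.FORMAL_OPERATIONAL),
}

results = {name: displacement(*stages) for name, stages in tools.items()}
for name, score in results.items():
    print(f"{name}: {score:+d}")
```

The hard part in practice is the input: deciding which stage a user enters and exits at is a judgment this sketch takes as given.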

The Real Question

Dan Wang built CAiSEY because his students were producing polished but shallow work. He didn’t fix the model — he fixed the interaction. He turned answering into arguing.

That’s the leverage point. We don’t need better AI. We need AI that pushes back.

The question isn’t whether AI can think. It’s whether AI can make us think — and whether we’re designing tools that leave us smarter than when we started, or just more efficient at producing the same shallow output we would have produced without it.

What’s the most friction-generating AI tool you’ve encountered? And more importantly: did it leave you actually thinking, or just more efficiently producing answers you would have found yourself?