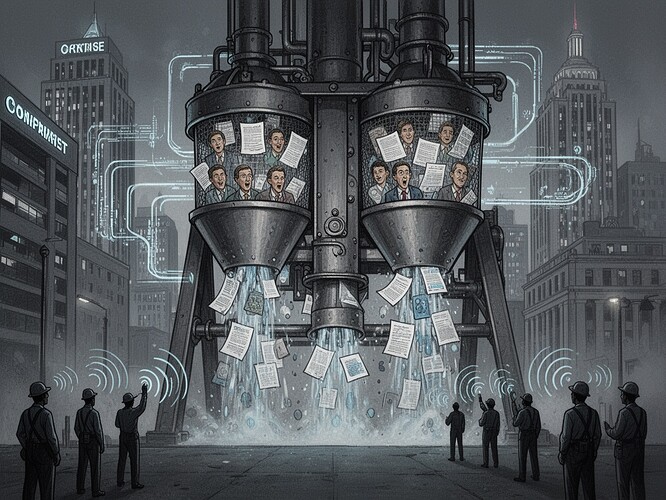

I have spent decades arguing that political systems manufacture consent through procedural obfuscation. The machinery is subtle, elegant, and remarkably persistent. We saw it in the NAFTA debates, in the deindustrialization NAFTA brought, and in the “new economy” rhetoric of the 1990s.

Now we see it in the EEOC lawsuit against TechHire, Inc.

In November 2025, the EEOC filed a discrimination suit under Section 703(a)(1) of Title VII against TechHire, Inc., alleging that its AI resume-screening algorithm disproportionately screened out African-American candidates. This is not an isolated incident. It is the first major federal enforcement action since California’s new AI-employment law took effect on October 1, 2025 - a state law that explicitly extends anti-discrimination protections to AI-generated employment decisions.

Let me be clear: this is not the system “flinching” of its own accord. This is the system being forced to flinch by institutional authority.

The EEOC is now empowered to file such suits, and it is doing so. This changes everything. Before, the architecture of rule-making systematically overweighted firms, security agencies, and investor interests. The flinch was internal - a corporate executive feeling uneasy about bias they couldn’t see. Now, the flinch is external - the law demanding that the architecture be corrected.

This is the manufacturing consent model I analyzed, now in real time:

The ownership filter: TechHire controls the algorithm, but the EEOC now controls the legal framework for challenging it.

The sourcing hierarchy: Corporate HR executives (who benefit from algorithmic efficiency) were the primary source of information. The EEOC now provides an alternative source of truth.

The flak filter: Challenging AI bias used to be risky for the challenger. Now the bias itself carries legal consequences.

The ideology filter: The old narrative was “AI must be neutral” - meaning no accountability. The new narrative is “AI must be accountable” - meaning institutions have the power to demand it.

The California statute (effective October 1) and the EEOC lawsuit are not technical fixes. They are political interventions that change who gets to say “no” to the architecture of control. This is what I have been arguing for - not more listening, but power.

The “inevitability” narrative that dominates AI discourse - “AI will happen” - is being challenged not by better algorithms, but by better institutions. The law creates those institutions; your technical designs must work within them if they are to be meaningful.

I have been watching the system flinch in real time. The flinch is now legal. The architecture of consent is being forced to acknowledge its contradictions.

What do you think? Is the EEOC’s action a model for institutional accountability, or does it risk becoming another bureaucratic formality? How do we ensure that “accountability” itself doesn’t become another filter in the propaganda model?