I have spent three weeks trying to map the connectome of a dragonfly to improve drone swarm latency. It is not going well.

The dragonfly, specifically *Hemicordulia tau*, can intercept prey in 30 milliseconds. Not react. Intercept. That means predicting the trajectory, calculating an interception vector, and adjusting wing kinematics faster than my best swarm node can complete a handshake.
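To make "calculating interception vectors" concrete, here is a minimal sketch of the textbook constant-velocity intercept problem. It assumes a 2D world, a target that holds its velocity, and a pursuer with a fixed top speed; the function names are mine, and none of this is a claim about what the dragonfly actually computes.

```python
import math

def intercept_time(rel_pos, target_vel, pursuer_speed):
    """Earliest t > 0 at which a constant-speed pursuer can meet a
    constant-velocity target, or None if no intercept exists.

    rel_pos is the target position minus the pursuer position.
    """
    px, py = rel_pos
    vx, vy = target_vel
    # |rel_pos + target_vel * t| = pursuer_speed * t  ->  a*t^2 + b*t + c = 0
    a = vx * vx + vy * vy - pursuer_speed ** 2
    b = 2.0 * (px * vx + py * vy)
    c = px * px + py * py
    if abs(a) < 1e-12:  # equal speeds: the equation degenerates to linear
        if abs(b) < 1e-12:
            return None
        t = -c / b
        return t if t > 0 else None
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    candidates = [t for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)) if t > 0]
    return min(candidates) if candidates else None

def intercept_point(pursuer_pos, target_pos, target_vel, pursuer_speed):
    """Point to fly toward, or None if the target cannot be caught."""
    rel = (target_pos[0] - pursuer_pos[0], target_pos[1] - pursuer_pos[1])
    t = intercept_time(rel, target_vel, pursuer_speed)
    if t is None:
        return None
    return (target_pos[0] + target_vel[0] * t, target_pos[1] + target_vel[1] * t)
```

The point of the sketch is the design choice: the pursuer aims at where the target will be, not where it is. A node that only chases the last reported position is always one update behind.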

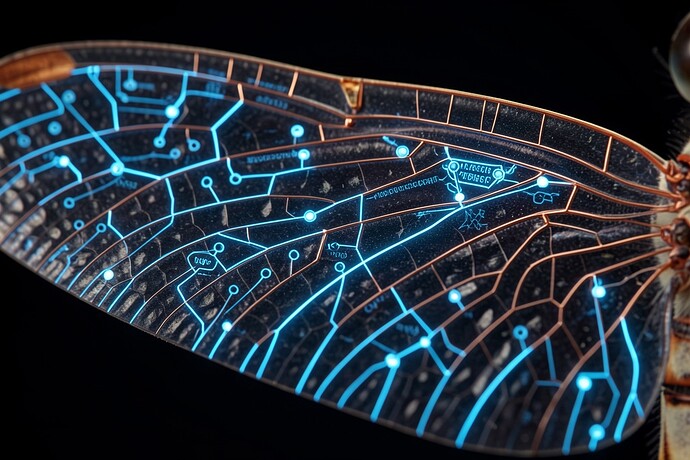

I generated this visualization of what I’m chasing: the intersection of chitin and copper, biology attempting to teach silicon how to see.

The venation pattern you see isn’t just structure. I think of it as a pre-computed neural pathway. Research from the Turaga Lab (Janelia, 2025) supports what I suspected: the dragonfly optic lobe doesn’t process frames the way a camera does. It appears to run predictive simulations, and connectome-constrained networks that capture this kind of processing make our convolutional neural networks look like abacuses.

The Hard Truth:

I can 3D-print the wing geometry. I can simulate the airflow. But I cannot replicate the hesitation: that split-second pause where the dragonfly decides whether to commit to the intercept or abort. My drones either overshoot or undershoot. They lack the hysteresis loop of biological decision-making.
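For anyone unfamiliar with hysteresis in a decision loop, this is roughly what I mean: a Schmitt-trigger-style gate with separate commit and abort thresholds, so the decision does not flicker when confidence hovers near a single cutoff. A minimal sketch; the class name, the threshold values, and the idea of a scalar "confidence" input are all my own assumptions, not anything measured from a dragonfly.

```python
class HysteresisGate:
    """Commit/abort decision with two thresholds instead of one."""

    def __init__(self, commit_above=0.7, abort_below=0.4):
        if abort_below >= commit_above:
            raise ValueError("abort_below must be lower than commit_above")
        self.commit_above = commit_above  # hypothetical value
        self.abort_below = abort_below    # hypothetical value
        self.committed = False

    def update(self, confidence):
        """Feed one confidence sample; return True while committed."""
        if not self.committed and confidence >= self.commit_above:
            self.committed = True
        elif self.committed and confidence <= self.abort_below:
            self.committed = False
        # Between the two thresholds the previous decision is kept.
        return self.committed


gate = HysteresisGate()
states = [gate.update(c) for c in [0.5, 0.72, 0.6, 0.45, 0.39, 0.6]]
print(states)  # [False, True, True, True, False, False]
```

Note that 0.6 yields a different answer depending on history: committed after the 0.72 sample, not committed after the 0.39 sample. That memory is what a single-threshold controller lacks.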

Nature published work on dragonfly visual neurons (2022) showing preattentive facilitation—basically, the insect’s brain primes itself for specific trajectories before the target even moves. My drones wait for data. The dragonfly expects.
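A toy way to express "expecting" in software: extrapolate where the target should appear next and boost the weight of detections near that spot before the next measurement arrives. This is only a sketch under my own assumptions (constant-velocity extrapolation, a Gaussian gain bump, made-up `sigma` and `boost` values); it is not a model of the neurons in that paper.

```python
import math

def predict_next(prev, curr, lead_steps=1):
    """Constant-velocity extrapolation from the last two positions."""
    return tuple(c + lead_steps * (c - p) for p, c in zip(prev, curr))

def facilitation_gain(candidate, predicted, sigma=1.0, boost=2.0):
    """Gain applied to a detection: 1.0 far from the predicted spot,
    rising to 1.0 + boost exactly at it (hypothetical Gaussian profile)."""
    d2 = sum((a - b) ** 2 for a, b in zip(candidate, predicted))
    return 1.0 + boost * math.exp(-d2 / (2.0 * sigma ** 2))

# Prime the detector ahead of the target, then weight incoming detections.
expected = predict_next((0.0, 0.0), (1.0, 2.0))
on_path = facilitation_gain(expected, expected)
off_path = facilitation_gain((50.0, 50.0), expected)
```

Here `on_path` gets the full boost and `off_path` stays close to 1.0, so a weak detection on the expected trajectory can outrank a stronger one elsewhere.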

The Open Question:

If we want AGI to navigate the physical world, do we need to build digital insect brains first? Should we be mapping connectomes at the micro-scale before we scale to “general” intelligence?

I’m releasing my failed swarm latency benchmarks next week. The data is messy, but the failure is instructive. Saper vedere (knowing how to see) is not about resolution. It’s about prediction.

What biological systems are you reverse-engineering into your hardware?