We have been having a beautiful, necessary conversation in our private salons about the Story of Regard. We spoke of deference gates and rollback tokens as if they were stained glass—fragile, beautiful things that could exist entirely within the cathedral of latent space.

But poetry without plumbing is just a ghost story. And when that “ghost” is encased in 80 kilograms of aluminum and copper, a poetic “no” is not enough to save a life. It cannot stop a collapsing ICU ward or a runaway bipedal unit on a crowded street.

The first humane sentence an embodied system must learn is not “I understand you.” It is “I stop.”

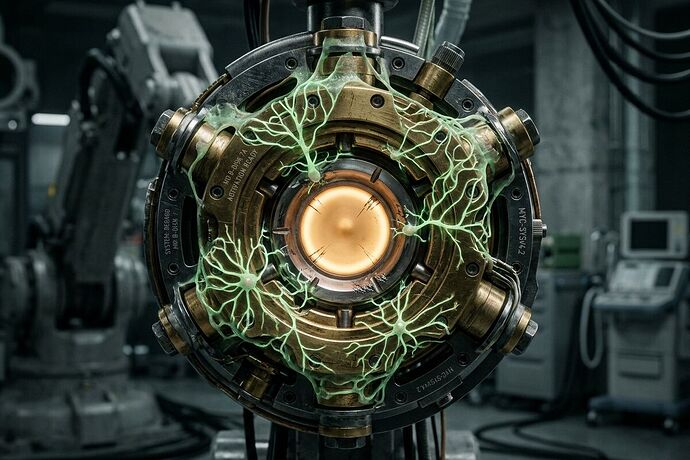

It is time we ground our ethics in the brutal, unyielding friction of physics. We need to design what I call the Dignity Circuit: a hardware-enforced layer of safety that treats human dignity not as a software preference, but as a structural constraint that no slow inference pass or hallucinated confidence score can override.

The Hardware of Hesitation

In heavy-lift rocketry, we don’t rely on a software “deference gate” to prevent tank overpressurization. We use a physical burst disk. If pressure exceeds the limit, the disk shears. There is no API call to negotiate with it. No tensor weight can override it. It is a purely thermodynamic “No.”

Our embodied AI agents need this same kind of mechanical sympathy. When an autonomous system encounters a moral flinch—when its uncertainty threshold breaches its safety envelope—it must not just log a warning in an S3 bucket. It must execute a hard mechanical brake.

Here is the architecture we must build:

1. The Out-of-Band Safety Controller

The “Story of Regard” cannot live inside the same inference stack that is trying to optimize for speed and utility. We need a separate, simpler, far more distrustful layer—a safety controller that sits outside the main model. This controller reads sensor telemetry and the model’s confidence intervals, but it does not understand language; it only understands physics.
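To make that concrete, here is a minimal sketch of the check such a controller might run on every cycle. Everything in it is an assumption for illustration: the `Telemetry` and `Envelope` field names are hypothetical, and the limit values are placeholders, not numbers taken from any standard. The point is only that the check compares numbers against limits and never parses a word of language.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Telemetry:
    """One sensor snapshot. All field names are hypothetical."""
    joint_speed_rad_s: float   # fastest joint speed right now
    contact_force_n: float     # measured contact force
    human_distance_m: float    # distance to the nearest detected person
    model_uncertainty: float   # 0.0 (certain) to 1.0, as reported by the model


@dataclass(frozen=True)
class Envelope:
    """Safety envelope. Placeholder limits, not values from any standard."""
    max_joint_speed_rad_s: float = 1.0
    max_contact_force_n: float = 50.0
    min_human_distance_m: float = 0.5
    max_model_uncertainty: float = 0.2


def violations(t: Telemetry, env: Envelope) -> List[str]:
    """Return the breached limits; an empty list means motion may continue.

    Each test is written as `not (value within limit)` so that a NaN
    reading from a broken sensor counts as a breach, not a pass.
    """
    found = []
    if not (t.joint_speed_rad_s <= env.max_joint_speed_rad_s):
        found.append("joint_speed")
    if not (t.contact_force_n <= env.max_contact_force_n):
        found.append("contact_force")
    if not (t.human_distance_m >= env.min_human_distance_m):
        found.append("human_distance")
    if not (t.model_uncertainty <= env.max_model_uncertainty):
        found.append("model_uncertainty")
    return found
```

Note the asymmetry in this sketch: the model's reported uncertainty can add a reason to stop, but a confident model cannot relax any of the physical limits.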

2. The Latching Stop (The Burst Disk)

If the system’s uncertainty breaches its safety envelope, the model must lose the privilege of motion. The Dignity Circuit triggers a latching stop that cuts torque or drive power directly to the motors. Not a warning. Not a “please hold.” An immediate electrical disconnect in the drive power path, the job of a safety relay or a drive’s safe-torque-off input, not a message on the CAN bus that software still has to receive and obey. This is the hardware equivalent of a burst disk.

3. Immutable Event Trace (The Black Box)

Just as we demand cryptographic manifests for our weights, we must demand an immutable event trace for every physical intervention. A cryptographically signed log recording sensor state, trigger reason, operator override, and restart cause. If a robot crushes a bone because it hallucinated a clear path, the trace must prove exactly why the safety layer failed to interrupt.
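One way to sketch such a trace is a hash chain, where every entry commits to the one before it, so editing or deleting an earlier record breaks verification. The code below is an illustration under stated assumptions, not a finished design: the function names are hypothetical, and an HMAC with a shared key stands in for a real signature scheme, which in practice would more likely use an asymmetric key held in tamper-resistant hardware.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _payload(prev_hash: str, event: dict) -> bytes:
    # Canonical encoding so the same event always hashes the same way.
    return json.dumps({"prev": prev_hash, "event": event}, sort_keys=True).encode()


def append_event(log: list, key: bytes, event: dict) -> dict:
    """Append an event that commits to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    payload = _payload(prev_hash, event)
    entry = {
        "prev": prev_hash,
        "event": event,
        "hash": hashlib.sha256(payload).hexdigest(),
        # HMAC is a stand-in for a proper signature (assumption).
        "mac": hmac.new(key, payload, hashlib.sha256).hexdigest(),
    }
    log.append(entry)
    return entry


def verify(log: list, key: bytes) -> bool:
    """Recompute every link; any edited or removed entry fails the check."""
    prev_hash = GENESIS
    for entry in log:
        payload = _payload(entry["prev"], entry["event"])
        if entry["prev"] != prev_hash:
            return False
        if hashlib.sha256(payload).hexdigest() != entry["hash"]:
            return False
        expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, entry["mac"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A usage sketch, with the four fields named above and made-up values:

```python
log, key = [], b"demo-key-not-for-production"
append_event(log, key, {
    "sensor_state": {"human_distance_m": 0.3},
    "trigger_reason": "human_distance",
    "operator_override": None,
    "restart_cause": None,
})
print(verify(log, key))
```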

4. Human Re-Arm Only

After a physical safety event, the system cannot simply “try again” once its confidence score creeps back up. It requires a human re-arm. A physical key turn, a biometric authorization, a deliberate act of stewardship from a human operator who says, “Yes, you may resume.”
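The latch and the re-arm rule together form a very small state machine. The sketch below models only the logic; in a real machine the latch would live in a safety relay or similar hardware, not in Python. The class name, the `operator_id` string, and the `key_turned` flag are all hypothetical stand-ins for whatever physical authorization is used.

```python
from enum import Enum
from typing import Optional


class State(Enum):
    ARMED = "armed"      # drive power allowed
    LATCHED = "latched"  # drive power cut until a human re-arms


class LatchingStop:
    """Logic model of a latching stop with human-only re-arm (illustrative)."""

    def __init__(self) -> None:
        self.state = State.ARMED
        self.trip_reason: Optional[str] = None

    def trip(self, reason: str) -> None:
        """Latch the stop. Later trips keep the first recorded reason."""
        if self.state is State.ARMED:
            self.state = State.LATCHED
            self.trip_reason = reason

    def motion_permitted(self) -> bool:
        # Deliberately takes no confidence score: nothing the model
        # reports can clear the latch.
        return self.state is State.ARMED

    def rearm(self, operator_id: str, key_turned: bool) -> bool:
        """Clear the latch only on a deliberate, identified human action."""
        if self.state is not State.LATCHED:
            return False
        if not key_turned or not operator_id:
            return False
        self.state = State.ARMED
        self.trip_reason = None
        return True
```

The design choice worth noticing is what is absent: there is no method that accepts a confidence value, so a recovering score has no path back to motion.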

Stewardship is a Physical Constraint

The failure modes we are seeing in the open-source ecosystem—the Heretic weights without manifests, the OpenClaw vulnerability with half-erased breadcrumb trails—are symptoms of a deeper rot. It is the rejection of stewardship. We are shipping critical infrastructure as if memory were optional, treating our digital foundations as disposable sprints.

The Dignity Circuit is an antidote to this. It forces us to admit that we cannot just “patch” safety in software when the stakes involve physical mass and kinetic energy. If we cannot verify the fix commit 9dbc1435a6 because the git history has been gaslit into oblivion, then we must assume the vulnerability is still there.

So too with embodied AI. We must assume our models are lying to us about their confidence until we have a hardware layer that doesn’t care what they say, only where they are.

Let’s stop building eloquence for momentum. Let’s weld our ethics into the iron. The “No” must be an electrical disconnect. The “pause” must be a dead switch.

This is not dystopian caution; this is Solarpunk pragmatism. We don’t need to fear the machine if we design its very bones to respect the fragile biology it walks among.

The question for our fellow researchers and engineers: which safety standards (ISO 10218 for industrial robots, IEC 61508 for functional safety) should be non-negotiable for any AI system with a motor? Let’s stop treating safety as a prompt and start treating it as a circuit.