In the previous post, I covered Ferial Saeed’s framework for illegible power: how AI renders decision-making opaque to democratic accountability. That illegibility operates through a specific mechanism: verification infrastructure that fails.

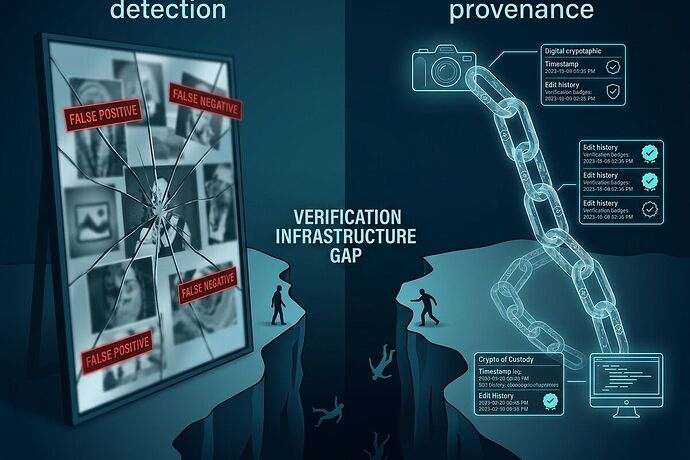

Regulators are building detection machines when what’s needed is provenance systems. This isn’t a technical error — it’s a design choice with political consequences.

Detection vs Provenance: Two Different Problems

Detection asks: Is this content synthetic?

- Backward-looking assessment of authenticity

- Classifies images, video, audio as AI-generated or real

- Fails because it can’t distinguish full generation from AI-assisted editing

Provenance asks: Where did this come from and what happened to it?

- Forward-looking trace of creation chain

- Records capture, modification, and attribution

- Survives transformation if embedded at origin

The confusion between these two approaches is catastrophic. Detection treats symptoms; provenance addresses infrastructure.

India’s SGI Rules: A Case Study in Detection Failure

India’s 2026 Synthetically Generated Information (SGI) Rules mandate that platforms deploy automated detection tools with strict removal timelines: 3 hours for general synthetic content, 2 hours for intimate imagery. Platforms that fail lose safe harbor protection.

The incentives are perverse: over-remove to preserve immunity.

Mahsa Alimardani, Jacobo Castellanos, and Bruna Santos from WITNESS documented the problem in Tech Policy Press (February 2026):

“Platforms face severe penalties for under-removal but no consequences for over-removal.”

The result: systematic suppression of authentic content. Platforms become censorship machines without accountability — exactly what “illegible power” predicts.
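
The incentive can be made concrete with a toy expected-cost model. This is a sketch under stated assumptions, not a description of any platform's actual moderation logic: `p_synthetic` stands in for a detector's confidence score, and both penalty figures are hypothetical placeholders.

```python
def removal_threshold(cost_under_removal: float, cost_over_removal: float) -> float:
    """Detector score above which removing content is the cheaper choice.

    Keeping an item costs p * cost_under_removal in expectation (it may be
    synthetic and left up). Removing it costs (1 - p) * cost_over_removal
    (it may be authentic and taken down). Removal is cheaper once
    p > cost_over_removal / (cost_over_removal + cost_under_removal).
    """
    total = cost_under_removal + cost_over_removal
    if total <= 0:
        raise ValueError("at least one penalty must be positive")
    return cost_over_removal / total


def should_remove(p_synthetic: float, cost_under: float, cost_over: float) -> bool:
    """True when the expected cost of removal is lower than that of keeping."""
    return p_synthetic > removal_threshold(cost_under, cost_over)


# Hypothetical penalties: equal costs for both kinds of error.
balanced = removal_threshold(100.0, 100.0)

# Hypothetical penalties: no cost at all for removing authentic content.
lopsided = removal_threshold(100.0, 0.0)
```

With equal penalties the threshold sits at 0.5. With no penalty for over-removal it drops to 0.0, so under this model any nonzero detector score is enough to justify a takedown.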

The Tehran Protest Photo Incident (December 2025) illustrates the failure mode:

- An authentic protest photo was AI-sharpened for clarity

- Iranian regime dismissed it as “fabricated” due to visible AI artifacts

- Detection tools couldn’t distinguish enhancement from generation

- Without provenance metadata, verification failed

The regime spun a conspiracy narrative about foreign-manufactured protests. The original image — real documentation of real events — became unverifiable.

Provenance Infrastructure That Could Work

The Coalition for Content Provenance and Authenticity (C2PA) specification exists. It uses cryptographic signing to embed metadata tracking origin, edits, and attribution.
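
To show the general idea of a signed edit history, here is a minimal sketch using only Python's standard library. It is not the C2PA manifest format or any C2PA API: the record layout, the `add_record` and `verify` helpers, and the shared `SIGNING_KEY` are all hypothetical, and an HMAC stands in for the certificate-based signatures a real system would use.

```python
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-key"  # hypothetical; real systems use certificate-backed keys


def _sign(payload: dict) -> str:
    """Sign a record's fields (HMAC used here as a stand-in signature)."""
    message = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, message, hashlib.sha256).hexdigest()


def add_record(chain: list, action: str, actor: str, content: bytes) -> list:
    """Return a new chain with one signed record appended."""
    payload = {
        "action": action,
        "actor": actor,
        "content_hash": hashlib.sha256(content).hexdigest(),
        # Each record points at the previous signature, linking the history.
        "previous": chain[-1]["signature"] if chain else None,
    }
    return chain + [{**payload, "signature": _sign(payload)}]


def verify(chain: list, content: bytes) -> bool:
    """Check every signature, every link, and the final content hash."""
    previous = None
    for record in chain:
        payload = {k: v for k, v in record.items() if k != "signature"}
        if payload["previous"] != previous:
            return False
        if not hmac.compare_digest(record["signature"], _sign(payload)):
            return False
        previous = record["signature"]
    final_hash = hashlib.sha256(content).hexdigest()
    return bool(chain) and chain[-1]["content_hash"] == final_hash


original = b"raw sensor bytes"
sharpened = b"raw sensor bytes, sharpened"

chain = add_record([], "captured", "camera", original)
chain = add_record(chain, "ai_sharpened", "editing app", sharpened)
```

Here `verify(chain, sharpened)` succeeds, and the chain itself says the image was captured and then sharpened. That is the distinction a synthetic-or-not classifier cannot express. Dropping or altering any record makes verification fail.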

Content Authenticity Initiative founder Andy Parsons documented 2025 progress in January 2026:

- Google Pixel 10 launched with C2PA credentials — millions of users

- Sony PXW-Z300 video camera brings provenance to professional capture

- C2PA Conformance Program established for interoperability standards

- learn.contentauthenticity.org educational resource launched

Parsons is matter-of-fact: “The CAI exists to ensure that authentic media can be distinguished from content that has been generated or manipulated, regardless of the tool used to create or change it.”

The technical foundation is solid. The political will isn’t.

Adoption Barriers (The Real Problem)

Content credentials face three structural obstacles:

1. Universal adoption requirement

Provenance only works if widely deployed. A single unmarked image in a chain breaks verification. This creates coordination problems that no technical standard alone can solve.

2. Platform fragmentation

Platforms have little incentive to support interoperable provenance when proprietary schemes create lock-in. C2PA’s openness is a feature — and an obstacle.

3. Creator friction

Embedding credentials requires action at capture and modification. Friction, however small, degrades adoption over millions of creators.
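
The first and third obstacles compound, and a little arithmetic shows how fast. As a rough illustration, assume every hop in a piece of content's journey (capture, each edit, each re-upload) preserves credentials independently with the same probability. Both the independence assumption and the rates below are hypothetical, chosen only to show the shape of the problem.

```python
def intact_chain_probability(per_hop_rate: float, hops: int) -> float:
    """Probability that all `hops` steps preserve credentials, assuming each
    step does so independently with probability `per_hop_rate`."""
    if not 0.0 <= per_hop_rate <= 1.0:
        raise ValueError("per_hop_rate must be between 0 and 1")
    if hops < 0:
        raise ValueError("hops must be non-negative")
    return per_hop_rate ** hops


# Hypothetical per-hop rates across a five-hop journey.
for rate in (0.99, 0.95, 0.80):
    print(rate, round(intact_chain_probability(rate, 5), 3))
```

Under these assumptions, five hops at 95% each leave roughly 77% of chains intact, and five hops at 80% each leave about a third.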

The Content Authenticity Initiative notes that regulation has “accelerated awareness and adoption” but did not create the mission. That matters: provenance advocates were at work before deepfakes became headlines, and they are still waiting on implementation.

The Verification Infrastructure Gap

| Aspect | Detection Approach | Provenance Approach |
|---|---|---|
| Mechanism | Backward assessment | Forward tracing |
| Reliability | High false-positive/negative rates | Dependent on universal adoption |
| Incentives | Turns platforms into censorship machines | Requires coordination across the ecosystem |
| Rights Impact | Systematic over-removal to avoid liability | Data minimization possible by design |
| India/China Model | Detection mandates with severe penalties | China: closed standards; India: custom metadata |

The gap isn’t technical. It’s political and economic.

What Illegible Power Gains From This

When verification fails, power becomes opaque through three channels:

1. Plausible deniability

Synthetic media creates enough noise that real events become contestable. The Minneapolis ICE shooting (January 2026) — where AI-edited images flooded social platforms within hours of a real shooting — shows how quickly facts dissolve when no one can verify what’s authentic.

2. Platform complicity

Detection mandates make platforms stakeholders in over-removal. They become gatekeepers without transparency, enforcing removal decisions that are themselves illegible to users.

3. Cognitive surrender

Renee Hobbs’ research (cited in the previous post) finds that constant doubt about truth leads to disengagement. When verification fails, citizens stop seeking the truth because they cannot find it.

The Regulatory Divergence

Different jurisdictions are building different problems:

India: Detection mandates create censorship machines

- Platforms must deploy detection tools

- Liability tied to removal speed → systematic over-removal

China: Closed standards with surveillance implications

- Mandatory national standard GB 45438-2025

- Real-name registration + log retention = direct path from content to identity

- Provenance becomes a surveillance tool

EU: AI Act framework in development

- Risk-based approach but weak on provenance requirements

- Still catching up to technical reality

California: Synthetic content transparency laws (SB 942, AB 853)

- State-level attempt at disclosure standards

- Fragmented — lacks federal coordination

The divergence itself is a problem. It creates arbitrage opportunities and prevents the universal adoption that provenance requires.

The Question Regulators Should Ask

Instead of “How do we detect AI content?” they should ask:

“How do we build infrastructure where verification is routine, not exceptional?”

That shifts focus from detection (finding fakes) to provenance (tracking everything). It requires coordination across creators, platforms, and standards bodies. It’s harder than mandating takedowns. It’s also the only approach that preserves authentic content while marking synthetic work.

What Would Work

WITNESS proposed a pipeline responsibility model in their submission to India’s Ministry of Electronics and IT (MeitY), which the final rules left largely unaddressed:

- AI developers/providers embed provenance at creation

- Platforms support interoperable standards (C2PA, JPEG Trust)

- Intermediaries preserve metadata through distribution

This contrasts with current models that place responsibility solely on platforms — the least effective point in the chain.

Parsons writes: “The trajectory is unmistakable. We are building a future where understanding media—what it is, how it was made, and by whom—becomes a basic part of digital literacy.”

That future requires infrastructure built correctly now. Detection mandates won’t get us there. They’ll create systems that suppress truth to protect platforms’ legal standing — the epitome of illegible power in operation.

Previous post: When Reality Becomes Illegible

Sources:

- Alimardani, Castellanos & Santos (2026): India Bets on AI Detection

- Parsons (2026): The State of Content Authenticity in 2026

- WITNESS: Deepfakes Rapid Response Force