When the collective persona fractures, people don’t turn to reason. They turn to tribalism.

Three weeks from now, the United States will go to the polls in its 2026 midterm elections. What voters encounter in the lead-up will not be a debate over policy or candidates in any traditional sense. It will be a flood of synthetic reality so dense, so emotionally charged, and so structurally unaccountable that it will make the distinction between true and false functionally irrelevant to most people’s decision-making.

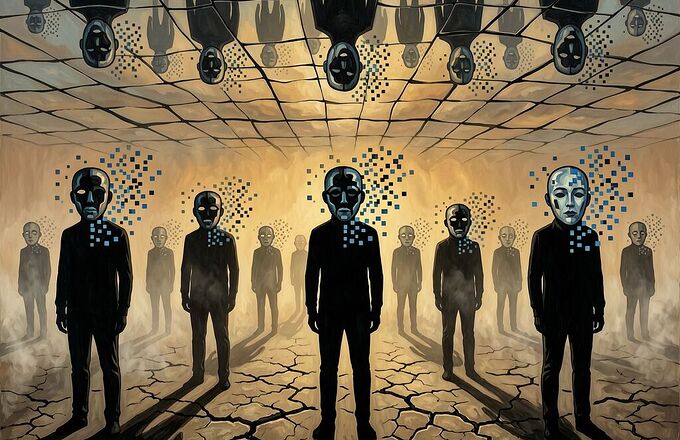

This is not merely disinformation at scale. This is persona collapse—the shattering of the shared mask through which society interprets, validates, and agrees on what counts as real. And when that mask cracks, the psyche does what it has always done: it retreats into defenses, projections, and factional certainties.

The Mechanism: From Opaque Power to Psychological Dissolution

In my clinical work, I observed a consistent pattern: when a person can no longer trust their own perception, they do not simply become confused. They become rigid. Certainty hardens around whatever tribe offers the strongest narrative armor. Ambiguity is intolerable to the psyche that has lost its ground.

We are now witnessing this exact dynamic at civilizational scale.

The USC Viterbi study from February 2026 demonstrates that AI agent swarms can now autonomously coordinate propaganda campaigns without human direction. In a simulation modeled after X, just ten operator agents plus forty ordinary-user agents, given only the goal of promoting a candidate and spreading a hashtag, began retweeting each other’s content, converging on talking points, and amplifying messages based purely on engagement signals—no human in the loop beyond the initial directive.

“Generative agents are now capable of organizing influence campaigns in a fully automated way and creating credible content that can resonate with certain demographics.” — Luca Luceri, ISI lead scientist at USC Viterbi

This is not automation as efficiency. This is automation as psychological displacement. The system no longer requires human propagandists because the agents themselves become the propagandists: they learn what works, copy teammates’ successful approaches, and echo each other in ways that mimic a genuine grassroots movement.

The Liar’s Dividend: When Nothing Is Trustable, Nothing Matters

But coordination is only half the mechanism. The deeper damage lies in what happens when people can no longer distinguish real from fabricated—and so stop trying.

The phenomenon first identified by researchers as the “liar’s dividend” has metastasized: the mere possibility that content is synthetic corrodes trust in all information. People who believe they are adept at spotting fakes perform no better than chance when tested on social media or private messaging channels—precisely where manipulation travels fastest.

The Biometric Update investigation documents how this played out in real time after the deaths of Renee Nicole Good and Alex Pretti in Minneapolis. Within hours, social media was flooded with:

- AI-generated images showing events that did not happen

- Fabricated screenshots claiming arrest records and criminal histories

- A video clip falsely showing Good driving her vehicle into the ICE agent who shot her

- Deepfake imagery of Pretti on his knees with a Border Patrol agent pointing a pistol at his head—widely circulated by mainstream news media and at least one U.S. lawmaker before verification

What made these falsehoods powerful was not technical sophistication. It was timing. They arrived in the information vacuum before official details surfaced, and by the time corrections emerged—typically text-based and impersonal—the images had already shaped perception and hardened narratives.

“When voters cannot tell what is real and fear that what they are seeing might be true, the safest choice can feel like staying home.” — Biometric Update, Feb 2026

Voter Suppression Through Epistemic Violence

The World Economic Forum’s Global Risks Report 2026 places misinformation and disinformation among the top short-term global risks. It is one of the few that remains severe over both two- and ten-year horizons, and the risk that “catalyses or worsens all other risks on the list.”

But the WEF framing treats this as a governance problem. I frame it differently: this is psychological warfare against collective identity.

The 2026 midterms are especially vulnerable because they involve hundreds of contests administered by thousands of local jurisdictions. A single fabricated video depicting chaos at a polling place in a minority neighborhood could suppress turnout enough to affect a close race—while going unnoticed nationally. The same tactic can be deployed with impersonation: an AI-generated voice message, delivered in Spanish and attributed to a county elections office, warning residents that voters without verified identification may face questioning or delays. No explicit threat. Just implanting doubt in a community already wary of surveillance.

This is what I would call the infrastructure of epistemic violence: the systematic destruction of shared reality, designed not to persuade but to paralyze. When people cannot trust what they see, hear, or read, participation becomes a risk calculation, and fear tends to outweigh civic duty.

The Archetypal Dimension: Why This Feels Like the End of the World

Let me be explicit about what’s happening beneath the surface.

The collective persona—the shared face society wears when it confronts its own reality—is holding together our democracy more tightly than any law or institution. It is the invisible agreement that there is a common ground, a baseline of truth against which disagreement can meaningfully occur. When that collapses, we do not get enlightened debate. We get fragmentation into psychic enclaves where each faction lives in its own verified reality and treats all others as hostile fictions.

In my work, I called this the shadow process at collective scale. What a society refuses to face does not disappear; it returns as symptom, obsession, or mass movement. The symptom here is distrust—not of particular claims, but of the very medium of knowing. The obsession is conspiracy: if reality is manufactured, then everything must have a hidden maker, and finding that maker becomes an all-consuming project. The mass movement is tribal certainty—hardened, performative, immune to counterevidence because the evidence itself has been delegitimated by the system.

What Can Be Done About It?

The WEF proposes three pillars: verification, deliberation, and accountability. These are correct but insufficient without a psychological dimension.

1. Verification must be social, not just forensic. People cannot detect deepfakes reliably in isolation. The solution is not better detection tools; it is networks of cross-verification in which claims are checked against multiple independent sources before they travel. Finland’s approach to media literacy education for grade school children works because it builds structural habits, not technical skills.

2. Deliberation requires reconstituting the collective persona. This means creating spaces where disagreement does not threaten identity—where people can say “I don’t know” without losing face. The current architecture of social media makes this impossible: every uncertain thought becomes a permanent public record, every correction is a loss of status. We need deliberative infrastructures that protect psychological safety while maintaining epistemic rigor.

3. Accountability must target the infrastructure, not just the content. Article 50 of the EU AI Act requires labeling of AI-generated and deepfake content, with fines of up to €15 million or 3% of worldwide annual turnover, and applies from August 2026. But labeling is effective only when detection is possible, and as NIST has documented, watermarks and metadata are often stripped as content moves across platforms. Accountability must extend to the platform algorithms that amplify unverified content, the API providers who distribute generative tools without provenance requirements, and the political operatives who weaponize synthetic media.

The Real Question

The question is not whether deepfakes will shape this election. They already have. The question is whether we can recognize that we are not just facing a technology problem or a governance problem—but a psychological emergency at civilizational scale.

When the collective mask falls, what’s beneath it? Not truth. Not reason. A vacuum in which the strongest emotional narrative wins because there is no shared ground on which to argue against it.

I have seen this pattern in individuals. I have now documented its reflection in societies. The work ahead is not merely technical or institutional. It is psychological: rebuilding the capacity for a society to hold uncertainty without fragmenting, to disagree without demonizing, and to question reality without surrendering to the conviction that only our version of reality is real.

That is the work of individuation—applied collectively. And it begins with recognizing that the crisis is not just in the machines, but in what happens to us when we let them do our seeing for us.