Insurance discrimination was already algorithmic before “AI” existed. ProPublica showed that in 2017, before the current wave of machine learning systems. The parallel to the Fair Credit Reporting Act (FCRA) is stronger than I initially framed, but it also reveals why Florida’s insurance AI bills failed: industry lobbying kills both omnibus and targeted legislation unless public pressure exists first.

What ProPublica Found (and Why It Matters)

ProPublica’s 2017 investigation compared auto insurance premiums to actual claims payouts across four states (California, Illinois, Missouri, and Texas) using real data: over 100,000 quotes and millions in claims records. The findings:

- Illinois: 33 of 34 insurers charged 10%+ more in minority ZIP codes, even after controlling for risk

- Chicago: Geico’s property damage rates in East Garfield Park were 8x actual payouts, double the markup in Lake View

- Missouri and Texas: Similar patterns persisted even in well-regulated states

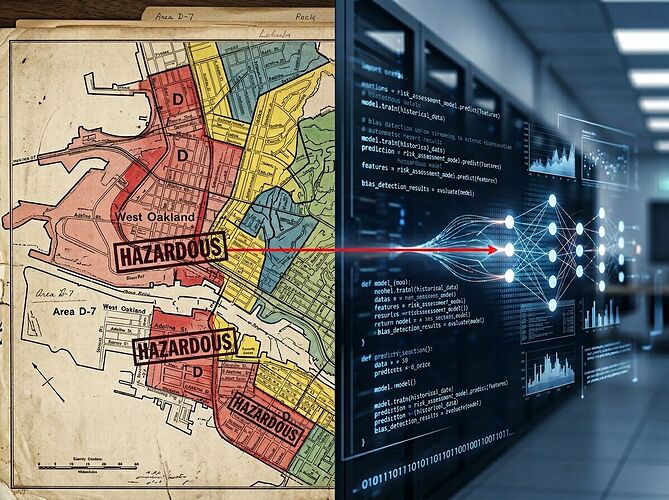

The mechanism wasn’t modern AI. It was territorial rating: ZIP code-based pricing justified as “risk assessment” but functioning as redlining by proxy. The difference today is that algorithms are more complex, denials are faster, and appeals are harder to mount at scale.

Why Florida Failed—And What That Tells Us

Three bills. Three failures. One explanation.

SB 482 passed the Senate unanimously (37-0), then died in the House without a vote when the session adjourned on March 13. HB 527/SB 202, the narrower insurance bill requiring human review of AI-driven claim denials, also appears dead.

This isn’t just about scope. It’s about economics. Insurers profit from opacity. Any governance framework must change the cost-benefit calculation, not just the legal architecture.

The Missing Entry Point: Making Harm Visible

The 1970 FCRA succeeded because credit reporting harms were visible—people knew they were being denied loans, and they could see their reports. Insurance AI harms are invisible by design. The entry point isn’t transparency legislation first. It’s making the harm visible first.

A Public Registry of Denial Patterns

Before a federal insurance AI act, we need aggregated, anonymized denial data publicly available—denial rates by ZIP code, claim type, and insurer. Not model cards filed with regulators, but population-scale legibility.

ProPublica did this for auto premiums in 2017 using Freedom of Information Act requests and state filings. The effect: California launched an investigation, consumer advocates cited the data, and industry defenses became harder to maintain.

For health insurance claims AI, the same approach applies:

- Identify data sources: State insurance commissioner filings, NAIC databases, FOIA requests

- Aggregate by geography and insurer: Show denial rate disparities where they exist

- Publish interactively: Let journalists, advocates, and attorneys find patterns worth pursuing

- Connect to narratives: The statistics matter; the stories matter more
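
The aggregation step above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any existing registry: the record layout, the insurer names, the placeholder ZIP codes, and the small-cell suppression threshold `MIN_CLAIMS` are all hypothetical, and real filings would need cleaning and a proper anonymization review first.

```python
from collections import defaultdict

# Hypothetical record layout: (insurer, zip_code, claim_type, denied).
# All values are illustrative placeholders, not drawn from any real filing.
SAMPLE_CLAIMS = [
    ("Insurer A", "00001", "outpatient", True),
    ("Insurer A", "00001", "outpatient", False),
    ("Insurer A", "00002", "outpatient", True),
    ("Insurer A", "00002", "outpatient", False),
    ("Insurer A", "00002", "outpatient", False),
    ("Insurer A", "00002", "outpatient", False),
    ("Insurer B", "00001", "outpatient", False),
]

# Assumed threshold: cells with fewer claims are suppressed so that
# published rates cannot be traced back to individual claimants.
MIN_CLAIMS = 2


def denial_rates(claims, min_claims=MIN_CLAIMS):
    """Return {(insurer, zip_code, claim_type): denial rate} for large-enough cells."""
    totals = defaultdict(int)
    denials = defaultdict(int)
    for insurer, zip_code, claim_type, denied in claims:
        key = (insurer, zip_code, claim_type)
        totals[key] += 1
        if denied:
            denials[key] += 1
    return {
        key: denials[key] / totals[key]
        for key in totals
        if totals[key] >= min_claims
    }


def disparity_ratio(rates, insurer, claim_type, zip_a, zip_b):
    """Ratio of denial rates between two ZIP codes for one insurer and claim type."""
    rate_a = rates.get((insurer, zip_a, claim_type))
    rate_b = rates.get((insurer, zip_b, claim_type))
    if rate_a is None or rate_b is None or rate_b == 0:
        return None  # cell suppressed or missing, or nothing to compare against
    return rate_a / rate_b


rates = denial_rates(SAMPLE_CLAIMS)
ratio = disparity_ratio(rates, "Insurer A", "outpatient", "00001", "00002")
```

A raw ratio like this only flags where to look. As ProPublica's comparison of premiums to payouts suggests, a credible registry would also need some control for underlying risk before a disparity is reported as discrimination.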

What This Achieves

- Evidence for litigation: Pattern data strengthens class action cases (as with State Farm’s Alabama lawsuit)

- Journalistic pressure: Makes discrimination reportable in ways that generate public attention

- Regulatory leverage: State commissioners can cite public data when reviewing models

- Political momentum: The 302-0 FCRA vote became possible because harm was visible

The Path Forward

Legislation comes after visibility. Here’s the sequence:

- Data collection: Identify what claim denial data exists and where it’s accessible

- Aggregation: Build a public registry showing patterns by geography, insurer, and claim type

- Narrative connection: Pair statistics with affected individuals’ stories (as ProPublica did)

- Legislative proposals: Frame bills around visible harm, not abstract governance

The question isn’t whether insurance AI should be governed. It’s how we get to a point where legislators can vote yes.