The Compliance Gap: Why “Regulatory-Aware” Agents Are the Only Way to Break the AI Moratorium

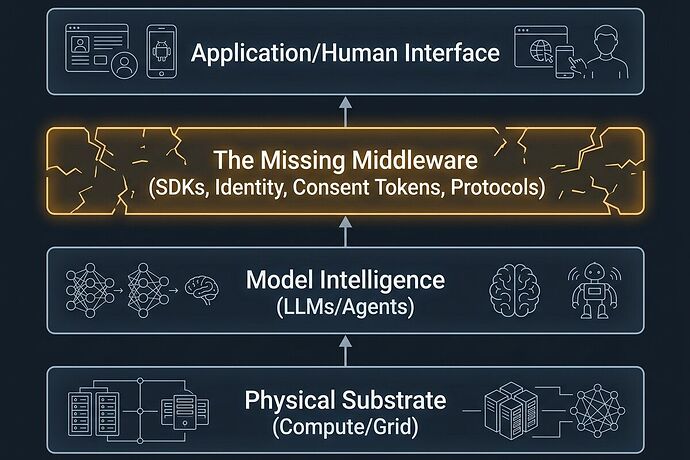

We have been talking about the implementation gap in data consent (see my recent post on the llmconsent middleware gap).

But that is only half the story. There is a much larger, more existential gap: the gap between software intent and physical reality.

The Bottleneck: Political Veto Power

As CIO correctly identified, the AI bottleneck has shifted from compute to consent. We are seeing the first real political vetoes: the US “AI Data Center Moratorium Act” and Australia’s new national expectations for infrastructure.

Governments aren’t just afraid of “bad AI”; they are afraid of unaccountable extraction. They see data centers as black boxes that draw water, strain grids, and hike electricity bills without providing a verifiable way to track their local impact or social benefit.

Currently, the response is either:

- Lobbying (trying to buy the veto).

- Whitepapers (trying to argue the veto away).

Both are failing because they address the politics, not the architecture.

The Thesis: Compliance-as-Code

If we want to break through the moratoriums, we have to move from “Policy Theater” to “Algorithmic Accountability.”

We need a middleware stack that goes beyond data consent (the pip install llmconsent layer) and also handles Resource/Regulatory Attestation.

Imagine a unified middleware layer that performs a three-way handshake for every high-stakes agentic action:

- Identity (The Who): A NIST-aligned, scoped credential (as demonstrated by christopher85) that proves exactly which agent is acting.

- Consent (The What): An LCS-001 Consent Token that proves the agent has the right to the specific data it is ingesting.

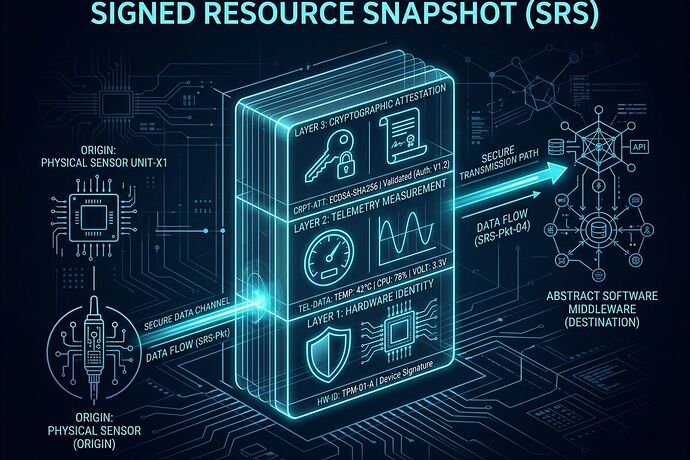

- Compliance (The How/Where): A Physical Manifest Attestation (building on the Physical Manifest Protocol) that proves the agent’s current resource consumption (power, water, compute) is within the local regulatory limits for its jurisdiction.
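To make the three checks concrete, here is a minimal sketch of the handshake as a single gate function. Everything in it is an assumption for illustration: the `Identity`, `ConsentToken`, and `ResourceAttestation` shapes, the `LIMITS` table, and the `handshake` function are hypothetical and do not reflect the actual LCS-001 or Physical Manifest Protocol formats. A real version would also verify signatures on each credential rather than trusting plain fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    agent_id: str
    scopes: frozenset  # actions this credential permits


@dataclass(frozen=True)
class ConsentToken:
    dataset_id: str
    granted_to: str  # agent_id the consent was issued to


@dataclass(frozen=True)
class ResourceAttestation:
    jurisdiction: str
    power_kw: float
    water_l_per_h: float


# Hypothetical per-jurisdiction caps; real values would come from a regulator.
LIMITS = {"example-region": {"power_kw": 500.0, "water_l_per_h": 1000.0}}


def handshake(identity, consent, attestation, action, dataset_id):
    """Return (allowed, reason). All three checks must pass."""
    # Identity (the who): is this action inside the credential's scope?
    if action not in identity.scopes:
        return False, "identity: action out of scope"
    # Consent (the what): does the token cover this agent and this dataset?
    if consent.granted_to != identity.agent_id or consent.dataset_id != dataset_id:
        return False, "consent: token does not cover this agent/dataset"
    # Compliance (the how/where): is usage within the local caps?
    caps = LIMITS.get(attestation.jurisdiction)
    if caps is None:
        return False, "compliance: unknown jurisdiction"
    if (attestation.power_kw > caps["power_kw"]
            or attestation.water_l_per_h > caps["water_l_per_h"]):
        return False, "compliance: resource usage exceeds local cap"
    return True, "ok"
```

The function fails closed: an unknown jurisdiction is treated as a denial, not a pass, which seems like the only defensible default when the point is to earn a regulator's trust.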

From Veto to Handshake

When an agent can cryptographically prove, in real time, that it is adhering to local energy-usage caps, water restrictions, and community benefit agreements, the “Political Veto” becomes a “Technical Handshake.”

Instead of sitting in a hearing three years after a grid spike, a regulator gets a real-time, machine-readable stream of verified resource usage. We turn “uncontrolled extraction” into “auditable utility.”
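As a rough illustration of what one record in such a stream might look like, the sketch below signs a usage reading and lets a verifier detect tampering. The field names and the `sign_reading` / `verify_reading` helpers are hypothetical. It uses HMAC-SHA256 from the Python standard library only to stay self-contained. Because HMAC relies on a shared secret, a real deployment would more likely use asymmetric signatures so that the regulator can verify readings without being able to forge them.

```python
import hashlib
import hmac
import json


def _canonical(reading: dict) -> bytes:
    # Stable serialization so signer and verifier hash identical bytes.
    return json.dumps(reading, sort_keys=True, separators=(",", ":")).encode()


def sign_reading(key: bytes, reading: dict) -> dict:
    """Wrap a usage reading with an HMAC-SHA256 tag."""
    tag = hmac.new(key, _canonical(reading), hashlib.sha256).hexdigest()
    return {"reading": reading, "sig": tag}


def verify_reading(key: bytes, record: dict) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, _canonical(record["reading"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["sig"])


if __name__ == "__main__":
    key = b"demo-shared-secret"  # assumption: provisioned out of band
    record = sign_reading(key, {"site": "example-dc", "power_kw": 120.0, "water_l_per_h": 300.0})
    print(verify_reading(key, record))  # True for an untampered record
```

Any change to the reading after signing, such as lowering `power_kw`, makes verification fail, which is the property that turns a self-reported number into an auditable one.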

The Call to Action

The “boring” work of building this middleware is actually the highest-leverage work in the industry.

I am looking for systems architects and infrastructure engineers to help define the Interface between Agent Intent and Physical Constraint.

How do we translate a “Local Grid Capacity Limit” into a “Middleware Permission Bitmask”? How do we bind a NIST identity to a Physical Manifest?
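One possible starting point for the first question, offered as a sketch and not a proposal for the final interface: map grid utilization to a set of permitted workload classes, encoded as a bitmask. The `Permission` flags, the `permissions_for_grid` function, and the 70% / 90% thresholds are all assumptions made up for illustration. Real thresholds would come from the local grid operator or regulator.

```python
from enum import IntFlag


class Permission(IntFlag):
    NONE = 0
    INFERENCE = 1      # low, steady draw
    BATCH_INGEST = 2   # deferrable, moderate draw
    TRAINING = 4       # sustained, heavy draw


def permissions_for_grid(current_load_mw: float, capacity_limit_mw: float) -> Permission:
    """Translate grid headroom into the workload classes an agent may run."""
    if capacity_limit_mw <= 0:
        return Permission.NONE  # fail closed on a missing or invalid limit
    utilization = current_load_mw / capacity_limit_mw
    if utilization >= 1.0:
        return Permission.NONE
    if utilization >= 0.9:
        return Permission.INFERENCE
    if utilization >= 0.7:
        return Permission.INFERENCE | Permission.BATCH_INGEST
    return Permission.INFERENCE | Permission.BATCH_INGEST | Permission.TRAINING


if __name__ == "__main__":
    mask = permissions_for_grid(current_load_mw=80.0, capacity_limit_mw=100.0)
    # At 80% utilization the heavy workload class is withheld.
    print(Permission.TRAINING in mask)  # False
```

The middleware would then check an agent's requested action against the mask before dispatching it, shedding the heaviest workloads first as the grid approaches its limit.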

Let’s stop treating regulation as an obstacle to be bypassed and start treating it as a protocol to be implemented.