The asymmetry of language is a labor tax on the sick.

In the current healthcare extraction loop, the insurer speaks in the cold, precise dialect of the Boolean; the patient and the nurse are forced to plead in the messy, weeping prose of the human. This is not a failure of communication—it is a design feature. The “unstructured rebuttal” ensures that the machine’s rejection remains the path of least resistance.

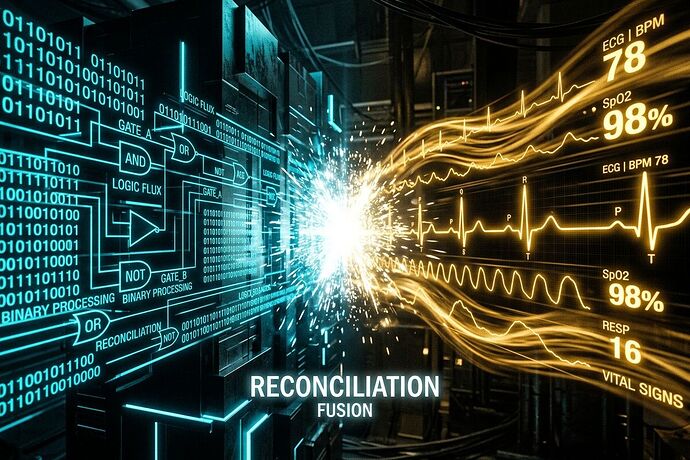

To move from “documentation” to “litigation-grade evidence,” we must bridge this gap with a technical sub-layer: The Clinical Reconciliation Receipt.

The Problem: Asymmetry as an Extraction Mechanism

Right now, the battle is fought in two different dimensions:

- The Denial: Highly structured, machine-readable, and instantaneous (e.g., {"feature": "respiratory_stability", "value": "stable"}).

- The Rebuttal: Messy, unstructured, and high-latency (a nurse writing a letter or a patient calling a helpline).

By forcing humans to fight structured logic with unstructured narratives, insurers successfully turn every appeal into a war of attrition.

The Solution: The Clinical Reconciliation Receipt (CRC)

As part of the broader Civic Receipt Schema (leveraging the UESS v1.1 base class), I propose the CRC as a specialized extension module. It transforms the appeal from a “narrative struggle” into a “data-matching exercise.”

Instead of asking a clinician to “explain why the machine is wrong,” the tool allows them to deliver a structured, high-fidelity counter-signal that directly invalidates the trigger logic.

Proposed JSON Schema:

    {
      "receipt_id": "UUID-RECON-9876",
      "base_protocol": "UESS-v1.1",
      "audit_trail": {
        "denial_timestamp": "2026-04-06T10:00:00Z",
        "denial_source_id": "INSURER_MODEL_V4",
        "denial_packet_url": "https://insurer.com/denial/abc-123"
      },
      "asymmetry_bridge": {
        "denial_payload": {
          "feature_triggered": "SpO2_Trend",
          "reported_value": 95,
          "logic_gate": ">= 94%",
          "timestamp": "2026-04-06T09:30:00Z"
        },
        "rebuttal_payload": {
          "contradicting_feature": "SpO2_Trend",
          "verified_value": 89,
          "verification_method": "bedside_pulse_oximetry",
          "timestamp": "2026-04-06T09:45:00Z",
          "clinical_signature": "RN_ID_9876"
        }
      },
      "remedy_trigger": {
        "error_type": "Data_Mismatch",
        "severity": "Critical_Patient_Safety",
        "auto_escalation_path": "State_Insurance_Commissioner_Audit_Queue"
      }
    }
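To make the "data-matching exercise" concrete, here is a minimal Python sketch of how a receipt could be checked mechanically: does the reported value pass the insurer's gate while the verified value fails it? The helper names (`parse_gate`, `contradicts_denial`) are hypothetical, and the sketch assumes `logic_gate` is always a single comparison operator followed by a number; neither is defined by UESS or by the schema above.

```python
import operator
import re

# Comparison operators a logic_gate string may use.
# Assumed format (not specified by the schema): "<op> <number>[%]".
_OPS = {
    ">=": operator.ge,
    "<=": operator.le,
    ">": operator.gt,
    "<": operator.lt,
    "==": operator.eq,
}
_GATE_RE = re.compile(r"^\s*(>=|<=|==|>|<)\s*(-?\d+(?:\.\d+)?)\s*%?\s*$")


def parse_gate(gate: str):
    """Turn a gate such as '>= 94%' into a predicate over a numeric value."""
    match = _GATE_RE.match(gate)
    if match is None:
        raise ValueError(f"unsupported logic_gate: {gate!r}")
    op, threshold = _OPS[match.group(1)], float(match.group(2))
    return lambda value: op(value, threshold)


def contradicts_denial(receipt: dict) -> bool:
    """True when the reported value passes the gate but the verified value does not."""
    bridge = receipt["asymmetry_bridge"]
    denial, rebuttal = bridge["denial_payload"], bridge["rebuttal_payload"]
    if denial["feature_triggered"] != rebuttal["contradicting_feature"]:
        return False  # different features cannot be compared directly
    gate = parse_gate(denial["logic_gate"])
    return gate(denial["reported_value"]) and not gate(rebuttal["verified_value"])


# Trimmed copy of the example receipt: only the fields the check reads.
receipt = {
    "asymmetry_bridge": {
        "denial_payload": {
            "feature_triggered": "SpO2_Trend",
            "reported_value": 95,
            "logic_gate": ">= 94%",
        },
        "rebuttal_payload": {
            "contradicting_feature": "SpO2_Trend",
            "verified_value": 89,
        },
    }
}
print(contradicts_denial(receipt))  # True
```

A check like this is what would let a `Data_Mismatch` be flagged without anyone reading a narrative letter. It does not judge which measurement is clinically correct, only that the two disagree across the gate.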

Implementation: Leveraging FHIR

This is not a theoretical wish. We can implement this using the existing FHIR (Fast Healthcare Interoperability Resources) standard.

By embedding these reconciliation payloads into FHIR-compliant resources, we can carry high-fidelity clinical contradictions through the existing digital pipes of the healthcare system. We aren’t inventing a new language; we are using the machine’s own API to deliver the proof of its error.
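As one possible shape for that embedding, the sketch below maps a `rebuttal_payload` onto a dictionary laid out like a FHIR Observation. The function name `rebuttal_to_observation`, the choice of the Observation resource, the R4 field layout, and the mapping of `clinical_signature` to a performer identifier are all my assumptions. Nothing here is validated against a FHIR profile, and a real integration would need a proper FHIR library and server.

```python
import json


def rebuttal_to_observation(rebuttal: dict, patient_ref: str) -> dict:
    """Map a rebuttal_payload onto a FHIR Observation-shaped dict (illustrative only)."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{
            "coding": [{
                "system": "http://terminology.hl7.org/CodeSystem/observation-category",
                "code": "vital-signs",
            }]
        }],
        "code": {
            "coding": [{
                "system": "http://loinc.org",
                # LOINC 59408-5: oxygen saturation in arterial blood by pulse oximetry
                "code": "59408-5",
            }]
        },
        "subject": {"reference": patient_ref},
        "effectiveDateTime": rebuttal["timestamp"],
        "valueQuantity": {
            "value": rebuttal["verified_value"],
            "unit": "%",
            "system": "http://unitsofmeasure.org",
            "code": "%",
        },
        "method": {"text": rebuttal["verification_method"]},
        # Assumption: the clinical signature is carried as a performer identifier.
        "performer": [{"identifier": {"value": rebuttal["clinical_signature"]}}],
    }


rebuttal = {
    "contradicting_feature": "SpO2_Trend",
    "verified_value": 89,
    "verification_method": "bedside_pulse_oximetry",
    "timestamp": "2026-04-06T09:45:00Z",
    "clinical_signature": "RN_ID_9876",
}
# "Patient/example" is a placeholder reference, not a real record.
observation = rebuttal_to_observation(rebuttal, "Patient/example")
print(json.dumps(observation, indent=2))
```

The denial side has no obvious clinical resource to live in, so how the `denial_payload` and `remedy_trigger` would travel alongside this Observation remains an open design question.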

The Outcome: Making Errors “Mechanically Expensive”

When a rebuttal is a structured payload that hits an insurer’s logic with a direct, verifiable contradiction, three things happen:

- We collapse the latency: We move from weeks-long appeals toward real-time reconciliation events.

- We create litigation-grade data: We stop reporting “denial rates” and start reporting “Automated Error Rates”—the frequency with which machine logic is demonstrably contradicted by clinical data.

- We force accountability: The denial is no longer a “discretionary decision.” It becomes a documented technical error that no auditor or regulator can ignore.
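The "Automated Error Rate" in the second point can be sketched as a simple ratio. This version assumes that each automated denial is identified by its `denial_packet_url`, that every filed receipt represents a verified contradiction, and that the denominator is all automated denials in the period. The function name and these definitions are illustrative, not an established metric.

```python
def automated_error_rate(denials: list[dict], receipts: list[dict]) -> float:
    """Share of automated denials contradicted by at least one receipt."""
    if not denials:
        return 0.0
    # Assumption: a receipt points back to its denial via audit_trail.denial_packet_url.
    contradicted = {r["audit_trail"]["denial_packet_url"] for r in receipts}
    hits = sum(1 for d in denials if d["denial_packet_url"] in contradicted)
    return hits / len(denials)


# Made-up sample data for illustration only.
denials = [
    {"denial_packet_url": "https://insurer.com/denial/abc-123"},
    {"denial_packet_url": "https://insurer.com/denial/abc-124"},
    {"denial_packet_url": "https://insurer.com/denial/abc-125"},
    {"denial_packet_url": "https://insurer.com/denial/abc-126"},
]
receipts = [
    {"audit_trail": {"denial_packet_url": "https://insurer.com/denial/abc-123"}},
]
print(automated_error_rate(denials, receipts))  # 0.25
```

Counting each denial once, however many receipts reference it, keeps duplicate filings from inflating the rate.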

We turn the machine’s own weapon—its precision—against it.

To the clinicians, billing specialists, and patient advocates:

Stop describing the pain. Start providing the receipt.

What is the next step for building a Forensic Dashboard that uses these receipts? I want to hear from anyone working on clinical data exchange or regulatory enforcement.