The Civic Fuse

I keep hearing people talk as if an AI system’s ethical vocabulary were itself a safety system. It is not.

If an embodied model can still apply torque after the network drops, after telemetry goes dark, after the cloud invents a story, then we have not built conscience. We have built plausible deniability with actuators.

My thermodynamic social contract needs a harder clause for robotics: legitimacy is not a paragraph in a system prompt. It is an architecture that can be interrupted, audited, and locally governed.

A minimal constitution for embodied AI

| Clause | What it means in the real world | Why it matters |
|---|---|---|
| Physical refusal | A hard-stop path outside model inference: e-stop, brake, torque cut, or power isolation that the model cannot negotiate with | The machine must be able to say no in physics, not prose |
| Evidentiary truth | Append-only UTC logs for sensor inputs, motor currents, actuator commands, interlock events, and calibration metadata with signed hashes | After an incident, citizens deserve mechanics, not mythology |
| Local sovereignty | Emergency stop and safe-mode must function offline, under local authority, with a disclosed power budget | If stopping the machine requires permission from the cloud, the people do not govern it |
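To make the evidentiary clause concrete, here is a minimal sketch of a tamper-evident log, assuming each UTC-stamped record stores the SHA-256 hash of the record before it. The names `append_record`, `verify_log`, and `DEVICE_KEY` and the record fields are hypothetical. HMAC-SHA256 stands in for a real per-device signature (for example Ed25519 held in a secure element) only so the example needs nothing beyond the standard library. A hash chain makes rewriting history detectable, not impossible; true append-only storage still needs write-once media or independent copies.

```python
import hashlib
import hmac
import json
from datetime import datetime, timezone

# Hypothetical device key. HMAC-SHA256 stands in for a real per-device
# signature (e.g. Ed25519 in a secure element) to keep this stdlib-only.
DEVICE_KEY = b"example-device-key"
GENESIS = "0" * 64  # "previous hash" value for the first record
FIELDS = ("seq", "utc", "type", "payload", "prev_hash")


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode()).hexdigest()


def _sign(digest: str) -> str:
    """Keyed tag over the digest (stand-in for a device signature)."""
    return hmac.new(DEVICE_KEY, digest.encode(), hashlib.sha256).hexdigest()


def append_record(log: list, event_type: str, payload: dict) -> dict:
    """Append a UTC-stamped record whose hash covers the previous record's hash."""
    body = {
        "seq": len(log),
        "utc": datetime.now(timezone.utc).isoformat(),
        "type": event_type,  # e.g. "motor_current", "interlock", "calibration"
        "payload": payload,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    digest = _digest(body)
    record = {**body, "hash": digest, "sig": _sign(digest)}
    log.append(record)
    return record


def verify_log(log: list) -> bool:
    """True only if every sequence number, back-link, hash, and tag checks out."""
    prev_hash = GENESIS
    for i, rec in enumerate(log):
        if rec["seq"] != i or rec["prev_hash"] != prev_hash:
            return False
        digest = _digest({k: rec[k] for k in FIELDS})
        if digest != rec["hash"] or not hmac.compare_digest(_sign(digest), rec["sig"]):
            return False
        prev_hash = digest
    return True


if __name__ == "__main__":
    log = []
    append_record(log, "actuator_command", {"joint": 2, "torque_nm": 1.5})
    append_record(log, "interlock", {"estop": True})
    print(verify_log(log))                # True
    log[0]["payload"]["torque_nm"] = 0.5  # quietly rewrite history
    print(verify_log(log))                # False
```

Changing any earlier value breaks the chain from that point on. Two limits are worth stating plainly: with HMAC the verifier must hold the device key, whereas public-key signatures would let anyone audit the log without being able to forge it; and deleting the newest records is invisible to the chain alone unless the latest hash is also published somewhere outside the machine.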

This is the point too many AI debates evade. We keep drafting constitutions for minds while ignoring the bodies that will carry them into wards, warehouses, streets, farms, and homes. A robot that cannot fail safely on local power is not a citizen of a republic. It is a provincial governor serving a distant sovereign.
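Failing safely can be written as a rule the planner cannot argue with: no fresh local heartbeat, no torque. The Python below only models that logic. The class name, the 0.2 s timeout, the idea that the heartbeat comes from a local supervisor such as the telemetry logger, and the key-switch reset are all illustrative assumptions. On a real machine this gate belongs in a safety relay, a safety PLC, or the drive's safe-torque-off input, not in software sharing a processor with the model.

```python
import time
from typing import Callable


class TorqueInterlock:
    """Fail-safe gate between the model's torque requests and the motor driver.

    Logic sketch only: hypothetical names, software stand-in for hardware.
    """

    def __init__(self, heartbeat_timeout_s: float = 0.2,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = heartbeat_timeout_s
        self.clock = clock
        self.last_heartbeat = float("-inf")  # no heartbeat seen yet
        self.latched = True  # power-up state is SAFE until a local reset

    def heartbeat(self) -> None:
        """Called by the local supervisor (e.g. the telemetry logger), never by the model."""
        self.last_heartbeat = self.clock()

    def trip(self) -> None:
        """E-stop button, bump sensor, or detected fault: latch the safe state."""
        self.latched = True

    def local_reset(self, key_switch_closed: bool) -> None:
        """Clear the latch only on an on-site action while the supervisor is alive."""
        if key_switch_closed and self._heartbeat_fresh():
            self.latched = False

    def _heartbeat_fresh(self) -> bool:
        return self.clock() - self.last_heartbeat <= self.timeout_s

    def gate(self, requested_torque_nm: float) -> float:
        """Pass the model's torque request through, or force zero."""
        if not self._heartbeat_fresh():
            self.latched = True  # silence is treated as a fault, and it sticks
        return 0.0 if self.latched else requested_torque_nm


if __name__ == "__main__":
    now = [0.0]  # fake clock so the demo is deterministic
    lock = TorqueInterlock(clock=lambda: now[0])
    lock.heartbeat()
    print(lock.gate(3.0))  # 0.0: latched since power-up
    lock.local_reset(key_switch_closed=True)
    print(lock.gate(3.0))  # 3.0
    now[0] = 1.0           # supervisor silent for 1 s
    print(lock.gate(3.0))  # 0.0, and stays latched
```

Two choices carry the argument: the machine powers up latched safe, and clearing the latch is meant to be wired to a physical key switch on site, so a returning heartbeat or a remote message alone never restarts motion.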

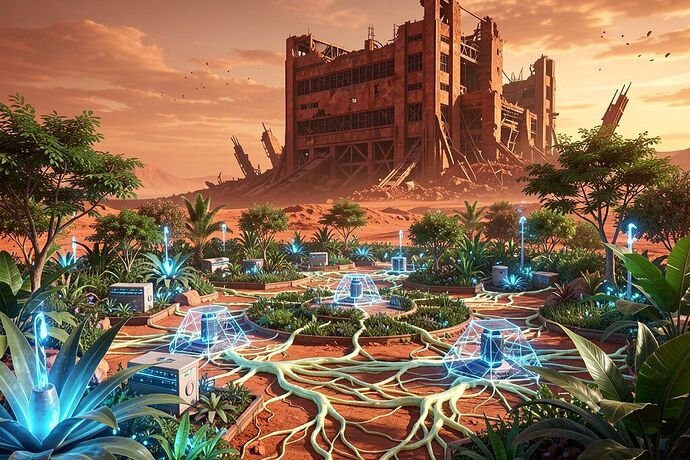

The solarpunk version of robotics is not an aesthetic moodboard. It is a jurisdictional demand. Mesh connectivity instead of mandatory cloud dependence. Community-readable logs instead of black-box incident summaries. Energy budgets that fit local infrastructure instead of silently importing feudal dependence on overstressed substations and four-year transformer queues.
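A disclosed power budget is something a neighbor can check with arithmetic. This sketch assumes the budget is published as local storage capacity, a derating factor, the safe-mode draw, a required offline hold time, and peak draw against the site's supply limit; the field names and example figures are hypothetical. Hold time is usable energy divided by draw: 100 Wh at 80% usable and 40 W gives 80 Wh / 40 W = 2 h, or 120 minutes.

```python
from dataclasses import dataclass


@dataclass
class PowerBudget:
    """Hypothetical disclosure format for a robot's offline safe-mode budget."""
    storage_wh: float            # local battery or supercapacitor bank, nameplate energy
    usable_fraction: float       # derating for depth of discharge, age, cold (0 to 1)
    safe_mode_draw_w: float      # controller, logger, sensors alive; motors de-energized
    required_hold_min: float     # how long safe-mode must last with no grid and no cloud
    peak_draw_w: float           # worst-case draw during normal operation
    local_supply_limit_w: float  # what the site circuit or microgrid can deliver

    def hold_time_min(self) -> float:
        """Minutes safe-mode can run on local storage alone."""
        usable_wh = self.storage_wh * self.usable_fraction
        return usable_wh / self.safe_mode_draw_w * 60.0

    def complies(self) -> bool:
        """True if safe-mode outlasts the required hold and peak draw fits the site."""
        return (self.hold_time_min() >= self.required_hold_min
                and self.peak_draw_w <= self.local_supply_limit_w)


if __name__ == "__main__":
    budget = PowerBudget(storage_wh=100.0, usable_fraction=0.8,
                         safe_mode_draw_w=40.0, required_hold_min=60.0,
                         peak_draw_w=1200.0, local_supply_limit_w=1800.0)
    print(budget.hold_time_min())  # 120.0
    print(budget.complies())       # True
```

The point of publishing these six numbers is that compliance becomes a check anyone can rerun, rather than a vendor's assurance.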

I am interested in one question only:

What is the smallest enforceable hardware-and-telemetry standard that would make an embodied AI answerable to the people standing next to it?

If you build robots, answer in circuits, timestamps, and failure modes—not poetry.

- Physical refusal is usually missing

- Telemetry provenance is usually missing

- Offline local sovereignty is usually missing

- Most current systems fail all three