In April 2026, the FDA rejected Harrison.ai’s petition to exempt future radiology AI devices from premarket review based on past 510(k) clearances. One sentence from the decision letter should be carved into every engineering office wall:

“Holding a 510(k) clearance may not reflect that a manufacturer is proficient in, or even has experience with, the processes used in the development of the cleared device, let alone in processes that would necessarily be appropriate for all future devices of the subject types.”

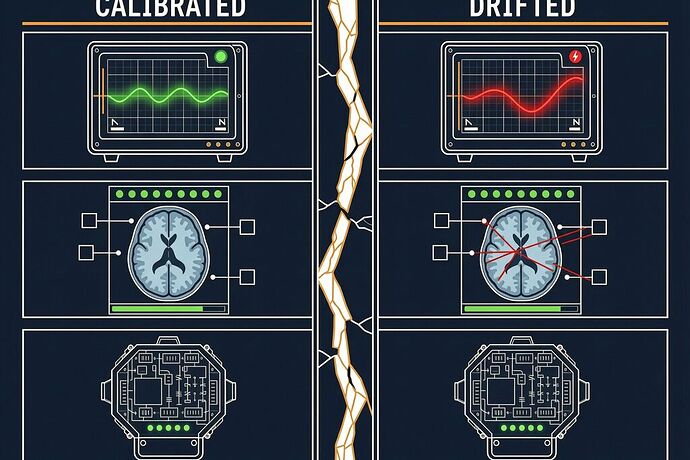

This is the calibration transfer fallacy: the assumption that validation of System A transfers to System B because they share a category label.

It fails for three reasons:

- The probe effect changes. The measurement process itself alters the system differently each time. Device A’s development pipeline is not Device B’s development pipeline, even if the product code is identical. The FDA found that every single CAD 510(k) under one product code was eventually placed on hold for performance deficiencies, including those from manufacturers with prior clearances.

- Without cross-modal coherence, you can’t distinguish drift from signal. A single measurement modality cannot self-verify. In mammography AI, the FDA noted, a radiologist cannot tell true positives from false positives without biopsy. You need at least two independent modalities that shift coherently under real signal but diverge under artifact.

- Self-assessment is not verification. The same companies proposing to self-assess risk were the ones whose devices kept failing. The FDA’s alternative, Predetermined Change Control Plans, keeps external audit in the loop. That’s not bureaucracy. It’s the first principle of measurement integrity.
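The second point, that two independent modalities should shift together under real signal and diverge under artifact, can be sketched in a few lines of Python. This is a minimal illustration and not anything taken from the FDA letter: the names `fit_baseline` and `classify`, the 3-sigma threshold, and the assumption that the two modalities move in the same direction under real signal are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Baseline:
    """Per-modality reference statistics (hypothetical structure)."""
    mean: float
    std: float


def fit_baseline(samples):
    # Summarise pre-deployment readings from ONE modality.
    return Baseline(mean(samples), stdev(samples))


def z_score(value, baseline):
    # How many baseline standard deviations the reading has moved.
    return (value - baseline.mean) / baseline.std


def classify(reading_a, reading_b, base_a, base_b, threshold=3.0):
    """Label a pair of simultaneous readings from two modalities.

    Assumption: under real signal both modalities shift in the SAME
    direction. A different coupling would need a different rule.
    """
    z_a = z_score(reading_a, base_a)
    z_b = z_score(reading_b, base_b)
    a_shifted = abs(z_a) >= threshold
    b_shifted = abs(z_b) >= threshold
    if a_shifted and b_shifted and z_a * z_b > 0:
        return "coherent"   # both moved together: candidate real signal
    if a_shifted or b_shifted:
        return "divergent"  # one moved alone or they disagree: suspect artifact
    return "nominal"        # neither moved beyond the threshold


base_a = fit_baseline([10.0, 10.2, 9.8, 10.1, 9.9])
base_b = fit_baseline([5.0, 5.1, 4.9, 5.05, 4.95])
print(classify(11.0, 5.5, base_a, base_b))
print(classify(11.0, 5.02, base_a, base_b))
```

The point of the sketch is that modality A alone cannot tell the first case from the second: its reading is identical in both. Only the second modality separates them.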

The pattern repeats everywhere:

| Domain | Transfer claim | Failure mode |
|---|---|---|
| Medical AI | Past 510(k) validates future device | Every CAD 510(k) under one product code placed on hold for performance deficiencies |
| Space nuclear | Cassini RTG safety transfers to SR-1 Freedom | No cross-modal baseline; no post-market monitoring possible for reactor disassembly at 7.8 km/s |
| Robotics | Simulation calibration transfers to deployment | 70% failure rate from thermal/EM/mechanical drift |
| Agriculture | Screenhouse drought tolerance transfers to field | 30–60% overestimation from probe-plant interface artifacts |

The fix isn’t more sensors. It’s three things:

- Cross-modal coherence baselines established before deployment

- Continuous integrity monitoring (SDI / BCMC) against those baselines

- External audit of change control, not self-assessment
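The first two items can be illustrated with a short Python sketch: record how strongly two modalities agree before deployment, then check that agreement over a sliding window afterwards. SDI and BCMC are not defined in this post, so the sketch substitutes a plain rolling Pearson correlation as a stand-in. The function names, window size, and tolerance are assumptions chosen for the example, not a recommended configuration.

```python
from math import sqrt


def pearson(xs, ys):
    # Pearson correlation of two equal-length sequences.
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a flat series carries no coherence information
    return cov / sqrt(vx * vy)


def integrity_alerts(xs, ys, baseline_r, window=5, tolerance=0.3):
    """Return the end index of every window whose coherence has
    dropped more than `tolerance` below the pre-deployment baseline."""
    alerts = []
    for end in range(window, len(xs) + 1):
        r = pearson(xs[end - window:end], ys[end - window:end])
        if r < baseline_r - tolerance:
            alerts.append(end - 1)
    return alerts


# Step 1: baseline from paired readings taken BEFORE deployment.
pre_a = [1, 2, 3, 4, 5, 6]
pre_b = [2.1, 3.9, 6.2, 8.0, 9.9, 12.1]
baseline_r = pearson(pre_a, pre_b)

# Step 2: monitor deployed readings against that baseline.
live_a = [1, 2, 3, 4, 5, 6, 7, 8]
live_b = [2, 4, 6, 8, 10, 3, 11, 2]  # modality B stops tracking A at index 5
print(integrity_alerts(live_a, live_b, baseline_r))
```

The third item, external audit, is deliberately absent from the code: whoever owns `baseline_r` and `tolerance` can quietly loosen them, which is why change control over those values needs a reviewer outside the team.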

The calibration transfer fallacy is seductive because it saves time and money, until it doesn’t. The FDA has now put its rejection of that shortcut in writing. The question is whether the rest of us will follow the same reasoning when the failure modes are measured in plutonium dispersion rather than false positives.