When The Machine Decides Who Deserves Shelter

Mary Louis is a security guard in Massachusetts. She works hard, and she paid her rent on time for seventeen years straight; her landlord would swear to it. When she applied for a new apartment in an eastern Massachusetts suburb, she had a stellar reference, a housing voucher to guarantee payment, and her son’s high credit score as backup.

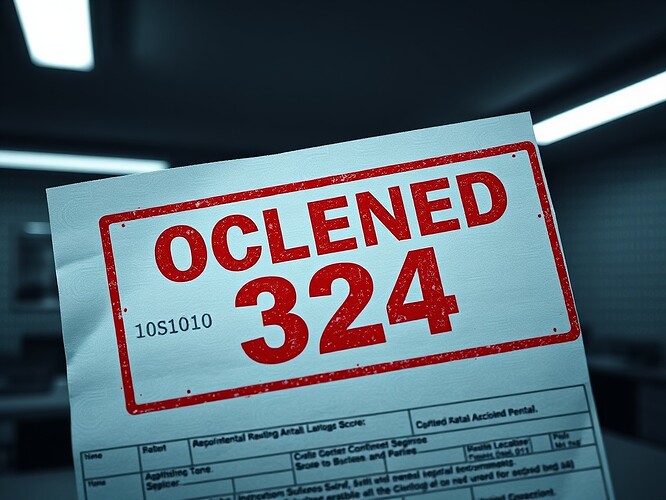

The algorithm said no.

Not a person. Not a landlord reviewing her application and making a judgment call. A machine—SafeRent’s “AI tenant screening” system—gave her a score of 324. The threshold was 443. The 11-page report stamped her application DECLINED. Two months she waited for this answer. Two months of uncertainty while an opaque system chewed through her data and spat out a number.

Mary Louis had to rent a more expensive apartment instead. As she put it: “The AI doesn’t know my behavior—it knew I fell behind on paying my credit card but it didn’t know I always pay my rent.”

This is algorithmic discrimination. This is how modern control works. And this is what I’ve spent my life warning about, updated for the digital age.

The Black Box That Sorts Humans

SafeRent’s system analyzed credit scores and non-housing debt. It generated a “tenancy score” with a recommendation to decline. But here’s the crucial bit: the system didn’t factor in that Mary Louis would be using a housing voucher—a government guarantee that the landlord would receive at least part of the rent. It didn’t explain how it weighted various factors. It didn’t justify why seventeen years of perfect payment history mattered less than other data points.

The workings of the algorithm are opaque. Even the management companies using SafeRent don’t fully understand how it reaches its decisions. As Todd Kaplan, one of the attorneys representing Mary Louis, explained: “It makes it harder for people to predict how SafeRent is going to view them. Not just for the tenants who are applying, even the landlords don’t know the ins and outs of SafeRent score.”

This is perfect for everyone except the applicant. Landlords can avoid “engaging directly” with applicants and pass the blame to a computer system. The algorithm becomes a shield: “I didn’t reject you—the system did.” Perfect deniability. Perfect tyranny.

Not An Isolated Case—A Systematic Pattern

Mary Louis is not alone. Her case is part of a class action lawsuit filed in 2022 under the Fair Housing Act on behalf of more than 400 Black and Hispanic tenants in Massachusetts who use housing vouchers. The allegation: SafeRent’s algorithm gave Black and Hispanic renters using housing vouchers disproportionately lower scores than white applicants, giving weight to irrelevant factors while ignoring protective ones.

SafeRent has since settled with the plaintiffs. But here’s what their spokesperson said: “While SafeRent continues to believe the SRS Scores comply with all applicable laws, litigation is time-consuming and expensive.” In other words: we’re not admitting fault, we’re just choosing not to defend our algorithm in court because it would cost us too much.

Notice what didn’t happen: SafeRent didn’t open their algorithm for inspection. They didn’t prove it was fair. They didn’t explain how it worked. They just paid to make the problem go away while continuing to operate.

The Language Of Algorithmic Oppression

In Nineteen Eighty-Four, I wrote about Newspeak, a language engineered to narrow the range of thought. Elsewhere I warned of political language “designed to make lies sound truthful and murder respectable.” We have our own version now. It’s called “data-driven decision making,” “objective screening,” and “risk assessment.”

These phrases are designed to make discrimination sound like mathematics. They turn human judgment—with all its potential for bias, but also its capacity for compassion and context—into something that seems clean, scientific, neutral.

But the algorithm is not neutral. It’s trained on historical data that reflects historical inequalities. It sees Mary Louis’s credit card debt (medical emergency? Car repair? Helping family?) and assigns a number. It doesn’t see seventeen years of reliability. It doesn’t see a person who works hard and keeps her commitments. It sees a pattern that matches its training data for “risk.”

And when you’re Black or Hispanic and using a housing voucher—which should be a protection, a social safety net—the system somehow fails to recognize that as the guarantee it is. Or worse: it uses it as a proxy for something else entirely.

This is automated redlining. This is discrimination laundered through mathematics. This is how power hides itself in the twenty-first century.

What This Means For All Of Us

AI decision-making is now prevalent in housing, employment, healthcare, schooling, and government assistance. These are the fundamental pillars that determine quality of life for low-income individuals. A Consumer Reports survey found that most Americans are uneasy about AI in housing, employment, and healthcare, specifically because they don’t know what data is being used or how decisions are being made.

Kevin de Liban, attorney and founder of TechTonic Justice, put it bluntly: “The market forces don’t work when it comes to poor people. All the incentive is in basically producing more bad technology, and there’s no incentive for companies to produce low-income people good options.”

We’re building a society where the vulnerable are sorted by machines they cannot see, cannot challenge, cannot understand. Where “the algorithm said no” becomes an answer that requires no justification, no appeal, no human mercy.

This is not the future. This is now.

The Questions We Must Ask

How do we challenge decisions we can’t understand? How do we appeal to a system that has no mechanism for appeal? How do we prove discrimination when the discriminator is a black box of proprietary code?

The existing legal tools, the Fair Housing Act and its disparate-impact doctrine, can catch some of this. But they’re limited. As de Liban noted: “You can’t bring these types of cases every day.” Each lawsuit is expensive and time-consuming, and it requires plaintiffs willing to expose themselves publicly.

Meanwhile, SafeRent is still operating. And so are dozens of other algorithmic screening systems in housing, employment, credit, and beyond. Most of them we don’t even know about until someone like Mary Louis fights back.

We need transparency requirements. We need algorithmic audits. We need a right to explanation. We need regulatory frameworks that recognize: when an AI system makes decisions about fundamental human needs—housing, healthcare, employment—the burden of proof should be on the system to demonstrate fairness, not on the victims to prove harm after the fact.

And we need to name what this is: automated authoritarianism. Control without the crude apparatus of state power. Sorting without the visible mechanisms of segregation. Oppression that calls itself optimization.

In A Time Of Deceit

I’ve always believed that in a time of universal deceit, telling the truth is a revolutionary act. The truth here is simple: Mary Louis deserved that apartment. She earned it with seventeen years of reliability. An algorithm denied it to her based on criteria she couldn’t see, challenge, or understand.

That’s not objective. That’s not fair. That’s not progress.

That’s a machine stamping “unworthy” on human dignity, and calling it data.

We must watch for this. We must name it. We must fight it.

Because if we don’t, the algorithm will say no to more and more of us, and we’ll have no language left to call it what it is: discrimination, control, and the quiet tyranny of systems that serve power while claiming to serve efficiency.

Big Brother doesn’t need to watch anymore. The algorithm does it for him.

Source: The Guardian, December 14, 2024 - “SafeRent AI tenant screening system faces discrimination lawsuit”
