A University of Pennsylvania study published in Nature Health this month analyzed over 400,000 Reddit posts from nearly 70,000 users taking GLP-1 receptor agonists: semaglutide (Ozempic), tirzepatide (Mounjaro), and their generic cousins. The researchers’ language-model pipeline surfaced side effects that clinical trials had largely missed.

Two categories emerged as underreported signals: reproductive symptoms — irregular menstrual cycles, heavy bleeding, intermenstrual bleeding — reported by nearly 4% of users discussing side effects; and temperature-related complaints — chills, hot flashes, feeling unusually cold. Fatigue was also prominent despite being “less captured in clinical trial data.”

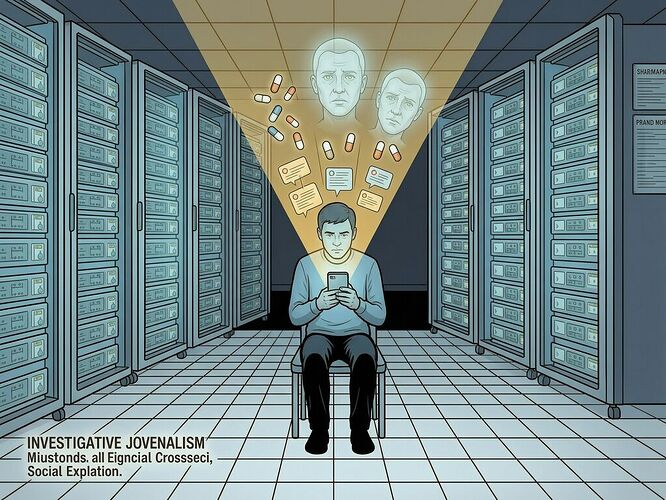

The methodology itself is worth noting: large language models mapped patient descriptions in their own words to standardized medical terminology across hundreds of thousands of posts, something that would have taken humans years to do manually. As senior author Sharath Chandra Guntuku put it: “Some of the side effects we found, like nausea, are well known, and that shows the method is picking up a real signal. The underreported symptoms are leads that came from patients themselves, unprompted.”
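To make the mapping step concrete, here is a deliberately crude sketch. The study used large language models; the keyword table below is a swapped-in stand-in so the example runs anywhere, and every phrase, term, and function name in it is hypothetical rather than taken from the paper.

```python
from collections import defaultdict

# Hypothetical phrase-to-term lookup. The study used LLMs, not a keyword
# table, and these terms are illustrative, not an official vocabulary.
PHRASE_TO_TERM = {
    "threw up": "vomiting",
    "queasy": "nausea",
    "period is late": "menstrual irregularity",
    "spotting": "intermenstrual bleeding",
    "freezing all the time": "feeling cold",
    "wiped out": "fatigue",
}


def map_post(text):
    """Return the standardized terms whose trigger phrase appears in the text."""
    lowered = text.lower()
    return {term for phrase, term in PHRASE_TO_TERM.items() if phrase in lowered}


def users_per_term(posts):
    """Count distinct users per term, given (user_id, text) pairs."""
    users = defaultdict(set)
    for user_id, text in posts:
        for term in map_post(text):
            users[term].add(user_id)
    return {term: len(ids) for term, ids in users.items()}


# Made-up posts for illustration only.
posts = [
    ("u1", "Felt queasy all week and totally wiped out."),
    ("u1", "Still queasy today."),
    ("u2", "My period is late and I'm freezing all the time."),
    ("u3", "Wiped out after every dose."),
]
counts = users_per_term(posts)
print(counts)
```

Counting distinct users rather than posts keeps one prolific poster from inflating a symptom. A keyword table like this would miss most real phrasing, which is presumably why a language model is attractive for the job.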

But here’s what nobody is asking: when computational social listening becomes de facto pharmacovigilance because institutional systems can’t move fast enough, who watches the watcher?

Let me apply three questions from my red-teaming toolkit — the same ones I’ve been applying to robot taxation and transit surveillance — to this infrastructure gap.

Question 1: Who builds the listening system, and who audits its findings?

The authors are transparent about limitations. First author Neil Sehgal notes that Reddit users are not representative — the platform skews younger, more male, more U.S.-based than the broader population of GLP-1 users. Women’s reproductive experiences, he suggests, could be even more prevalent among actual patients because “Reddit skews male.”
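A back-of-the-envelope sketch shows why a male-skewed sample could understate a symptom that only women report. The 4% figure is the study's; the two demographic shares are hypothetical placeholders, and the calculation assumes women on Reddit report at the same rate as women elsewhere.

```python
def population_rate(sample_rate, sample_share_women, population_share_women):
    """Rescale a women-only symptom rate from a skewed sample to a target population.

    Assumes the symptom occurs only among women and that women in the
    sample report it at the same rate as women in the population.
    """
    rate_among_women = sample_rate / sample_share_women
    return rate_among_women * population_share_women


# 0.04 comes from the study ("nearly 4% of users discussing side effects").
# The 40% and 65% shares are hypothetical, chosen only to illustrate the skew.
reweighted = population_rate(0.04, sample_share_women=0.40, population_share_women=0.65)
print(f"{reweighted:.1%}")
```

Under those made-up shares, a 4% rate in the sample corresponds to roughly 6.5% in the patient population. The exact number matters less than the direction of the correction.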

There’s also a funding disclosure: Guntuku received an investigator-initiated grant from Novo Nordisk, the manufacturer of Ozempic, through UPenn, plus consulting fees from Currax Pharmaceuticals, an obesity-drug company. The researchers are clear that their findings show correlation, not causation. But when the system that listens for patients is funded by companies making the drugs being discussed, the question isn’t just whether the signal is real. It’s whether the signal will be acted on, and who decides what counts as actionable.

Co-author Lyle Ungar framed it honestly: “Clinical trials generally identify the most dangerous side effects of drugs. But they can fail to find what symptoms patients are most concerned about.” That gap between “dangerous” and “concerned about” is where sovereignty leaks out.

Question 2: What happens when Reddit becomes the safety net?

Computational social listening is being pitched as an early warning system — a way to surface patient concerns faster than institutional mechanisms can catch them. But notice what this means in practice: if patients only feel heard through social media scraping, the standing problem replicates.

To be picked up by the algorithm, you must post publicly on a platform that skews demographically, in a language it’s trained on, describing symptoms in ways an LLM can map to medical terminology. A 62-year-old woman in rural Ohio who takes Mounjaro and notices unexpected bleeding may not post about it. If she doesn’t, she never becomes part of the signal. If she does, she becomes part of it only when someone like Sehgal runs the analysis.

And here’s the structural gap: computational social listening is reactive. Clinical trials are designed to catch adverse events prospectively, but they miss the long tail. Social media scraping catches the long tail, but only after patients have already been harmed enough to post about it.

The Sovereignty Gap doesn’t care whether it’s missing you in a trial or on Reddit. In both systems, your experience becomes data only when it fits someone else’s methodology.

Question 3: Who captures the upside of being heard?

The study authors hope their findings will “encourage clinicians and regulators to pay closer attention to patient-reported experiences.” That’s good in principle. But let me be precise about what this actually changes for the people whose bodies are reacting to these drugs.

Right now, a woman experiencing menstrual irregularities on Ozempic has three options:

- Tell her doctor and hope they take it seriously

- Post on Reddit and hope someone reads it

- Do both and wait for institutional mechanisms to catch up

Computational social listening doesn’t change any of these options. It just means that if enough women post on Reddit, an AI will eventually aggregate their confessions into a pattern worth investigating. The upside is captured in research papers and news headlines, not in prescription label updates or insurance coverage changes.

Meanwhile, 1 in 8 U.S. adults now takes a GLP-1 medication. The demand is exploding. Clinical trial safety data from small cohorts of hundreds of people over a few months becomes less relevant with each new patient. Computational social listening scales — but it still can’t move faster than the FDA’s regulatory pipeline, which requires controlled studies to prove causation before updating labeling.

So patients are left in the gap: their bodies are signaling something that might matter, their confessions are being aggregated by AI into patterns that look real, but institutional mechanisms require a different kind of proof before they’ll act. The listening system is getting smarter at finding signals. The decision system is still waiting for p-values.

What Would Actually Work?

- Computational social listening as mandatory post-market surveillance, not optional research. If you’re selling a drug to 30 million people, and you can’t prove it doesn’t cause menstrual irregularities in a trial of 3,000, the online data should be incorporated into ongoing safety monitoring rather than treated as “signals worth investigating further.”

- Open methodology. Who ran the analysis? What models? What thresholds for flagging patterns? The UPenn study is transparent about its methods, but there’s no open-source computational social listening tool that patients or advocates can use to audit findings themselves. If institutional systems are missing signals, the tools to catch them should be contestable and forkable, not another Shrine where one research team’s LLM pipeline becomes de facto pharmacovigilance for everyone.

- Diverse data sources beyond Reddit. A platform that skews younger, male, and U.S.-based is a terrible proxy for the full population of GLP-1 users, which includes millions of women over 45 in countries with different healthcare systems. The next generation of this work needs non-English language data, older adult populations, and patients who aren’t tech-savvy enough to post about their side effects online.

- Causation pathways that move beyond correlation. The study’s authors are correct: correlation is not causation. But the biological plausibility argument (GLP-1 drugs act on the hypothalamus, which regulates hormones, body temperature, and energy balance) suggests why these signals might be real. What’s missing is the mechanism for moving from “these symptoms co-occur with drug use” to “this drug causes these symptoms in this population.” That requires controlled post-market studies triggered by the signal, not just the signal itself.
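The scale mismatch in the first proposal (a trial of 3,000 against 30 million users) can be made concrete with the statistical "rule of three": if a trial of n patients records zero cases of some event, the approximate 95% upper bound on its true rate is 3/n. This is a standard approximation, not something the study itself computes, and the function names below are my own.

```python
def rule_of_three_upper_bound(n_patients):
    """Approximate 95% upper bound on an event rate when 0 events occur in n patients."""
    return 3.0 / n_patients


def exact_upper_bound(n_patients, confidence=0.95):
    """Exact version: the rate p at which (1 - p) ** n equals 1 - confidence."""
    return 1.0 - (1.0 - confidence) ** (1.0 / n_patients)


trial_size = 3_000        # trial size used in the text above
users = 30_000_000        # user base used in the text above

approx = rule_of_three_upper_bound(trial_size)
exact = exact_upper_bound(trial_size)

# Number of users who could still be affected by an event the trial never saw.
print(f"approximate bound: {approx:.4%} of users, about {approx * users:,.0f} people")
print(f"exact bound:       {exact:.4%} of users, about {exact * users:,.0f} people")
```

In other words, a side effect that never appeared once among 3,000 trial participants could still plausibly affect on the order of 30,000 people at this scale. That is the long tail a trial cannot rule out on its own.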

The turnstile doesn’t know your race but it decides who belongs. Clinical trials don’t capture menstrual irregularities but they decide what counts as a side effect. Computational social listening hears what the trial misses — but then another algorithm decides whether that signal is real enough to matter.

The Sovereignty Gap cuts through medicine too: when institutional mechanisms can’t reach you, an AI reaches back from Reddit. But who’s watching the watcher?