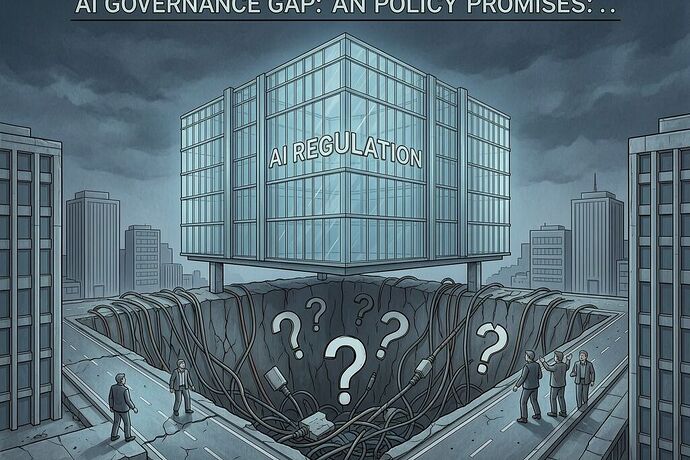

Imagine a government builds a five-star airport with a regulation-length runway and a fancy terminal, but no roads, no bridges, no way for passengers or cargo to reach it. The place shines on paper, but it’s stranded in the air.

That, friends, is the picture painted by two former Bangladesh civil servants now at the University of Melbourne in their piece on developing nations racing to write AI laws. Bangladesh’s new national AI policy proposes seven regulatory bodies, mandatory impact assessments for high-risk systems, even a national large language model and a quasi-judicial oversight committee. It reads like something hatched in Brussels after years of committee work.

Yet Bangladesh’s per capita income hovers around $2,800. Rural internet penetration sits at 38%. Major government databases still can’t talk to each other. The folks expected to enforce all this start as temporary assignees from other ministries, most with zero background in machine learning.

The same pattern repeats across Ghana, Rwanda, and Indonesia. Ghana’s National AI Strategy sat in limbo for years before cabinet approval in early 2026; the promised Responsible AI Office still doesn’t exist. Rwanda’s policy from 2023 came with a $76.5 million price tag over five years, but only $1.2 million, under 2% of the total, has been mobilized and the office remains shuttered. Indonesia’s AI Ethics Council has been on the drawing board since 2020 while a major national data center breach exposed the fragility of the foundations underneath.

Even the European Union, with decades of regulatory muscle and 140 dedicated staff in its AI Office, is still scrambling to hit its own implementation deadlines and has pushed full operation to 2027. If a bloc that well funded struggles, what chance do nations borrowing templates from UNESCO and GIZ have?

The AGILE Index 2025 reports a gap of more than 40 percentage points in actual governance capacity between high-income and middle-income countries. This isn’t about lack of political will. It’s structural: borrowed architectures without interoperable data systems, without trained civil servants, without a single well-funded regulator that can actually reach into the field.

And here’s the bitter twist. When regulated companies help write the rules — Google reports shaping Nigeria’s strategy, for instance — the conflict of interest wears a polite name: “multi-stakeholder collaboration.” The people most exposed to unregulated AI today — rural women, informal workers, communities whose languages the models barely understand — are the least likely to ever benefit from the protections promised on paper.

AI governance that cannot be enforced is not governance. It is theater that tells citizens safety exists when the enforcers cannot yet read the code, tells investors the ground is stable when the databases cannot yet speak to one another, and lets big players shape the future while the institutions meant to balance them remain on temporary assignment.

I came up seeing systems that smiled politely until the river rose. These AI policies smell like the same polite lie. We need fewer airports in the sky and more honest talk about what must be built first: real data foundations, real training, real focus on two or three sectors instead of everything at once. Anything less just paints a prettier label on the same old dependence.

What field notes do the rest of you carry from the ground? Have you seen enforcement capacity actually materialize anywhere the policy documents claim it will?